We give a logical characterization of the polynomial-time properties of graphs with excluded minors: For every class C of graphs such that some graph H is not a minor of any graph in C, a property P of graphs in C is decidable in polynomial time if and only if it is definable in fixed-point logic with counting. Furthermore, we prove that for every class C of graphs with excluded minors there is a k such that a simple combinatorial algorithm, namely “the k-dimensional WeisfeilerLehman algorithm,” decides isomorphism of graphs in C in polynomial time.

1. Introduction

Problems that are hard to describe are hard to solve and vice versa. This claim may seem naive or just wrong at first sight, but it is the underlying idea of a deep and fruitful connection between the descriptive complexity and the computational complexity of computational problems. Here descriptive complexity refers to the “language resources” required to express a problem in a formal language, or logic, whereas computational complexity refers to the computational resources needed to solve the problem. The connection goes back to the beginnings of computability theory. For example, the recursively enumerable (or semidecidable) sets of natural numbers are precisely the sets definable by an existential formula of first-order logic in the language of arithmetic.15 Such a formula consists of a prefix of existential quantifiers followed by a Boolean combination of equations between polynomials in several variables.

In today’s computation theory, we are less interested in the classes of computable and recursively enumerable sets than in much smaller complexity classes such as the class PTIME of all polynomial-time solvable problems or the class NP. Furthermore, we rarely model computational problems as sets of natural numbers. Instead, our basic models are finite graphs and other finite structures such as labeled transition systems, Boolean circuits, and relational databases. (For simplicity, we mostly consider graphs in this article.) The starting point of modern descriptive complexity theory is Fagin’s Theorem,3 stating that a property of finite graphs (or other structures) is expressible in existential second-order logic if and only if it is decidable in nondeterministic polynomial time. More concisely, we say that existential second-order logic captures NP. Similar logical characterizations have been obtained for other complexity classes such as the polynomial-time hierarchy PH and PSPACE. However, no logic is known to capture the class PTIME or any natural complexity class below PTIME. Hence we lack a logical characterization of the problems we are most interested in—those that can be solved efficiently!

The question of whether there is a logic that captures PTIME has first been raised by Chandra and Harel2 in 1982 in a fundamental paper on database query languages. Chandra and Harel asked for a query language for relational databases expressing precisely those queries that can be evaluated in polynomial time. Clearly, it would be very nice to have such a language: It would allow the user to ask only queries that can be answered efficiently, while at the same time guaranteeing that all such queries can be asked. Gurevich7 rephrased the question in terms of logic. His precise definition of a “logic capturing PTIME” is subtle; we will discuss it below. Gurevich7 conjectured that there is no logic capturing PTIME. Observe that this conjecture implies PTIME ≠ NP, because by Fagin’s Theorem there is a logic that captures NP. The question of whether there is a logic capturing PTIME is still open today, and it is viewed as one of the main open problems in finite model theory and database theory. Only partial positive answers are known, most notably the ImmermanVardi Theorem10, 22 stating that least fixed-point logic captures PTIME on ordered structures (see Section 1.2).

1.1. Logics capturing polynomial time

1.1. Logics capturing polynomial time

So what exactly constitutes a logic that captures PTIME? A first requirement (L1) is that a logic L should have a decidable syntax, that is, a computable set of sentences. To define the semantics, we associate a property Pφ of finite graphs (or other structures) with each sentence φ of L. We say that φ defines Pφ and that Pφ is definable in L. For L to capture PTIME, we require (L2) each graph property decidable in PTIME to be definable in L and, conversely, each property definable in L to be decidable in PTIME. But this is not enough; we are still missing an effective link between sentences and the properties they define. Hence we require (L3) the existence of an algorithm that associates with each sentence φ of L an “evaluation algorithm” Aφ deciding the property Pφ in polynomial time. This completes the definition: we say that a logic captures PTIME if it satisfies (L1)(L3).

EXAMPLE 1. Consider first-order predicate logic FO. For instance, the FO-sentence

x

x

y E(x, y) in the language of graphs defines the graph property of having no isolated vertices. (“Each vertex has a neighbor.”) It can be shown that

y E(x, y) in the language of graphs defines the graph property of having no isolated vertices. (“Each vertex has a neighbor.”) It can be shown that FO satisfies criteria (L1) and (L3), but not (L2). Hence it is not a logic capturing PTIME.

So why are we interested in a logic capturing PTIME? After all, logic is just another formalism that can be used to describe computation, like Turing machines, the lambda calculus, various grammars and rewriting systems, or “real” programming languages. But there is an important difference between logics capturing complexity classes and the other formalisms: Logics speak directly about graphs and structures, whereas most other formalisms operate on encodings of structures by strings or terms. Hence a logical characterization of a complexity class is representation-independent. This may seem like a minor technical issue, but actually is quite significant, mainly because we have no natural canonical representations of structures by strings or terms, but have to make arbitrary choices when we construct such representations.

EXAMPLE 2. A standard way of representing graphs is by their adjacency matrices; once we have an adjacency matrix we can obtain a {0, 1}-string encoding the graph by concatenating the rows of the matrix.

Consider the graph displayed in Figure 1(a). Figure 1(b) shows three different adjacency matrices of this graph, leading to three different string encodings shown in Figure 1(c). Indeed, the graph has 4! = 24 adjacency matrices, one for each linear order of the vertices (the three matrices in Figure 1 correspond to the orders abcd, acbd, cabd). Not all 24 matrices are different, but there is no distinguished one that we may declare to be the “right” representation.

We are used to thinking of graphs as abstract models and of properties of graphs as only depending on the graphs and not on their specific representations. Examples of graph properties are “connectedness,” “acyclicity,” or “Hamiltonicity” (i.e., having a cycle that traverses each vertex exactly once). We would not view a statement like “the first vertex has degree 3” as describing a graph property, because a graph has no distinguished “first” vertex.

This level of abstraction is an integral part of our models. For example, if we use a graph model of a network then we abstract from the physical location of the nodes on purpose (otherwise we should use a different model). Similarly, it is a feature and not a bug of the relational database model to separate the logical from the physical level of a database.

There is a mismatch between these abstract models and the typical algorithms working on them. A graph algorithm does not get an abstract graph as input, but a specific representation of this graph, typically an adjacency matrix or adjacency lists. Nevertheless, its result is expected to be independent of the specific representation. But how can we guarantee that a particular graph algorithm is representation-independent? Unfortunately, it is not decidable whether an algorithm has this property. As a matter of fact, many of the standard graph algorithms we know internally depend on the specific representation of the input and only happen to be representation-independent in their output. A typical example is a connectivity algorithm based on depth-first search. At any vertex of the graph, a depth-first search chooses the “first” neighbor of the vertex to continue the search. But there is no canonical first neighbor; it depends on the specific representation of the input graph which neighbor comes first.

We may ask if there is a programming language that enforces representation independence, in the sense that all syntactically correct programs are representation-independent, and at the same time allows us to implement algorithms for all decidable properties of graphs. It is not too hard to come up with such a language, albeit not a very natural one. But the price to pay appears to be a huge loss in efficiency. The question of whether there is a logic capturing PTIME can be recast as asking for a programming language that enforces representation independence and at the same time allows us to implement polynomial-time algorithms for all properties of graphs that can be decided in polynomial time by conventional algorithms working on specific representations of graphs. (By polynomial-time algorithms in our new language we mean programs that can be executed in polynomial time on a conventional machine.)

1.2. Capturing polynomial time on graphs with excluded minors

1.2. Capturing polynomial time on graphs with excluded minors

As mentioned earlier, the question of whether there is a logic capturing PTIME is still open, but there are partial positive results for specific classes of structures. The main theorem of this article is such a result. Informally, a logic L captures PTIME on a class C of structures if precisely the polynomial-time properties of structures in C are definable in L. (We refrain from giving the formal definition, which is a straightforward adaptation of the general definition of a logic capturing PTIME.)

A structure consists of a universe, which is simply a finite set, and a finite collection of relations, functions, and constants on this universe. (In general, structures may have infinite universes and infinitely many relations, functions, and constants, but in descriptive complexity we restrict our attention to finite structures.) An ordered structure is a structure that has one distinguished binary relation < which is a linear order of the universe. For example, a graph G = (V, E) is a structure with universe V and one binary “edge” relation E. An ordered graph is a triple (V, E, <), where (V, E) is a graph and < is a linear order of V. Ordered structures play a special role in descriptive complexity theory, because they admit a canonical string representation. Recall Example 2. We saw there that for each linear order of the vertices of a graph there is one adjacency matrix, and from this adjacency matrix we can obtain a {0, 1}-string representing the graph. Hence for an ordered graph G = (V, E, <) we have a canonical string representation: We represent G by the string derived from the adjacency matrix of (V, E) corresponding to the linear order < of V. This can be generalized from ordered graphs to arbitrary ordered structures. Thus the issue of representation independence disappears when we deal with ordered structures.

In 1982, Immerman10 and Vardi,22 independently, proved that least fixed-point logic FP captures polynomial time on the class of all ordered structures. FP is an extension of first-order predicate logic by a fixed-point operator that allows it to formalize inductive definitions. We will not see many logical formulas in this article and treat logics on a high level that does not require any detailed knowledge of their syntax and semantics. However, since FP will play a central role, it may be worthwhile to briefly look at its main mechanism:

EXAMPLE 3. Suppose we want to define the transitive closure T of the edge relation of a graph G = (V, E). It is well known that this is not possible in first-order logic. But the transitive closure admits the following inductive definition: We let T1:= E, and for all i we let Ti + 1 be the set of all pairs (u, v) such that there is a vertex w with (v, w)

Ti and (w, u)

Ti and (w, u)

Ti. Then T is the union of all the Ti. Equivalently, we may define T as the least fixed point of the (monotone) operator

Ti. Then T is the union of all the Ti. Equivalently, we may define T as the least fixed point of the (monotone) operator

Here

and

and

denote disjunction and conjunction, respectively. In

denote disjunction and conjunction, respectively. In FP, we can form a formula

to define the transitive closure. If we call this formula tc(v, w), then the FP–sentence conn:=

v

v

w(v = w

w(v = w

tc(v,w)) defines the property of an (undirected) graph being connected.

It can be shown by standard techniques that FP does not capture polynomial time on all structures, the most blatant reason being that the logic lacks the ability to count. For example, the graph property of having an even number of vertices is not definable in FP. In the late 1980s, Immerman11 conjectured that fixed-point logic with counting FP+C, an extension of least-fixed point logic by a mechanism that allows it to define the cardinalities of definable sets, might capture polynomial time. In addition to the vertices of a graph, in FP+C we can also speak about integers in the range from 0 to the size of the graph. Standard arithmetic operations on these integers are available in the logic. The main purpose of the integers is to express cardinalities.

EXAMPLE 4. Recall that a Eulerian cycle in a graph is a closed walk on which every edge occurs exactly once. A graph is Eulerian if it has a Eulerian cycle. It is a well-known fact (going back to Euler) that a graph is Eulerian if and only if it is connected and every vertex has even degree.

The term #x’ E(x, x’) counts the number neighbors x’ of a vertex x in a graph. Hence the formula

where x, x’ are variables ranging over the vertices of a graph and y is a variable ranging over the integers, says that vertex x has even degree. Finally, the FP+C-sentence

where conn is the FP–sentence defined in Example 3, says that a graph is Eulerian.

Although in 1992 Cai et al.1 refuted Immerman’s conjecture that FP+C captures polynomial time, it has turned out since then that FP+C does capture polynomial time on many interesting classes of structures, among them trees,12 graphs of bounded tree width,6 planar graphs,5 and interval graphs.13 Hella et al.8 proved that FP+C captures polynomial time on almost all structures (in a precise technical sense).

Our main theorem generalizes many of these results. To state it, we need to define graph minors. A graph H is a minor of a graph G if H is isomorphic to a graph obtained from a subgraph of G by contracting edges (see Figure 2 for an example). We say that H is an excluded minor for a class C of graphs if H is not a minor of any graph in C.

THEOREM 1. Let C be a class of graphs with at least one excluded minor. Then fixed-point logic with counting (FP+C) captures polynomial time on C.

Examples of classes of graphs with an excluded minor are the class of planar graphs (the complete graph with 5 vertices, K5, is an excluded minor), for every surface S the class of all graphs embeddable in S (every complete graph Kk such that (k−3)(k−4)/6 is greater than the genus of S is an excluded minor), the class of all graphs that can be embedded into R3 such that no cycle is nontrivially knotted (K7 is an excluded minor), for every k the class of all graphs that have a vertex cover of size at most k (Kk + 2 is an excluded minor), for every k the class of all graphs that have a feedback vertex set of size at most k (Kk + 3 is an excluded minor), and for every k the class of all graphs of tree width at most k (Kk + 2 is an excluded minor). To show the limitations of our theorem, let us also mention that the class of all cubic graphs (i.e., graphs in which every vertex has exactly three neighbors) does not exclude any graph as a minor.

A consequence of Theorem 1 is a simple polynomial-time isomorphism test for all classes of graphs with excluded minors: For each such class C a generic combinatorial “color refinement” algorithm, known as the k-dimensional WeisfeilerLehman algorithm, decides isomorphism of graphs in C. Remarkably, the algorithm does not build on any of the special graph-theoretic properties the graphs in C may have, only the parameter k depends on C. Neither does the algorithm use logic in any way; logic is only used to prove that it works. We describe the algorithm in Section 6, which can be read independently of the rest of the article.

To prove Theorem 1, we develop a definable structure theory for graphs with excluded minors. It builds on the structure theory for graphs with excluded minors developed by Robertson and Seymour (see Section 2), but ultimately leads to quite different structural decompositions of the graphs. On the graph theoretic side, the main new aspect of our theory is that we need to identify structural properties of graphs with excluded minors that are invariant under automorphisms, which is a prerequisite for making them definable (see the discussion in Section 3.1). On the logical side, we have to find a notion of definable decomposition that is flexible enough to be widely applicable, yet still carries enough information to enable us to prove Theorem 1. Definable (ordered) treelike decompositions, which will be discussed in Section 4, fulfill these requirements. Much of the definable structure theory we develop is fairly general and not tailored toward graphs with excluded minors, and it may find other applications.

The full proof of Theorem 1 is very long, more than 200 pages, and will be published in a monograph, a first draft of which can be found at http://www2.informatik.hu-berlin.de/~grohe/pub/cap/.

2. The Structure of Graphs with Excluded Minors

The structure of graphs with excluded minors has strong topological aspects. Hence before we describe it, we briefly review a few facts about surface topology. Surfaces are topological spaces that locally look like the plane (“two-manifolds”); in addition, surfaces are required to be connected and compact. They can be classified into two infinite families: The first family of orientable surfaces consists of the sphere, the torus (“donut”), the double-torus, the triple-torus (“pretzel”), etc. The surfaces in the second family of nonorientable surfaces are obtained by cutting finitely many disjoint disks out of a sphere and then glueing Möbius strips on all the resulting holes (see Figure 3). With one Möbius strip this yields the projective plane and with two Möbius strips the Klein bottle. A convenient parameter to characterize a surface is its Euler genus, which for an orientable surface is twice the number of holes (e.g., six for a pretzel) and for a nonorientable surface is the number of Möbius strips used in its construction. A graph is embeddable in a surface if it can be drawn on the surface without edges crossing.

In long series of papers, Robertson and Seymour19 developed a structure theory for graphs with excluded minors. In Robertson and Seymour,20 they proved a structure theorem which says that graphs with excluded minors can be decomposed into pieces that are almost embeddable into some surface. The pieces are arranged in a treelike fashion. Intuitively, almost embedding a graph into a surface means first removing a bounded number of vertices from the graph (these vertices are called apices) and then drawing the rest of the graph in the surface with no edges crossing except in a bounded number of regions (called vortices) in which the surface structure may be violated. The high-level structure is illustrated in Figure 4. Each vortex is attached to the boundary of a “hole” in the disk. It is beyond the scope of this article to describe the structure of the vortices; the main parameter, called width of a vortex, is the maximum number of disjoint paths in a vortex “across the hole.” This width of the vortices is supposed to be bounded in an almost embeddable graph. Thus overall there are four parameters in the definition of almost embeddability: The surface, the number of apices, the number of vortices, and the width of the vortices. These parameters depend on the excluded minor.

The structure theorem for graphs with excluded minors plays a central role in Robertson and Seymour’s proof of their famous Graph Minor Theorem,21 stating that every class of graphs that is closed under taking minors can be characterized by finitely many excluded minors. The structure theorem has also found various algorithmic applications.

3. Definable Orders and Definable Canonization

Ultimately, all known results about capturing polynomial time on classes of structures are proved by a reduction to ordered structures and the ImmermanVardi Theorem, and our Theorem 1 is no exception. These reductions are obtained either by defining linear orders on the structures under consideration or by defining ordered copies of the structures (a method known as definable canonization). In this section, we will briefly discuss both methods and illustrate them with a few examples. Then in Section 4 we will introduce the key concept of our definable structure theory, definable ordered treelike decompositions. Essentially, these are hierarchical decompositions of graphs into pieces on which we can define linear orders. We will see that once we have defined such decompositions in the logic FP on a class C of graphs, then we can prove that FP + C captures PTIME on C. The reader not interested in the technical details may safely skip the rest of this and the following section and continue with Section 5, where we show that graph classes with excluded minors admit ordered treelike decompositions definable in FP.

3.1. Graphs, isomorphisms, and definable relations

3.1. Graphs, isomorphisms, and definable relations

The vertex set of a graph G is denoted by V(G) and the edge set by E(G). An isomorphism from a graph G to a graph H is a one-to-one mapping f from V(G) onto V(H) such that for all vertices u, v

V(G) we have uv

V(G) we have uv

E(G) if and only if f(u)f(v)

E(G) if and only if f(u)f(v)

E(H). The representation-independence of graph properties discussed in Section 1.1 can be recast as invariance under isomorphisms: If a graph G has a property P and there is an isomorphism from G to a graph H, then H has property P as well. An automorphism of a graph G is an isomorphism f from G to G; it is nontrivial if f(v) ≠ v for at least one v.

E(H). The representation-independence of graph properties discussed in Section 1.1 can be recast as invariance under isomorphisms: If a graph G has a property P and there is an isomorphism from G to a graph H, then H has property P as well. An automorphism of a graph G is an isomorphism f from G to G; it is nontrivial if f(v) ≠ v for at least one v.

All formulas that appear in this article are FP formulas or FP+C formulas in the language of graphs. Free variables are always ranging over the vertices of a graph. By writing φ(x, y) we indicate that the free variables of the formula φ are among x, y, and G ⊨ φ[u, v] means that G satisfies φ if x is interpreted by u and y is interpreted by v. It is an important fact about definability (not only in FP and FP+C, but any logic) that definable sets and relations are invariant under automorphisms. That is, if φ(x1, …, xk) is a formula, G is a graph, and f is an automorphism of G then for all v1, …, vk

V(G) we have G ⊨ φ[v1,…, vk] if and only if G ⊨ φ[f(v1),…, f(vk)].

V(G) we have G ⊨ φ[v1,…, vk] if and only if G ⊨ φ[f(v1),…, f(vk)].

The easiest way to prove that a logic captures PTIME on a class C of graphs via the ImmermanVardi Theorem is by defining linear orders on the graphs in C in the logic. For example, on directed paths we can easily define linear orders in FP: The transitive closure of the edge relation of a directed path happens to be a linear order of the path’s vertices, and we have seen in Example 3 that the transitive closure is definable in FP. On other structures, we cannot define linear orders directly, but need “parameters” in our definition. For example, on an undirected path, it is impossible to define a linear order without parameters, simply because there is no canonical way of defining which endpoint of a path comes first. More generally, if a graph has a nontrivial automorphism then it is impossible to define a linear order on this graph. However, if we fix one endpoint of an undirected path then we can define a linear order in the logic FP. Similarly, if we fix two adjacent vertices in a cycle we can define a linear order on the cycle (see Figure 5). In general, we say that a formula φ (x, y, z1, …, zk) defines a linear order on a graph G with parameters z1, …, zk if there are vertices w1, …, wk such that the binary relation {(u, v)

V(G)2 | G ⊨ φ[u, v, w1,…, wk]} is a linear order of V(G). We say that a class C of graphs admits

V(G)2 | G ⊨ φ[u, v, w1,…, wk]} is a linear order of V(G). We say that a class C of graphs admits FP–definable linear orders if there is an FP-formula that defines a linear order on all graphs in G

C. It follows from the ImmermanVardi Theorem that if a class C admits

C. It follows from the ImmermanVardi Theorem that if a class C admits FP-definable linear orders then FP captures PTIME on C.

Graphs that admit FP-definable linear orders tend to be rare; even the trivial graphs with no edges do not admit FP-definable linear orders. Fortunately, there is a way to circumvent this problem. Instead of an order, we define an ordered copy of a graph. Note the difference: If we define an order on the graph, we know exactly which vertex comes first, second, etc. If we just have an ordered copy (but no mapping associating the vertices of the original graph with the vertices of the copy), it does not give us any additional information about the individual vertices of the original graph. Yet an ordered copy can be very useful. For example, we can use it to obtain a canonical adjacency matrix of our graph. But how can we define an ordered copy of a graph in logic without actually defining a linear order on the vertices? We can do this with a triple (φV(x), φE(x, y), φ< (x, y)) of formulas: applied to a graph G, we want these formulas to define a set V’ := {a|G ⊨ φv[a]}, a binary relation E’ := {ab | G ⊨ φE[a, b]}, and a binary relation <‘ := {ab | G ⊨ φ<[a, b]} such that G‘:= (V‘, E‘) is a graph isomorphic to G and <‘ is a linear order of V‘. The triple (φV (x), φE (x, y), φ< (x, y)) is called a transduction or syntactical interpretation. We usually work with transductions consisting of FP+C formulas. Remember that in FP+C we can not only speak about the vertices of a graph, but also about an initial segment of the integers. The trick is to use the integers to represent the vertices of the copy of the input graph and then use the natural order of the integers as an order on these vertices.

EXAMPLE 5. A k-star has k + 1 vertices, one center and k rays. Each ray has an edge to the center, and there are no other edges. Exploiting the fact that stars have many automorphisms, it can be shown that the class of all stars does not admit definable linear orders.

We can define an ordered copy (V‘, E‘, <) of a k-star S by letting V‘:= {0, 1, …, k} and E‘:= {0i | 1 ≤ i ≤ k} and letting <‘ be the natural order on V’ (see Figure 6). The definition of (V‘, E‘, <‘) can easily be formalized by an FP+C transduction (that does not depend on k). Counting is crucial here, because fixed-point logic without counting cannot determine how many rays a star has.

We say that a class C of graphs admits FP+C–definable canonization if there is an FP+C transduction defining an ordered copy of each graph in C. It follows from the ImmermanVardi Theorem that if a class C admits FP+C-definable canonization then FP+C captures PTIME on C. The idea of using definable canonization to capture polynomial time goes back to Immerman and Lander.12 It has been developed to great depth by Otto.17 Example 5 shows that the class of stars admits FP+C-definable canonization. By applying the same idea recursively, Immerman and Lander12 were able to prove that the class of all trees admits FP+C-definable canonization.

4. Ordered Treelike Decompositions

The key idea in the proof of Theorem 1 is to decompose graphs with excluded minors in a treelike fashion into “simple” pieces on which we can define linear orders in FP and then use an argument similar to the definable tree canonization by Immerman and Lander12 to show that the graphs admit FP-definable canonization.

The standard graph theoretic decomposition method, which is underlying Robertson and Seymour’s structure theorem for graphs with excluded minors (see Section 2), is based on tree decompositions. A tree decomposition of a graph G is a pair (T, β) consisting of a tree T and a mapping β associating with every node t of T a set β(t) ⊆ V(G), called the bag of t, subject to certain technical conditions making sure that the structure of the tree T approximates the connectivity structure of G. To utilize tree decompositions in our context we would have to define them in FP or FP+C. This is impossible because tree decompositions are usually not invariant under automorphisms of the underlying graph. We resolve this problem by introducing a more general notion of decomposition that we call treelike. In a treelike decomposition, we replace the tree T underlying a tree decomposition by a directed acyclic graph D. The idea is that certain restrictions of D to subtrees yield tree decompositions of G, and by including many such decompositions we can close the tree-like decompositions under automorphisms of a graph.

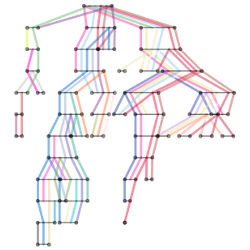

To get some idea how treelike decompositions look, consider Figure 7, which shows a treelike decomposition of the cycle C4:= ({1, 2, 3, 4}, {12, 23, 34, 41}). The numbers in the nodes of the decomposition describe the bags. The three red nodes form a tree decomposition of C4, and so do the two blue nodes. There are many other tree decompositions of C4 contained in the treelike decomposition in a similar way. Note the cyclic structure of the whole decomposition, which reflects the structure of the underlying cycle and is the reason for the invariance of the decomposition under automorphisms of the cycle. This cyclic structure is lost in a tree decomposition like the one consisting of the three red nodes.

A treelike decomposition of a graph G is FP–definable if there are FP formulas defining the vertex and edge set of the directed acyclic graph D and the bags β(t) of the nodes t

V (D). For example, the treelike decomposition of the cycle C4 shown in Figure 7 is

V (D). For example, the treelike decomposition of the cycle C4 shown in Figure 7 is FP-definable.

An ordered treelike decomposition of a graph G consists of a treelike decomposition together with a linear order of each bag. An ordered treelike decomposition is FP–definable if its underlying treelike decomposition is FP-definable and its bags admit FP-definable linear orders. A class C of graphs admits FP–definable ordered treelike decompositions if there are formulas defining an ordered treelike decomposition on every graph in C. The following lemma is a far reaching generalization of Immerman and Lander’s FP+C-definable canonization of trees.

LEMMA 1. Let C be a class of graphs that admits FP+C–definable ordered treelike decompositions. Then C admits FP+C–definable canonization.

It turns out that in our application of the lemma we can even define the ordered treelike decompositions in the logic FP.

5. The Definable Structure Theorem

THEOREM 2 (DEFINABLE STRUCTURE THEOREM). Every class of graphs with at least one excluded minor admits FP–definable ordered treelike decompositions.

This theorem is our main technical result. It implies Theorem 1 via Lemma 1 and the ImmermanVardi Theorem. To prove the Definable Structure Theorem, we built up the decomposition in stages described in the following six steps.

Step 1: Planar graphs. We start by proving that three-connected planar graphs admit FP-definable linear orders. Remember that a graph is three-connected if the deletion of any two vertices leaves it connected. The key step in the proof is to define the facial cycles of a three-connected planar graph in FP. We use the fact, going back to Whitney, that the facial cycles (boundaries of the faces) of a three-connected graph embedded in the plane are precisely the chordless and nonseparating cycles. In particular, this means that the facial cycles are the same for every embedding of the graph. Once we have defined the facial cycles, we can use three parameters to fix one facial cycle and then define in FP a linear order by “walking around this cycle in spirals.”

To show that arbitrary planar graphs admit FP-definable ordered treelike decompositions, we prove that the standard decomposition of a graph into its three-connected components yields an FP-definable treelike decomposition.

Step 2: Graphs embeddable in a surface. We exploit the fact that every surface of positive Euler genus has a noncontractible cycle. Cutting the surface open along such a cycle and glueing disks on the hole(s) yields one or two surfaces of strictly smaller Euler genus.

To define ordered treelike decompositions on graphs embeddable in a surface, we proceed by induction on the Euler genus of the surface. Planar graphs are the base case. In the inductive step, we try to define the facial cycles of a graph embedded in a surface. Either we succeed, then we can use the facial cycles to define a linear order in a similar way as for planar graphs. Or we find a noncontractible cycle along the way. Then we can delete this cycle, apply the induction hypothesis to the resulting graph embeddable in one or two surfaces of strictly smaller Euler genus, and lift the decomposition we obtain to the original graph, exploiting the fact that we can define in FP an order on the deleted cycle with just two parameters.

Step 3: Graphs of bounded tree width. A graph has tree width at most k if it has a tree decomposition, or equivalently, a treelike decomposition, where every bag contains at most k + 1 vertices. The width of a decomposition is 1 plus the maximum cardinality of the bags. It turns out that we can actually define treelike decompositions of width k on graphs of tree width at most k. We do this by inductively defining partial decompositions in a bottom up fashion. Once we have a treelike decomposition of width at most k we can trivially define linear orders on the bags of size (k + 1) using (k + 1) parameters.

Step 4: Almost planar graphs. Remember our informal description of almost embeddable graphs in Section 2. Let A(p, q, r, s) be the class of all graphs almost embeddable in a surface of Euler genus at most r with at most s apices and at most q vortices, each of width at most p. In this and the following step, we want to prove that for all p, q, r, s the class A(p, q, r, s) admits FP-definable ordered treelike decompositions. We proceed by induction on q + r. We already know how to deal with the class A(0, 0, 0, 0) of planar graphs and more generally the class A(0, 0, r, 0) of all graphs embeddable in a surface of Euler genus at most r.

In this step, we consider the classes A(p, 1, 0, 0) of almost planar graphs. We think of almost planar graphs as graphs being embedded into a disk with a vortex glued on the boundary of the disk. Figure 8 shows an example. In the key lemma of this step, and actually one of the most difficult lemmas of the whole proof of Theorem 1, we show that the facial cycles of an almost planar graph that are sufficiently far from the vortex do not depend on the specific embedding. That is, no matter how we divide the graph into a vortex and a part embedded in the disk, these cycles will end up in the disk, and they will be facial cycles. Moreover, these cycles are FP-definable. We call the subgraph of the graph induced by these cycles the center of the graph. Using the facial cycles, we can define a linear order on each connected component of the center. If we contract each connected component of the center to a single vertex, we obtain a graph of tree width bounded by O(p2). Using Step 3, we can define an ordered treelike decomposition of this graph, and we can extend the decomposition to an ordered treelike decomposition of the original graph using the linear orders of the components of the center.

Step 5: Almost embeddable graphs. We prove that the classes A(p, q, r, s) admit FP-definable ordered treelike decompositions by an inductive construction similar to, but far more complicated than the one in Step 2.

Step 6: Graphs with excluded minors. Let C be a class of graphs with excluded minors. By Robertson and Seymour’s structure theorem, there are p, q, r, s such that all graphs in C have a tree decomposition into pieces from A(p, q, r, s). If we could define such a decomposition in FP, then we could use the definable decomposition of the graphs in A(p, q, r, s) obtained in previous step to define ordered treelike decompositions of the graphs in C. But unfortunately I do not know how to define such a decomposition in FP.

Instead, we repeatedly apply the construction of the previous steps to inductively build up an ordered treelike decomposition of a graph in C from partial decompositions in a bottom up fashion similar to Step 3.

6. Application to Isomorphism Testing

It is a long standing open problem whether there is a polynomial-time algorithm deciding if two graphs are isomorphic. Polynomial-time isomorphism tests are known for many natural classes of graphs including the class of planar graphs,9 classes of graphs embeddable in a fixed surface4, 16 and more generally classes of graphs with excluded minors,18 and classes of graphs of bounded degree.14 The isomorphism test for graphs of bounded degree due to Luks14 involves some nontrivial group theory, and many later isomorphism algorithms built on the group theoretic techniques developed by Babai, Luks, and others in the early 1980s. In particular, Ponomarenko’s18 isomorphism algorithm for graphs with excluded minors heavily builds on these techniques.

Much simpler than the group theoretic algorithms is a combinatorial algorithm known as color refinement or vertex classification. It computes a coloring of the vertices of a graph by the following iterative procedure: initially, all vertices have the same color. Then in each round, the coloring is refined by assigning different colors to vertices that have a different number of neighbors of at least one color assigned in the previous round. Thus after the first round, two vertices have the same color if and only if they have the same degree. After the second round, two vertices have the same color if and only if they have the same degree and for each d the same number of neighbors of degree d. The algorithm stops if no further refinement is achieved; this happens after at most n rounds, where n is the number of vertices of the input graph. To use color refinement as an isomorphism test, it is applied to the disjoint union of two graphs. If after the refinement process the colorings of the two graphs differ, that is, for some color c the graphs have a different number of vertices of color c, then we know that they are nonisomorphic. Unfortunately, if both graphs have the same coloring, they may still be nonisomorphic. As an example, consider two nonisomorphic regular graphs with the same number of vertices, such as a cycle of length 6 and the disjoint union of two cycles of length 3. They will get the same coloring even though they are nonisomorphic. We say that color refinement distinguishes two graphs if they get different colorings.

The k-dimensional WeisfeilerLehman algorithm (for short: k-WL) is a generalization of the color refinement algorithm that colors k-tuples of vertices instead of vertices (see Cai et al.1 for a history of the algorithm). Let G be the input graph. Initially, two tuples (v1, …, vk), (w1, …, wk)

V(G)k get the same color if the mapping

V(G)k get the same color if the mapping

is an isomorphism from the induced subgraph G[{v1, …, vk}] to the induced subgraph G[{w1, …, wk}]. For i ≤ k, we say that a tuple (v1, …, vk) is an i-neighbor of a tuple (w1, …, wk) if vj = wj for all j ≠ i. In each round of the algorithm, the coloring is refined by assigning different colors to tuples that for some i

is an isomorphism from the induced subgraph G[{v1, …, vk}] to the induced subgraph G[{w1, …, wk}]. For i ≤ k, we say that a tuple (v1, …, vk) is an i-neighbor of a tuple (w1, …, wk) if vj = wj for all j ≠ i. In each round of the algorithm, the coloring is refined by assigning different colors to tuples that for some i

[k] and some color c have different numbers of i-neighbors of color c. The algorithm stops if no further refinement is achieved; this happens after at most nk rounds. Again, isomorphic graphs obviously get the same coloring, and we say that k-WL distinguishes two nonisomorphic graphs if they get different colorings. It is not so obvious that there are nonisomorphic graphs that are not distinguished by k-WL for any fixed k ≥ 3, let alone k = log n. By a beautiful construction, which has found several other applications in finite model theory, Cai et al.1 proved that for each n there are nonisomorphic three-regular n-vertex graphs Gn, Hn that cannot be distinguished by k-WL for k = o(n). They also noted that there is a connection between the WeisfeilerLehman algorithm and definability in fixed-point logic with counting.

[k] and some color c have different numbers of i-neighbors of color c. The algorithm stops if no further refinement is achieved; this happens after at most nk rounds. Again, isomorphic graphs obviously get the same coloring, and we say that k-WL distinguishes two nonisomorphic graphs if they get different colorings. It is not so obvious that there are nonisomorphic graphs that are not distinguished by k-WL for any fixed k ≥ 3, let alone k = log n. By a beautiful construction, which has found several other applications in finite model theory, Cai et al.1 proved that for each n there are nonisomorphic three-regular n-vertex graphs Gn, Hn that cannot be distinguished by k-WL for k = o(n). They also noted that there is a connection between the WeisfeilerLehman algorithm and definability in fixed-point logic with counting.

Based on this connection, we can prove our second main result, which states that a constant dimensional WL suffices to distinguish graphs with excluded minors. Thus the WeisfeilerLehman algorithm yields a simple combinatorial polynomial-time isomorphism test for every class C of graphs with excluded minors. This algorithm completely avoids the sophisticated group theoretic machinery of Ponomarenko’s algorithm. Actually, it is a generic algorithm that is not tailored for the specific input graphs in any way except by choosing the parameter k appropriately.

THEOREM 3. For every class C of graphs with excluded minors there is a constant k such that k-WL distinguishes any two nonisomorphic graphs in C.

Theorem 3 follows from the Definable Structure Theorem 2 and Lemma 1 (or directly from Theorem 1 via a result due to Otto17). Remarkably, Theorem 3 is not referring to logic in any way—the WeisfeilerLehman algorithm is a purely combinatorial algorithmyet our proof of the theorem heavily relies on logic.

Acknowledgment

I’d like to thank Martín Abadi, Dieter van Melkebeek, Moshe Vardi, and Thomas Wilke for very helpful comments on earlier versions of this article.

Figures

Figure 1. Three different adjacency matrices of a graph and the corresponding string encodings (cf. Example 2).

Figure 1. Three different adjacency matrices of a graph and the corresponding string encodings (cf. Example 2).

Figure 2. The graph in (c) is a minor of the graph in (a) that is obtained by deleting all blue edges and vertices and contracting all red edges in (b).

Figure 2. The graph in (c) is a minor of the graph in (a) that is obtained by deleting all blue edges and vertices and contracting all red edges in (b).

Figure 3. Construction of a projective plane: The boundary cycle of the Möbius strip is identified with the boundary of a hole in the sphere.

Figure 3. Construction of a projective plane: The boundary cycle of the Möbius strip is identified with the boundary of a hole in the sphere.

Figure 4. A graph almost embedded in a double torus with four vortices and six apices.

Figure 4. A graph almost embedded in a double torus with four vortices and six apices.

Figure 5. Defining an order on a path and cycle; the colored vertices are parameters of the definition.

Figure 5. Defining an order on a path and cycle; the colored vertices are parameters of the definition.

Figure 6. Canonization of a star.

Figure 6. Canonization of a star.

Figure 7. A treelike decomposition of the cycle C4.

Figure 7. A treelike decomposition of the cycle C4.

Figure 8. An almost planar graph (the outer edges connect vertices of distance two on the cycle).

Figure 8. An almost planar graph (the outer edges connect vertices of distance two on the cycle).

Join the Discussion (0)

Become a Member or Sign In to Post a Comment