On Sunday, January 28, 2007, noted computer scientist Jim Gray disappeared at sea in his sloop Tenacious. He was sailing singlehanded, with plans to scatter his mother’s ashes near the Farallon Islands, some 27 miles outside San Francisco’s Golden Gate. As news of Gray’s disappearance spread through his social network, his friends and colleagues began discussing ways to mobilize their skills and resources to help authorities locate Tenacious and rescue Gray. That discussion evolved over days and weeks into an unprecedented civilian search-and-rescue (SAR) exercise involving satellites, private planes, automated image analysis, ocean current simulations, and crowdsourced human computing, in collaboration with the U.S. Coast Guard. The team that emerged included computer scientists, engineers, graduate students, oceanographers, astronomers, business leaders, venture capitalists, and entrepreneurs, many of whom had never met one another before. There was ample access to funds, technology, organizational skills and know-how, and a willingness to work round the clock.

Even with these advantages, the odds of finding Tenacious were never good. On February 16, 2007, in consultation with the Coast Guard and Gray’s family, the team agreed to call off the search. Tenacious remains lost to this day, despite a subsequent extensive underwater search of the San Francisco coastline.4

Gray was famous for many things, including his determination to work with practitioners to transform the practical challenges they faced into scientific questions that could be formalized and addressed by the research community. As the search for Tenacious wound down, a number of us felt that even though the effort was not successful on its own terms, it offered a Jim-Gray-like opportunity to convert the particulars of the experience into higher-level technical observations of more general interest. One goal was to encourage efforts to “democratize” the ability of families and friends to use technology to assist SAR, so people whose social network is not as well-connected as Gray’s could undertake analogous efforts. In addition, we hoped to review the techniques we used and ask how to improve them further to make the next search effort more effective. To that end, in May 2008, the day after a public tribute to Gray at the University of California, Berkeley, we convened a meeting of search participants, including the Coast Guard. This was the first opportunity for the virtual organization that had searched for Tenacious to meet face-to-face and compare stories and perspectives.

One sober conclusion the group quickly reached was that its specific lessons on maritime SAR could have only modest impact, as we detail here. However, we still felt it would be constructive to cull lessons learned and identify technical challenges. First, maritime search is not a solved problem, and even though the number of lives to be saved is small, each life is precious. Second, history shows that technologies developed in one application setting often have greater impact in others. We were hopeful that lessons learned searching for Gray could inform efforts launched during larger life-threatening scenarios, including civilian-driven efforts toward disaster response and SAR during natural disasters and military conflict. Moreover, as part of the meeting, we also brainstormed about the challenges of safety and prevention.

This article aims to distill some of that discussion within computer science, which is increasingly interested in disaster response (such as following the 2007 Kenyan election crisis1 and 2010 Haiti earthquake2). We document the emergent structure of the team and its communication, the “polytechture” of the systems built during the search, and some of the related challenges; a longer version of this article3 includes additional figures, discussion, and technical challenges.

Background

The amateur effort to find Tenacious and its skipper began with optimism but little context as to the task at hand. We had no awareness of SAR practice and technology, and only a vague sense of the special resources Gray’s friends could bring to bear on a problem. With the benefit of hindsight, we provide a backdrop for our discussion of computer science challenges in SAR, reflecting first on the unique character of the search for Tenacious, then on the basics of maritime SAR as practiced today.

Tenacious SAR. The search for Tenacious was in some ways unique and in others a typical volunteer SAR. The uniqueness had its roots in Gray’s persona. In addition to being a singular scientist and engineer, he was distinctly social, cultivating friendships and collaborations across industries and sciences. The social network he built over decades brought enormous advantages to many aspects of the search, in ways that would be very difficult to replicate. First, the team that assembled to find Tenacious included leaders in such diverse areas as computing, astronomy, oceanography, and business management. Second, due to Gray’s many contacts in the business and scientific worlds, funds and resources were essentially unlimited, including planes, pilots, satellite imagery, and control of well-provisioned computing resources. Finally, the story of famous-scientist-gone-missing attracted significant media interest, providing public awareness that attracted help with manual image analysis and information on sightings of debris and wreckage.

On the other hand, a number of general features the team would wrestle with seem relatively universal to volunteer SAR efforts. First, the search got off to a slow start, as volunteers emerged and organized to take concrete action. By the time all the expertise was in place, the odds of finding a survivor or even a boat were significantly diminished. Second, almost no one involved in the volunteer search had any SAR experience. Finally, at every stage of the search, the supposition was that it would last only a day or two more. As a result, there were disincentives to invest time in improving existing practices and tools and positive incentives for decentralized and lightweight development of custom-crafted tools and practices.

If there are lessons to be learned, they revolve around questions of both the uniqueness of the case and its universal properties. The first category motivated efforts to democratize techniques used to search for Tenacious, some of which didn’t have to be as complex or expensive as they were in this instance. The second category motivated efforts to address common technological problems arising in any volunteer emergency-response situation.

Maritime SAR. Given our experience, maritime SAR is the focus of our discussion here. As it happens, maritime SAR in the U.S. is better understood and more professionally conducted than land-based SAR. Maritime SAR is the responsibility of a single federal agency: the Coast Guard, a branch of the U.S. Department of Homeland Security. By contrast, land-based SAR is managed in an ad hoc manner by local law-enforcement authorities. Our experience with the Coast Guard was altogether positive; not only were its members eminently good at their jobs, they were technically sophisticated and encouraging of our (often naïve) ideas, providing advice and coordination despite their own limited time and resources. In the U.S. at least, maritime settings are a good incubator for development of SAR technology, and the Coast Guard is a promising research partner. As of the time of writing, its funding is modest, so synergies and advocacy from well-funded computer-science projects would likely be welcome.

In hindsight, the clearest lessons for the volunteer search team were that the ocean is enormous, and the Coast Guard has a sophisticated and effective maritime SAR program. The meeting in Berkeley opened with a briefing from Arthur Allen, an oceanographer at the Coast Guard Headquarters Office of Search and Rescue, which oversees all Coast Guard searches, with an area of responsibility covering most of the Pacific, half of the Atlantic, and half of the Arctic Oceans. Here, we review some of the main points Allen raised at the meeting.

SAR technology is needed only when people get into trouble. From a public-policy perspective, it is cheaper and more effective to invest in preventing people from getting into trouble than in ways of saving them later; further discussion of boating safety can be found at http://www.uscgboating.org. We cannot overemphasize the importance of safety and prevention in saving lives; the longer version of this article3 includes more on voluntary tracking technologies and possible extensions.

Even with excellent public safety, SAR efforts are needed to handle the steady stream of low-probability events triggered by people getting into trouble. When notification of trouble is quick, the planning and search phases become trivial, and the SAR activity can jump straight to rescue and recovery. SAR is more difficult when notification is delayed, as it was with Gray. This leads to an iterative process of planning and search. Initial planning is intended to be quick, often consisting simply of the decision to deploy planes for a visual sweep of the area where a boat is expected to be. When an initial “alpha” search is not successful, the planning phase becomes more deliberate. The second, or “bravo,” search is planned via software using statistical methods to model probabilities of a boat’s location. The Coast Guard developed a software package for this process called SAROPS,5 which treats the boat-location task as a probabilistic planning problem it addresses with Bayesian machine-learning techniques. The software accounts for prior information about weather and ocean conditions and the properties of the missing vessel, as well as the negative information from the alpha search. It uses a Monte Carlo particle-filtering approach to infer a distribution of boat locations, making suggestions for optimal search patterns. SAROPS is an ongoing effort updated with models of various vessels in different states, including broken mast, rudder missing, and keel missing. The statistical training experiments to parameterize these models are expensive exercises that place vessels underway to track their movement. The Coast Guard continues to conduct these experiments on various parameters as funds and time permit. No equivalent software package or methodology is currently available for land-based SAR.
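To make the idea of combining drift with negative search information concrete, here is a minimal sketch of a weighted-particle update in Python. It is not SAROPS code and does not reflect its actual models; the function names, the constant mean current, the Gaussian leeway noise, the flat x/y grid in nautical miles, and the detection probability are all assumptions chosen for illustration. Resampling, which a full particle filter would include, is omitted.

```python
import random

def drift(particles, current, leeway_sigma, hours, rng):
    """Advance each (x, y, weight) particle by a mean current plus random leeway.

    Units are hypothetical: positions in nautical miles, velocities in knots.
    """
    cx, cy = current
    moved = []
    for x, y, w in particles:
        dx = (cx + rng.gauss(0.0, leeway_sigma)) * hours
        dy = (cy + rng.gauss(0.0, leeway_sigma)) * hours
        moved.append((x + dx, y + dy, w))
    return moved

def apply_negative_search(particles, searched_box, p_detect):
    """Bayesian update after an unsuccessful sweep of searched_box.

    A particle inside the box survives with probability (1 - p_detect);
    weights are then renormalized so they sum to 1.
    """
    xmin, ymin, xmax, ymax = searched_box
    updated = []
    for x, y, w in particles:
        inside = xmin <= x <= xmax and ymin <= y <= ymax
        updated.append((x, y, w * (1.0 - p_detect) if inside else w))
    total = sum(w for _, _, w in updated)
    return [(x, y, w / total) for x, y, w in updated]

def probability_in_box(particles, box):
    """Total weight of the particles that fall inside box."""
    xmin, ymin, xmax, ymax = box
    return sum(w for x, y, w in particles
               if xmin <= x <= xmax and ymin <= y <= ymax)

# Illustrative run with made-up numbers.
rng = random.Random(0)
n = 5000
particles = [(rng.gauss(0, 5), rng.gauss(0, 5), 1.0 / n) for _ in range(n)]
particles = drift(particles, current=(0.5, 0.0), leeway_sigma=0.2,
                  hours=10, rng=rng)
box = (0.0, -5.0, 10.0, 5.0)          # area covered by the first sweep
before = probability_in_box(particles, box)
particles = apply_negative_search(particles, box, p_detect=0.8)
after = probability_in_box(particles, box)
print(f"P(in swept box) before: {before:.3f}, after: {after:.3f}")
```

The unsuccessful sweep lowers the probability mass inside the swept box and shifts it to unsearched water, which is the sense in which a failed alpha search still informs the planning of the next one.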

Allen shared the Coast Guard’s SAR statistics, 2003–2006, which are included in the longer version of this article.3 They show that most cases occur close to shore, with many involving land-based vehicles going into the ocean. The opportunities for technologists to assist with maritime SAR are modest. In Allen’s U.S. statistics, fewer than 1,000 lives were confirmed lost in boating accidents each year, and only 200 to 300 deaths occurred after the Coast Guard had been notified and thus might have been avoided through rescue. A further 600 people per year remain unaccounted for, and, while it is unknown how many of them remained alive post-notification, some fraction of them are believed to have committed suicide. Relative to other opportunities to save lives through technology, the margin for improvement in maritime SAR is small. This reality frames the rest of our discussion, focusing on learning lessons from our experience that apply to SAR and hopefully other important settings as well.

Communication and Coordination

As in many situations involving groups of people sharing a common goal, communication and coordination were major aspects of the volunteer search for Gray. The organization of these “back-office” tasks was ad hoc and evolving, and, in retrospect, interesting patterns emerged around themes related to social computing, including organizational development, brokering of volunteers and know-how, and communicating with the media and general public. Many could be improved through better software.

Experience. The volunteer effort began via overlapping email threads among Gray’s colleagues and friends in the hours and days following his disappearance. Various people exchanged ideas about getting access to satellite imagery, hiring planes, and putting up missing-person posters. Many involved reaching out in a coordinated and thoughtful manner to third parties, but it was unclear who heard what information and who might be contacting which third parties. To solve that problem a blog called “Tenacious Search” was set up to allow a broadcast style of communication among the first group of participants. Initially, authorship rights on the blog were left wide open. This simple “blog-as-bulletin-board” worked well for a day or two for coordinating those involved in the search, loosely documenting our questions, efforts, skills, and interests in a way that helped define the group’s effort and organization.

Within a few days the story of Gray’s disappearance was widely known, however, and the blog transitioned from in-group communication medium to widely read publishing venue for status reports on the search effort, serving this role for the remainder of the volunteer search. This function was quickly taken seriously, so authorship on the blog was closed to additional members, and a separate “Friends of Jim” mailing list was set up for internal team communications. This transition led to an increased sense of organizational and social structure within the core group of volunteers.

Over the next few days, various individuals stepped into unofficial central roles for reasons of expedience or unique skills or both. The blog administrator evolved into a general “communications coordinator,” handling messages sent to a public email box for tips, brokering skill-matching for volunteers, and serving as a point of contact with outside parties. Another volunteer emerged as “aircraft coordinator,” managing efforts to find, pilot, and route private planes and boats to search for Gray. A third volunteer assumed the role of “analysis coordinator,” organizing various teams on image analysis and ocean-drift modeling at various organizations in the U.S. A fourth was chosen by Gray’s family to serve as “media coordinator,” the sole contact for press and public relations. These coordinator roles were identified in retrospect, and the role names were coined for this article to clarify the discussion. Individuals with management experience in the business world provided guidance along the way, but much of the organizational development happened in an organic “bottom-up” mode.

On the communications front, an important role that quickly emerged was the brokering of tasks between skilled or well-resourced volunteers and people who could take advantage of those assets. This began in an ad hoc broadcast mode on the blog and email lists, but, as the search progressed, offers of help came from unexpected sources, and coordination and task brokering became more complex. Volunteers with science and military backgrounds emerged with offers of specific technical expertise and suggestions for acquiring and analyzing particular satellite imagery. Others offered to search in private planes and boats, sometimes at serious risk to their own lives, and so were discouraged by the team and the Coast Guard. Yet others offered to post “Missing Sailor” posters in marinas, also requiring coordination. Even psychic assistance was offered. Each offer took time from the communications coordinator to diplomatically pursue and route or deflect. As subteams emerged within the organization, this responsibility became easier; the communications coordinator could skim an inbound message and route it to one of the other volunteer coordinators for follow-up.

Similar information-brokering challenges arose in handling thousands of messages from members of the general public, who had been encouraged by the media to keep their eyes open for boats and debris and to report sightings to a public email address. The utility of many of these messages was ambiguous, and, given the sense of urgency, it was often difficult to decide whether to bring them to the attention of busy people: the Coast Guard, the police, Gray’s family, and technical experts in image analysis and oceanography. In some cases, tipsters got in contact repeatedly, and it became necessary to assemble conversations over several days to establish a particular tipster’s credibility. This became burdensome as email volume grew.

Discussion. On reflection, the organization’s evolution was one of the most interesting aspects of its development. Leadership roles emerged fairly organically, and subgroups formed with little discussion or contention over process or outcome. Some people had certain baseline competencies; for example, the aircraft coordinator was a recreational pilot, and the analysis coordinator had both management experience and contacts with image-processing experts in industry and government. In general, though, leadership developed by individuals stepping up to take responsibility and others stepping back to let them do their jobs, then jumping in to help as needed. The grace with which this happened was a bit surprising, given the kind of ambitious people who had surrounded Gray, and the fact that the organization evolved largely through email. The evolution of the team seems worthy of a case study in ad hoc organizational development during crisis.

It became clear that better software is needed to facilitate group communication and coordination during crises. By the end of the search for Tenacious—February 16, 2007—various standard communication methods were in use, including point-to-point email and telephony, broadcast via blogs and Web pages, and multicast via conference calls, wikis, and mailing lists. This mix of technologies was natural and expedient in the moment but meant communication and coordination were a challenge. It was difficult to work with the information being exchanged, represented in natural-language text and stored in multiple separate repositories. As a matter of expedience in the first week, the communications coordinator relied on mental models of basic information, like who knew what information and who was working on what tasks. Emphasizing mental note taking made sense in the short term but limited the coordinator’s ability to share responsibility with others as the “crisis watch” extended from hours to days to weeks.

Various aspects of this problem are addressable through well-known information-management techniques. But in using current communication software and online services, it remains difficult to manage an evolving discussion that includes individuals, restricted groups, and public announcements, especially in a quickly changing “crisis mode” of operation. Identifying people and their relationships is challenging across multiple communication tools and recipient endpoints. Standard search and visualization metaphors—folders, tags, threads—are not well-matched to group coordination.

Brokering volunteers and tasks introduces further challenges, some discussed in more detail in the longer version of this article.3 In any software approach to addressing them, one constraint is critical: In an emergency, people do not reach for new software tools, so it is important to attack the challenges in a way that augments popular tools, rather than seeking to replace or recreate them.

Imagery Acquisition

When the volunteer search began, our hope was to use our special skills and resources to augment the Coast Guard with satellite imagery and private planes. However, as we learned, realtime search for boats at sea is not as simple as getting a satellite feed from a mapping service or borrowing a private jet.

Experience. The day after Tenacious went missing, Gray’s friends and colleagues began trying to access satellite imagery and planes. One of the first connections was to colleagues in earth science with expertise in remote sensing. In an email message in the first few days concerning the difficulty of using satellite imagery to find Tenacious, one earth scientist said, “The problem is that the kind of sensors that can see a 40ft (12m) boat have a correspondingly narrow field of view, i.e., they can’t see too far either side of straight down… So if they don’t just happen to be overhead when you need them, you may have a long wait before they show up again. …[A]t this resolution, it’s strictly target-of-opportunity.”

Undeterred, the team pursued multiple avenues to acquire remote imagery through connections at NASA and other government agencies, as well as at various commercial satellite-imagery providers, while the satellite-data teams at both Google and Microsoft directed us to their commercial provider, Digital Globe. The table here outlines the data sources considered during the search.

As we discovered, distribution of satellite data is governed by national and international law. We attempted from the start to get data from the SPOT-5 satellite but were halted by the U.S. State Department, which invoked the International Charter on Space and Major Disasters to claim exclusive access to the data over the study area, retroactive to the day before our request. We also learned, when getting data from Digital Globe’s QuickBird satellite, that full-resolution imagery is available only after a government-mandated 24-hour delay; before that time, Digital Globe could provide only reduced-resolution images.

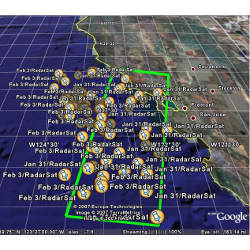

The first data acquired from the QuickBird satellite was focused well south of San Francisco, near Catalina Island, and the odds of Tenacious being found in that region were slim. On the other hand, it seemed important to begin experimenting with real data to see how effectively the team could process it, and this early learning proved critical to getting the various pieces of the image-processing pipeline in place and tested. As the search progressed, Digital Globe was able to acquire imagery solidly within the primary search area, and the image captures provided to the team were some of the biggest data products Digital Globe had ever generated: more than 87 gigapixels. Even so, the areas covered by the satellite captures were dwarfed by the airborne search conducted by the Coast Guard immediately after Gray went missing (see Figure 5 of the longer version of this article3).

We were able to establish contacts at NASA regarding planned flights of its ER-2 “flying laboratory” aircraft over the California coast. The ER-2 is typically booked on scientific missions and requires resources—fuel, airport time, staffing, wear-and-tear—to launch under any circumstances. As it happened, the ER-2 was scheduled for training flights in the area where Tenacious disappeared. Our contacts were able to arrange flight plans to pass over specific areas of interest and record various forms of digital imagery due to a combination of fortunate circumstance and a well-connected social network. Unfortunately, a camera failure early in the ER-2 flight limited data collection.

In addition to these relatively rare imaging resources, we chartered private planes to fly over the ocean, enabling volunteer spotters to look for Tenacious with their naked eyes and record digital imagery. This effort ended up being more limited than we expected. One cannot simply charter or borrow a private jet and fly it out over the ocean. Light planes are not designed or allowed to fly far offshore. Few people maintain planes equipped for deep-sea search, and flights over deep sea can be undertaken only by pilots with appropriate maritime survival training and certification. Finally, aircraft of any size require a flight plan to be filed and approved with a U.S. Flight Service Station in order to cross the U.S. Air Defense Identification Zone beginning a few miles offshore. As a result of these limitations and many days of bad weather, we were able to arrange only a small number of private overflights, with all but one close to shore.

Another source of imagery considered was land-based video cameras that could perhaps have more accurately established a time of departure for Tenacious, beyond what we knew from Gray’s mobile phone calls to family members on his way out. The Coast Guard operates a camera on the San Francisco Bay that is sometimes pointed out toward the Golden Gate and the ocean, but much of the imagery captured for that day was in a state of “white-out,” rather than useful imagery, perhaps due to foggy weather.

Discussion. The search effort was predicated on quick access to satellite imagery and was surprisingly successful, with more than 87 gigapixels of satellite imagery acquired from Digital Globe alone within about four days of capture. Yet in retrospect we would have wanted much more data, with fewer delays. The longer version of this article3 reviews some of the limitations we encountered, as well as ideas for improving the ability to acquire imagery in life-threatening emergencies.

Policy concerns naturally come up when discussing large volumes of remote imagery, and various members of the amateur team voiced concern about personal privacy during the process. Although popular media-sharing Web sites provide widespread access to crowdsourced and aggregated imagery, they have largely confined themselves to benign settings (such as tourism and ornithology), whereas maritime SAR applications (such as monitoring marinas and shipping lanes) seem closer to pure surveillance. The potential for infringing on privacy raises understandable concern, and the policy issues are not simple. Perhaps our main observation on this front was the need for a contextual treatment of policy, balancing general-case social concerns against specific circumstances for using the data, in our case, trying to rescue a friend. On the other hand, while the search for Tenacious and its lone sailor was uniquely urgent for us, similar life-and-death scenarios occur on a national scale with some frequency. So, we would encourage research into technical solutions that can aggressively harvest and process imagery while provably respecting policies that limit image release based on context.

From Imagery to Coordinates

Here, we discuss the processing pipeline(s) and coordination mechanisms used to reduce the raw image data to qualified search coordinates—the locations to which planes were dispatched for a closer look. This aspect of the search was largely data-driven, involving significant technical expertise and much more structured and tool-intensive processes than those described earlier. On the other hand, since time was short and the relevant expertise so specialized, it also led to simple interfaces between teams and their software. The resulting amalgam of software was not the result of a specific architecture, in the usual sense of the word (archi- “chief” + techton “builder”). A more apt term for the software and workflow described here might be a polytechture, the kind of system that emerges from the design efforts of many independent actors.

Overview. The figure here outlines the ultimate critical-path data and control flow that emerged, depicting the ad hoc pipeline developed for Digital Globe’s satellite imagery. In the paragraphs that follow, we also discuss the Mechanical Turk pipeline, which was developed early on and used to process NASA ER-2 overflight imagery but was later replaced by the ad hoc pipeline.

Before exploring the details, it would be instructive to work “upstream” through the pipeline, from final qualified targets back to initial imagery. The objective of the image-processing effort was to identify one or more sets of qualified search coordinates to which aircraft could be dispatched (lower right of the figure). To do so, it was not sufficient to simply identify the coordinates of qualified targets on the imagery; rather, we had to apply a mapping function to the coordinates to compensate for drift of the target from the time of image capture to flight time. This mapping function was provided by two independent “drift teams” of volunteer oceanographers, one based at the Monterey Bay Aquarium Research Institute and Naval Research Lab, another at NASA Ames (“Ocean Drift Modeling” in the figure).
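As a rough illustration of what such a mapping function does, the sketch below shifts a target's latitude and longitude by a constant drift velocity over the hours between image capture and flight time. This is a deliberately simplified stand-in, not the drift teams' method: their models were driven by ocean-current simulations, whereas the constant velocity, the flat-earth approximation (one nautical mile per minute of latitude), and the function name `drift_compensate` are assumptions made here for illustration only.

```python
import math

def drift_compensate(lat_deg, lon_deg, east_knots, north_knots, hours):
    """Shift a sighting position by a constant drift velocity.

    Uses a local flat-earth approximation: 60 nautical miles per degree of
    latitude, with longitude scaled by the cosine of the mean latitude.
    Reasonable only for short distances away from the poles.
    """
    north_nm = north_knots * hours
    east_nm = east_knots * hours
    new_lat = lat_deg + north_nm / 60.0
    mean_lat = math.radians((lat_deg + new_lat) / 2.0)
    new_lon = lon_deg + east_nm / (60.0 * math.cos(mean_lat))
    return new_lat, new_lon

# Hypothetical target seen in imagery 36 hours before the planned flight,
# drifting south-southeast at under half a knot.
capture_position = (37.5, -123.2)
search_position = drift_compensate(*capture_position,
                                   east_knots=0.2, north_knots=-0.4, hours=36)
print(search_position)
```

Even at these modest assumed speeds the target moves more than ten nautical miles between capture and flight, which is why raw image coordinates could not be handed directly to pilots.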

The careful qualification of target coordinates was particularly important. It was quickly realized that many of the potential search coordinates would be far out at sea and, as mentioned earlier, require specialized aircraft and crews. Furthermore, flying low-altitude search patterns offshore in single-engine aircraft implied a degree of risk to the search team. Thus, it was incumbent on the analysis team to weigh this risk before declaring a target to be qualified. A key step in the process was a review of targets by naval experts prior to their final qualification (“Target Qualification” in the figure).

Prior to target qualification, an enormous set of images had to be reviewed and winnowed down to a small set of candidates that appeared to contain boats. To our surprise and disappointment, there were no computer-vision algorithms at hand well suited to this task, so it was done manually. At first, image-analysis tasking was managed using Amazon’s Mechanical Turk infrastructure to coordinate volunteers from around the world. Subsequently, a distributed team of volunteers with expertise in image analysis used a collection of ad hoc tools to coordinate and perform the review function (“Image Review” in the figure).

Shifting to the start of the pipeline, each image data set required a degree of preprocessing prior to human analysis of the imagery, a step performed by members of Johns Hopkins’s Department of Physics and Astronomy in collaboration with experts at Caltech and the University of Hawaii. At the same time, a separate team at the University of Texas’s Center for Space Research georeferenced the image-file headers onto a map included in a Web interface for tracking the progress of image analysis (“Image Preprocessing,” “Common Operating Picture,” and “Staging” in the figure).

The eventual workflow was a distributed, multiparty process. Its components were designed and built individually, “bottom-up,” by independent volunteer teams at various institutions. The teams also had to quickly craft interfaces to stitch together the end-to-end workflow with minimal friction. An interesting and diverse set of design styles emerged, depending on a variety of factors. In the following sections, we cover these components in greater detail, this time from start to finish:

Preprocessing. Once the image providers had data and the clearance to send it, they typically sent notification of availability via email to the image-analysis coordinator, together with an ftp address and the header file describing the collected imagery (“the collection”).

Upon notification, the preprocessing team at Johns Hopkins began copying the data to its cluster. Meanwhile, the common storage repository at the San Diego Supercomputer Center began ftp-ing the data to ensure its availability, with a copy of the header passed to a separate geo-coordination team at the University of Texas that mapped the location covered by the collection, adding it to a Web site. That site provided the overall shared picture of imagery collected and analyses completed and was used by many groups within the search team to track progress and solicit further collections.

Analysis tasking and result processing. Two approaches to the parallel processing of the tiled images were used during the course of the search. In each, image tiles (or smaller subtiles) had to be farmed out to human analysts and the results of their analysis collated and further filtered to avoid a deluge of false positives.

The initial approach was to use Amazon’s Mechanical Turk service to solicit and task a large pool of anonymous reviewers whose credentials and expertise were not known to us.

Mechanical Turk is a “crowdsourcing marketplace” for coordinating the efforts of humans performing simple tasks from their own computers. Given that the connectivity and display quality available to them were unknown, Mechanical Turk was configured to supply users with work items called Human Intelligence Tasks (HITs), each consisting of a few 300×300-pixel image sub-tiles. Using a template image we provided of what we were looking for, the volunteers were asked to score each sub-tile for evidence of similar features and provide comments on artifacts of interest. This was an exceedingly slow process due to the number of HITs required to process a collection.

In addition to handling the partitioning of the imagery across volunteers, Mechanical Turk bookkeeping was used to ensure that each sub-tile was redundantly viewed by multiple volunteers prior to declaring the pipeline “complete.” Upon completion, and at checkpoints along the way, the system also generated reports aggregating the results received concerning each sub-tile.
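The redundancy bookkeeping and per-sub-tile reporting described above can be illustrated with a minimal sketch. It is not the configuration the team actually used: the review tuple layout, the sub-tile identifiers, and the `MIN_VIEWS` threshold are all assumptions made for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical setting; the article does not state how many reviews were required.
MIN_VIEWS = 3


def aggregate(reviews, min_views=MIN_VIEWS):
    """Group (subtile_id, score, comment) reviews into a per-sub-tile report.

    Returns (report, pending): report covers sub-tiles with enough
    independent views; pending lists sub-tiles that still need reviews.
    """
    by_tile = defaultdict(list)
    for subtile_id, score, comment in reviews:
        by_tile[subtile_id].append((score, comment))

    report, pending = {}, []
    for subtile_id, entries in by_tile.items():
        if len(entries) < min_views:
            pending.append(subtile_id)
            continue
        report[subtile_id] = {
            "views": len(entries),
            "mean_score": mean(s for s, _ in entries),
            "comments": [c for _, c in entries if c],
        }
    return report, sorted(pending)


# Made-up reviews: one sub-tile fully reviewed, one still short of MIN_VIEWS.
reviews = [
    ("t1_s004", 1, ""), ("t1_s004", 0, ""), ("t1_s004", 2, "whitecap?"),
    ("t1_s117", 4, "elongated bright object"), ("t1_s117", 5, "possible hull"),
]
report, pending = aggregate(reviews)
```

In this sketch the pipeline would count as “complete” only once `pending` is empty; calling `aggregate` earlier corresponds to a checkpoint report.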

False positives were a significant concern, even in the early stages of processing, so a virtual team of volunteers who identified themselves as having some familiarity with image analysis (typically astronomical or medical imagery rather than satellite imagery) was assembled to perform this filtering. In order to distribute the high-scoring sub-tiles among them, the image-analysis team configured an iterative application of Mechanical Turk accessible only to the sub-team, with the high-scoring sub-tiles from the first pipeline fed into it. The coordinator then used the reports generated by this second pipeline to drive the target-qualification process. This design pattern of an “expertise hierarchy” seems likely to have application in other crowdsourcing settings.
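As a rough illustration of the “expertise hierarchy” pattern, the sketch below cascades two filters: a broad first stage whose aggregated scores select candidates, and a smaller expert stage that re-scores only those candidates. The function name, score scales, and thresholds are hypothetical and are not taken from the team's pipeline.

```python
def cascade(first_stage_scores, expert_review, novice_threshold, expert_threshold):
    """Two-stage filter: crowd scores select candidates, experts re-score them.

    first_stage_scores: dict of sub-tile id -> aggregated crowd score
    expert_review: callable taking a sub-tile id and returning an expert score
    Returns (candidates, qualified).
    """
    # Stage 1: keep only the sub-tiles that the crowd scored highly.
    candidates = [t for t, s in first_stage_scores.items() if s >= novice_threshold]
    # Stage 2: the smaller expert pool looks only at those candidates.
    expert_scores = {t: expert_review(t) for t in candidates}
    qualified = sorted(t for t, s in expert_scores.items() if s >= expert_threshold)
    return candidates, qualified


# Made-up scores; a dict lookup stands in for the human expert stage.
crowd = {"a": 0.2, "b": 3.5, "c": 4.1, "d": 1.0}
expert = {"b": 1, "c": 5}.get
candidates, qualified = cascade(crowd, expert, novice_threshold=3.0, expert_threshold=4)
```

The point of the pattern is that scarce expert attention is spent only on the small fraction of sub-tiles that survive the first stage.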

A significant cluster of our image reviewers were co-located at the Johns Hopkins astronomy research center. These volunteers, with ample expertise, bandwidth, high-quality displays, and a sense of personal urgency, realized they could process the imagery much faster than novices scheduled by Mechanical Turk. This led to two modifications in the granularity of tasking: larger sub-tiles and a Web-based visual interface to coordinate downloading them to client-specific tools.

They were accustomed to looking for anomalies in astronomical imagery and were typically able to rapidly display, scan, and discard sub-tiles that were three to four times larger than those presented to amateurs. This ability yielded an individual processing rate of approximately one (larger) sub-tile every four seconds, including tiles requiring detailed examination and entry of commentary, as compared to the 20-to-30-second turnaround for each Mechanical Turk HIT. The overall improvement in productivity over Mechanical Turk was considerably better than these numbers indicate, because the analysts’ experience reduced the overhead of redundant analysis, and their physical proximity facilitated communication and cross-training.

A further improvement was that the 256 sub-tiles within each full-size tile were packaged into a single zip file. Volunteers could then use their favorite image-browsing tools to page from one sub-tile to the next with a single mouse click. To automate tasking and results collection, this team used scripting tools to create a Web-based visual interface through which it (and similarly equipped volunteers worldwide) could visually identify individual tiles requiring work, download them, and then submit their reports.
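The packaging step can be sketched as follows, assuming the 256 sub-tiles form a 16×16 grid over a square tile. The image representation (a 2D list of grayscale values), the plain-text PGM encoding, and the file-naming scheme are illustrative assumptions; the article does not say which formats or tools the team used.

```python
import io
import zipfile

GRID = 16  # assumed layout: 16 x 16 = 256 sub-tiles per full-size tile


def split_tile(tile, grid=GRID):
    """Yield (row, col, subtile) for a square 2D list of grayscale pixels."""
    size = len(tile)
    if size % grid or any(len(r) != size for r in tile):
        raise ValueError("tile must be square with side divisible by grid")
    step = size // grid
    for r in range(grid):
        for c in range(grid):
            rows = tile[r * step:(r + 1) * step]
            yield r, c, [row[c * step:(c + 1) * step] for row in rows]


def to_pgm(subtile):
    """Encode a sub-tile as plain-text PGM (P2), chosen only for simplicity."""
    h, w = len(subtile), len(subtile[0])
    body = "\n".join(" ".join(str(v) for v in row) for row in subtile)
    return f"P2\n{w} {h}\n255\n{body}\n".encode("ascii")


def package_tile(tile, tile_name):
    """Return the bytes of a zip archive holding all sub-tiles of one tile."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        for r, c, sub in split_tile(tile):
            # Zero-padded names keep the files in scan order when sorted.
            zf.writestr(f"{tile_name}/r{r:02d}_c{c:02d}.pgm", to_pgm(sub))
    return buf.getvalue()


# Tiny synthetic 32x32 tile, so each sub-tile is 2x2 pixels.
tile = [[(x + y) % 256 for x in range(32)] for y in range(32)]
archive = package_tile(tile, "tile_0001")
```

Bundling one archive per tile means a volunteer makes a single download and then pages through the sub-tiles locally, which is the decoupling of file access from image browsing that the text describes.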

In this interface, tiles were superimposed on a low-resolution graphic of the collection that was, in turn, geo-referenced and superimposed on a map. This allowed the volunteers to prioritize their time by working on the most promising tiles first (such as those not heavily obscured by cloud cover).

The self-tasking capability afforded by the visual interface also supported collaboration and coordination among the co-located expert analysts who worked in “shifts” and had subteam leaders who would gather and score the most promising targets. Though scoring of extremely promising targets was performed immediately, the periodic and collective reviews that took place at the end of each shift promoted discussion among the analysts, allowing them to learn from one another and adjust their individual standards of reporting.

In summary, we started with a system centered on crowdsourced amateur analysts and converged on a solution in which individuals with some expertise, though not in this domain, were able to operate at a very quick pace, greatly outperforming the crowdsourced alternative. This operating point, in and of itself, was an interesting result.

Target qualification. The analysis coordinator examined reports from the analysis pipelines to identify targets for submission to the qualification step. With Mechanical Turk, this involved a few hours sifting through the output of the second Mechanical Turk stage. Once the expert pipeline was in place, the coordinator needed to examine only a few filtered and scored targets per shift.

Promising targets were then submitted to a panel of two reviewers, each with expertise in identifying engineered artifacts in marine imagery. The analysis coordinator isolated these reviewers from one another, in part to avoid cross-contamination, but also to spare each of them from having to individually carry the weight of a potentially risky decision to initiate a search mission, while avoiding overly biasing them in a negative direction. Having discussed their findings with each reviewer, the coordinator would then make the final decision to designate a target as qualified and thus worthy of search.

Given the dangers of deep-sea flights, this review step included an intentional bias by imposing less rigorous constraints on targets that had likely drifted close to shore than on those farther out at sea.

Drift modeling. Relatively early in the analysis process, a volunteer with marine expertise recognized that, should a target be qualified, it would be necessary to estimate its movement since the time of image capture. A drift-modeling team was formed, ultimately consisting of two sub-teams of oceanographers with access to two alternative drift models. As image processing proceeded, these sub-teams worked in the background to parameterize their models with weather and ocean-surface data during the course of the search. Thus, once targets were identified, the sub-teams could quickly estimate likely drift patterns.

The drift models utilized a particle-filtering approach of virtual buoys that could be released at an arbitrary time and location, and for which the model would then produce a projected track and likely endpoint at a specified end time. In practice, one must release a string of adjacent virtual buoys to account for the uncertainty in the initial location and the models’ sensitivity to local effects that can have fairly large influence on buoy dispersion. The availability of two independent models, with multiple virtual buoys per model, greatly increased our confidence in the prediction of regions to search.
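To make the idea of releasing a string of adjacent virtual buoys concrete, here is a toy Monte Carlo ensemble. It is emphatically not either of the oceanographers' models: it assumes a constant mean current, Gaussian noise as a stand-in for local effects, and a flat-earth distance conversion, and every parameter value is invented for illustration.

```python
import math
import random

KM_PER_DEG_LAT = 111.0  # rough flat-earth conversion, adequate for a sketch


def drift(lat, lon, hours, current_kmh, noise_kmh, rng):
    """Advect one virtual buoy hour by hour under a constant mean current
    (east, north in km/h) plus Gaussian noise standing in for local effects."""
    for _ in range(hours):
        east = current_kmh[0] + rng.gauss(0.0, noise_kmh)
        north = current_kmh[1] + rng.gauss(0.0, noise_kmh)
        lat += north / KM_PER_DEG_LAT
        lon += east / (KM_PER_DEG_LAT * math.cos(math.radians(lat)))
    return lat, lon


def search_box(lat0, lon0, hours, current_kmh, noise_kmh=0.5,
               n_buoys=25, spread_deg=0.02, seed=0):
    """Release a string of adjacent buoys around (lat0, lon0) and return the
    bounding box (min_lat, min_lon, max_lat, max_lon) of their endpoints."""
    rng = random.Random(seed)
    ends = []
    for i in range(n_buoys):
        # Offsets along a short line model uncertainty in the start position.
        offset = spread_deg * (i / max(n_buoys - 1, 1) - 0.5)
        ends.append(drift(lat0 + offset, lon0 + offset, hours,
                          current_kmh, noise_kmh, rng))
    lats, lons = zip(*ends)
    return min(lats), min(lons), max(lats), max(lons)


# Illustrative values only: a point near the Farallon Islands, 48 hours,
# and a made-up mean current of 0.3 km/h east and 0.8 km/h south.
box = search_box(37.7, -123.0, hours=48, current_kmh=(0.3, -0.8))
```

Even in this toy version, the box grows with the elapsed time, the noise level, and the spread of starting points, which mirrors why early scenarios with large uncertainty produced large search boxes.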

Worth noting is that, although these drift models were developed by leading scientists in the field, the results often involved significant uncertainty. This was particularly true in the early part of the search, when drift modeling was used to provide a “search box” for Gray’s boat and had to account for many scenarios, including whether the boat was under sail or with engines running. These scenarios reflected very large uncertainty and led to large search boxes. By the time the image processing and weather allowed for target qualification, the plausible scenario was reduced to a boat adrift from a relatively recent starting point. Our colleagues in oceanography and the Coast Guard said the problem of ocean-drift modeling merits more research and funding; it would also seem to be a good area for collaboration with computer science.

The drift-modeling team developed its own wiki-based workflow interface. The analysis coordinator was given a Web site where he could enter a request to release virtual “drifters” near a particular geolocation at a particular time. Requests were processed by the two trajectory-modeling teams, and the resulting analysis, including maps of likely drift patterns, was posted back to the coordinator via the drift team’s Web site. Geolocations in latitude/longitude are difficult to transcribe accurately over the phone, so using the site helped ensure correct inputs to the modeling process.

A more apt term for the software and workflow described here might be a polytechture, the kind of system that emerges from the design efforts of many independent actors.

Analysis results. The goal of the analysis team was to identify qualified search coordinates. During the search, it identified numerous targets, but only two were qualified: One was in ER-2 flyover imagery near Monterey, originally flagged by Mechanical Turk volunteers; the other was in Digital Globe imagery near the Farallon Islands, identified by a member of the more experienced image-processing team.3 Though the low number might suggest our filtering of targets was overly aggressive, we have no reason to believe potential targets were missed. Our conclusion is simply that the ocean surface is not only very large but also very empty.

Once qualified, these two targets were then drift-modeled to identify coordinates for search boxes. For the first target, the drift models indicated it should have washed ashore in Monterey Bay. Because this was a region close to shore, it was relatively easy to send a private plane to the region, and we did. The second target was initially not far from the Farallon Islands, with both models predicting it would have drifted into a reasonably bounded search box within a modest distance from the initial location. Given our knowledge of Gray’s intended course for the day, this was a very promising target, so we arranged a private offshore SAR flight. Though we did not find Tenacious, we did observe a few fishing vessels of Tenacious’s approximate size in the area. It is possible that the target we identified was one of these vessels. Though the goal of the search was not met, this particular identification provided some validation of the targeting process.

Discussion. The image-processing effort was the most structured and technical aspect of the volunteer search. In trying to cull its lessons, we highlight three rough topics: polytechtural design style, networked approaches to search, and civilian computer-vision research targeted at disaster-response applications. For more on the organizational issues that arose in this more structured aspect of the search, see the longer version of this article.3

Polytechture. The software-development-and-deployment process that emerged was based on groups of experts working independently. Some of the more sophisticated software depended on preexisting expertise and components (such as parallelized image-processing pipelines and sophisticated drift-modeling software). In contrast, some software was ginned up for the occasion, building on now-standard Web tools like wikis, scripting languages, and public geocoding interfaces; it is encouraging to see how much was enabled through these lightweight tools.

Redundancy was an important theme in the process. Redundant ftp sites ensured availability; redundant drift modeling teams increased confidence in predictions; and redundant target qualification by experts provided both increased confidence and limits on “responsibility bias.”

Perhaps the most interesting aspect of this loosely coupled software-development process was the variety of interfaces that emerged to stitch together the independent components: the cascaded Mechanical Turk interface for hierarchical expertise in image analysis; the ftp/email scheme for data transfer and staging; the Web-based “common operating picture” for geolocation and coarse-grain task tracking; the self-service “checkin/checkout” interface for expert image analysis; the decoupling of image file access from image browsing software; and the transactional workflow interface for drift modeling. Variations in these interfaces seemed to emerge from both the tasks at hand and the styles of the people involved.

The Web’s evolution over the past decade enabled this polytechtural design. Perhaps most remarkable were the interactions between public data and global communication. The manufacturer’s specifications for Tenacious were found on the Web, aerial images of Tenacious in its berth in San Francisco were found in publicly available sources, including Google Earth and Microsoft Virtual Earth, and a former owner of Tenacious discovered the Tenacious Search blog in the early days of the search and provided additional photos of Tenacious under sail. These details were helpful for parameterizing drift models and providing “template” pictures of what analysts should look for in their imagery.

Despite its inefficiencies, the use of Mechanical Turk by volunteers to bootstrap the image-analysis process was remarkable, particularly in terms of having many people redundantly performing data analysis. Beyond the Turk pipeline, an interesting and important data-cleaning anecdote occurred while building the search template for Tenacious. Initially, one of Gray’s relatives identified Tenacious in a Virtual Earth image by locating its slip in a San Francisco marina. In subsequent discussion, an analyst noticed that the boat in that image did not match Tenacious’s online specifications, and, following some reflection, the family member confirmed that Gray had swapped boat slips some years earlier and that the online image predated the swap.

Few if any of these activities would have been possible 10 years before, not because of the march of technology per se but because of the enormous volume and variety of information now placed online and the growing subset of the population habituated to using it.

Networked search. It is worthwhile reflecting on the relative efficacy of the component-based polytechtural design approach, compared to more traditional and deliberate strategies. The amateur effort was forced to rely on loosely coupled resources and management, operating asynchronously at a distance. In contrast, the Coast Guard operates in a much more prepared and tightly coupled manner, performing nearly all search steps at once, in real time; once a planning phase maps out the maximum radius a boat can travel, trained officers fly planes in carefully plotted flight patterns over the relevant area, using real-time imaging equipment and their naked eyes to search for targets. By comparison, a network-centric approach to SAR might offer certain advantages in scaling and evolution, since it does not rely on tightly integrated and relatively scarce human and equipment resources. This suggests a hybrid methodology in which the relevant components of the search process are decoupled in a manner akin to our volunteer search, but more patiently architected, evolved, and integrated. For example, Coast Guard imagery experts need not be available to board search planes nationwide; instead, a remote image-analysis team could examine streaming (and archived) footage from multiple planes in different locales. Weather hazards and other issues suggest removing the need for people to board planes at all; imagery could be acquired via satellites and unmanned aerial vehicles, which are constantly improving. Furthermore, a component-based approach takes advantage of the independent evolution of technologies and the ability to quickly train domain experts on each component. Image-analysis tools can improve separately from imaging equipment, which can evolve separately from devices flying the equipment. The networking of components and expertise is becoming relatively common in military settings and public-sector medical imaging. It would be useful to explore these ideas further for civilian settings like SAR, especially in light of their potential application to adjacent topics like disaster response.

Automated image analysis. The volunteer search team included experts in image processing in astronomy, as well as in computer vision. The consensus early on was that off-the-shelf image-recognition software wouldn’t be accurate enough for the urgent task of identifying boats in satellite imagery of open ocean. During the course of the search a number of machine-vision experts examined the available data sets, concluding they were not of sufficient quality for automated processing, though it may have been because we lacked access to the “raw bits” obtained by satellite-based sensors. Though some experts attempted a simple form of automated screening by looking for clusters of adjacent pixels that stood out from the background, even these efforts were relatively unsuccessful.
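The simple screening idea mentioned above, looking for clusters of adjacent pixels that stand out from the background, can be sketched as a global threshold followed by connected-component grouping. This is an illustrative reconstruction rather than the experts' actual code; the threshold rule (mean plus `k` standard deviations), the 4-connectivity, and the `min_pixels` cutoff are all assumptions.

```python
from collections import deque


def bright_clusters(image, k=3.0, min_pixels=2):
    """Find 4-connected clusters of pixels brighter than mean + k * stddev.

    image is a 2D list of grayscale values; returns a list of clusters,
    each a list of (row, col) coordinates.
    """
    pixels = [v for row in image for v in row]
    mean = sum(pixels) / len(pixels)
    std = (sum((v - mean) ** 2 for v in pixels) / len(pixels)) ** 0.5
    threshold = mean + k * std

    h, w = len(image), len(image[0])
    seen = set()
    clusters = []
    for r in range(h):
        for c in range(w):
            if image[r][c] <= threshold or (r, c) in seen:
                continue
            # Breadth-first flood fill over above-threshold neighbours.
            queue, cluster = deque([(r, c)]), []
            seen.add((r, c))
            while queue:
                y, x = queue.popleft()
                cluster.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < h and 0 <= nx < w and (ny, nx) not in seen
                            and image[ny][nx] > threshold):
                        seen.add((ny, nx))
                        queue.append((ny, nx))
            if len(cluster) >= min_pixels:
                clusters.append(cluster)
    return clusters


# Synthetic 20x20 "ocean" of value 10 with a 3-pixel bright streak and one
# isolated bright pixel, which min_pixels discards as likely noise.
ocean = [[10] * 20 for _ in range(20)]
for col in (5, 6, 7):
    ocean[4][col] = 200
ocean[15][15] = 200
found = bright_clusters(ocean)
```

On real imagery, whitecaps, cloud edges, and sensor noise would also exceed such a threshold, which is consistent with the limited success the text reports for this kind of screening.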

It would be good to know if the problem of finding small boats in satellite imagery of the ocean is inherently difficult or simply requires more focused attention from computer-vision researchers. The problem of using remote imagery for SAR operations is a topic for which computer vision would seem to have a lot to offer, especially at sea, where obstructions are few.

Reflection

Having described the amateur SAR processes cobbled together to find Tenacious, we return to some of the issues we outlined initially when we met in Berkeley in 2008.

On the computational front, there are encouraging signs that SAR can be “democratized” to the point where a similar search could be conducted without extraordinary access to expertise and resources. The price of computer hardware has continued to shrink, and cloud services are commoditizing access to large computational clusters; it is now affordable to get quick access to enormous computing resources without social connections or up-front costs. In contrast, custom software pipelines for tasks like image processing, drift modeling, and command-and-control coordination are not widely available. This software vacuum is not an inherent problem but is an area where small teams of open-source developers and software researchers could have significant impact. The key barrier to SAR democratization may be access to data. Not clear is whether data providers (such as those in satellite imagery and in plane leasing) would be able to support large-scale, near-real-time feeds of public-safety-related imagery. Also not clear, from a policy perspective, is whether such a service is an agreed-upon social good. This topic deserves more public discussion and technical investigation. Sometimes the best way to democratize access to resources is to build disruptive low-fidelity prototypes; perhaps then this discussion can be accelerated through low-fidelity open-source prototypes that make the best of publicly available data (such as by aggregating multiple volunteer Webcams3).

The volunteer search team’s experience reinforces the need for technical advances in social computing. In the end, the team exploited technology for many uses, not just the high-profile task of locating Tenacious in images from space. Modern networked technologies enabled a group of acquaintances and strangers to quickly self-organize, coordinate, build complex working systems, and attack problems in a data-driven manner. Still, the process of coordinating diverse volunteer skills in an emerging crisis was quite difficult, and there is significant room for improvement over standard email and blogging tools. A major challenge is to deliver solutions that exploit the software that people already use in their daily lives.

The efforts documented here are not the whole story of the search for Tenacious and its skipper; in addition to incredible work by the Coast Guard, there were other, quieter efforts among Gray’s colleagues and family outside the public eye. Though we were frustrated in achieving our primary goal, the work done in the volunteer effort was remarkable in many ways, and the tools and systems developed so quickly by an amateur team worked well in many cases. This was due in part to the incredible show of heart and hard work from the volunteers, for which many people will always be grateful. It was also due to the quickly maturing convergence of people, communication, computation, and sensing on the Internet. Jim Gray was a shrewd observer of technology trends, along with what they suggest about the next important steps in research. We hope the search for Tenacious sheds some light on those directions as well.
