There is a growing public acceptance that social-media technology is out of control with adverse societal consequences. Yet, it is not clear how speech on social media should be regulated.

As with many other vexing societal problems, the social-media question ended up on the docket of the U.S. Supreme Court (SCOTUS). In 2023, SCOTUS decided two cases that left untouched Section 230 of the Communications Decency Act of 1996, which offers immunity from liability to providers and users of an “interactive computer service” who publish information provided by third-party users. This year, SCOTUS will decide a pair of cases brought by a trade group representing social-media platforms challenging Texas and Florida laws that seek to regulate the content-moderation choices of large platforms.

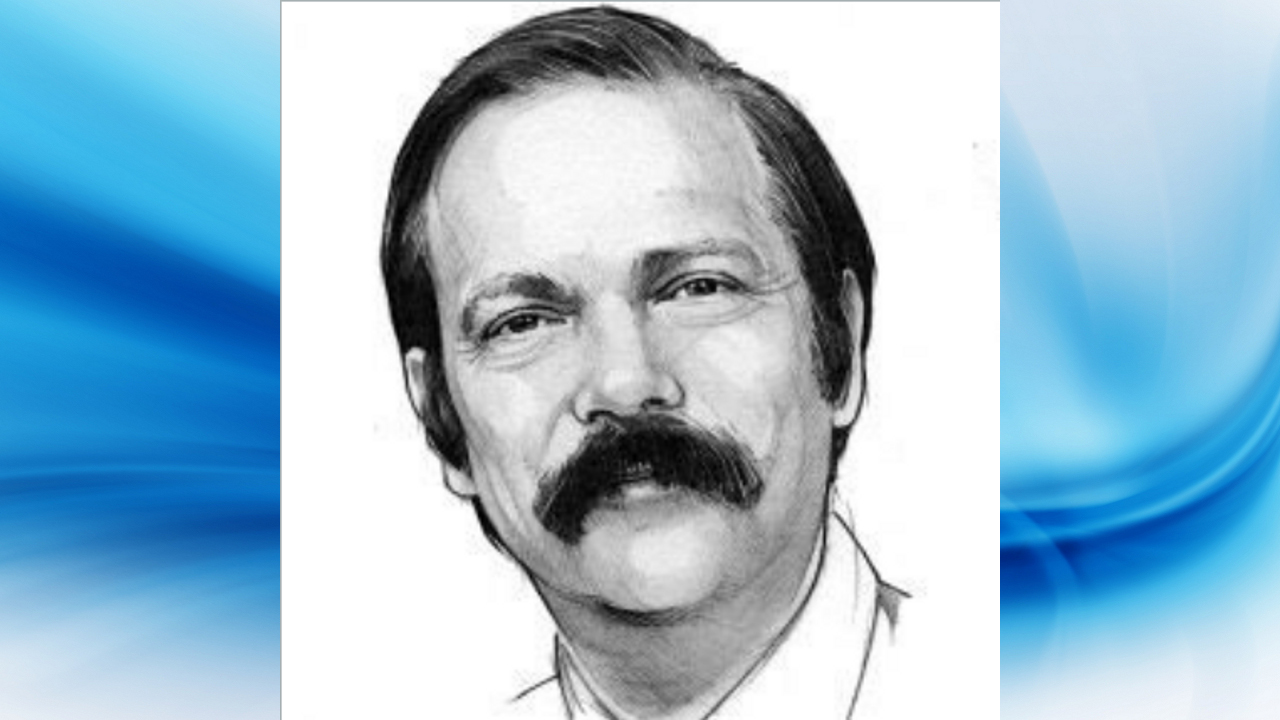

As Justice Elena Kagan admitted during oral arguments last year, however, the justices are not “…the nine greatest experts on the Internet.” She was right! The Court’s Section 230 decisions demonstrate that it failed to understand the “More Is Different” principle. Nobel laureate physicist Philip W. Anderson put forward this principle in a famous 1972 paper, where he argued that the ability to reduce everything to simple fundamental laws does not imply the ability to start from those laws and reconstruct the universe, due to the twin difficulties of scale and complexity.

My own introduction to social media was in the 1980s. Yes, Facebook and Twitter did not invent social media in the 2000s. Usenet, a distributed discussion system available on computers, was established in 1980. It resembled precursor dial-up bulletin-board systems (BBS) but had a worldwide scope. When the Internet became commercial in the mid-1990s, Usenet quickly acquired millions of new users, outside of its earlier academic/research audience. The quality of Usenet discourse rapidly declined, and its earlier users mostly abandoned it. Why? Because more is different!

As Facebook and Twitter scaled up their user numbers in the 2000s, they encountered the same phenomenon. Facebook now has around 3B users, while Twitter, now X, has more than 0.5B users. Without aggressive content curation, large social-media networks are simply not useful, due to a low signal-to-noise ratio. In fact, a couple of years ago, Facebook even removed the “Recent” option, which enabled users to see an un-curated stream of posts on their wall.

The Court seems to misunderstand fundamentally the concept of algorithmic content curation. “Defendants’ mere creation of their media platforms is no more culpable than the creation of email, cell phones, or the internet generally”, wrote the Court in its Twitter v. Taamneh decision, adding that “defendants’ recommendation algorithms are merely part of the infrastructure through which all the content on their platforms is filtered.” The Court does not seem to comprehend that unlike phone or email, what you see on social media is what the platform decides to show you. In fact, the very term “platform,” implying passivity, is misleading. Facebook and X are in the content-curation business, just like The New York Times when it decides which letters-to-the-editor it publishes. To add insult to injury, SCOTUS argued that “the algorithms have been presented as agnostic as to the nature of the content”, while the whole point of the algorithm is to fit content to users.

In view of the Supreme Court’s fundamental misunderstanding of Internet technology, it seems that we must wait to hear from the U.S. legislative branch, where there seems to be a bipartisan consensus that something ought to be done about social media. (The U.S. House of Representatives just passed a ban on the Chinese-owned TikTok.) In a recent article, Lanier and Stanger offered a simple solution: “Axe 26 words from the Communications Decency Act. Welcome to a world without Section 230.”

Yet, removing the liability shield of Section 230 does not fully answer the question of regulating speech on social media. The basic policy question of speech regulation is inseparable, however, from another policy concern, which is how to deal with the concentration of power in technology. Social-media networks offer walled gardens, where they are fully in control. To counter that, Beck proposed decomposing the integrated digital monopolies vertically to enable competitive services based on a shared data structure. Doctorow argued that forcing digital monopolies to open their APIs should suffice.

I do not believe that we can expect the Court to resolve the social dilemma of speech on social media. The ball is now back in the court of the people.

I wonder whether the question is one of intent to empower a party, in addition to one of understanding. The author seems to assume that the power of curation, as in his newspaper analogy, lies with the platform. Indeed, that used to be the necessary case.

It is no longer necessary. Curation (as a service) may be a user-exposed interface—or multiple. Social media companies resist giving up that power, so they bundle curation with distribution. I think the courts and legislators lack an understanding of what is possible. So, they are struggling with how to balance the power between users and platforms and exactly how passive (or not) platforms should be.

Sounds like the companies are sweeping empowering (and problematic) vertical integration into a simplified Section 230 narrative. At some point, a better abstraction layering will have to be legislated – and the vertical integration scrutinized.