Self-assembly, by which atoms, molecules, or other nanoscale components spontaneously organize into something useful, sounds so simple. Just mix a few chemicals and wait for a new plastic, drug, or electronic component to form at the bottom of your test tube.

Unfortunately, it’s not so easy. Coaxing tiny particles to arrange themselves in an orderly way, with desirable and repeatable properties, is enormously complex, typically involving a great deal of trial and error in the laboratory.

But now two mathematicians, using tools from information theory and computer science, have found a new and relatively simple way to orchestrate the assembly of nanostructures. And they have devised algorithms that can produce mathematical proofs that their structures are optimal.

Henry Cohn, a principal researcher at Microsoft Research New England, and Abhinav Kumar, an assistant professor of mathematics at Massachusetts Institute of Technology, have employed a rich mix of techniques, including heuristic algorithms, linear programming, search optimization, and error-correction theory, to produce their results.

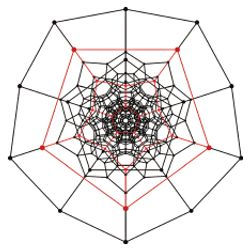

Writing in a recent issue of Proceedings of the National Academy of Sciences, Cohn and Kumar describe their success in designing a system to direct 20 randomly placed particles on a sphere to form into a perfect dodecahedron with 12 pentagonal faces, a structure that minimizes potential energy and, hence, maximizes stability.

Although the methods have yet to be implemented in a lab, they may ultimately find use in such diverse fields as electronics, communications, and medicine. For example, Cohn says, a drug company might produce a time-release drug by encapsulating tiny drug droplets in structures, such as dodecahedrons, that have certain desired properties. The idea is to simplify the search for the proper materials and conditions for self-assembly of such things.

The forces between particles determine whether or not they will organize into a stable and desired configuration. Cohn and Kumar’s blueprints for self-assembly specify the required inter-particle forces and distances via formulas called potential functions.
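To make the idea of a potential function concrete, here is a minimal sketch of how the total energy of a set of particles on a sphere can be computed from a pairwise potential. The function names and the inverse-distance potential are illustrative assumptions, not the formulas from Cohn and Kumar's paper.

```python
import math
import random

def random_point_on_sphere(rng):
    """Uniform random point on the unit sphere via normalized Gaussians."""
    while True:
        v = [rng.gauss(0.0, 1.0) for _ in range(3)]
        norm = math.sqrt(sum(c * c for c in v))
        if norm > 1e-12:
            return [c / norm for c in v]

def total_energy(points, potential):
    """Sum potential(distance) over all unordered pairs of particles."""
    energy = 0.0
    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            energy += potential(math.dist(points[i], points[j]))
    return energy

def inverse_distance(r):
    # Hypothetical stand-in potential (Coulomb-like repulsion),
    # chosen for illustration; it is not the paper's potential.
    return 1.0 / r

rng = random.Random(0)
particles = [random_point_on_sphere(rng) for _ in range(20)]
print(total_energy(particles, inverse_distance))
```

A lower total energy means a more stable arrangement, so designing a potential amounts to choosing the function passed in as `potential` so that the target structure has the lowest energy of all.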

Traditional approaches to this problem use complex potential functions with multiple potential wells, local energy minima that cause the particles to settle into certain positions. But Cohn and Kumar devised a way to find potential functions that cause the particles to organize themselves more directly, without relying on local minima. The resulting formulas are simpler and, hence, would be much easier to implement in a lab or manufacturing line, they say.

In the second part of the problem, Cohn and Kumar go on to mathematically prove that the dodecahedron and several other structures built by their methods are in fact the unique ground states, or globally energy-minimizing arrangements of particles. They do that using linear programming bounds, a tool borrowed from error-correction theory.

The researchers find their optimal potential functions using an iterative process they call “simulation-guided optimization,” which alternates between molecular dynamics simulation and linear programming. A trial potential function is chosen, and a number of simulations—with random starting positions for the particles—are run, allowing the particles to interact until they settle into a structure. The result is a list of possible candidate structures for the ground state.
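The simulation half of that loop can be sketched roughly as follows. This is a simplified illustration under stated assumptions: it swaps molecular dynamics for plain projected gradient descent, uses a stand-in potential f(r) = 1/r, and all function names are hypothetical.

```python
import math
import random

def normalize(v):
    """Scale a 3-vector to unit length, i.e. project it onto the sphere."""
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]

def energy(points):
    """Total energy under the stand-in potential f(r) = 1/r."""
    return sum(1.0 / math.dist(p, q)
               for i, p in enumerate(points) for q in points[i + 1:])

def relax(points, steps=300, step_size=0.05):
    """Projected gradient descent: move along the force, then re-project."""
    pts = [list(p) for p in points]
    for _ in range(steps):
        moved = []
        for i, p in enumerate(pts):
            force = [0.0, 0.0, 0.0]
            for j, q in enumerate(pts):
                if i == j:
                    continue
                r = math.dist(p, q)
                for k in range(3):
                    # The negative gradient of 1/r with respect to p
                    # is (p - q) / r^3, a repulsive force.
                    force[k] += (p[k] - q[k]) / r ** 3
            moved.append(normalize([p[k] + step_size * force[k] for k in range(3)]))
        pts = moved
    return pts

def candidate_energies(n_particles, n_runs, seed=0):
    """Relax several random starting configurations and collect final energies."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_runs):
        start = [normalize([rng.gauss(0.0, 1.0) for _ in range(3)])
                 for _ in range(n_particles)]
        results.append(energy(relax(start)))
    return sorted(results)

print(candidate_energies(n_particles=6, n_runs=5))
```

Runs that end at noticeably different energies correspond to different candidate structures; in the researchers' scheme, those candidates are what the linear programming step must then rule out.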

Then linear programming looks for a new potential function that makes all the candidates rank worse than the target configuration, and the whole process is repeated. The researchers call this process a heuristic algorithm because “it is not guaranteed to work.” They tested their potential function 1,200 times with different starting positions of the 20 particles, and all but six converged to the dodecahedron.
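One way to see why linear programming fits this step: assume, purely for illustration, that the potential is a weighted sum of fixed basis functions $g_k$ with adjustable coefficients $c_k$. The article does not spell out the researchers' parameterization, so the symbols below are assumptions. The energy of any configuration is then linear in the coefficients, and requiring every candidate $C_m$ to rank worse than the target $T$ by a margin $\delta$ becomes a set of linear constraints:

$$
f(r) = \sum_{k=1}^{K} c_k\, g_k(r), \qquad
E_f(C) = \sum_{i<j} f\bigl(\lvert x_i - x_j \rvert\bigr) = \sum_{k=1}^{K} c_k \sum_{i<j} g_k\bigl(\lvert x_i - x_j \rvert\bigr)
$$

$$
\max_{c,\;\delta}\ \delta \quad \text{subject to} \quad E_f(C_m) - E_f(T) \ge \delta \ \ (m = 1, \dots, M), \qquad -1 \le c_k \le 1 .
$$

The bound on the coefficients is a normalization that keeps the margin from growing without limit. If the best achievable $\delta$ is positive, the new potential favors the target over every candidate found so far, and the next round of simulations checks whether any new competitor appears.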

Not surprisingly, a process that repetitively applies simulation and linear programming can be computationally very taxing. Indeed, a large part of the mathematicians’ effort went into finding efficient search methods. “The problem is how not to get stuck at some sub-optimal solution,” Cohn says.

Graphs of potential functions against inter-particle distances for Cohn/Kumar solutions tend to be smooth, with particle interactions decreasing monotonically with distance. But those for the more conventional approaches, which employ potential wells, are complex and bumpy, with numerous local maxima and minima. “The problem with potential wells is they are much harder to manufacture in the lab,” Kumar explains. “But the kinds of functions you see in nature, and the kind you might be able to generate, are basically functions with not too many wiggles. We ask the question, ‘Can you get a potential function with essentially no wiggles?’”
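The difference between a monotonic potential and one with a well can be illustrated with a short sketch that counts turning points on a sampled curve. Both example potentials are textbook stand-ins chosen as assumptions for illustration (an inverse-square repulsion and the Lennard-Jones potential in reduced units); neither is taken from the researchers' work.

```python
def count_turning_points(potential, r_min=0.5, r_max=3.0, samples=2000):
    """Count sign changes in the finite-difference slope on [r_min, r_max]."""
    rs = [r_min + (r_max - r_min) * i / (samples - 1) for i in range(samples)]
    values = [potential(r) for r in rs]
    slopes = [b - a for a, b in zip(values, values[1:])]
    signs = [s > 0 for s in slopes if s != 0]
    return sum(1 for a, b in zip(signs, signs[1:]) if a != b)

def inverse_square(r):
    # Purely repulsive and monotonically decreasing: no wells, no wiggles.
    return 1.0 / r ** 2

def lennard_jones(r):
    # Classic potential with a single well near r = 2**(1/6) in reduced units.
    return 4.0 * (r ** -12 - r ** -6)

print(count_turning_points(inverse_square))
print(count_turning_points(lennard_jones))
```

A count of zero corresponds to a curve with "essentially no wiggles"; each potential well adds at least one turning point.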

The Inverse Approach

Cohn and Kumar’s work builds on earlier research by Salvatore Torquato, a professor of chemistry at Princeton University. Starting with a paper written four years ago, Torquato pioneered what he calls the inverse approach to self-assembly. In the traditional forward approach, known particle interactions are used to predict a likely resulting structure. But the inverse method starts with some desired configuration and derives the optimal inter-particle interactions under which the particles would spontaneously organize into that target structure. Torquato has used pure theoretical work as well as numerical computer simulation to find potential functions that can lead to the self-assembly of materials into squares, honeycombs, diamond shapes, and lattices.

“This is a completely different way of thinking about designing these structures, and it’s tailor-made for self-assembly,” he says.

Torquato says the inverse approach driven by theoretical and computational methods is the most direct way to design materials that can be produced by self-assembly. Some such substances have counterintuitive properties, such as materials that shrink when heated or that, when stretched, expand at right angles to the direction of stretch. The latter property might be useful in a foam designed to provide a tight seal between two surfaces, for example.

“The game is to show that in fact you are not limited to what you get from molecular interactions,” Torquato says. “What kinds of interesting structures, that typically nature doesn’t make, can you make computationally? We ask the fundamental questions: What’s permitted? What’s not? We still don’t know the answers to those questions.”

While researchers using the inverse method increasingly rely on concepts from information theory, those employing the forward approach are more Edisonian, relying more on hands-on, trial-and-error laboratory work, Torquato says. “They synthesize new [substances] and they get what they get at the end of the day,” he says. “But the most direct way to get self-assembly is, OK, you want a particular target configuration, then let’s design the interactions to get those.”

But Torquato says it’s not a battle between Edisonians and computer scientists. “The idea would be to combine our computational theoretical approach with experimental synthesis technology, and that, I think, is the way material science will be done in the future.”

Torquato hails as a “wonderful contribution” Cohn and Kumar’s methods for mathematically proving theorems about their potential functions. That was feasible, he said, because the spaces they considered, such as spheres, were finite and bounded. “Can their approach scale to Euclidean space?” he asks. “There is no reason to think one can’t do that. Until they came along, there was no rigorous proof of any kind.”

Cohn and Kumar found inspiration in some unlikely recesses of information science, such as the theory behind the error-correction codes used in communication systems. In a noisy communication channel, one wants to keep multiple signals as well separated as possible so they don’t get confused. Similarly, in chemistry, particles often repel each other and stay as far apart as possible. Cohn says he was able to apply some of the theory of error-correction coding to the self-assembly problem by using it to tell particles how to stay far apart.

“Some of the most interesting areas for research are where there is an imbalance between two fields, where each field knows something that the other field hasn’t figured out yet,” Cohn says. “If you can make the connection, you can engage in arbitrage and transfer some information in each direction.”
