Everything about the Large Hadron Collider (LHC), the particle accelerator most famous for the Nobel Prize-winning discovery of the elusive Higgs boson, is massive—from its sheer size to the grandeur of its ambition to unlock some of the most fundamental secrets of the universe. At 27 kilometers (17 miles) in circumference, the accelerator is easily the largest machine in the world. This size enables the LHC, housed deep beneath the ground at CERN (the European Organization for Nuclear Research) near Geneva, to accelerate protons to speeds infinitesimally close to the speed of light, thus creating proton-on-proton collisions powerful enough to recreate miniature Big Bangs.

The data about the output of these collisions, which is processed and analyzed by a worldwide network of computing centers and thousands of scientists, is measured in petabytes: for example, one of the LHC’s main pixel detectors, the ultra-durable high-precision cameras that capture information about these collisions, records an astounding 40 million pictures per second—far too much to store in its entirety.

This is the epitome of big data—yet when we think of big data, and of the machine-learning algorithms used to make sense of it, we usually think of applications in text processing and computer vision, and of uses in marketing by the likes of Google, Facebook, Apple, and Amazon. “The center of mass in applications is elsewhere,” outside of the physical and natural sciences, says Isabelle Guyon of the University of Paris-Saclay, who is the university’s chaired professor in big data. “So even though physics and chemistry are very important applications, they don’t get as much attention from the machine learning community.”

Guyon, who is also president of ChaLearn.org, a non-profit that organizes machine-learning competitions, has worked to shift data scientists’ attention toward the needs of high-energy physics. The Higgs Boson Machine Learning Challenge that she helped organize in 2014, which officially required no knowledge of particle physics, had participants sift data from hundreds of thousands of simulated collisions (a small dataset by LHC standards) to infer which collisions contained a Higgs boson, the final subatomic particle from the Standard Model of particle physics for which evidence was observed.

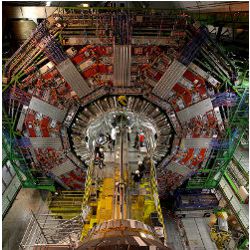

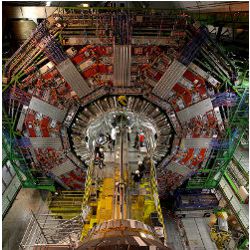

The Higgs Boson Machine Learning Challenge, despite a modest top prize of $7,000, attracted over 1,000 contenders, and in the end physicists were able to learn a thing or two from the data scientists, such as the use of cross-validation to avoid the problem of overfitting a model to just one or two datasets, according to Guyon. But even before this high-profile competition, high-energy physicists working at LHC had been using machine-learning tools to hasten their research. The finding of the Higgs boson is a case in point. “The Higgs boson discovery employed a lot of the machine learning techniques,” says Maria Spiropulu, a physics professor at the California Institute of Technology (Caltech) who co-leads a team on the Compact Muon Solenoid (CMS), one of the main experiments at the LHC. “In 2005, we were expecting we would have a discovery with 14 TeV [tera electron Volts] around 2015 or 2016, and the discovery happened in 2012, with half the energy and only two years of data. Of course, we were assisted with nature because the Higgs was there, but in large part the discovery happened so fast because the computation was a very, very powerful ally.”

These days, machine learning techniques—mainly supervised learning—are used at every stage of the collider’s operations, says Mauro Donegà, a physicist at ETH Zurich who has worked on both the ATLAS particle physics experiment and CMS, the two main experiments at the LHC. That process starts with the trigger system, which immediately after each collision determines if information about the event is worth keeping (most is discarded), and goes out to the detector level, where machine learning helps reconstruct events. Farther down the line, machine learning aids in optimal data placement by predicting which of the datasets will become hot; replicating these datasets across sites ensures researchers across the Worldwide LHC Computing Grid (a global collaboration of more than 170 computer centers in 42 countries) have continuous access to even the most popular data. “There are billions of examples [of machine learning] that are in this game—it is everywhere in what we do,” Donegà says.

At the heart of the collider’s efforts, of course, is the search for new particles. In data processing terms, that search is a classification problem, in which machine-learning techniques such as neural networks and boosted decision trees help physicists tease out scarce and subtle signals suggesting new particles from the vast “background,” or the multitude of known, and therefore uninteresting, particles coming out of the collisions. That is a difficult classification problem, says University of California, Irvine computer science professor Pierre Baldi, an ACM Fellow who has applied machine learning to problems in physics, biology, and chemistry.

“Because the signal is very faint, you have a very large amount of data, and the Higgs boson [for example] is a very rare event, you’re really looking for needles in a haystack,” Baldi explains, using most researchers’ go-to metaphor for the search for rare particles. He contrasts this classification problem with the much more prosaic task of having a computer distinguish male faces from female faces in a pile of images; that is obviously a classification problem, too, and classifying images by gender can, in borderline cases, be tricky, “but most of the time it’s relatively easy.”

These days, the LHC has no shortage of tools to meet the challenge. For example, one algorithm Donegà and his colleagues use focuses just on background reduction, a process whose goal is to squeeze down the background as much as possible so the signal can stand out more clearly. Advances in computing—such as ever-faster graphics processing units and field-programmable gate arrays—have also bolstered the physicists’ efforts; these advances have enabled the revival of the use of neural networks, the architecture behind a powerful set of computationally intensive machine-learning techniques known as deep learning.

Spiropulu believes machine learning will enable physicists to push the frontier of their field beyond the Standard Model of particle physics.

What is more, physicists have begun recruiting professional data scientists, in addition to continuing to master statistics and machine learning themselves, says Maurizio Pierini, a physicist at CERN who organized last November’s workshop on data science at the LHC. He points to ATLAS, the largest experiment at the LHC, which in the last two or three years has done “an incredible job of attracting computer scientists working with physicists,” he says.

Although machine learning has become an indispensable tool at the Large Hadron Collider, there is a natural tension in the use of machine learning in physics experiments. “Physicists are obsessed with the idea of understanding each single detail of the problem they’re dealing with,” says Pierini. In physics, “you take a problem and you decompose it to its ingredients, and you model it,” he explains. “Machine learning has a completely different perspective—you’re demanding your algorithm to explore your dataset and find the patterns between the different features in your dataset. A physicist would say, ‘No, I want to do this myself.'”

The opacity of what exactly goes on inside machine learning algorithms—the “black-box problem,” as it is usually called—makes physicists uneasy, but they have found ways to increase their confidence in the results of this new way of doing science. “When it comes to very elaborate software that we don’t understand, we’re all skeptics,” says Spiropulu, the Caltech physicist, “but once you do validation and verification, and you’re confronted with the fact that these are actually things that work, then there’s nothing to say, and you use them.” Simply put, the physicists know the algorithms work because they have been successfully tested many times on known physics phenomena, so there is every reason to believe they work in general.

In fact, Spiropulu believes machine learning will enable physicists to push the frontier of their field beyond the Standard Model of particle physics, accelerating investigations of more mysterious phenomena like dark matter, supersymmetry, and any other new particles that might emerge in the collider.

“It’s not unleashing some magic box that will give us what we want. These algorithms are extremely well-architected; if they fail, we will see where they fail, and we will fix the algorithm.”

Further Reading

Adam-Bourdarios, C., Cowan, G., Germain, C., Guyon, I., Kégl, B., and Rousseau, D.

The Higgs boson machine learning challenge. JMLR: Workshop and Conference Proceedings 2015 http://jmlr.org/proceedings/papers/v42/cowa14.pdf

Baldi, P., Sadowski, P., and Whiteson, D.

Searching for exotic particles in high-energy physics with deep learning, Nature Communications (2014), Vol 5, pp 1–9 http://bit.ly/1RCaiOB

Donegà, M.

ML at ATLAS and CMS: setting the stage. Data Science @ LHC 2015 Workshop (2015). https://cds.cern.ch/record/2066954

Melis, G.

Challenge winner talk. Higgs Machine Learning Challenge visits CERN (2015). https://cds.cern.ch/record/2017360

CERN. The Worldwide LHC Computing Grid. http://home.cern/about/computing/worldwide-lhc-computing-grid

Join the Discussion (0)

Become a Member or Sign In to Post a Comment