Neural network-based machine learning was invented over two decades ago, but it did not take over classification and categorization applications until the invention of fast processors running high-precision floating-point arithmetic, often accelerated with graphics processing units (GPUs). Since then, machine learning has delivered ultra-accurate results for scientific computing tasks such as calculating orbits and rocket trajectories, and simulating human organs. In recent years, machine learning has also trickled down to now-widespread perception and reasoning tasks, such as speech recognition, image classification, and language translation.

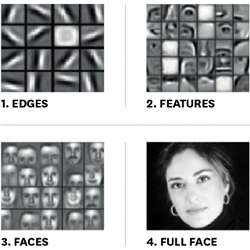

For these applications, the first step was always to accumulate a vast database of examples from which the neural network could learn its task. Fully connected deep neural networks (DNNs) used for speech-to-text classification, convolutional neural networks (CNNs) used for image classification, and recurrent neural networks (RNNs) used for language translation all require thousands, if not millions, of valid examples from which to learn. During training, the highest resolution possible was required to discern increasingly smaller "back propagation" errors as the neural network was fine-tuned. After learning, a lower-resolution trained neural network could be deployed as an inference engine that correctly identified, classified, or categorized real-world data by generalizing from its training data to examples with which it had never before been faced.

For the learning step, precision as high as 64 bits was required to discern very small errors, limiting training to data centers where such microprocessors and GPUs were commonplace and where the vast databases could be easily stored. After learning, the trained neural network could often be deployed as a 32-bit inference engine, since the small back-propagation errors it needed to discern while learning were not used during inference. Nevertheless, even today most inference engines still run inside the data center, accessed by users over remote Internet connections.

The next generation of neural networks, however, will be deployed at the edge of the network, where real-time sensors collect training data. Instead of learning from a vast database that has been painstakingly curated in the data center, this new breed of application will learn from data streamed in real time from smartphones, Internet of Things devices, and machine-to-machine connections. Examples include natural language processing, vision-to-speech translation, fine-tuning models for personalization of smartphone apps, privacy-centric applications where raw data must remain confidential, and federated learning, where communication with centralized resources is minimized.

To facilitate such edge-of-network applications, industrial research centers are seeking to lower the resolution needed for both learning and inference engines on the network edge. Machine learning, traditionally done in data centers using 64-bit processors and deployed on 32-bit processors, first shifted to 32 bits for learning and 16 bits for deployment. More recently, inexpensive 16-bit processors have started doing the learning, with inference engines running on cheap 8-bit processors. The next generation, being developed now, will graduate to specially built 8-bit processors for the learning task and small, inexpensive 4-bit processors for inference, with 2-bit deployment on the horizon circa 2020.

Intel, Microsoft, NVIDIA, Google, and IBM have already made the first shift to running machine learning applications on 16-bit processors and deploying the trained inference engine on 8-bit processors.

Intel, in its tutorial Lower Numerical Precision Deep Learning Inference and Training, says that "both deep learning training and inference can be performed with lower numerical precision, using 16-bit multipliers for training and 8-bit multipliers for inference with minimal to no loss in accuracy."

Microsoft's Project Brainwave includes a field-programmable gate array (FPGA) from Intel that demonstrated a "narrow precision" 8-bit floating point inference engine, which was benchmarked as superior to 16-bit integer inference.

As NVIDIA explains in a blog post, "Most deep learning frameworks, including PyTorch, train using 32-bit floating point (FP32) arithmetic by default. However, using FP32 for all operations is not essential to achieve full accuracy for many state-of-the-art deep neural networks (DNNs) … FP16 reduces memory bandwidth and storage requirements by 2X."
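The storage half of that claim is easy to check. The following is a minimal sketch using NumPy rather than any particular deep learning framework; the array name and its 1024 x 1024 shape are arbitrary choices for illustration.

```python
import numpy as np

# A hypothetical weight matrix, stored in 32-bit floating point.
weights_fp32 = np.ones((1024, 1024), dtype=np.float32)

# The same values converted to 16-bit floating point.
weights_fp16 = weights_fp32.astype(np.float16)

# Each FP32 element takes 4 bytes; each FP16 element takes 2 bytes.
ratio = weights_fp32.nbytes / weights_fp16.nbytes
print(f"FP32: {weights_fp32.nbytes} bytes, FP16: {weights_fp16.nbytes} bytes")
print(f"storage reduction: {ratio}x")
```

The conversion halves storage but also leaves fewer bits for each value, which is why the accuracy questions discussed in this article arise.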

Google also proved the concept of using low-precision arithmetic during inference computations in its Neural Machine Translation System, noting that the technique "accelerated the final translation speed."

IBM recently took the concept one step further at the 2018 Conference on Neural Information Processing Systems, where its paper Training Deep Neural Networks with 8-bit Floating Point Numbers showed how to perform 8-bit machine learning by making algorithmic modifications and adding specialized arithmetic units. There, IBM demonstrated "for the first time, the successful training of DNNs [deep neural networks] using 8-bit floating point numbers while fully maintaining accuracy on a spectrum of deep learning models and datasets."

IBM "showcased new hardware that will take AI further than it's been before: right to the edge," said Jeff Welser, vice president and lab director of the IBM Research Almaden facility, in a blog post about what he calls the post-GPU era.

Observed Kailash Gopalakrishnan, IBM Distinguished Researcher and manager of Accelerator Architectures and Machine Learning, "Microsoft, Nvidia, and Google are all focused on 8-bit inference engines, but to deploy deep learning at the edge of the network, or for larger deep learning models to efficiently operate in the cloud, you need 8-bit training too."

IBM's 8-bit architecture for enabling learning at the network edge required smart algorithms, as well as special hardware that preserves accuracy by attending to the details of the learning process. "We have pushed the envelope to achieve accurate 8-bit deep learning," said Gopalakrishnan. "To achieve no loss in accuracy required a decade of exploring precisely how the neural networks work."

A major insight gleaned during the past decade, according to Gopalakrishnan, is that you cannot make the entire neural network operate at the 8-bit level, because the input layer (where the sensor features must be clearly depicted) and the output layer (where the classification categories must be clearly differentiated) require 16 bits. Consequently, whereas all the multiple deep layers operate at 8 bits for significant energy savings, the input and output layers of IBM's demonstrated prototype still use 16-bit floating point numbers.

"The input and output layers are still 16-bit, but that only constitutes less than 5% of the total, since the multiple interior layers of the neural network all operate at 8 bits," said Gopalakrishnan.

The second development enabling 8-bit learning as accurate as 16-bit learning was a redesign of the data-flow through the inner layers of the neural network. In particular, three operations typically are performed during learning in a deep multi-layered neural network. First, the inputs from the previous layer are assimilated—combined and compared to a threshold value. The error level in the output of that layer is then calculated. Finally, the error is propagated backwards from the output to the inputs of the layer in order to adjust the assimilation step to be more accurate (that is, to produce less output error). This process is then repeated for every layer in the deep multilayered network until the correct output classification category is selected.
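The three operations can be sketched for a single layer as follows. This is a generic textbook illustration in ordinary full-precision NumPy, not IBM's data flow: the sigmoid activation, the toy logical-OR dataset, the learning rate, and all function and variable names are assumptions made for the example.

```python
import numpy as np

def sigmoid(z):
    """Smooth stand-in for comparing the combined inputs to a threshold."""
    return 1.0 / (1.0 + np.exp(-z))

def train_step(weights, bias, inputs, targets, learning_rate=1.0):
    # 1. Assimilate: combine the inputs and apply the threshold-like activation.
    outputs = sigmoid(inputs @ weights + bias)
    # 2. Calculate the error in this layer's output.
    error = outputs - targets
    # 3. Propagate the error backwards to adjust the assimilation step.
    grad_z = error * outputs * (1.0 - outputs)
    weights = weights - learning_rate * (inputs.T @ grad_z)
    bias = bias - learning_rate * grad_z.sum(axis=0)
    loss = float(np.mean(error ** 2))
    return weights, bias, loss

# Toy data (assumed for illustration): learn logical OR of two inputs.
inputs = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
targets = np.array([[0.0], [1.0], [1.0], [1.0]])

rng = np.random.default_rng(0)
weights = rng.normal(scale=0.1, size=(2, 1))
bias = np.zeros(1)

losses = []
for _ in range(2000):
    weights, bias, loss = train_step(weights, bias, inputs, targets)
    losses.append(loss)

print(f"loss before: {losses[0]:.4f}, after: {losses[-1]:.4f}")
```

In a deep network the same three steps run for every layer, with the error from one layer feeding the backward step of the layer before it.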

The main conclusion IBM drew from its detailed study of this "back-propagation of error" learning process was that the error data sent backwards through the network needed to be more precise than the forward-propagating sensor data. As a result, the forward-propagated data can be as small as 4 bits (or, in the future, perhaps even just 2 bits), while the backward-propagating errors must be at least 8 bits.
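One way to see why small error values need more bits than larger forward values is to round both onto a coarse grid. The sketch below uses a simple uniform quantizer, which is a swapped-in stand-in and not the floating-point formats IBM describes; the function name `quantize_uniform`, the value range, and the sample values are all assumptions for illustration.

```python
import numpy as np

def quantize_uniform(x, bits, max_abs=1.0):
    """Round x onto a symmetric uniform grid with 2**(bits-1) - 1 levels per side.

    Hypothetical helper for illustration only; not IBM's number format.
    """
    levels = 2 ** (bits - 1) - 1
    step = max_abs / levels
    return np.clip(np.round(x / step), -levels, levels) * step

forward_value = np.array([0.6])   # a comparatively large forward activation
error_value = np.array([0.01])    # a comparatively small back-propagated error

for bits in (4, 8):
    fwd = quantize_uniform(forward_value, bits)[0]
    err = quantize_uniform(error_value, bits)[0]
    print(f"{bits}-bit grid: forward {fwd:.4f}, error {err:.4f}")
```

On the 4-bit grid the forward value survives with some rounding, while the small error value rounds to zero and carries no learning signal; on the 8-bit grid it remains nonzero.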

The final insight was that the back-propagating error signal was inevitably very small compared to the forward-propagating sensor data. The norm, therefore, is to use a high-precision accumulator to keep the small error data from being swamped by the larger sum. To avoid needing one, IBM invented small "chunked" accumulators that slowly assemble partial sums among many medium-sized numbers, instead of using one big accumulator that adds small back-propagation error corrections to the large forward-propagating data.
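The swamping effect, and how chunking sidesteps it, can be reproduced in a few lines. This is a minimal sketch that uses NumPy's float16 as a stand-in for a reduced-precision accumulator; the chunk size of 64, the input of 4,096 ones, and the function names are assumptions for illustration and do not describe IBM's hardware.

```python
import numpy as np

def naive_sum_fp16(values):
    """Add every value into one float16 accumulator, rounding after each add."""
    acc = np.float16(0.0)
    for v in values:
        acc = np.float16(acc + np.float16(v))
    return acc

def chunked_sum_fp16(values, chunk_size=64):
    """Sum fixed-size chunks separately, then sum the per-chunk partial sums."""
    partials = []
    for start in range(0, len(values), chunk_size):
        partials.append(naive_sum_fp16(values[start:start + chunk_size]))
    return naive_sum_fp16(partials)

values = np.full(4096, 1.0, dtype=np.float16)

# The single accumulator stalls once it reaches 2048, because float16 cannot
# represent 2049 and each further +1.0 rounds back down.
print("naive:  ", float(naive_sum_fp16(values)))    # 2048.0
# Every chunk sums to 64, and the 64 partial sums are of similar magnitude,
# so no addend is swamped and the total is exact.
print("chunked:", float(chunked_sum_fp16(values)))  # 4096.0
```

Because each addition in the chunked version combines numbers of comparable size, the small contributions are not lost, even though no accumulator is wider than 16 bits.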

As a result of the savings from "chunking," the 8-bit deep multilayered learning neural network requires a much smaller processor, which nevertheless is much faster than the equivalently accurate 16-bit DNN today.

Chunking also can be applied to dramatically reduce communication overhead during training. IBM's adaptive compression (AdaComp) algorithm uses similar chunking techniques to reduce communication overhead in neural networks by 40 to 200 times.

Said IBM's Welser, "We've demonstrated, for the first time, the ability to train deep learning models with 8-bit precision while fully preserving model accuracy across all major AI dataset categories: image, speech, and text. The techniques accelerate training time for deep neural networks (DNNs) by two to four times over today's 16-bit systems."

The hardware itself for deep multilayered neural learning deployed at the edge of the network, according to IBM, will shrink the necessary memory size by half—enabling in-memory processing without mass storage of training data—with the processing hardware itself shrunk to one-quarter of the size of 16-bit learning DNNs today.

IBM contends that its 8-bit approach will not only enable any DNN algorithm to be deployed at the edge, but will also enable analog neuromorphic processors, which are even smaller and faster, to be used; that is not practical today because they do not provide as much resolution as 16-bit digital processors. At 8-bit, 4-bit, or even 2-bit resolution, however, future analog neuromorphic processors could take over edge deployment of learning AIs.

R. Colin Johnson is a Kyoto Prize Fellow who has worked as a technology journalist for two decades.
