The first book dedicated to robot motion was published in 1982 with the subtitle “Planning and Control.”5 The distinction between motion planning and motion control has mainly historical roots. Sometimes motion planning refers to geometric path planning, sometimes it refers to open loop control; sometimes motion control refers to open loop control, sometimes it refers to close loop control and stabilization; sometimes planning is considered as an offline process whereas control is real time. From a historical perspective, robot motion planning arose from the ambition to provide robots with motion autonomy: the domain was born in the computer science and artificial intelligence communities.22 Motion planning is about deciding on the existence of a motion to reach a given goal and computing one if this one exists. Robot motion control arose from manufacturing and the control of manipulators30 with rapid effective applications in the automotive industry. Motion control aims at transforming a task defined in the robot workspace into a set of control functions defined in the robot motor space: a typical instance of the problem is to find a way for the end-effector of a welding robot to follow a predefined welding line.

Key Insights

What kind of optimality is about in robot motion? Many facets of the question are treated independently in different communities ranging from control and computer science, to numerical analysis and differential geometry, with a large and diverse corpus of methods including, for example, the maximum principle, the applications of Hamilton-Jacobi-Bellman equation, quadratic programming, neural networks, simulated annealing, genetic algorithms, or Bayesian inference. The ultimate goal of these methods is to compute a so-called optimal solution whatever the problem is. The objective of this article is not to overview this entire corpus that follows its own routes independently from robotics, but rather to emphasize the distinction between “optimal motion” and “optimized motion.” Most of the time, robot algorithms aiming at computing an optimal motion provide in fact an optimized motion that is not optimal at all, but is the output of a given optimization method. Computing an optimal motion is mostly a challenging issue as it can be illustrated by more than 20 years of research on wheeled mobile robots (as we discuss later).

Note that the notion of optimality in robot motion as it is addressed in this article is far from covering all the dimensions of robot motion.7 It does not account for low-level dynamical control, nor for sensory-motor control, nor for high level cognitive approaches to motion generation (for example, as developed in the context of robot soccer or in task planning).

What Is Optimal in Robot Motion Planning and Control?

Motion planning explores the computational foundations of robot motion by facing the question of the existence of admissible motions for robots moving in an environment populated with obstacles: how to transform the continuous problem into a combinatorial one?

This research topic22,26 evolved in three main stages. In the early 1980s, Lozano-Perez first transformed the problem of moving bodies in the physical space into a problem of moving a point in some so-called configuration space.28 In doing so, he initiated a well-defined mathematical problem: planning a robot motion is equivalent to searching for connected components in a configuration space. Schwartz and Sharir then showed the problem is decidable as soon as we can prove that the connected components are semi-algebraic sets.35 Even if a series of papers from computational geometry explored various instances of the problem, the general “piano mover” problem remains intractable.14 Finally by relaxing the completeness exigence for the benefit of probabilistic completeness, Barraquand and Latombe introduced a new algorithmic paradigm3 in the early 1990s that gave rise to the popular probabilistic roadmap20 and rapid random trees31 algorithms.

Motion planning solves a point-to-point problem in the configuration space. Whereas the problem is a difficult computational challenge that is well understood, optimal motion planning is a much more difficult challenge. In addition to finding a solution to the planning problem (that is, a path that accounts for collision-avoidance and kinematic constraints if any), optimal motion planning refers to finding a solution that optimizes some criterion. These can be the length, the time or the energy (which are equivalent criteria under some assumption), or more sophisticated ones, as the number of maneuvers to park a car.

In such a context many issues are concerned with optimization:

- For a given system, what are the motions optimizing some criteria? Do such motions exist? The existence of optimal motion may depend either on the presence of obstacles or on the criterion to be optimized.

- When optimal motions exist, are they computable? If so, how complex is their computation? How to relax exactness constraints to compute approximated solutions? We will address the combinatorial structure of the configuration space induced by the presence of obstacles and by the metric to be optimized. Time criterion is also discussed, as are practical approaches to optimize time along a predefined path.

- Apart from finding a feasible solution to a given problem, motion planning also wants to optimize this solution once it has been found. The question is particularly critical for the motions provided by probabilistic algorithms that introduce random detours. The challenge here is to optimize no more in the configuration space of the system, but in the motion space.

In this article, optimal motion planning is understood with the underlying hypothesis that the entire robot environment is known and the optimization criterion is given: the quest is to find a global optimum without considering any practical issue such as model uncertainties or local sensory feedback.

Optimal Motion Existence

Before trying to compute an optimal motion, the first question to ask is about its existence. To give some intuition about the importance of this issue, consider a mobile robot moving among obstacles. For some rightful security reason, the robot cannot touch the obstacles. In mathematical language, the robot must move in an open domain of the configuration space. Yet, an optimal motion to go from one place to another one located behind some obstacle will necessarily touch the obstacle. So this optimal motion is not a valid one. It appears as an ideal motion that cannot be reached. The best we can do is to get a collision-free motion whose length approaches the length of this ideal shortest (but non-admissible) motion. In other words, there is no optimal solution to the corresponding motion planning problem. The question here is of topological nature: combinatorial data structures (for example, visibility graphs) may allow us to compute solutions that are optimal in the closure of the free space, and that are not solutions at all in the open free space.

Even without obstacle, the existence of an optimal motion is far from being guaranteed. In deterministic continuous-time optimal control problems we usually search for a time-dependent control function that optimizes some integral functional over some time interval. Addressing the issue of existence requires us to resort to geometric control theory;18 for instance, Fillipov’s theorem proves the existence of minimum-time trajectories,a whereas Prontryagin Maximum Principle (PMP) or Boltyanskii’s conditions give respectively necessary and sufficient conditions for a trajectory to be optimal. However it is usually difficult to extract useful information from these tools. If PMP may help to characterize optimal trajectories locally, it generally fails to give their global structure. Later, we show how subtle the question may be in various instances of wheeled mobile robots.

The class of optimal control problems for which the existence of an optimal solution is guaranteed, is limited. The minimum time problems for controllable linear systems with bounded controls belong to this class: optimal solutions exist and optimal controls are of bang-bang type. However, the so-called Fuller problem may arise: it makes the optimal solution not practical at all as it is of bang-bang type with infinitely many switches. Other examples include the famous linear-quadratic-Gaussian problem (the cost is quadratic and the dynamics is linear in both control and state variables), and systems with a bounded input and with a dynamics that is affine in the control variables. In the former a closed loop optimal solution can be computed by solving algebraic Riccati equations, whereas in the latter the existence of an optimal control trajectory is guaranteed under some appropriate assumptions.

Even without an obstacle, the existence of an optimal motion is far from being guaranteed.

In more general cases, we can only hope to approximate as closely as desired the optimal value via a sequence of control trajectories. There is indeed no optimal solution in the too restricted space of considered control functions. This has already been realized since the 1960s. The limit of such a sequence can be given a precise meaning as soon as we enlarge the space of functions under consideration. For instance, in the class of problems in which the control is affine and the integral functional is the L1-norm, the optimal control is a finite series of impulses and not a function of time (for example, see Neustadt29). In some problems such as the control of satellites, such a solution makes sense as it can approximately be implemented by gas jets. However, in general, it cannot be implemented because of the physical limitations of the actuators.

Changing the mathematical formulation of the problem (for example, considering a larger space of control candidates) may allow the existence of an optimal solution. In the former case of satellite control, the initial formulation is coherent as an “ideal” impulse solution can be practically approximated by gas jets. However, in other cases the initial problem formulation may be incorrect as an ideal impulse solution is not implementable. Indeed, if we “feel” a smooth optimal solution should exist in the initial function space considered and if in fact it does not exist, then either the dynamics and/or the constraints do not reflect appropriately the physical limitations of the system or the cost functional is not appropriate to guarantee the existence of an optimal solution in that function space. To the best of our knowledge, this issue is rarely discussed in textbooks or courses in optimal control.

Optimal Path Planning

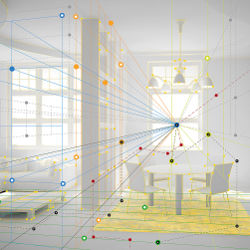

Considering a motion is a continuous function of time in the configuration (or working) space, the image of a motion is a path in that space. The “piano mover” problem refers to the path-planning problem, that is, the geometric instance of robot motion planning (see Figure 1). The constraint of obstacle avoidance is taken into account. In that context, optimality deals with the length of the path without considering time and control. The issue is to find the shortest path between two points.

Depending on the metric that equips the configuration space, a shortest path may be unique (for example, for the Euclidean metric) or not unique (for example, for the Manhattan metric). All configuration space metrics are equivalent from a topological point of view (that is, if there is a sequence of Euclidean paths linking two points, then there is also a sequence of Manhattan paths linking these two points). However, different metrics induce different combinatorial properties in the configuration space. For instance, for a same obstacle arrangement, two points may be linked by a Manhattan collision-free path, while they cannot by a collision-free straight-line segment: both points are mutually visible in a Manhattan metric, while they are not in the Euclidean one. So, according to a given metric, there may or may not exist a finite number of points that “watch” the entire space.25 These combinatorial issues are particularly critical to devise sampling-based motion planning algorithms.

Now, consider the usual case of a configuration space equipped with a Euclidean metric. Exploring visibility graph data structures easily solves the problem of finding a bound on the length of the shortest path among polygonal obstacles. This is nice, but it is no longer true if we consider three-dimensional spaces populated with polyhedral obstacles. Indeed, finding the shortest path in that case becomes a NP-hard problem.14 So, in general, there is no hope to get an algorithm that computes an optimal path in presence of obstacles, even if the problem of computing an optimal path in the absence of obstacle is solved and even if we allow the piano-robot to touch the obstacles.

As a consequence of such poor results, optimal path planning is usually addressed by means of numerical techniques. Among the most popular ones are the discrete search algorithms operating on bitmap representations of work or configuration spaces.3 The outputs we only obtain are approximately optimal paths, that is, paths that are “not so far” from a hypothetical (or ideal) estimated optimal path. Another type of methods consists in modeling the obstacles by repulsive potential. In doing so, the goal is expressed by an attractive potential, and the system tends to reach it by following a gradient descent.21 The solution is only locally optimal. Moreover, the method may get stuck in a local minimum without finding a solution, or that a solution actually exists or not. So it is not complete. Some extensions may be considered. For instance, exploring harmonic potential fields8 or devising clever navigation functions34 allow providing globally optimal solutions; unfortunately, these methods require an explicit representation of obstacles in the configuration space, which is information that is generally not available. At this stage, we can see how the presence of obstacles makes optimal path planning a difficult problem.

Optimal Motion Planning

In addition to obstacle avoidance, constraints on robot controls or robot dynamics add another level of difficulties. The goal here is to compute a minimal-time motion that goes from a starting state (configuration and velocity) to a target state while avoiding obstacles and respecting constraints on velocities and acceleration. This is the so-called kinodynamic motion planning problem.12 The seminal algorithm is based on discretizations of both the state space and the workspace.

It gave rise to many variants including nonuniform discretization, randomized techniques, and extensions of A* algorithms (see LaValle26). Today, they are the best algorithms to compute approximately optimal motions.

Less popular in the robot motion planning community are numerical approaches to optimal robot control.11 Numerical methods to solve optimal control problems fall into three main classes. Dynamic programming implements the Bellman optimality principle saying that any sub-motion of an optimal motion is optimal. This leads to a partial differential equation (the so-called Hamilton-Jacobi-Bellman equation in continuous time) whose solutions may sometimes be computed numerically. However, dynamic programming suffers from the well-known curse of the dimensionality bottleneck. Direct methods constitute a second class. They discretize in time both control and state trajectories so that the initial optimal control problem becomes a standard static non-linear programming (optimization) problem of potentially large size, for which a large variety of methods can be applied. However, local optimality is generally the best one can hope for. Moreover, potential chattering effects may appear hidden in the obtained optimal solution when there is no optimal solution in the initial function space. Finally, in the third category are indirect methods based on optimality conditions provided by the PMP and for which, ultimately, the resulting two-point boundary value problem to solve (for example, by shooting techniques) may be extremely difficult. In addition, the presence of singular arcs requires specialized treatments. So direct methods are usually simpler than indirect ones even though the resulting problems to solve may be very large. Indeed, their structural inherent sparsity can be taken into account efficiently.

At this stage, we can conclude that exact solutions for optimal motion planning remain out of reach. Only numerical approximate solutions are conceivable.

Optimal Motion Planning Along a Path

A pragmatic way to bypass (not overcome) the intrinsic complexity of the kinodynamic and numerical approaches is to introduce a decoupled approach that solves the problem in two stages: first, an (optimal) path planning generates a collision-free-path; then a time-optimal trajectory along the path is computed while taking into account robot dynamics and control constraints. The resulting trajectory is of course not time-optimal in a global sense; it is just the best trajectory for the predefined path. From a computational point of view, the problem is much simpler than the original global one because the search space (named phase plane) is reduced to two dimensions: the curvilinear abscissa along the path and its time-derivative. Many methods have been developed since the introduction of dynamic programming approaches by Shin and McKay36 in configuration space and simultaneously by Bobrow et al.4 in the Cartesian space. Many variants have been considered including the improvement by Pfeiffer and Johanni31 that combines forward and backward integrations, and the recent work by Verscheure et al.39 who transform the problem into a convex optimization one.

Optimization in Motion Space

Extensions of path-tracking methods may be considered as soon as we allow the deformations of the supporting paths. Here, we assume some motion planner provides a first path (or trajectory). Depending on the motion planner, the path may be far from being optimal. For instance, probabilistic motion planners introduce many useless detours. This is the price to pay for their effectiveness. So, the initial path must be reshaped, that is, optimized with respect to certain criteria. Geometric paths require to be shortened according to a given metric. The simplest technique consists in picking pairs of points on the path and linking them by a shortest path: if the shortest path is collision-free, it replaces the corresponding portion of the initial path. Doing so iteratively, the path becomes shorter and shorter. The iterative process stops as soon as it does not significantly improve the quality of the path. The technique gives good results in practice.

Beside this simple technique, several variational methods operating in the trajectory space have been introduced. Among the very first ones, Barraquand and Ferbach2 propose to replace a constrained problem by a convergent series of less constrained subproblems increasingly penalizing motions that do not satisfy the constraints. Each sub-problem is then solved using a standard motion planner. This principle has been successfully extended recently to humanoid robot motion planning.9

Another method introduced by Quinlan and Khatib consists in modeling the motion as a mass-spring system.32 The motion then appears as an elastic band that is reshaped according to the application of an energy function optimizer. The method applies for nonholonomic systems as soon as the nonholonomic metric is known16 as well as for real-time obstacle avoidance in dynamic environments.6

Recently, successful improvements have been introduced by following the same basic principle of optimizing an initial guess in motion space. Zucker et al. take advantage of a simple functional expressing a combination of smoothness and clearance to obstacles to apply gradient descent in the trajectory space.40 A key point of the method is to model a trajectory as a geometric object, invariant to parametrization. In the same framework, Kalakrishman et al. propose to replace the gradient descent with a derivative-free stochastic optimization technique allowing us to consider non-smooth costs.19

What We Know and What We Do Not Know About Optimal Motion for Wheeled Mobile Robots

Mobile robots constitute a unique class of systems for which the question of optimal motion is best understood. Since the seminal work by Dubins in the 1950s,13 optimal motion planning and control for mobile robots has attracted a lot of interest. We briefly review how some challenging optimal control problems have been solved and which problems still remain open.

Let us consider four control models of mobile robots based on the model of a car (Figure 2). Two of them are simplified models of a car: the so-called Dubins (Figure 3) and Reeds-Shepp (Figure 4) cars respectively. The Dubins car moves only forward. The Reeds-Shepp car can move forward and backward. Both of them have a constant velocity of unitary absolute value. Such models account for a lower bound on the turning radius, that is, the typical constraint of a car. Such a constraint does not exist for a two-wheel differentially driven mobile robot. This robot may turn on the spot while a car cannot. Let us consider two simple control schemes of a two-driving wheel mobile robot:b in the first one (Hilare-1), the controls are the linear velocities of the wheels; in the second one (Hilare-2), the controls are the accelerations (that is, the second system is a dynamic extension of the first).

Time-optimal trajectories. The car-like robots of figures 3 and 4 represent two examples of non-linear systems for which we know exactly the structure of the optimal trajectories. Note that in both examples the norm of the linear velocity is assumed to be constant. In those cases, time-optimal trajectories are supported by the corresponding shortest paths. Dubins solved the problem for the car moving only forward.13 More than 30 years later, Reeds and Shepp33 solved the problem for the car moving both forward and backward. The problem has been completely revisited with the modern perspective of geometric techniques in optimal control theory:37,38 the application of PMP shows that optimal trajectories are made of arcs of a circle of minimum turning radius (bang-bang controls) and of straight-line segments (singular trajectories). The complete structure is then derived from geometric arguments that characterize the switching functions. The Dubins and Reeds-Shepp cars are among the few examples of nonlinear systems for which optimal control is fully understood. The same methodology applies for velocity-based controlled differential drive vehicles (Hilare-1 in Figure 6). In that case, optimal trajectories are bang-bang, that is, made of pure rotations and straight-line segments. The switching functions are also fully characterized.1 This is not the case for the dynamic extension of the system, that is, for acceleration-based controlled differential drive vehicles (Hilare-2 as shown in Figure 7). Only partial results are known: optimal trajectories are bang-bang (that is, no singular trajectory appears) and are made of arcs of clothoid and involutes of a circle.15 However, the switching functions are unknown. The synthesis of optimal control for the Hilare-2 system the remain an open problem.

While the existence of optimal trajectories is proven for the four systems shown here, a last result is worth mentioning. If we consider the Reeds-Shepp car optimal control problem in presence of an obstacle, even if we allow the car touching the obstacles, it has been proven that a shortest path may not exist.10

Motion planning. These results are very useful for motion planning in the presence of obstacles. In figures 3 and 4 we display the reachable domain for both the Dubins and Reeds-Shepp cars. While the reachable set of the Reeds-Shepp car is a neighborhood of the origin, it is not the case for the Dubins car. Stated differently, the Reeds-Shepp car is small-time controllable, while the Dubins car is only controllable. The consequence in terms of motion planning is important. In the case of the Reeds-Shepp car, any collision-free—not necessarily feasible—path can be approximated by a sequence of collision-free feasible paths. Optimal paths allow building the approximation, giving rise to an efficient motion-planning algorithm.24 Not only does such an algorithm not apply for the Dubins car, we still do not know whether the motion-planning problem for Dubins car is decidable or not.

In Laumond et al.,24 we prove the number of maneuvers to park a car varies as the inverse of the square of the clearance. This result is a direct consequence of the shape of the reachable sets. So, the combinatorial complexity of (nonholonomic) motion planning problems is strongly related to optimal control and the shape of the reachable sets in the underlying (sub-Riemannian) geometry.17

Conclusion

When optimal solutions cannot be obtained for theoretical reasons (for example, nonexistence) or for practical ones (for example, untractability), we have seen how the problem can be reformulated either by considering a discrete representation of space and/or time, or by slightly changing the optimization criterion, or by resorting to numerical optimization algorithms. In all these cases, the resulting solutions are only approximated solutions of the original problem.

In conclusion, it appears the existence of optimal robot motions is rarely guaranteed. When it is, finding a solution has never been proven to be a decidable problem as is the motion-planning problem. So, “optimal motion” is most often an expression that should be understood as “optimized motion,” that is, the output of an optimization numerical algorithm. However, motion optimization techniques follow progress in numerical optimization with effective practical results on real robotic platforms, if not with new theoretical results.

The distinction between optimal and optimized motions as it is addressed in this article does not cover all facets of optimality in robot motion. In a companion article,23 we consider the issue of motion optimal as an action selection principle and we discuss its links with machine learning and recent approaches to inverse optimal control.

Acknowledgments

The article benefits from comments by Quang Cuong Pham, from a careful reading by Joel Chavas, and above all, from the quality of the reviews. The work has been partly supported by ERC Grant 340050 Actanthrope, by a grant of the Gaspar Monge Program for Optimization and Operations Research of the Fédération Mathématique Jacques Hadamard (FMJH) and by the grant ANR 13-CORD-002-01 Entracte.

Figures

Figure 1. A modern view of the “piano mover” problem: two characters have to move a shared piano while avoiding surrounding obstacles.

Figure 1. A modern view of the “piano mover” problem: two characters have to move a shared piano while avoiding surrounding obstacles.

Figure 2. A car (logo of the European Project Esprit 3 PRO-Motion in the 1990s) together with the unicycle model equations.

Figure 2. A car (logo of the European Project Esprit 3 PRO-Motion in the 1990s) together with the unicycle model equations.

Figure 5. The Hilare robot at LAAS-CNRS in the 1990s.

Figure 5. The Hilare robot at LAAS-CNRS in the 1990s.

Figure 6. Hilare-1: A different drive mobile robot. First model: The controls are the velocities of the wheels. The optimal controls are bang-bang. Optimal trajectories are made of pure rotations and of straight-line segments.

Figure 6. Hilare-1: A different drive mobile robot. First model: The controls are the velocities of the wheels. The optimal controls are bang-bang. Optimal trajectories are made of pure rotations and of straight-line segments.

Figure 7. Hilare-2: A different drive mobile robot. Second model: The controls are the acceleration of the wheels. The optimal controls are bang-bang. Optimal trajectories are made of arcs of clothoids and of arcs of involute of a circle.

Figure 7. Hilare-2: A different drive mobile robot. Second model: The controls are the acceleration of the wheels. The optimal controls are bang-bang. Optimal trajectories are made of arcs of clothoids and of arcs of involute of a circle.

Join the Discussion (0)

Become a Member or Sign In to Post a Comment