The term ‘robotic musicianship’ may seem like an oxymoron. The first word often carries negative connotations in terms of artistic performance and can be used to describe a lack of expressivity and artistic sensitivity. The second word is used to describe varying levels of an individual’s ability to apply musical concepts in order to convey artistry and sensitivity beyond the facets of merely reading notes from a score.

Key Insights

- Robotic musicianship focuses on the construction of machines capable of producing sound, analyzing music, and generating music in such a way that allows them to showcase musicality and interact with human musicians.

- Research in robotic musicianship is motivated by the potential to enrich human musical culture and expand human creativity rather than replace it.

- Some robotic musicians are designed to play traditional instruments while others are designed to play augmented versions of instruments or even entirely new musical interfaces.

- Robotic musicians employ AI for identifying higher-level musical features essential to human musical cognition.

To understand the meaning of robotic musicianship, it is important to detail the two primary research areas that constitute it: musical mechatronics, which is the study and construction of physical systems that generate sound through mechanical means;15 and machine musicianship, which focuses on developing algorithms and cognitive models representative of various aspects of music perception, composition, performance, and theory.31 Robotic musicianship refers to the intersection of these areas. Researchers within this space design music-playing robots with the necessary underlying musical intelligence to support both expressive solo performance and interaction with human musicians.37

Research in robotic musicianship has seen growing interest from both scientists and artists in recent years. This can be attributed to the domain’s interdisciplinary character, which is amenable to a wide breadth of scientific and artistic endeavors. First, music provides an excellent platform for research because it allows us to transcend certain cultural barriers through common concepts such as rhythm and pitch. Second, robotic musicianship requires the integration of a wide variety of tasks, which combine aspects of engineering, computation, music, and psychology. Scholars in the field are drawn to this interdisciplinary nature and the inherent challenges involved in making a machine artistically expressive. Third, developing computer models for the perceptual and generative characteristics that support robotic musicianship can have broader impacts outside of artistic applications. Issues regarding timing, anticipation, expression, mechanical dexterity, and social interaction are pivotal to music and have numerous other functions in science as well.

Some researchers are drawn to robotic musicianship due to its potential for impact in other fields, ranging from cognitive science and human-robot interaction (HRI) to human anatomy. Music is one of the most time-demanding mediums, where events separated by mere milliseconds are noticeable by human listeners. Developing HRI algorithms for anticipation or synchronization in a musical context can, therefore, become useful for other HRI scenarios where accurate timing is required. Moreover, by building and designing robotic musicians, scholars can better understand the sophisticated interactions between the cognitive and physical processes in human music making. By reconstructing sophisticated sound production mechanisms, robotic musicians can also help shed light on the role of embodiment in music making, that is, how music perception and cognition interact with human anatomy and physicality.

There are also cultural and artistic motivations for developing robotic musicians. Robert Rowe, a composer and pioneer in the development of interactive music systems, writes, “if computers interact with people in a musically meaningful way, that experience will bolster and extend the musicianship already fostered by traditional forms of music education…and expand human musical culture.”31 Likewise, the goal of robotic musicianship is not only to imitate human creativity or replace it, but also to supplement it and enrich the musical experience for humans. Exploring the possibilities of combining computers with physical sound generators to create systems capable of rich, acoustic sound production; intuitive, physics-based visual cues from sound producing movements; and, expressive physical behaviors through sound accompanying body movements is an attractive venture for many artists. Additionally, there is artistic potential in the non-human characteristics of machines including increased precision, freedom of physical design, and the ability to perform fast computations. Discovering how best to leverage these characteristics to benefit music performance, composition, consumption, and education is a worthy investment as music is an essential component of human society and culture.

Another motivation for research in robotic musicianship stems from recent efforts to integrate personal robotics into everyday life. As social robots begin to penetrate the lives and homes of the public, music can play an important role in aiding the process. Music, in one form or another, is shared, understood, and appreciated by billions of people across many different cultures and epochs. Such ubiquity can be leveraged to yield a sense of familiarity between robots and people by using music to encourage human-robotic interaction. As more people begin to integrate robots into their everyday lives, trust and confidence in the new technology can be established.

But the recent interest in robotic musicianship has not come without popular criticism and misconceptions, mainly focused on the concern that the ultimate goal of this research is to replace human musicality and creativity.a,b,c Though much of this concern may stem from the natural awe and unease that accompany the introduction of new technology, it is important to reiterate both our own and Rowe’s sentiment that by placing humans alongside artistically motivated machines we are establishing an environment in which human creativity can grow, thus enriching human musical culture rather than replacing it.

A related criticism comes from the belief that the creation of music, one of the most unique, expressive, and creative human activities, could never be replicated by automated “soul-less” machines, at least not “good music.”d As veteran researchers in the field, we believe robotic musicians bear great potential for enhancing human musical creativity and expression, rather than hindering it. For example, although in some cases the need for live musicians diminished when new technology was introduced (such as the introduction of talking films, or the MIDI protocol), technology has also introduced new opportunities in sound and music making, such as with sound design and recording arts. Similarly, disk jockeys introduced new methods for expressive musical performance using turntables and prerecorded sounds. Just as these new technologies expanded artistic creation, robotic musicianship has the potential to advance the art form of music by creating novel musical experiences that would encourage humans to create, perform, and think about music in novel ways. From utilizing compositional and improvisational algorithms that humans cannot process in a timely manner (using constructs such as fractals or cellular automata) to exploring mechanical sound production capabilities that humans do not possess (from speed to timbre control), robotic musicians bear the promise of creating music that humans could never create by themselves and inspiring humans to explore new and creative musical experiences, invent new genres, expand virtuosity, and bring musical expression and creativity to uncharted domains.

In this article we review past and ongoing research in robotic musicianship. We examine different methods for sound production and explore machine perception and the ability to decipher relevant musical events using various sensors. We discuss generative algorithms that enable the robot to complete different musical tasks such as improvisation or accompaniment. We then consider the design of social interactions and visual cues based on embodiment, anthropomorphism, and expressive gestures.

Sound Production and Design

For robots that play traditional acoustic instruments, typically the goal is to either emulate the human body and natural mechanics applied to playing an instrument or to augment an instrument to produce sound and create a physical affordance that is not possible in the instrument’s archetypal state, such as a prepared piano. (For a detailed review of robotic musical instruments see Kapur15).

Methods for playing traditional instruments. Traditional acoustic instruments are designed for humans. Robots are not constrained to human form and, thus, can be designed in a number of ways. Emulating people can be mechanically challenging, but sometimes it is helpful to have an established reference inspired by human physiology and the various physical playing techniques employed by musicians. However, new types of designs allow researchers to break free from the constraints of human form and explore aspects of instrument playability related to speed, localization, and dynamic variations not attainable by humans. In this section we will explore examples of different design methodology for different instrument types and discuss their inherent advantages and disadvantages.

Percussive instruments. The mechanics involved in human drumming are complex and require controlled movement of and coordination between the wrist, elbow, and fingers. Subtle changes in the tightness of one’s grip on the drumstick, rate at which the wrist moves up and down, and distance between stick and drumhead give drummers expressive control over their performance. Human drummers use different types of strokes to achieve a variety of sounds and playing speeds. For example, for creating two hits separated by a large temporal interval, a drummer uses a single-stroke in which the wrist moves up and down for each strike. For two hits separated by a very small temporal interval a drummer may use a multiple-bounce stroke in which the wrist moves up and down once to generate the first strike, while subsequent strikes are achieved by adjusting the fingers’ grip on the drumstick to generate a bounce. Multiple motors, gears, solenoids, and a sophisticated control system are required to imitate such functionality.

No systems exist that emulate the natural multiple-bounce stroke drumming technique; however, several systems have been designed to imitate velocity curves of single-stroke wrist and arm movements. The Cog robot from MIT uses oscillators for smoother rhythmic arm control.40 Another approach to smooth motion control is to use hydraulics, as done by Mitsuo Kawato in his humanoid drummer.2 Sometimes robot designs are inspired more by the general physical shape of humans than by specific human muscle and joint control. The humanoid keyboard-playing WABOT has two hands for pressing keys and feet for pushing pedals.19 More recently Yoky Matsuoka developed an anatomically correct piano-playing hand.41

Piano-playing hand from the University of Washington.

Many designers of robotic percussion instruments can achieve their sonic and expressive goals using a much simpler engineering method than what is needed in order to replicate human physical control. A common approach for robots (not constrained to human form) that play percussive instruments is to use a solenoid system that strikes percussion using a stick or other actuators. Erik Singer’s LEMUR bots33 and portions of Orchestrion (designed for Pat Metheny)e use such an approach.

Singer’s projects as well as others use non-anthropomorphic designs in which individual solenoids are used to strike individual drums. In addition to a simplified actuation design, non-humanoid robots can produce musical results that are not humanly possible. For example, in Orchestrion each note on a marimba can be played simultaneously or sequentially in rapid fashion because a solenoid is placed on each key. This approach allows for increased flexibility in choosing different types of push and pull solenoids that can be used to achieve increased speed and dynamic variability.18 Unlike those of human percussionists, the single strokes generated by solenoids or motors can move at such great speeds that it is not necessary to create double-stroke functionality.

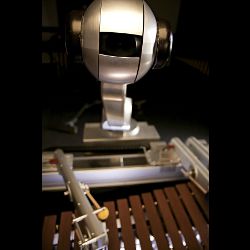

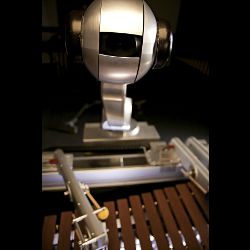

While solenoid-based designs are mechanically simple and capable of a wide range of expression, they tend to lack useful visual cues. Using a human shape provides interacting musicians and audience members with a framework they are more familiar with, thus allowing them to better understand the relationship between sound generation and the accompanying robotic movements and gestures. Additionally, the visual cues provided by the larger human-like motion and gestural movements of humanoid robots allow the musicians to anticipate the robot’s actions and improve synchronization. Such visual cues cannot be provided by a system such as Orchestrion. Some robots combine functions of both design methods in an attempt to simultaneously gain the benefits of the inhuman sound producing capabilities and important visual cues. The marimba-playing robot, Shimon, has four arms each with two solenoid activators for striking the keys. The movements of the arms combined with the eight fast-moving solenoids provide advantages from both design methods.38

Shimon from Georgia Tech’s Center for Music Technology.

Stringed instruments. Similarly to the percussive robots, the design of several string-playing robots focuses on achieving simple yet robust excitation and pitch manipulation rather than mimetic control and anthropomorphism. Sergi Jordà’s Afasia system14 is one such example. In this system solenoids are used to pluck strings, while pitch is controlled by either push solenoids placed along the bridge or motors that move the bridge itself. Singer’s Guitar-Bot uses a wheel embedded with guitar picks that can rotate at variable speeds to pluck strings and relies on a moving bridge assembly that rides on pulleys driven by a DC motor.

Anthropomorphism and the emulation of human plucking motion were not attempted until recently. Work by Chadefaux et al. involves motion capture of human harp playing and the design of a robotic harp-playing finger. Silicone of various shapes and hardness is placed on the fingertips in order to replicate different vibrational characteristics.6 An anthropomorphic violin-bowing arm was developed by Shibuya et al. The shoulder, elbow, and wrist are modeled to create expressive playing by controlling the bow’s pressure, position, and rate of movement.32 Similarly, Toyota’s robotic violin player replicates human form and motion.f

Violin-playing robot from Ryukoku University.

Wind instruments. A main challenge for wind-instrument playing machines is producing the necessary energy fluctuations in order to make the instrument resonate at desired frequencies and volumes. Some address this challenge with non-humanoid designs and function. Roger Dannenberg’s McBlare is a non-humanoid robotic bagpipe player. An air compressor provides air and electro-magnetic devices control the power to “fingers,” which open and close the sound holes. While the speed of McBlare’s control mechanisms surpasses that of expert bagpipe performers, it cannot equal the human skill of adapting to the characteristics of the reed.10 Another example of a non-anthropomorphic robotic wind instrument is the Logos Foundation’s Autosax,24 which uses “compression drivers and acoustic impedance converters that feed the drive signal to the instrument via a capillary.”

Other wind instrument designs focus on replicating and understanding human physiology and anatomy. Ferrand and Vergez designed a blowing machine to examine the nonlinear dynamics of natural human airflow by modeling the human mouth.11 They found the system’s performance was affected by the servo valve’s friction, cut-off frequency, and mounting stiffness of the artificial lips. Waseda University developed a humanoid flute-playing robot and more recently a sax-playing robot.36 The systems include degrees-of-freedom (DOFs) for controlling finger dexterity, lip and tongue control, and lung control (air pump and valve control).

Flutist from Waseda University.

Augmenting traditional instruments and new instruments and interfaces. Unlike the examples described previously that demonstrate traditional instruments being played in traditional ways, there are also examples of traditional instruments being played in nontraditional ways. Augmenting musical instruments with mechanics is useful for acquiring additional sonic variety and playability. Trimpin’s Contraption Instant Prepared Piano is an example of instrument augmentation and enables mechanical bowing, plucking, and vibrating of piano strings to increase the harmonic and timbral range of the instrument. In many augmented instruments the human performer and robotic mechanisms share control of the sound producing characteristics. This creates an interesting interactive scenario that combines the traits inherent to the interaction of two individual musicians and the interaction between performer and instrument. Richard Logan-Greene’s systems, Brainstem Cello and ActionClarinet,g explore this type of shared interactive control as the instruments are outfitted with sensors to respond to the human performer by using mechanics to dampen strings or manipulate pitch in real time.

There are also examples of completely new instruments in which automated mechanical functions are necessary to create a performance. Trimpin’s “Conloninpurple” installation is made up of marimba-like bars coupled with aluminum resonating tubes and solenoid triggers. Each key is dispersed throughout a room to create a massive instrument capable of “three-dimensional” sound. Logan-Greene’s Submersible is a mechanical instrument that raises and lowers pipes in and out of water to modify their pitch and timbre.23

Submersible by Richard Logan-Greene.

Musical Intelligence

The ultimate goal of robotic musicianship is to create robots that can demonstrate musicality, expressivity, and artistry while stimulating innovation and newfound creativity in other interacting musicians. In order to achieve such artistic sophistication a robotic musician must have the ability to extract information relevant to the music or performance and apply this information to its underlying decision processes.

Sensing methods. Providing machines with the ability to sense and reason about the world and their environments is a chief objective of robotics research in general. In musical applications, naturally, a large emphasis of sensing and analysis lies within the auditory domain. Extracting meaningful information from an audio signal is referred to as machine listening.31 There are numerous features that can be used to describe the characteristics of a musical signal. These descriptors are hierarchical in terms of both complexity and how closely they resemble human perceptions. Lower-level features typically describe physical characteristics of the signal. Higher-level descriptors, though often more difficult to compute, tend to correlate more strongly with the terms people generally use to describe music such as mood, emotion, and genre. Often these higher-level descriptors are classified by using a combination of low-level (amplitude and spectral centroid) and mid-level (tempo, chord, and timbre) features.

Determining which attributes of the sound are important is decided by the developer and predominantly relies on the desired functionality of the robot and its interaction with a person. Specific characteristics of the sound might be required for aesthetic reasons to support a specific interaction or there may be something inherent to the nature of the music that calls for a more narrowed focus in its analysis. For example, perhaps the developer intends for the system to interact with a drummer. In such a scenario audio analysis techniques that focus on rhythmic and timing qualities might be preferred over an analysis of pitch and harmony.

In fact, for many of the drumming robots the audio processing algorithms are indeed focused on aspects related to timing. A first step in this analysis is to detect musical events such as individual notes. This is referred to as onset detection, and many methods exist for measuring an onset based on the derivative of energy with respect to time, spectral flux, and phase deviation.4 Instruments that exhibit longer transients (such as a cello or flute) and certain playing techniques (such as vibrato and glissandi) can pose challenges in detection. It is also debatable whether the system should detect all physical onsets or all humanly perceptible onsets. Some methods are psychoacoustically informed to support the natural human perceptions in different portions of the frequency spectrum.9
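As a rough illustration of the energy-derivative approach mentioned above, the following sketch flags an onset wherever short-time energy rises sharply between frames. It is a minimal example under stated assumptions, not the method of any system cited here: the function names, frame sizes, and threshold are hypothetical, and the test signal is synthetic.

```python
import math

def decaying_bursts(burst_times, duration, sample_rate=8000, freq=440.0, decay=0.05):
    """Toy test signal (assumption): silence plus exponentially decaying sine bursts."""
    samples = [0.0] * int(duration * sample_rate)
    for start_time in burst_times:
        start = int(start_time * sample_rate)
        for n in range(start, len(samples)):
            t = (n - start) / sample_rate
            samples[n] += math.sin(2 * math.pi * freq * t) * math.exp(-t / decay)
    return samples

def frame_energies(samples, frame_size=256, hop=128):
    """Mean squared amplitude of each overlapping frame."""
    energies = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = samples[start:start + frame_size]
        energies.append(sum(x * x for x in frame) / frame_size)
    return energies

def detect_onsets(samples, sample_rate, frame_size=256, hop=128, threshold=0.01):
    """Return onset times in seconds at local peaks of the positive energy rise."""
    energies = frame_energies(samples, frame_size, hop)
    # Half-wave rectified first difference: only increases in energy count.
    rises = [max(0.0, energies[i] - energies[i - 1]) for i in range(1, len(energies))]
    onsets = []
    for i, rise in enumerate(rises):
        prev_rise = rises[i - 1] if i > 0 else 0.0
        next_rise = rises[i + 1] if i + 1 < len(rises) else 0.0
        if rise > threshold and rise >= prev_rise and rise > next_rise:
            onsets.append((i + 1) * hop / sample_rate)
    return onsets
```

Calling `detect_onsets(decaying_bursts([0.25, 0.75], 1.0), 8000)` should report two onsets close to the burst times, with a resolution limited by the hop size. Slow-attack instruments such as cello or flute would likely need a spectral or phase-based detection function instead.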

An analysis of the onsets over time can provide additional cues related to note density, repetition, and beat. The notion of beat, which corresponds to the rate at which humans may tap their feet to the music, is characteristic of many types of music. It is often computed by finding the fundamental periodicity of perceived onsets using autocorrelation.
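The autocorrelation idea can be sketched as follows: onsets are placed on a time grid, the grid is correlated with shifted copies of itself, and the lag with the strongest match is taken as the beat period. This is an illustrative simplification; the grid rate, the tempo range, and the function names are assumptions rather than details of any cited system.

```python
def onset_envelope(onset_times, duration, rate=100):
    """Impulse train on a grid of `rate` bins per second (1.0 where an onset falls)."""
    envelope = [0.0] * int(duration * rate)
    for t in onset_times:
        index = int(round(t * rate))
        if 0 <= index < len(envelope):
            envelope[index] = 1.0
    return envelope

def estimate_tempo(onset_times, duration, rate=100, min_bpm=60, max_bpm=180):
    """Return the tempo in BPM whose beat period maximizes the autocorrelation."""
    envelope = onset_envelope(onset_times, duration, rate)
    min_lag = int(rate * 60 / max_bpm)   # shortest beat period considered, in bins
    max_lag = int(rate * 60 / min_bpm)   # longest beat period considered, in bins
    best_lag, best_score = min_lag, -1.0
    for lag in range(min_lag, max_lag + 1):
        score = sum(envelope[i] * envelope[i - lag] for i in range(lag, len(envelope)))
        if score > best_score:
            best_lag, best_score = lag, score
    return 60.0 * rate / best_lag
```

Restricting the search to a plausible tempo range is one simple way to reduce the octave ambiguity inherent in autocorrelation, where half and double the true tempo also produce strong peaks.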

The Haile robot continuously estimates the beat of the input rhythms and uses the information to inform its response.38 In Barton’s HARMI (Human and Robot Musical Improvisation) software he explores rhythmic texture by detecting multiple beat rates and not just those most salient to human listeners. This allows for more expressivity in the system by increasing rhythmic and temporal richness and allowing the robot to “feel” the beat in different ways. As a result the robot can detect “swing” in jazz music and the more rubato nature intrinsic to classical music.3

Other analysis methods help robots to perceive the pitch and harmonic content of music. Though pitch detection of a monophonic signal has been achieved with relatively robust and accurate results, polyphonic pitch detection is more challenging.21 It is often desired to extract the melody from a polyphonic source. This generally consists of finding the most salient pitches and using musical heuristics (such as the tendency of melodic contours to be smooth) to estimate the most appropriate trajectory.29

Detecting harmonic structure in the context of a chord (three or more notes played simultaneously) is also important. Chord detection and labeling is performed using “chroma” features. The entire spectrum is projected into 12 bins representing the 12 notes (or chroma) of a musical octave. The distribution of energy over the chroma is indicative of the chord being played. In Western tonal music Hidden Markov Models (HMMs) are often useful because specific chord sequences tend to occur more often and applying these chord progression likelihoods can be beneficial for chord recognition and automatic composition.7
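To make the chroma projection concrete, the sketch below folds a list of spectral peaks into 12 pitch-class bins and labels the result by comparing it with binary major and minor triad templates. It assumes the peaks (frequency, magnitude pairs) have already been extracted by an earlier analysis stage, and it performs only frame-wise template matching; the HMM smoothing over chord sequences described above is not included. All names are hypothetical.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

def pitch_class(freq_hz, a4=440.0):
    """Map a frequency to a pitch class 0..11 (C = 0) via the nearest MIDI note."""
    midi_note = 69 + 12 * math.log2(freq_hz / a4)
    return int(round(midi_note)) % 12

def chroma_vector(peaks):
    """Fold (frequency, magnitude) peaks into a normalized 12-bin chroma vector."""
    chroma = [0.0] * 12
    for freq_hz, magnitude in peaks:
        chroma[pitch_class(freq_hz)] += magnitude
    total = sum(chroma)
    return [c / total for c in chroma] if total > 0 else chroma

def chord_templates():
    """Binary templates for the 24 major and minor triads."""
    templates = {}
    for root in range(12):
        for quality, intervals in (("maj", (0, 4, 7)), ("min", (0, 3, 7))):
            template = [0.0] * 12
            for interval in intervals:
                template[(root + interval) % 12] = 1.0
            templates[NOTE_NAMES[root] + quality] = template
    return templates

def label_chord(chroma):
    """Return the triad whose template has the largest dot product with the chroma."""
    def score(item):
        return sum(c * t for c, t in zip(chroma, item[1]))
    return max(chord_templates().items(), key=score)[0]
```

For instance, peaks near 261.63, 329.63, and 392.00 Hz (C4, E4, G4) fold into pitch classes C, E, and G, which match the C major template most closely.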

Pitch tracking and chord recognition are useful abilities for robotic musicians. Some robots rely on these pitch-based cues to perform score following. The robot listens to other musicians in an ensemble and attempts to synchronize with them by estimating their location within a piece.26,34 Otsuka et al. describe a system that switches between modules based on melodic and rhythmic synchronization depending on a measure of the reliability of the melody detection.28 Other robotic systems that improvise, such as Shimon, use the chords in the music as a basis for their note-generation decisions.

As an alternative to utilizing audio analysis for machine listening, symbolic protocols such as Musical Instrument Digital Interface (MIDI) and Open Sound Control (OSC) can be used to simplify some of the challenges inherent to signal analysis.

Machine vision and other sensing methods. Other cues outside of the audio domain can also provide meaningful information during a musical interaction. A performer’s movement, posture, and physical playing technique can describe information related to timing, emotion, or even the amount of experience with the instrument. Incorporating additional sensors can improve the machine’s perceptive capabilities, enabling it to better interpret the musical experience and perceptions of the interacting musicians.

Computer vision techniques have proven to be advantageous for several musical applications and interaction scenarios. Pan et al. program their humanoid marimba player to detect the head nods of an interacting musician.30 The nods encourage a more natural communication so humans and robots can exchange roles during the performance. Solis’ anthropomorphic flutist robot similarly uses vision to create more instinctive interactions by detecting the presence of a musical partner. Once detected the robot then listens and evaluates its partner’s playing.34 Shimon uses a Microsoft Kinect to follow a person’s arm movements. A person controls the specific motifs played by the robot and the dynamics and tempos by moving his arms in three dimensions. Shimon was also programmed to track infra-red (IR) lights mounted to the end of mallets. A percussionist is able to train the system with specific gestures and use these gestures to cue different sections of a precomposed piece.5

Mizumoto and Lim incorporate vision techniques to detect gestures for improved beat detection and synchronization.22,26 A robotic theremin player follows a score and synchronizes with an ensemble by using a combination of auditory and visual cues. Similarly, the MahaDeviBot utilizes a multimodal sensing technique to improve tempo and beat detection. Instead of using vision, an instrumentalist wears sensors measuring acceleration and orientation to provide information regarding his or her movement. The combined onsets detected by the microphone and wearable sensors are processed by Kalman filtering and the robot detects tempo in real time.16
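The role of Kalman filtering in tempo tracking can be illustrated with a scalar filter that treats the beat period as a slowly drifting hidden state and each observed inter-onset interval as a noisy measurement of it. This is a deliberately reduced sketch: it assumes the audio and wearable-sensor onsets have already been merged into a single interval stream, and the noise variances and function names are hypothetical rather than taken from the MahaDeviBot work.

```python
def kalman_track_period(intervals, initial_period, process_var=1e-4, measurement_var=1e-2):
    """Track the beat period (seconds) from noisy inter-onset intervals.

    Returns the filtered period estimate after each observation.
    """
    estimate = initial_period
    variance = 1.0  # large initial uncertainty, so early measurements dominate
    history = []
    for observed in intervals:
        # Predict: the period is assumed constant apart from small random drift.
        variance += process_var
        # Update: blend prediction and measurement according to their uncertainty.
        gain = variance / (variance + measurement_var)
        estimate += gain * (observed - estimate)
        variance *= (1.0 - gain)
        history.append(estimate)
    return history

def period_to_bpm(period_seconds):
    """Convert a beat period in seconds to beats per minute."""
    return 60.0 / period_seconds
```

A larger `process_var` lets the estimate follow tempo changes more quickly at the cost of more jitter, which is the usual trade-off when tuning such a filter for live performance.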

Hochenbaum and Kapur extended their multimodal sensing techniques to classify different types of drum strokes and extract other meaningful information about a performance.12 They placed accelerometers on drummers’ hands to automatically annotate and train a neural network to recognize the different drum strokes from audio data. They then examined the inter-onset intervals found in both the audio and accelerometer data. They found there was a greater consistency between the physical and auditory onsets for the more expert musician. Such multimodal analyses that combine the physical and auditory spaces are becoming more standard in performance analysis.

The ability to synchronize musical events between interacting musicians is very important. For synchronization to occur, a system must know an event will occur before it actually happens. In music, these events are often realized in the form of a musical score; however, in some interactions such as improvisation there is no predefined score and synchronization may still be desired. Cicconet et al. explored anticipation based on visual cues by developing a vision system that predicts the time at which a drummer will play a specific drum based on the drummer’s movement.8 Shimon was programmed to use these predictions to play simultaneously with the drummer. The anticipation vision system analyzes the upward and downward motion of the drummer’s gesture by tracking an IR sensor attached to the end of a mallet. Using the mallet’s velocity, acceleration, and location, the system predicts when the drumstick will make contact with a particular drum.
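One simple way to turn a tracked mallet position, velocity, and acceleration into a predicted strike time is to assume constant acceleration over the remaining travel and solve the resulting quadratic. The source does not specify the prediction model used, so the sketch below is an assumption made for illustration, with hypothetical names and units (meters and seconds, height measured above the drumhead, negative velocity meaning downward motion).

```python
import math

def time_to_contact(height, velocity, acceleration):
    """Smallest t > 0 solving height + velocity*t + 0.5*acceleration*t**2 = 0.

    Returns 0.0 if the mallet is already at or below the drumhead, and None
    if, under the constant-acceleration assumption, it never reaches it.
    """
    if height <= 0:
        return 0.0
    a, b, c = 0.5 * acceleration, velocity, height
    if abs(a) < 1e-12:
        # No acceleration: linear motion reaches the drumhead only if moving down.
        return -c / b if b < 0 else None
    discriminant = b * b - 4 * a * c
    if discriminant < 0:
        return None
    root = math.sqrt(discriminant)
    candidates = [t for t in ((-b - root) / (2 * a), (-b + root) / (2 * a)) if t > 0]
    return min(candidates) if candidates else None
```

A robot would then need to start its own strike early enough that its actuation latency elapses exactly at the predicted contact time, re-running the estimate on each new video frame as the gesture unfolds.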

Generative functions. The second aspect of musical intelligence focuses on the system’s ability to play and perform music. This includes functions such as reading from a score, improvising, or providing accompaniment. While many examples for this functionality exist in the domain of computer-generated music, here we focus on methods that have been implemented specifically for robotic musicians. These generative functions may rely on statistical models, predefined rules, or abstract algorithms.

Modeling human performers. When reading from a precomposed score the robot does not need to decide what notes to play, but instead must decide how to play them in order to create an expressive performance. Designing expressivity in a robot is often achieved by observing human performers. Solis et al.35 describe such a methodology in their system. The expression of a professional flute player is modeled using artificial neural networks (ANNs) by extracting various musical features (pitch, tempo, volume, among others) from the performance. The networks learn the human’s rules for expressivity and output parameters describing a note’s duration, vibrato, attack time, and tonguing. These parameters are then sent to the robot’s control system.

Modeling human performers can also be used for improvisational systems. Shimon generates notes using Markov decision processes trained on performances of jazz greats such as John Coltrane and Thelonious Monk.27 The pitch and duration of their notes are modeled in second-order Markov chains.
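A second-order Markov chain over note events can be sketched in a few lines: the next event is drawn from the events that followed the same pair of preceding events in the training material. The example below is a generic illustration with hypothetical names, not Shimon's implementation; each event is assumed to be a (MIDI pitch, duration in beats) tuple, and any training data supplied would be toy data rather than real transcriptions.

```python
import random
from collections import defaultdict

def train_second_order(sequences):
    """Map each pair of consecutive events to the list of events that followed it."""
    table = defaultdict(list)
    for sequence in sequences:
        for first, second, following in zip(sequence, sequence[1:], sequence[2:]):
            table[(first, second)].append(following)
    return table

def generate(table, seed, length, rng=None):
    """Extend a two-event seed to `length` events by sampling the trained table.

    Stops early if the current context was never seen during training.
    """
    rng = rng or random.Random()
    output = list(seed)
    while len(output) < length:
        choices = table.get((output[-2], output[-1]))
        if not choices:
            break
        # Repeated continuations appear multiple times, so sampling is
        # proportional to how often they occurred in the training data.
        output.append(rng.choice(choices))
    return output
```

Because the context is two events long, the output tends to preserve short idiomatic figures from the training phrases while still recombining them in new orders.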

Kapur et al. developed a robotic harmonium called Kritaanjli, which extracts information from an interacting human performer’s style of harmonium pumping and attempts to emulate it.17 The robot’s motors use information from a linear displacement sensor that measures the human’s pump displacement.

Algorithmic composition. Some music artificial intelligence (AI) systems rely on sets of rules, a hybrid of statistics and rules, or even algorithms with no immediate musical relevance to drive their decision processes.

The rule-based systems may attempt to formulate music theory and structure into formal grammars or may creatively map the robot’s perceptual modules to its sound generating modules. The Man and Machine Robot Orchestra at Logos has performed several compositions which employ interesting mappings24 for musical expression. In the piece, Horizon’s for Three by Kristof Lauwers and Moniek Darge, the pitches detected from a performer playing an electric violin are directly mapped to pitches generated by the automatons. The movements of the performers control the wind pressure of the organ. In another piece, Hyperfolly by Yvan Vander Sanden, the artist presses buttons that trigger responses from the machines. Pat Metheny similarly controls the machines in his Orchestrion setup. By playing specific notes or using other triggers the machines play precomposed sections of a piece.

For some interactions the robots are programmed with short musical phrases and rules for modifying and triggering them are implemented. Kritaanjli is programmed with a database of ragas from Indian classical music. The robot accompanies an improvising musician by providing the underlying melodic content of each raga and uses additional user input to switch between or modify the ragas.17 Shimon similarly uses predefined melodic phrases in a piece called Bafana by Gil Weinberg inspired by African marimba ensembles. Shimon listens for specific motifs and depending on the mode may either mimic and synchronize or play in canon creating new types of harmonies. At another point in the piece Shimon begins to change motifs using stochastic decision processes and the human performer then has a chance to respond.5

Many interaction scenarios designed for robotic musicians employ a hybrid of statistical models and rules. Different modes may rely on different note generative techniques. These modes can be mapped to certain parameters and triggered by interacting musicians through the music (by playing a specific phrase or chord), physical motion (completing a predefined gesture), or other sensors (light, pressure, and so on). Cues that signal different sections or behaviors are commonly used in all-human ensembles, and the question in robotic musicianship is how to create cues that can be robustly detected by the AI yet still remain natural and musical.
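A music-based cue of this kind can be sketched as a small state machine. The example below is only an illustration, not the method of any system described here: the class name `ModeSwitcher`, the mode names, and the MIDI pitch cues are all hypothetical, and it uses exact matching on the most recent notes, which is far less robust than what a performing robot would need.

```python
from collections import deque


class ModeSwitcher:
    """Switch generative modes when the most recent notes match a cue phrase.

    Hypothetical sketch: cues are exact MIDI pitch sequences (non-empty tuples)
    mapped to the name of the mode they trigger.
    """

    def __init__(self, cues, initial_mode):
        self.cues = {tuple(phrase): mode for phrase, mode in cues.items()}
        self.mode = initial_mode
        # Remember only as many notes as the longest cue requires.
        longest = max(len(phrase) for phrase in self.cues)
        self.recent = deque(maxlen=longest)

    def hear(self, pitch):
        """Register one heard pitch and return the mode now in effect."""
        self.recent.append(pitch)
        heard = tuple(self.recent)
        for phrase, mode in self.cues.items():
            if len(heard) >= len(phrase) and heard[-len(phrase):] == phrase:
                self.mode = mode
                self.recent.clear()  # avoid re-triggering on the same notes
                break
        return self.mode


# Usage: an ascending C major triad switches the robot into "canon" mode.
switcher = ModeSwitcher({(60, 64, 67): "canon", (67, 64, 60): "mimic"}, "mimic")
modes = [switcher.hear(p) for p in [62, 60, 64, 67, 65]]
```

Exact matching makes the trade-off in the paragraph above concrete: it is trivial to detect, but a human player would have to reproduce the cue note for note, which is rarely natural in performance.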

Miranda and Tikhanoff describe an autonomous music composition system for the Sony AIBO robot that employs statistical models as well as rules.25 The system is trained on various styles of music, and a short phrase is generated based on the previous chord and the first melodic note of the previous phrase. The AIBO interacts with its environment, and the behavior of the composition is modified based on obstacles, colors, the presence of humans, and different emotional states.

Though traditional AI techniques such as ANNs and Markov chains might capture the compositional strategies of humans, it is also common for composers and researchers to adapt a wide variety of other algorithms to music-generating functions. Often the behaviors of certain computational phenomena can yield interesting and unique musical results. Chaos, cellular automata, genetic algorithms, and swarm algorithms have all been repurposed for musical contexts, and a few have been used specifically in robotic musicianship performances.
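As an illustration of how a computational phenomenon with no immediate musical relevance can be repurposed, the sketch below maps a one-dimensional cellular automaton to a rhythm. The mapping is an assumption made for this example (each generation is one bar, each live cell is a strike on that step), and the function names `step` and `rhythm_bars` are hypothetical; it does not reproduce any particular published piece.

```python
def step(cells, rule=90):
    """Advance a 1D binary cellular automaton one generation (edges wrap around)."""
    n = len(cells)
    out = []
    for i in range(n):
        # Encode left, center, right neighbors as a 3-bit index into the rule number.
        neighborhood = (cells[(i - 1) % n] << 2) | (cells[i] << 1) | cells[(i + 1) % n]
        out.append((rule >> neighborhood) & 1)
    return out


def rhythm_bars(seed, bars, rule=90):
    """Return one bar per generation: 'x' marks a strike, '.' marks a rest."""
    cells = list(seed)
    result = []
    for _ in range(bars):
        result.append("".join("x" if c else "." for c in cells))
        cells = step(cells, rule)
    return result


# Usage: a single strike spreads outward under rule 90.
for bar in rhythm_bars([0, 0, 0, 1, 0, 0, 0, 0], 3):
    print(bar)
# ...x....
# ..x.x...
# .x...x..
```

Changing the rule number or the seed pattern changes the character of the rhythm, which is the kind of behavior composers exploit when adapting such algorithms.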

Weinberg et al. use a real-time genetic algorithm to establish human-robot improvisation.39 Short melodic phrases form the initial base population, and dynamic time warping (DTW) is used in the algorithm’s fitness function as a similarity metric against observed phrases of the human performer. Mutating the phrases by transposing, inverting, and adding semitones allows for more complexity and richer harmonic and melodic content. The algorithm was implemented in Haile, and the performance uses a call-and-response methodology in which the human performer and robot take turns using each other’s music in their own responses.
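The general shape of such an approach can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation used in Haile: phrases are plain lists of MIDI pitches, the DTW cost is the absolute pitch difference, "adding semitones" is interpreted as nudging one note by a semitone, and the selection scheme (keep the better half, refill with mutated copies) is chosen only for brevity. The names `dtw_distance`, `mutate`, and `evolve` are hypothetical.

```python
import random


def dtw_distance(a, b):
    """Dynamic time warping distance between two pitch sequences."""
    inf = float("inf")
    n, m = len(a), len(b)
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]


def mutate(phrase, rng):
    """Transpose the phrase, invert it around its first note, or nudge one note."""
    op = rng.choice(("transpose", "invert", "semitone"))
    if op == "transpose":
        shift = rng.choice((-2, -1, 1, 2))
        return [p + shift for p in phrase]
    if op == "invert":
        pivot = phrase[0]
        return [2 * pivot - p for p in phrase]
    out = list(phrase)
    i = rng.randrange(len(out))
    out[i] += rng.choice((-1, 1))
    return out


def evolve(population, heard, generations=30, seed=0):
    """Evolve phrases toward the phrase heard from the human performer."""
    rng = random.Random(seed)
    pop = [list(p) for p in population]
    size = len(pop)
    for _ in range(generations):
        # Fitness: a smaller DTW distance to the heard phrase is better.
        pop.sort(key=lambda p: dtw_distance(p, heard))
        survivors = pop[: max(1, size // 2)]
        children = [mutate(rng.choice(survivors), rng) for _ in range(size - len(survivors))]
        pop = survivors + children
    pop.sort(key=lambda p: dtw_distance(p, heard))
    return pop[0]


# Usage: respond to a heard phrase with the closest evolved phrase.
base = [[60, 62, 64, 65], [67, 65, 64, 62], [60, 60, 67, 67]]
response = evolve(base, heard=[62, 64, 66, 67, 67])
```

Because the best phrases always survive, the response is never further from the heard phrase than the best member of the base population; running fewer generations keeps the response less similar, which leaves room for musical contrast in a call-and-response setting.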

Decentralized musical systems have been explored by Albin et al. using musical swarm robots.1 Several mobile robots are each equipped with a smartphone and a solenoid that strikes a pitched bell. Each robot’s location is mapped to different playing behaviors that manipulate the dynamics and rhythmic patterns played on the bell. Unique rhythmic and melodic phrases emerge when the robots undergo different swarming behaviors such as predator-prey, follow-the-leader, and flocking. Additionally, people interact with the robots by sharing the space with them, and the robots respond to their presence (using face detection on the phone).

Embodiment

By integrating robotic platforms with machine musicians, researchers can bring together the computational, sonic, visual, and physical worlds, creating a sense of embodiment—the representation or expression of objects in a tangible and visible form. This can lead to richer musical interactions as users demonstrate increased attention, an ability to distinguish between an AI’s pragmatic actions (physical movements performed to bring the robot closer to its goal such as positioning its limbs to play a note) and epistemic actions (physical movements performed to gather information or facilitate computational processing such as positioning its sensors for an improved signal-to-noise ratio), and better musical coordination and synchronization as a result of the projection of physical presence and gestures.

Several human-robotic interaction studies outside of the context of music have demonstrated desirable interaction effects as a result of physical presence. The studies have found physical embodiment leads to increased levels of engagement, trust, perceived credibility, and more enjoyable interactions.20 Robotic musicians yield the same benefits for those directly involved with the interaction (other musicians) as well as those merely witnessing it (audience members).

Physical presence can also directly affect the quality of the music. Hoffman and Weinberg examined the effects of physical presence and acoustic sound generation on rhythmic coordination and synchronization.13 The natural coupling between the robot’s spatial movements and sound generation afforded by embodiment allows for interacting musicians to anticipate and coordinate their playing with the robot, leading to richer musical experiences.

In addition to the cues provided by a robotic musician’s sound-producing movements, physical sound-accompanying gestures can create better informed social interactions by communicating information related to a task, an interaction, or a system’s state of being. An important emphasis in our own research has been the design and development of robotic physical gestures that are not directly related to sound production but can help convey useful musical information to human musicians and an observing audience. These gestures and physical behaviors are used to express the underlying computational processes, allowing observers to better understand how the robot is perceiving and responding to the music and the environment. A robot’s gaze, posture, velocity, and motion trajectories can be manipulated for communicative function. For example, simple behaviors designed for Shimon, such as nodding to the beat, looking at its marimba during improvisation, and looking at interacting musicians when listening, help enhance synchronization among the ensemble members and reinforce the tempo and groove of the music.

In robotics, the term “path planning” refers to the process of developing a plan of discrete movements that satisfies a set of constraints to reach a desired location. In a musical context, robots may not be able to perform specific instructions in a timely, coordinated, and expressive manner, which requires employing musical considerations in designing a path-planning algorithm. Such considerations may shed light on conscious as well as subconscious path-planning decisions human musicians employ while coordinating their gestures (such as finger order in piano playing). In robotic musicianship, path planning, note generation, and rhythmic decisions become an integrated process.
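To make the idea concrete, the sketch below plans which of two arms should play each note on a linear instrument such as a marimba. It is a simplified illustration and not the planner used by Shimon or any other robot discussed here. The assumptions are that note positions are integers along one axis, that travel distance stands in for the time an arm needs to reach a note, and that the arms may not cross; the function name `plan_two_arms` and the starting positions are hypothetical.

```python
def plan_two_arms(notes, start=(0, 10)):
    """Assign each note to the left (0) or right (1) arm, minimizing total travel.

    Returns (total_cost, assignment). The left arm must always stay strictly
    to the left of the right arm, so `start` must satisfy start[0] < start[1].
    """
    if start[0] >= start[1]:
        raise ValueError("left arm must start to the left of the right arm")
    # Dynamic programming over arm positions: state -> (cost so far, assignment so far).
    states = {tuple(start): (0, [])}
    for note in notes:
        next_states = {}
        for (left, right), (cost, assign) in states.items():
            candidates = []
            if note < right:  # left arm can reach the note without crossing
                candidates.append(((note, right), cost + abs(note - left), assign + [0]))
            if note > left:  # right arm can reach the note without crossing
                candidates.append(((left, note), cost + abs(note - right), assign + [1]))
            for key, new_cost, new_assign in candidates:
                if key not in next_states or new_cost < next_states[key][0]:
                    next_states[key] = (new_cost, new_assign)
        states = next_states
    return min(states.values(), key=lambda s: s[0])


# Usage: alternating low and high notes are split between the two arms.
cost, assignment = plan_two_arms([2, 8, 3, 9])
```

A planner of this kind could feed back into note generation: if the cheapest plan for a candidate phrase is still too costly to play in time, the generative module could choose a different phrase, which is one way the decisions become integrated.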

Summary and Evaluation

In this article we presented several significant works in the field of robotic musicianship and discussed some of the different methodologies and challenges inherent to designing mechanical systems that can reason about music and musical interactivity. The projects discussed bring together a wide variety of disciplines from mechanics, through perception, to artificial creativity. Each system features a unique balance between these disciplines, as each is motivated by a unique mixture of goals and challenges, from the artistic, through scientific, to the engineering-driven. This interdisciplinary set of goals and motivations makes it difficult to develop a standard for evaluating robotic musicians. For example, when the goal of a project is to design a robot that can improvise like a jazz master, then a musical Turing test can be used for evaluation; if the goal is artistic and experimental in nature, such as engaging listeners with an innovative performance, then perhaps positive reviews of the concert are indicative of success; and if the project’s motivation is to study and model humans’ sound generation mechanism, anatomy- and cognitive-based evaluation methods can be employed.

Most of the projects discussed in this article include evaluation methods that address the project’s specific goals and motivations. Some projects, notably those with artistic and musical motivations, do not include an evaluation process. Others address multiple goals and therefore include a different evaluation method for each aspect of the project. For example, in computational-cognition-based projects, robots’ perceptual capabilities are measured by comparing the algorithm’s output against a ground truth and calculating accuracy. However, establishing ground truths in such projects has proven to be a challenging task, as many parameters in music are ambiguous and subject to individual interpretation. HRI-motivated experiments, on the other hand, are designed to evaluate interaction parameters such as the robots’ ability to communicate and convey relevant information through their music generating functions or physical gestures. We believe that as research and innovation in robotic musicianship continue to grow and mature, more unified and complete evaluation methods will be developed.

Though robotic musicianship has seen many great developments in the last decade, there are still many white spaces to be explored and challenges to be addressed, from developing more compelling generative algorithms to designing better sensing methods that enable the robot’s interpretations of music to correlate more closely with human perceptions. We are currently developing new types of robotic interfaces, such as companion robots and wearable robotic musicians, with the goal of expanding the breadth and depth of musical human-robotic interaction. In addition to developing new interfaces, sensing methods, and generative algorithms, we are also excited to showcase our developments through performances as part of the Shimon Robot and Friends Tour. We look forward to continuing to observe and contribute to new developments in this field as robotic musicians increase their presence in everyday life, paving the way to the creation of novel musical interactions and outcomes.
