We like to think we have been surfing a tsunami of computing innovation over the past 70 years: mainframe computers, microprocessors, personal computers, the Internet, the World Wide Web, search, cloud computing, social media, smartphones, tablets, big data, and the like. The list goes on and on, and the future for continuing innovation is quite bright, according to the conventional wisdom.

Recently, however, several people have been questioning this techno-optimism. In a commencement address at Bard College at Simon’s Rock, U.S. Federal Reserve chair Ben Bernanke compared life today to his life as a young boy in 1963, and his grandparents’ lives in 1913. He argued that the period from 1913 to 1963 saw a dramatic improvement in the quality of daily life, driven by automobilization, electrification, sanitation, air travel, and mass communication. In contrast, life today does not seem that different from life in 1963, other than the fact that we talk less to each other, and communicate more via email, text, and social postings.

In fact, the techno-pessimists argue the economic malaise that we seem unable to pull ourselves out of—the sluggish economic growth in the U.S., the rolling debt crisis in Europe, and the slowdown of the BRICS—is not just a result of the financial crisis but also an indication of an innovation deficit. Tyler Cowen has written about the "great stagnation," arguing we have reached a historical technological plateau and the factors that drove economic growth since the start of the Industrial Revolution are mostly spent. Robert Gordon contrasted the 2.33% annual productivity growth during 1891-1972 to the 1.55% growth rate during 1972-2012. Garry Kasparov argued that most of the science underlying modern computing was already settled in the 1970s.

The techno-optimists dismiss this pessimism. Andrew McAfee argued that new technologies take decades to achieve deep impact. The technologies of the Second Industrial Revolution (1875-1900) took almost a century to fully spread through the economies of the developed world, and have yet to become ubiquitous in the developing world. In fact, many predict we are on the cusp of the "Third Industrial Revolution." The Economist published a special report last year that described how digitization of manufacturing will transform the way goods are made and change the job market in a profound way.

So which way is it? Have we reached a plateau of innovation, dooming us to several decades of sluggish growth, or are we on the cusp of a new industrial revolution, with the promise of dramatic changes, analogous to those that took place in the first half of the 20th century?

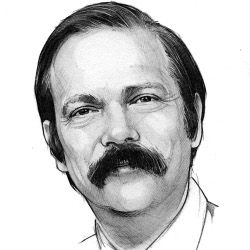

From my perch as the editor-in-chief of Communications of the ACM, I find it practically impossible to be a pessimist (which is my natural inclination). The flow of research and news articles that we publish monthly continues to be innovative and exciting. No stagnation here!

Last year ACM celebrated Alan Turing’s centenary by assembling a historic gathering of almost all of the living ACM A.M. Turing Award Laureates for a two-day event in San Francisco. Over 1,000 participants attended the meeting and the buzz was incredible. Participants I talked to told me this was one of the most moving scientific meetings they had ever attended. We were celebrating not only Turing’s centenary, but also 75 years of computing technology that has changed the world, as well as the people who pioneered that technology. Like McAfee, I find it difficult to imagine this technology not broadening and deepening its impact on our lives.

Earlier this year the McKinsey Global Institute issued a report on "Disruptive Technologies: Advances that will transform life, business, and the global economy," in which they assert that many emerging technologies "truly do have the potential to disrupt the status quo, alter the way people live and work, and rearrange value pools." The report predicted that, by 2025, automation of knowledge work would have a potential economic impact of $5-$7 trillion and automation of driving would prevent 1.5 million driver-caused deaths from car accidents. The 12 potential economically disruptive technologies listed in the report are: mobile Internet; knowledge-work automation; the Internet of Things; cloud technology; advanced robotics; autonomous and near-autonomous vehicles; next-generation genomics; energy storage; 3D printing; advanced materials; advanced oil and gas exploration and recovery; and renewable energy.

"Predictions are difficult," goes the saying, "especially about the future." It will be about 25 years before we know who is right, the techno-pessimists or the techno-optimists. For now, however, count me an optimist, for a change!

Moshe Y. Vardi, EDITOR-IN-CHIEF
