Over the last half-century, as computing has advanced by leaps and bounds, one thing has remained fairly static: Moore’s Law. The concept, named after semiconductor pioneer Gordon Moore, is based on the observation that the number of transistors packed into an integrated circuit (IC) doubles approximately every two years. For more than 50 years, this concept has provided a predictable framework for semiconductor development. It has helped computer manufacturers and many other companies focus their research and plan for the future.
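To make the doubling arithmetic concrete, here is a minimal sketch that projects a transistor count under the two-year doubling described above. The function name and the starting count of 1,000 transistors are assumptions chosen for illustration, not historical figures.

```python
def projected_transistors(initial_count: float,
                          years_elapsed: float,
                          doubling_period_years: float = 2.0) -> float:
    """Project a transistor count assuming one doubling per fixed period."""
    return initial_count * 2 ** (years_elapsed / doubling_period_years)


# Hypothetical starting point: a chip with 1,000 transistors.
for years in (0, 2, 10, 20):
    print(f"after {years:2d} years: {projected_transistors(1_000, years):,.0f}")
```

Under this idealized model, twenty years means ten doublings, or roughly a thousandfold increase, which is why even a small slowdown in the doubling period matters so much to planners.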

However, there are signs that Moore’s Law is reaching the end of its practical path. Although the IC industry will continue to produce smaller and faster transistors over the next few years, these systems cannot operate at optimal frequencies due to heat dissipation issues. This has “brought the rate of progress in computing performance to a snail’s pace,” wrote IEEE fellows Thomas M. Conte and Paolo A. Gargini in a 2015 IEEE-RC-ITRS report, On the Foundation of the New Computing Industry Beyond 2020.

The challenges do not stop there. Researchers cannot keep miniaturizing chip designs indefinitely; at some point over the next several years, current two-dimensional ICs will reach a practical size limit. Although researchers are experimenting with new materials and designs, some of them radically different, there currently is no clear path to progress. In 2015, Gordon Moore predicted the law that bears his name will wither within a decade. The IEEE-RC-ITRS report noted: “A new way of computing is urgently needed.”

As a result, the semiconductor industry is in a state of flux. There is a growing recognition that research and development must incorporate new circuitry designs and rely on entirely different methods to scale up computing power further. “For many years, engineers didn’t have to work all that hard to scale up performance and functionality,” observes Jan Rabaey, professor and EE Division Chair in the Electrical Engineering and Computer Sciences Department at the University of California, Berkeley. “As we reach physical limitations with current technologies, things are about to get a lot more difficult.”

The Incredible Shrinking Transistor

The history of semiconductors and Moore’s Law follows a long and somewhat meandering path. Conte, a professor at the schools of computer science and engineering at Georgia Institute of Technology, observes that computing has not always been tied to shrinking transistors. “The phenomenon is only about three decades old,” he says. Prior to the 1970s, high-performance computers, such as the CRAY-1, were built using discrete emitter-coupled logic-based components. “It wasn’t really until the mid-1980s that the performance and cost of microprocessors started to eclipse these technologies,” he notes.

At that point, engineers developing high-performance systems began to gravitate toward Moore’s Law and adopt a focus on microprocessors. However, the big returns did not last long. By the mid-1990s, “The delays in the wires on-chip outpaced the delays due to transistor speeds,” Conte explains. This created a “wire-delay wall” that engineers circumvented by using parallelism behind the scenes. Simply put: the technology extracted and executed instructions in parallel, but independent, groups. This was known as the “superscalar era,” and the Intel Pentium Pro microprocessor, while not the first system to use this method, demonstrated the success of this approach.
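The idea of executing instructions in parallel but independent groups can be sketched in a few lines. The code below is a deliberately simplified, in-order illustration, not a model of any real processor: production superscalar designs such as the Pentium Pro also reorder instructions and rename registers. The `Instr` type, the `issue_groups` function, and the four-instruction program are all hypothetical.

```python
from typing import NamedTuple


class Instr(NamedTuple):
    text: str               # human-readable form of the instruction
    dest: str               # register written
    srcs: tuple[str, ...]   # registers read


def issue_groups(program: list[Instr], width: int = 2) -> list[list[Instr]]:
    """Greedily pack consecutive instructions into groups of up to `width`
    (assumed >= 1) whose members have no register dependences on each other."""
    groups: list[list[Instr]] = []
    current: list[Instr] = []
    for instr in program:
        conflict = any(
            prev.dest in instr.srcs       # read-after-write
            or prev.dest == instr.dest    # write-after-write
            or instr.dest in prev.srcs    # write-after-read
            for prev in current
        )
        if conflict or len(current) == width:
            groups.append(current)        # close the group and start a new one
            current = []
        current.append(instr)
    if current:
        groups.append(current)
    return groups


program = [
    Instr("r1 = r2 + r3", "r1", ("r2", "r3")),
    Instr("r4 = r5 + r6", "r4", ("r5", "r6")),
    Instr("r7 = r1 * r4", "r7", ("r1", "r4")),
    Instr("r8 = r7 - r2", "r8", ("r7", "r2")),
]

for cycle, group in enumerate(issue_groups(program)):
    print(cycle, [instr.text for instr in group])
```

In this toy program the first two additions share no registers and can issue together, while the multiply and the subtract each depend on an earlier result and must wait. The programmer writes ordinary sequential code; the hardware finds the parallelism, which is what "behind the scenes" means here.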

Around the mid-2000s, engineers hit a power wall. Because the power consumed by CMOS transistors is proportional to the operating frequency, power density climbed along with clock speeds; when it reached 200 W/cm², cooling became imperative. “You can cool the system, but the cost of cooling something hotter than 150 watts resembles a step function, because 150 watts is about the limit for relatively inexpensive forced-air cooling technology,” Conte explains. The bottom line? Energy consumption and performance would not scale in the same way. “We had been hiding the problem from programmers. But now we couldn’t do that with CMOS,” he adds.
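The relationship between frequency and power can be sketched with the standard first-order model of CMOS dynamic power, P = α·C·V²·f, where α is the switching activity, C the switched capacitance, V the supply voltage, and f the clock frequency. The 150-watt threshold comes from Conte's remark above; every chip parameter below is a hypothetical value chosen only to make the arithmetic visible.

```python
AIR_COOLING_LIMIT_W = 150.0  # approximate forced-air limit quoted in the article


def dynamic_power_watts(activity: float,
                        capacitance_farads: float,
                        voltage_volts: float,
                        frequency_hz: float) -> float:
    """First-order CMOS dynamic power: P = alpha * C * V^2 * f."""
    return activity * capacitance_farads * voltage_volts ** 2 * frequency_hz


# Hypothetical chip: 20% switching activity, 100 nF switched capacitance, 1.2 V.
ACTIVITY, CAPACITANCE, VOLTAGE = 0.2, 100e-9, 1.2

for frequency in (3e9, 6e9):
    power = dynamic_power_watts(ACTIVITY, CAPACITANCE, VOLTAGE, frequency)
    verdict = "within" if power <= AIR_COOLING_LIMIT_W else "beyond"
    print(f"{frequency / 1e9:.0f} GHz: {power:.1f} W ({verdict} the air-cooling limit)")
```

With these assumed numbers, doubling the clock doubles the power and pushes the chip past the air-cooling threshold. In practice a higher clock usually also requires a higher supply voltage, and because voltage enters the formula squared, real power grows faster than this linear sketch suggests.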

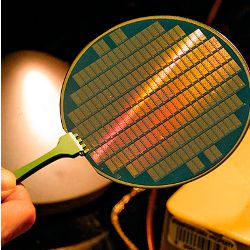

No longer could engineers pack more transistors onto a wafer with the same gains. This eventually led to reducing the frequency of the processor core and introducing multicore processors. Still, the problem didn’t go away. As transistors became smaller, hitting approximately 65 nm in 2006, performance and economic gains continued to subside, and as nodes dropped to 22 nm and 14 nm, the problem grew worse.

What is more, all of this has contributed to fabrication facilities becoming incredibly expensive to build, and semiconductors becoming far more expensive to manufacture. Today, there are only four major semiconductor manufacturers globally: Intel, TSMC, GlobalFoundries, and Samsung. That is down from nearly two dozen two decades ago.

To be sure, the semiconductor industry is approaching the physical limitations of CMOS transistors. Although alternative technologies are now in the research and development stage—including carbon nanotubes and tunneling field effect transistors (TFETs)—there is no evidence these next-gen technologies will actually pay off in a major way. Even if they do usher in further performance gains, they can at best stretch Moore’s Law by a generation or two.

In fact, industry groups such as the IEEE International Roadmap of Devices and Systems (IRDS) initiative have reported it will be nearly impossible to shrink transistors further by 2023.

Observes Michael Chudzik, a senior director at Applied Materials: “Semiconductor technology is challenged on many fronts. There are technical and engineering challenges, economic challenges because we’re seeing fewer industry players, and fundamental changes in the way people use computing devices” such as smartphones, as well as cloud computing and the Internet of Things (IoT), which place entirely different demands on ICs. This makes the methods of the past less desirable in the future. “We are entering a different era,” Rabaey observes.

Designs on the Future

Mapping out a future for integrated circuits and computing is paramount. One option for advancing chip performance is the use of different materials, Chudzik says. For instance, researchers are experimenting with cobalt to replace tungsten and copper in order to increase the volume of the wires, and studying alternatives to silicon. These include germanium (Ge), silicon-germanium (SiGe), and III-V materials such as gallium arsenide and gallium indium arsenide. However, these materials present performance and scaling challenges and, even if those problems can be addressed, they would produce only incremental gains that would tap out in the not-too-distant future.

Faced with the end of Moore’s Law, researchers are also focusing attention on new and sometimes entirely different approaches. One of the most promising options is stacking components and scaling from today’s 2D ICs to 3D designs, possibly by using nanowires. “By moving into the third dimension and stacking memory and logic, we can create far more function per unit volume,” Rabaey explains. Yet, for now, 3D chip designs also run into challenges, particularly in terms of cooling. As engineers stack components, the devices have less surface area relative to their volume through which to shed heat. As a result, “You suddenly have to do processing at a lower temperature or you damage the lower layers,” he notes.

Consequently, a layered 3D design, at least for now, requires a fundamentally different architecture. “Suddenly, in order to gain denser connectivity, the traditional approach of having the memory and processor separated doesn’t make sense. You have to rethink the way you do computation,” Rabaey explains. It’s not an entirely abstract proposition. “The advantages that some applications tap into—particularly machine learning and deep learning, which require dense integration of memory and logic—go away.” Adding to the challenge: a 3D design increases the risk of failures within the chip. “Producing a chip that functions with 100% integrity is impossible. The system must be fail-tolerant and deal with errors,” he adds.

Regardless of the approach and the combination of technologies, researchers are ultimately left with no perfect option. Barring a radical breakthrough, they must rethink the fundamental way in which computing and processing take place.

Conte says two possibilities exist beyond pursuing the current technology direction.

One is to make radical changes, but limit these changes to those that happen “under the covers” in the microarchitecture. In a sense, this is what took place in 1995, except “today we need to use more radical approaches,” he says. For servers and high-performance computing, for example, ultra-low-temperature superconducting logic is being advanced as one possible solution. At present, the U.S. Intelligence Advanced Research Projects Activity (IARPA) is investing heavily in this approach within its Cryogenic Computing Complexity (C3) program. These non-traditional logic gates are so far made only at small scale, at a size roughly 200 times larger than today’s transistors.

Another is to “bite the bullet and change the programming model,” Conte says. Although numerous ideas and concepts have been put forward, most center on creating fixed-function (non-programmable) accelerators for critical parts of important programs. “The advantage is that when you remove programmability, you eliminate all the energy consumed in fetching and decoding instructions.” Another possibility, and one that is already taking shape, is to move computation away from the CPU and toward the actual data. Essentially, memory-centric architectures, which are in development in the lab, could muscle up processing without any improvements in chips.

Finally, researchers are exploring completely different ways to compute, including brain-inspired neuromorphic models, which depart from the von Neumann architecture, and quantum computing. Rabaey says processors are already heading in this direction. As deep learning and cognitive computing emerge, GPU stacks are increasingly used to accelerate performance at the same or lower energy cost than traditional CPUs. Likewise, mobile chips and the Internet of Things bring entirely different processing requirements into play. “In some cases, this changes the paradigm to lower processing requirements on the system but having devices everywhere. We may see billions or trillions of devices that integrate computation and communication with sensing, analytics, and other tasks.”

In fact, as visual processing, big data analytics, cryptography, AR/VR, and other advanced technologies evolve, it is likely researchers will marry various approaches to produce boutique chips that best fit the particular device and situation. Concludes Conte: “The future is rooted in diversity and building devices to meet the needs of the computer architectures that have the most promise.”

Conte, T.M., and Gargini, P.A.

On the Foundation of the New Computing Industry Beyond 2020, Executive Summary, IEEE Rebooting Computing and ITRS. September 2015. http://rebootingcomputing.ieee.org/images/files/pdf/prelim-ieee-rc-itrs.pdf

Lam, C.H.

Neuromorphic Semiconductor Memory, 3D Systems Integration Conference (3DIC), 2015 International, 31 Aug.-2 Sept. 2015. http://ieeexplore.ieee.org/document/7334566/

Claeys, C., Chiappe, D., Collaert, N., Mitard, J., Radu, I., Rooyackers, R., Simoen, E., Vandooren, A., Veloso, A., Waldron, N.H., Witters, L., and Thean, A.

Advanced Semiconductor Devices for Future CMOS Technologies, ECS Transactions, 66 (5) 49–60 (2015) 10.1149/06605.0049ecst ©The Electrochemical Society. 2015. https://www.researchgate.net/profile/C_Claeys/publication/277896307_Invited_Advanced_Semiconductor_Devices_for_Future_CMOS_Technologies/links/565ad44408aefe619b240bcc.pdf

Cheong, H.

Management of Technology Strategies Required for Major Semiconductor Manufacturer to Survive in Future Market, Graduate School of Management of Technology, Hoseo University, Asan 336–795, Korea, Procedia Computer Science 91 (2016) 1116 – 1118. Information Technology and Quantitative Management (ITQM 2016). http://www.sciencedirect.com/science/article/pii/S1877050916313564
