Over the last decade, digital photography has revolutionized the way people snap, store, and share photos. It has unleashed remarkable features and capabilities that have transformed the way we think about images…and the world around us. Yet, for all the advances in megapixels and signal processing, one fact remains: “Today’s digital cameras were designed to replace analog information-gathering devices such as film,” observes Richard Baraniuk, a professor of electrical and computer engineering at Rice University.

However, times and technology are changing. Researchers are exploring how to use computational optics and digital imagery in new and innovative ways. Lensless cameras, single-pixel imagery, devices that can see around corners and a number of other technology breakthroughs could transform photography and computational optics in ways that would have been unimaginable only a few years ago. These devices—particularly as they capture images outside the visible light spectrum—could address a wide array of challenges, from managing traffic networks and fighting crime to improving medicine.

That is no small matter, particularly as networks expand and mobile technology matures. Says Paul Wilford, senior director for Bell Labs, which has designed and built a prototype lensless camera: “As we move into a world where we rely on cameras and sensors to monitor and manage an array of tasks, we will need new types of devices and technological breakthroughs. We must find new ways to capture, compress, and use imagery.”

Pixel Perfect

Venturing into the future of digital imagery requires researchers to fundamentally rethink—but also revisit—the concept of photography. The traditional method of acquiring an image requires a lens and some type of medium (typically light-sensitive photographic paper or a sensor) to capture photons as dots or pixels. These techniques evolved from the concept of a camera obscura, a device that dates back thousands of years, which uses a pinhole to capture light and display images. Among its first uses were the viewing of solar eclipses and the patterns of shadows cast by trees and other objects.

Modern digital photography is incredibly effective at capturing images. Today’s cameras store data in millions of pixels and rely on sophisticated sensors, signal processing, and algorithms to produce extraordinarily high-quality photographs. Over the last decade, conventional film has largely disappeared and digital cameras built into smart devices are now ubiquitous.

The goal of today’s researchers is not to replace these cameras. Yaron Bromberg, a post-doctoral associate at Yale University, believes capturing images from non-visible parts of the spectrum, including infrared and terahertz radiation, could lead to breakthroughs in fields as diverse as medicine and environmental research. He is in a group that has studied pseudothermal compressive ghost imaging and how to build a single-pixel light capture system. “Today, devices that capture images in non-visible parts of the spectrum are expensive,” he says. “Future cameras could open up a vast array of possibilities.”

Others, including Wilford, share that vision. “There have been fantastic advances in digital cameras, particularly in optical wavelengths. We can capture millions of pixels at a rate of hundreds of times per second with remarkable resolution. We also have coding algorithms that reduce file size and bandwidth,” notes Wilford. Capturing images in new ways and designing new techniques to compress files remain at the frontier of computational optics.

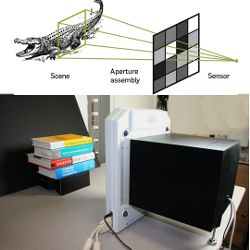

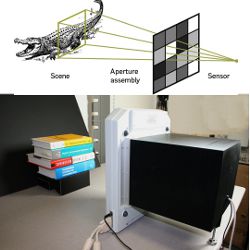

The Bell Labs team has developed a so-called “lensless camera” that relies on compressive sensing techniques to capture images. The system, built from off-the-shelf components, captures light via a two-dimensional array of photoelectric sensing elements built into an LCD panel. A micromirror array performs the functions of both pixelization and projection (attenuating aperture layers are used to create a pinhole which forms an image of the scene on the sensor array). Each array randomly records light from the scene; a sensor is able to detect three colors. Researchers can control each aperture element independently in accordance with the values of the overall sensing matrix. By analyzing multiple images, extrapolating on the data and redundancies and then correlating data points, it is possible to create a photographic image.
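
The measurement step described above can be sketched in a few lines of Python. This is only an illustrative model, not the Bell Labs implementation: the scene values, the `random_pattern` and `measure` helpers, and the 8-pixel scene size are all assumptions introduced here. Each aperture element gets a random transmittance between 0 and 1, and the sensor reports a single number per pattern, the pattern-weighted sum of the light arriving from the scene.

```python
import random

def random_pattern(n, rng):
    """Random transmittance (0 = opaque, 1 = fully open) for each of n aperture elements."""
    return [rng.random() for _ in range(n)]

def measure(scene, pattern):
    """One sensor reading: total light that passes through the aperture pattern."""
    return sum(p * s for p, s in zip(pattern, scene))

rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Hypothetical 8-pixel scene, flattened to a list of brightness values.
scene = [0.0, 0.2, 0.9, 1.0, 0.8, 0.1, 0.0, 0.0]

# Each pattern is one row of the sensing matrix; each reading is one number.
patterns = [random_pattern(len(scene), rng) for _ in range(4)]
readings = [measure(scene, p) for p in patterns]
```

Here four readings stand in for an eight-pixel scene. Turning such readings back into a picture is the job of the reconstruction algorithm, which this sketch does not attempt.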

Bell Labs engineer Gang Huang says the accuracy of the lensless system, which can capture both visible and non-visible light, improves with more snapshots of the same object. However, the goal is not to obtain an attractive high-resolution image suitable for online viewing or a photographic print. “The appeal is that we are able to obtain a good image with only a fraction of the data that is required for a conventional image,” he says. The system can reduce file size by factors of anywhere from 10 to 1,000, all while eliminating the need to focus a lens. In fact, the virtual image is not formed by any physical mechanism and, in the end, no planar image is ever created. The quality of the image is affected only by the quality of the resolution.
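
Why more snapshots help can be illustrated with a toy reconstruction. The sketch below is a stand-in built on stated assumptions: it uses plain linear algebra (Gram-Schmidt projection, which yields the minimum-norm estimate consistent with the readings) rather than the sparsity-based recovery a compressive sensing system would use, and the scene, helper names, and sizes are hypothetical. It shows only that the estimate does not get worse as readings are added and becomes exact once there are as many independent readings as pixels. Real compressive sensing aims for a good image well before that point.

```python
import math
import random

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def reconstruct(patterns, readings):
    """Minimum-norm scene estimate consistent with the readings (toy stand-in)."""
    n = len(patterns[0])
    basis, coeffs = [], []  # orthonormalized patterns and their implied readings
    for a, y in zip(patterns, readings):
        q, z = list(a), y
        for b, c in zip(basis, coeffs):
            w = dot(q, b)                      # overlap with an earlier pattern
            q = [qi - w * bi for qi, bi in zip(q, b)]
            z -= w * c                         # remove the same overlap from the reading
        norm = math.sqrt(dot(q, q))
        if norm < 1e-9:                        # pattern adds no new information
            continue
        basis.append([qi / norm for qi in q])
        coeffs.append(z / norm)
    estimate = [0.0] * n
    for b, c in zip(basis, coeffs):
        estimate = [e + c * bi for e, bi in zip(estimate, b)]
    return estimate

rng = random.Random(1)
scene = [0.0, 0.2, 0.9, 1.0, 0.8, 0.1, 0.0, 0.0]   # hypothetical 8-pixel scene
patterns = [[rng.random() for _ in scene] for _ in range(len(scene))]
readings = [dot(p, scene) for p in patterns]

# Reconstruction error after using the first m readings, for m = 1..8.
errors = []
for m in range(1, len(scene) + 1):
    est = reconstruct(patterns[:m], readings[:m])
    errors.append(math.sqrt(sum((e - s) ** 2 for e, s in zip(est, scene))))
```

The `errors` list shrinks toward zero as `m` grows, which mirrors Huang's point in miniature.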

The technology creates new possibilities and opportunities. For example, it could prove valuable for monitoring traffic across a network of roads or highways. “If you have 1,000 cameras on the road and each produces a single pixel, the resulting pixel stream requires much less data,” Wilford explains. The lensless system is able to detect infrared and millimeter waves; this could make it valuable for monitoring airports, stadiums, businesses, and other facilities. Alterations in consecutive images could alert a security team that something has changed, and indicate the speed of the change.

Although the Bell Labs group has successfully tested the lensless camera, the obstacle for now is the size of the device, which measures about a foot in height, width, and depth, notes Hong Jiang, a mathematician and signal processing expert for the team. Over the next few years, the researchers hope to dramatically reduce the size of the device while making it operate faster. “There are a lot of interesting possibilities and we have just begun to examine them,” Wilford says.

Mixed Signals

Other researchers are also hoping to rethink and reinvent photography. At Rice University, Baraniuk has explored the use of single-pixel image capture, a technique he pioneered with Rice University electrical engineering professor Kevin Kelly. His research has focused on using bacterium-sized micro-mirrors that reflect light onto a single sensor; each mirror corresponds to a particular pixel. Just as high-resolution photographic images can be compressed by stripping out unneeded data and storing the file in JPEG format, it is possible to extrapolate from existing data in the image. The technique cycles through 50,000 measurements in order to find the optimal directional orientation for the mirrors.
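
The JPEG analogy rests on the fact that many natural signals are well approximated by a handful of coefficients in a suitable transform basis. The sketch below illustrates that idea on a hypothetical 16-sample scan line, using an orthonormal discrete cosine transform (the transform family JPEG builds on). The signal, the helper names, and the choice to keep four coefficients are assumptions for illustration and do not come from the Rice system.

```python
import math

def dct(x):
    """Orthonormal DCT-II of a list of samples."""
    n = len(x)
    out = []
    for k in range(n):
        scale = math.sqrt((1 if k == 0 else 2) / n)
        out.append(scale * sum(
            x[i] * math.cos(math.pi * (i + 0.5) * k / n) for i in range(n)))
    return out

def idct(c):
    """Inverse of dct() (orthonormal DCT-III)."""
    n = len(c)
    return [
        sum(math.sqrt((1 if k == 0 else 2) / n) * c[k]
            * math.cos(math.pi * (i + 0.5) * k / n) for k in range(n))
        for i in range(n)
    ]

def keep_largest(coeffs, k):
    """Zero all but the k largest-magnitude coefficients."""
    keep = sorted(range(len(coeffs)), key=lambda j: abs(coeffs[j]), reverse=True)[:k]
    return [c if j in keep else 0.0 for j, c in enumerate(coeffs)]

# Hypothetical smooth brightness bump across 16 samples.
signal = [math.exp(-((i - 7.5) / 4.0) ** 2) for i in range(16)]

coeffs = dct(signal)
approx = idct(keep_largest(coeffs, 4))   # rebuild from 4 of 16 coefficients

error = math.sqrt(sum((a - s) ** 2 for a, s in zip(approx, signal)))
energy = math.sqrt(sum(s * s for s in signal))
```

For a smooth signal like this one, `error` is a small fraction of `energy` even though three-quarters of the coefficients were discarded. Compressive sensing exploits the same redundancy, but at capture time rather than after the fact.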

The concept is appealing because a single-pixel design reduces the required size, complexity, and cost of a photon detector array down to a single unit. It also makes it possible to use exotic detectors that would not work in conventional digital cameras. For example, it could include a photomultiplier tube or an avalanche photodiode for low-light imaging, layers of photodiodes sensitive to different light wavelengths for multimodal sensing, or a spectrometer for hyperspectral imaging. In addition, a single-pixel design collects considerably more light in each measurement than a pixel array, which significantly reduces distortion from sensor nonidealities like dark noise and read-out noise.

These multiplexing capabilities, combined with shutterless sensing and compressive sensing, could lead to new types of cameras that might, for example, allow motorists to see through fog or to better see objects on the road at night, and thus avoid crashing into them or driving over them. Ultimately, the concept redraws the boundaries of imaging. “What we have come to realize,” Baraniuk says, “is that digital measurements that we generate at a scene or in the world around us do not need to be exactly analogous with how we use a film or conventional digital camera.”

There also is growing interest in engineering cameras that can see through solid objects. Researchers at Duke University, for example, have developed a camera that detects and records microwave signals. The device uses a one-dimensional aperture constructed from copper-based metamaterial to capture data that it sends to a computer, which constructs an actual image. Among other things, the device could prove valuable to law enforcement agencies; for example, passengers at airports could simply walk past the device at a security checkpoint while it scans for weapons and explosives. There would no longer be a need for long security queues.

Scientists are peering into other concepts that are equally mind-bending. For example, MIT associate professor Ramesh Raskar and a group of researchers are exploring the emerging field of femto-photography; their goal is to build a camera that can see around corners and peer beyond the line of sight. Such a device could provide access to dangerous and inaccessible locations, including mines, contaminated sites, and the interiors of certain machinery. Raskar describes the method as using “echoes of light” to capture information about the overall environment.

The concept, which the group has already tested successfully, uses a laser light burst directed at a wall or object. The camera records images every 2 picoseconds, the time it takes light to travel 0.6 millimeters. By measuring the time it takes for scattered photons to reach a camera, and repeating the process over 50 femtoseconds (50 quadrillionths of a second), it is possible to construct a view of the hidden scene. The system relies on a sophisticated algorithm to decode photon patterns. At present, the entire process takes several minutes, though researchers hope to reduce the imaging time to less than 10 seconds.
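
The 0.6-millimeter figure follows directly from the speed of light. A quick check, with the function and variable names chosen here purely for illustration:

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the meter

def light_travel_mm(seconds):
    """Distance light covers in a vacuum during `seconds`, in millimeters."""
    return SPEED_OF_LIGHT_M_PER_S * seconds * 1000

frame_interval_s = 2e-12                          # 2 picoseconds between frames
distance_mm = light_travel_mm(frame_interval_s)   # roughly 0.6 mm
```

Each frame therefore separates photons whose path lengths differ by about 0.6 millimeters, which is what allows arrival times to be converted into geometry.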

Baraniuk believes researchers will overcome many existing hurdles—particularly surrounding signal processing and computational analysis—over the next decade. They have already taken a giant step in that direction by constructing algorithms that sidestep conventional signal processing and instead mine big data. Over the next few years, as imaging systems and computers advance, once-abstract and seemingly unachievable photographic methods will become reality. One company, InView, has already begun to introduce cameras that use advanced imaging and compressive sensing techniques.

Werner says computational imagery increasingly will tie in augmented reality. He believes computational imaging and sensing will also meld with 3D cameras, night vision, adaptive resolution technology, holographic displays, and negative index material refraction. As hardware gets “faster and cheaper and algorithms become more sophisticated,” next-generation cameras and image-capture devices will also connect with social networks and provide new ways for people to view and share the surrounding environment.

“We are on the verge of remarkable breakthroughs in the way we think about photography and use computational optics,” Baraniuk concludes. In fact, “At some point, the end result might not be an actual image. The camera might make inferences and decisions based on certain parameters, including where a person is located in a room or the overall pattern of cars on a network of roads. We are moving into a world where there will be lots of different ways to sense what is taking place around us.”

Further Reading

Huang, G., Jiang, H., Matthews, K., Wilford, P.

Lensless Imaging by Compressive Sensing, IEEE International Conference on Image Processing, ICIP 2013, Paper #2393, May 2013. http://arxiv.org/abs/1305.7181

Katz, O., Bromberg, Y., Silberberg, Y.

Compressive Ghost Imaging, Dept. of Physics of Complex Systems, The Weizmann Institute of Science, Rehovot, Israel. http://arxiv.org/pdf/0905.0321v2.pdf

Baraniuk, R.G.

More is Less: Signal Processing and the Data Deluge, Science, Data Collections Booklet, 2011. http://www.ncbi.nlm.nih.gov/pubmed/21311012

Velten, A., Willwacher, T., Gupta, O., Veeraraghavan, A., Bawendi, M.G., Raskar, R.

Recovering three-dimensional shape around a corner using ultrafast time-of-flight imaging, Nature Communications 3, Article number: 745, Published 20 March 2012. http://www.nature.com/ncomms/journal/v3/n3/full/ncomms1747.html

Figures

Figure. At top, a diagram of how Bell Labs’ lensless camera works: its single-pixel sensor is arrayed behind an aperture assembly that can create a matrix of apertures of varying opacity, so multiple measurements of light data can be conducted at once. Bottom, the lensless camera (black box) undergoes testing.
