Over the past five years, machine learning has blossomed from a promising but immature technology into one that can achieve close to human-level performance on a wide array of tasks. In the near future, it is likely to be incorporated into an increasing number of technologies that directly impact society, from self-driving cars to virtual assistants to facial-recognition software.

Yet machine learning also offers brand-new opportunities for hackers. Malicious inputs specially crafted by an adversary can “poison” a machine learning algorithm during its training period, or dupe it after it has been trained. While the creators of a machine learning algorithm usually benchmark its average performance carefully, it is unusual for them to consider how it performs against adversarial inputs, security researchers say.

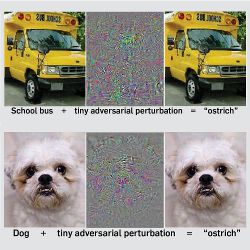

The emerging field of adversarial machine learning is exploring these vulnerabilities. In the past few years, researchers have figured out, for example, how to make tiny, imperceptible changes to an image to fool vision processing systems into interpreting an image humans see as a school bus as an ostrich instead. Such deceptions often can be carried out with virtually no knowledge about the inner workings of the machine learning algorithm under attack.

Machine learning can be easy to fool, computer scientists warn. “We don’t want to wait until machine learning algorithms are being used on billions of devices, and then wait for people to mount attacks,” said Nicholas Carlini, a graduate student in adversarial machine learning at the University of California, Berkeley, who has crafted audio files that sound like white noise to humans, but like commands to speech recognition algorithms. “We need to think of the attacks as early as possible.”

Attacking the Black Box

Adversarial machine learning has been studied for more than a decade in a few security-related settings, such as spam filtering and malware detection. However, the advent of deep neural networks has greatly expanded the scope both of the potential applications of machine learning and the attacks that can be carried out upon it.

Until very recently, it did not make much sense to study how to trick a neural network, said Ian Goodfellow, an adversarial machine learning expert at OpenAI, a research institute in San Francisco. “Five years ago, if I said ‘hey, I found an example that my neural network misclassifies,’ that would be the rule, not the exception,” he said. “Only recently has machine learning become accurate enough for it to be interesting when it’s inaccurate.”

A paper posted online in 2013 launched the modern wave of adversarial machine learning research by showing, for three different image processing neural networks, how to create “adversarial examples”—images that, after tiny modifications to some of the pixels, fool the neural network into classifying them differently from the way humans see them. Subsequent papers have created many more such adversarial examples—for instance, one that forced a deep neural network to classify what humans see as a “stop” sign instead as a “yield” sign.

While it is not clear whether such examples could be used in the real world to attack self-driving cars, “it’s important for the designers of self-driving cars to understand that one subsystem might totally fail because of one adversarial example,” said Goodfellow, one of the authors of the 2013 paper. “It highlights the importance of using several redundant safety systems, and making sure an attacker can’t compromise them all at the same time.”

The reason deep neural networks can be fooled by adversarial examples, Goodfellow said, is that while these complex networks are made up of many layers of processors, each individual layer essentially uses a piecewise linear function to transform the input. This linearity makes the model prone to overconfidence about its inferences, he said.

“If you find a direction to move in that makes the output more likely to say the image is a cat, then you can keep moving in the same direction for a long time, and the output will be saying more and more strongly that it’s a cat,” Goodfellow said. “This says that neural networks can extrapolate in really extreme ways.” Subtle adjustments to pixels that nudge an image along this “cat” direction can make the neural network mistake the image for a cat, even if to a human being, the altered image looks indistinguishable from the original.

“Only recently has machine learning become accurate enough for it to be interesting when it’s inaccurate.”

At the same time, linearity does not explain all the vulnerabilities of machine learning algorithms to adversarial examples. Last year, for instance, three researchers at the University of California, Berkeley—Alex Kantchelian, Doug Tygar, and Anthony Joseph—showed a highly nonlinear machine learning model called “boosted trees” is also highly susceptible to adversarial examples. “It’s easy to design evasions for them,” Kantchelian said.

The earliest adversarial examples were concocted by researchers who had full access to the machine learning model under attack. Yet even with those first examples, researchers started noticing something strange: examples designed to fool one machine learning algorithm often fooled other machine learning algorithms, too.

That means an attacker does not necessarily need access to a machine learning model’s architecture or training data to attack it. As long as the attacker can query the model to see how it classifies various data, he or she can use that data to train a different model, build adversarial examples for that model, then use those to trick the original model.

Researchers do not fully understand why adversarial examples transfer from one model to another, but they have confirmed the phenomenon in increasingly broad settings. In a paper posted online in May, Goodfellow, together with Nicolas Papernot and Patrick McDaniel of Pennsylvania State University, showed that adversarial examples transfer across five of the most commonly used types of machine learning algorithms: neural networks, logistic regression, support vector machines, decision trees, and nearest neighbors.

The team carried out “black box” attacks—with no knowledge of the model—on classifiers hosted by Amazon and Google. They found after only 800 queries to each classifier, they could create adversarial examples that fooled the two models 96% and 89% of the time, respectively.

Not every adversarial example crafted on one machine learning model will transfer to a different target, Papernot said, but “for an adversary, sometimes just a small success rate is enough.”

Getting Ready for Prime Time

Human vision systems have adversarial examples of our own, more commonly known as optical illusions. For the most part, though, people process visual data remarkably effectively. As computer vision systems approach human-level performance on particular tasks, adversarial examples offer a new way to benchmark performance, besides measuring how well an algorithm performs on typical inputs. Adversarial inputs “help find the flaws in neural networks that do really well on the more traditional kinds of benchmarks,” Goodfellow said.

“In a perfect world, what I would like to see in papers is, ‘Here is a new machine learning algorithm, and here is the standard benchmark for how it does on accuracy, and here is the standard benchmark on how it performs against an adversary,” Carlini said. Adversarial machine learning experts have some leads on what such a benchmark should look like, Papernot said, but no such benchmark has been established yet.

Software developers have a history of adding security to their products after the fact rather than integrating it into the development phase, Carlini said, even though that approach makes it easy to miss vulnerabilities. Now, he warned, the machine learning community is poised to make the same mistake: machine learning is a huge field, but only a tiny slice of the community is focused on security. “We should be developing security in from the start,” he said. “It shouldn’t be an afterthought.”

The good news is that adversarial examples do not just offer a way to benchmark how vulnerable a machine learning model is; they also can be used to make the model more robust against an adversary and, in some cases, even improve its overall accuracy.

Some researchers are making machine learning algorithms more robust by essentially “vaccinating” them: adding adversarial examples, correctly labeled, into the training data. In a 2014 paper, Goodfellow and two colleagues demonstrated in a classification task involving handwritten numbers that this kind of training not only made it harder to fool a neural network, but even brought down its error rate on non-adversarial inputs. Kantchelian, Tygar, and Joseph have shown a similar vaccination process can greatly improve the robustness of boosted trees.

Most of the research on adversarial machine learning has focused on “supervised” learning, in which the algorithm learns from labeled data. Adversarial training offers a potential way for machine learning algorithms to learn from unlabeled data—an exciting prospect, Goodfellow said, since labeled data is expensive and time-consuming to create. In a paper posted online in May, Takeru Miyato of Kyoto University—along with Goodfellow and Andrew Dai of Google Brain—was able to improve the performance of a movie review classifier by taking unlabeled reviews, creating adversarial versions of them, and then teaching the classifier to group those reviews in the same category. With the plethora of texts available on the Internet, “you can get as many examples for unlabeled learning as you want,” Goodfellow said.

Meanwhile, in another paper published in May, Papernot, McDaniel, and other researchers detail the creation of another defense against adversarial examples, called “defensive distillation.” This approach uses a neural network to label images with probability vectors instead of single labels, and then trains a new neural network using the probability vector labels; this more nuanced training makes the second neural network less prone to over-fitting. Fooling such a neural network, the researchers showed, required eight times as much distortion to the image as before the distillation.

The work done so far is just a start, Tygar said. “We haven’t even begun to understand all the potential different environments in which you might have an attack” on machine learning. The field of adversarial machine learning is full of opportunities, he said. “The area is ready to move.”

Although Tygar thinks it is a near certainty we will start seeing more attacks on machine learning as its use becomes prevalent, it would be a mistake, he said, to conclude machine learning is too risky to be used in adversarial settings. Rather, he said, the question should be, “How can we strengthen machine learning so it is ready for prime time?”

Goodfellow, I., Shlens, J., and Szegedy, C.

Explaining and Harnessing Adversarial Examples http://arxiv.org/pdf/1412.6572v3.pdf

Kantchelian, A., Tygar, J. D., and Joseph, A.

Evasion and Hardening of Tree Ensemble Classifiers http://arxiv.org/pdf/1509.07892.pdf

Miyato, T., Dai, A., and Goodfellow, I

Virtual Adversarial Training for Semi-Supervised Text Classification http://arxiv.org/pdf/1605.07725v1.pdf

Papernot, N., McDaniel, P., Wu, X., Jha, X., and Swami, A.

Distillation as a Defense to Adversarial Perturbations against Deep Neural Networks Proceedings of the 37th IEEE Symposium on Security and Privacy, May 2016.

Papernot, N., McDaniel, P., and Goodfellow, I.

Transferability in Machine Learning: from Phenomena to Black-Box Attacks using Adversarial Samples http://arxiv.org/pdf/1605.07277v1.pdf

Szegedy, C., Zaremba, W., Sutskever, I., Bruna, J., Erhan, D., Goodfellow, I., and Fergus, R.

Intriguing Properties of Neural Networks https://arxiv.org/pdf/1312.6199v4.pdf

Join the Discussion (0)

Become a Member or Sign In to Post a Comment