The idea behind brain-computer interfaces (BCIs) is nothing new. BCIs are devices that enable users to interact with computers by means of brain activity. Research on BCIs began in the 1970s, and BCI neuroprosthetic applications advanced rapidly in the 1990s, helping to restore people's damaged sight, hearing, and movement. Today's BCIs are designed primarily to augment, assist, or repair sensory-motor or human cognitive functions.

While BCIs are still in a rudimentary state, a few new developments may portend things to come.

For instance, BrainNet is a direct brain-to-brain interface that allows three people to work together to solve a problem using only their minds.

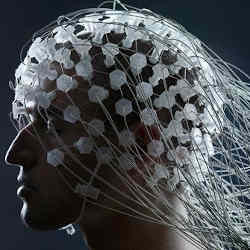

In another example, researchers at Carnegie Mellon University created the first mind-controlled robotic arm to work without an invasive brain implant. The user wears a cap that uses electroencephalography (EEG, a way of reading brain waves), and the arm can continuously track and follow a computer cursor using just the power of thought.

"At its most basic, a brain-computer interface, sometimes referred to as a brain-machine interface, is an interaction and control channel that does not depend on the natural pathways of the central nervous system," explains José del R. Millán, a professor at the École Polytechnique Fédérale de Lausanne (EPFL) in Switzerland.

"Whenever I move my hand," Millán says, "there is the sense of perception, so we know if the move was accomplished, and the degree of accomplishment." According to Millán, the intent of the subject has to be derived directly from analysis of the subject's brain activity, and there needs to be a response or completion, in order to understand whether or not the intended action has been accomplished, and how.

Millán points out that BCI alters brain activity by requiring users to learn to modify their brain activity patterns, as opposed to brain stimulation, which modifies brain activity (upregulating or downregulating certain brain rhythms) by delivering small electrical currents, magnetic pulses, or ultrasound waves to brain tissue.

He feels non-invasive technologies need the kind of great advances already seen in invasive approaches: new materials, interfaces, and methods that make non-invasive interfaces easy to set up and use in daily life. Eventually, Millán anticipates a future where BCIs will detect our cognitive states to empower our intelligent devices, and make smart devices adapt to us, instead of us to them.

"This will make the new cognitive era that is coming really attune to people, rather than detaching people from technology," Millán says.

DARPA

One organization that has been at the forefront of BCI research since the 1970s is the Defense Advanced Research Projects Agency (DARPA), which is responsible for the development of emerging technologies for the U.S. Department of Defense.

In early 2018, DARPA launched the Next-Generation Nonsurgical Neurotechnology (N3) program, which seeks to achieve high levels of brain-system communications without surgery. Says Al Emondi, N3 program manager, "It is really about bringing all new technology to the BCI problem."

Emondi points out that when you look back at all the success DARPA has had in the field of brain-computer interfaces, one limitation is that all of the technology is invasive, requires surgical implantation, and is available only to wounded warriors. The question Emondi is now asking is whether the technology has advanced enough that we might be able to interface the brains of the able-bodied with computers using nonsurgical approaches.

Today, there are different types of brain stimulation, but they are not very specific, according to Emondi.

"The resolution in which you can interact with those neurons is quite large, so the question became if it would be possible to interoperate with the brain in smaller scales, even down to 50 microns," Emondi explains. "We looked around and saw there is a lot of work going on in four modalities: ultrasound, light, magnetic fields, and electric fields."

He says there have been many advances in these approaches in the past few years, so "We felt it was probably time to see whether or not we could start interoperating with the brain in a non-invasive way, and still get the resolution we need. That is ultimately what we are after."

In May, DARPA awarded funding to six organizations to support the N3 program:

- Battelle Memorial Institute

- Carnegie Mellon University

- Johns Hopkins University Applied Physics Laboratory

- Palo Alto Research Center (PARC)

- Rice University, and

- Teledyne Scientific.

These groups of researchers will pursue a mix of approaches and modalities to develop high-resolution, bi-directional wearable BCIs. Applications that might be enabled by the research include control of active cyberdefense systems, control of swarms of unmanned aerial vehicles, and teaming with computer systems to multitask during complex missions.

"Imagine a cyber-network sends feedback directly into the brain in the form of a haptic response, maybe perceived as a sensory stimulation on your arm or a signal that is actually invoked in the somatosensory cortex itself, that the network is being attacked," Emondi says. This could provide totally new ways of interoperating with military systems that involve multiple modalities for direct neural connections. This could not be explored previously, because the available technologies were all invasive (requiring surgery and implants).

"If we can figure out how to do this in a non-invasive way, it really opens up the door for further exploration," Emondi says.

Neuralink

Technology entrepreneur Elon Musk is also active in the BCI field through his start-up Neuralink, which is developing ultra-high-bandwidth BCIs to connect humans and computers.

In an interview with the news outlet Axios, Musk said Neuralink's mission is to build a hard drive for the human brain, with the long-term aspiration of achieving a symbiosis with artificial intelligence (AI). Musk says his new technology will be able to seamlessly combine humans with computers, invasively, through an "electrode-to-neuron interface at a micro level," and "a chip and a bunch of tiny wires" that will be "implanted in your skull." Musk believes this is attainable "probably on the order of a decade."

Bloomberg reported earlier this year that some scientists associated with Neuralink had outlined, in an unpublished academic paper, a way to rapidly implant electrical wiring into the brains of rats. Described as a "sewing machine" for the brain, the method essentially embeds flexible electrodes into brain tissue. Bloomberg writes this could be a path forward to monitoring, and potentially stimulating, brain activity with minimal cranial harm, which would allow Neuralink to build a device with artificial intelligence that people could access with their thoughts.

Brain/Cloud Interface

The results of a study published earlier this year in Frontiers in Neuroscience suggest that BCIs, like much else, may eventually migrate to the cloud.

The study, led by researchers at the University of California, Berkeley and the Institute for Molecular Manufacturing, reports that advances in nanotechnology, nanomedicine, AI, and computation will lead to the development of what the researchers referred to as a "Brain/Cloud Interface," or B/CI for short.

B/CI will connect neurons and synapses in the brain to cloud-computing networks in real time, giving people instant access to vast knowledge and computing power via thought alone, according to the study. The approach, dubbed "neural nanorobotics" by the researchers, involves the use of implanted nanorobots that are connected to a network in real time. These "Matrix"-style downloads could be realized in as little as 20 to 30 years.

"These devices would navigate the human vasculature, cross the blood-brain barrier, and precisely autoposition themselves among, or even within, brain cells," said Robert Freitas, Jr., the study's senior author. "They would then wirelessly transmit encoded information to and from a cloud-based supercomputer network for real-time brain-state monitoring and data extraction."

According to a co-author of the report, Nuno Martins, a professor of electrical and computer engineering at the University of Maryland, "A human B/CI system mediated by neural nanorobotics could empower individuals with instantaneous access to all cumulative human knowledge available in the cloud, while significantly improving human learning capacities and intelligence."

While the authors estimate that today's supercomputers are fast enough to handle the neural data for B/CI, other barriers exist.

"This challenge includes not only finding the bandwidth for global data transmission," says Martins, "but also how to enable data exchange with neurons via tiny devices embedded deep in the brain." One proposal is the use of "magnetoelectric nanoparticles" to effectively amplify communication between neurons and the cloud.

While neural nanorobotics will be in development for decades to come, it is a strong indicator of where this technology likely is headed.

Internet of Minds

As neuro-technologies advance, we can soon expect the development of the "Internet of Minds" (IoM), in which computing devices are neuro-controlled, says Michael Smith of the Center for Neural Engineering & Prostheses at the University of California, Berkeley. Instead of controlling the Internet of Things with our smartphones via our fingers, Smith suggests in the future we will control the IoT with our smartphones via BCI wearables (and AI, which will also play a major role).

Smith says the earliest stages of the IoM are likely to focus on devices that can decode motor responses and verbal commands directly from the brain. "Such devices would dramatically simplify our ability to interact with devices such as smartphones, self-driving cars, translation devices and so on," he says.

Soon thereafter, Smith says, IoM devices will likely be developed that can decode information that has not reached the level of conscious awareness or volitional control. This would enable entirely new applications such as self-driving cars that react instantly to dangers perceived by the occupants, or devices that convey emotional states.

According to Smith, these kinds of IoM technologies pose serious ethical challenges regarding privacy, and they open a potential side-channel for downloading information directly from the brain without either consent or awareness of the hack.

In the long term, technical advances will likely lead to full bi-directional communication between minds and machines. Such devices will bypass the limitations of our five senses, potentially increasing the bandwidth of the brain dramatically. However, writing information directly to the brain introduces even more serious ethical challenges, because that could fundamentally alter one's sense of agency and perception of the world.

At that point, says Smith, "The question is, will we control the phone, or will the phone control us?"

John Delaney is a freelance technology writer based in Manhattan, NY, USA.
