A host of different tasks—such as identifying the song in a database most similar to your favorite song, or the drug most likely to interact with a given molecule—have the same basic problem at their core: finding the point in a dataset that is closest to a given point. This “nearest neighbor” problem shows up all over the place in machine learning, pattern recognition, and data analysis, as well as many other fields.

Yet the nearest neighbor problem is not really a single problem. Instead, it has as many different manifestations as there are different notions of what it means for data points to be similar. In recent decades, computer scientists have devised efficient nearest neighbor algorithms for a handful of different definitions of similarity: the ordinary Euclidean distance between points, and a few other distance measures.

However, “every time you needed to work with a new space or distance measure, you would kind of have to start from scratch” in designing a nearest neighbor algorithm, said Rasmus Pagh, a computer scientist at the IT University of Copenhagen. “Each space required some kind of craftsmanship.”

Because distance measures are so varied, many computer scientists doubted these ad hoc methods would ever give way to a more general approach that could cover many different distance measures at once. Now, however, a team of five computer scientists has proven the doubters—who originally included themselves—were wrong.

In a pair of papers published last year (in the Proceedings of the ACM Symposium on Theory of Computing and the IEEE Annual Symposium on Foundations of Computer Science, respectively), the researchers set forth an efficient approximation algorithm for nearest neighbor search that covers a wide class of distance functions. Their algorithm finds, if not the very closest neighbor, then one that’s almost as close, which is good enough for many applications.

The distance functions covered by the new algorithm, called norms, “encompass the majority of interesting distance functions,” said Piotr Indyk, a computer scientist at the Massachusetts Institute of Technology.

The new algorithm is a big leap forward, Pagh said, who added, “I wouldn’t have guessed such a general result was possible.”

An Unbelievable Tangle

Four of the paper’s five authors—Alexandr Andoni and Erik Waingarten of Columbia University, Aleksandar Nikolov of the University of Toronto and Ilya Razenshteyn of Microsoft Research Redmond—originally set out to prove the opposite of what they finally proved. Namely, they tried to show there are some normed spaces, and some datasets within these spaces, for which no nearest neighbor algorithm can be significantly faster than the laborious process of comparing a given point to every single dataset point to find the closest one.

It seemed plausible to the four researchers that some such norms should exist, because norms are extremely diverse. A norm is simply a distance function in ordinary space (of any dimension) that has the property that whenever you scale the coordinates of two points by a given factor, the distance between them scales by the same factor. Ordinary Euclidean distance is a norm, and so is “Manhattan” distance, which measures the number of city blocks between two points.

But there are many other norms. In fact, given any convex shape that is symmetric around the origin, there is some corresponding norm for which that shape is the “unit ball”—the ball of radius 1 around the origin. In high-dimensional spaces, the assortment of different norms “is a wild world, but also very expressive,” said Assaf Naor, a mathematician at Princeton University and the new paper’s fifth author.

“There’s a lot of freedom in designing a normed space,” Andoni said. Because of this, he, Nikolov, Razenshteyn, and Waingarten believed at first that it should be possible to cook up some normed space that contains an “expander graph”—an object that is nearest neighbor search’s worst enemy.

An expander graph is a network (a collection of nodes and edges) that has a counterintuitive combination of sparseness and connectivity. Each node in an expander connects to relatively few other nodes; nevertheless, there’s no way to separate the graph into reasonably large chunks without cutting through many edges.

“Somehow globally it creates an unbelievable tangle that can never be separated,” Naor said. “It’s completely nonobvious that such objects even exist.”

Yet they do exist, and since every graph comes with a natural notion of distance—simply declare the length of each edge to be 1—it makes sense to talk about the nearest neighbor problem in the context of graphs. Expander graphs, because of their connectivity patterns, defy the kind of data-structuring approaches at the heart of nearest neighbor algorithms.

Expander graphs, because of their connectivity patterns, defy the kind of data-structuring approaches at the heart of nearest neighbor algorithms.

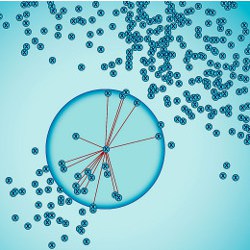

Computer scientists designing a nearest neighbor algorithm generally start by trying to partition the dataset into sections in such a way that points in different sections tend to be far from each other. That way, once you’ve decided that your chosen point belongs in a particular section, you can feel confident that its nearest neighbor—or at least an approximate nearest neighbor—lives within that section, too.

However, in an expander graph, it’s impossible to carry out an effective partition, since all but the most lopsided partitions are going to separate many points that are close to each other. Andoni, Nikolov, Razenshteyn, and Waingarten were able to show that there can be no efficient nearest neighbor algorithm for an expander graph.

It should be possible, the four researchers conjectured in a 2016 paper, to extend this result to the realm of norms, by creating an appropriate normed space that contains an expander graph. “If you can do that, then this tangle sitting inside the norm will fool any nearest neighbor data structure,” Naor said.

The researchers looked for some normed space that contains an expander. “But all our attempts failed,” Andoni said. “In retrospect, it was for good reason.”

A General Framework

When Naor saw the four researchers’ paper, he realized he could explain why their attempts had failed. A body of mathematics he had been developing for over a decade for other reasons had the power to settle the researchers’ conjecture—but with the opposite answer from what they had expected. Naor wrote a paper proving that expanders cannot be embedded in any reasonable way in normed spaces (except for trivial embeddings that do not create obstacles to efficient nearest neighbor search).

His paper, Naor said, “revived hope that maybe the community was wrong all this time” about whether there could be an efficient general method for nearest neighbor search that would cover all normed spaces. Naor teamed up with the other four researchers, and they came up with a method for organizing datasets inside any normed space so that finding an approximate nearest neighbor is a quick process.

Roughly speaking, their method starts by converting the dataset into a graph, by connecting every close pair of points with an edge. Naor’s result tells us this graph cannot be an expander, so one of two things must be true: either the graph contains some large cluster of highly-connected points, or it can be partitioned into two sets with few edges crossing from one set to the other.

In the former case, since the cluster points are all close to each other, you can collapse them all down to a single point without losing much information. In the latter case, the partition gives you a way to greatly narrow down your search. Either way, you’ve simplified the set of points you need to consider. Next, you repeat this process on the smaller graphs that you obtained by collapsing and partitioning, and keep doing so until you’ve collapsed and partitioned the dataset down to single points.

This recursive process creates a tree-like data structure. Then, when you want to find the (approximate) nearest neighbor for a given point, you can simply follow the tree downward to quickly obtain your answer.

“Now we have a method saying that whatever issue humanity may be faced with in the future, that uses some new norm I can’t even imagine, we have an off-the-shelf way to create a nearest neighbor data structure,” Naor said.

Significant further work will be needed to make the algorithm practical—improving the approximation factor, for instance, and making the tree structure faster to construct. Researchers also plan to examine whether the result can be extended to some distance functions that are not norms, such as the “edit distance,” which measures how many insertions, deletions, and substitutions it takes to convert one string of DNA into another.

Already, though, the new algorithm offers a path forward for norms that had no fast nearest neighbor method, such as the “nuclear” norm on matrices, which played a role in the Netflix Prize competition (an open competition approximately a decade ago to find the best algorithm for predicting user ratings of movies).

The new algorithm offers a path forward for norms that had no fast nearest neighbor method, such as the “nuclear” norm on matrices.

“This result is the first time that a general framework for high-dimensional spaces has been developed,” Indyk said. “Now that we have a framework, we can do lots of things with it.”

And the result opens up broader vistas of discovery about norms. “The world of norms is very complicated,” Naor said. “But the main picture is, we have more structure than we thought. I’m definitely intending to use this structure for more.”

Andoni, A., Naor, A., Nikolov, A, Razenshteyn, I., and Waingarten, E.

Data-dependent hashing via nonlinear spectral gaps, Proceedings of the 50th Annual ACM SIGACT Symposium on Theory of Computing, June 25–29, 2018, pp. 787–800. https://dl.acm.org/citation.cfm?id=3188846

Andoni, A., Naor, A., Nikolov, A, Razenshteyn, I., and Waingarten, E.

Hölder Homeomorphisms and Approximate Nearest Neighbors, Proceedings of the 2018 IEEE 59th Annual Symposium on Foundations of Computer Science, October 7–9, 2018, pp. 159–169. http://ieee-focs.org/FOCS-2018-Papers/pdfs/59f159.pdf

Andoni, A., and Indyk, P.

Near-optimal Hashing Algorithms for Approximate Nearest Neighbor in High Dimensions. Communications of the ACM, January 2008, Vol. 51, No. 1, pp. 117–122. https://dl.acm.org/citation.cfm?id=1327494

Join the Discussion (0)

Become a Member or Sign In to Post a Comment