The sheer size and complexity of today’s generative pretrained transformer (GPT) models is nothing less than astounding. OpenAI’s GPT-3, for example, possesses somewhere in the neighborhood of 175 billion parameters, and there is speculation GPT-4 could have as many as 10 trillion parameters.a

All of this introduces enormous overhead in terms of required cloud resources, including compute cycles and energy consumption. At the moment, the computer power required to train state-of-the-art artificial intelligence (AI) models is rising at a rate of 15x every two years.b The cost of training a large GPT model can run into the millions of dollars.c Retraining a model to fit onto a device like a laptop or smartphone can push the price tag up considerably more.

As a result, there is a growing focus on shrinking GPT models without losing critical attributes. In many cases, the original parameters required to build the model are no longer required once a finished GPT model exists. So, through a variety of techniques, including quantization, sparsity, pruning and other distillation methods, it is possible to shrink the model with negligible impact on performance.

In January 2023, a pair of researchers at the Institute of Science and Technology Austria (ISTA) pushed the boundaries of knowledge distillation and model compression into a new realm. Through a combination of quantization, pruning, and layer-wise distillation, they discovered a way to reduce the size of a GPT model by 50% in one shot, without any retraining and with minimal loss of accuracy. SparseGPT works efficiently at the scale of models with 10–100+ billion parameters.

The deep-learning method used to accomplish this, SparseGPT,d may pave the way to more practical forms of generative AI, including systems that are customized and optimized for particular users—say a travel agent, physician, or insurance adjustor—while also adapting to a person’s specific behavior and needs. Moreover, the ability to load even scaled-down GPT models on devices could usher in far greater security and privacy safeguards by keeping sensitive data out of the cloud.

“The ability to compress and run these powerful language models on endpoint devices introduces powerful capabilities,” says Dan Alistarh, professor at ISTA and co-author of the SparseGPT academic paper. “We’re working to find a way to ensure accurate and dependable results, rather than have a model collapse and become unusable. This is a significant step forward.”

Breaking the Model

The idea of compressing AI models is not particularly new. As early as the 1980s, researchers began to explore ways to streamline data. In much the same way the human brain can pare synapses and retrain itself, they learned it often is possible to purge unwanted and unnecessary parameters without witnessing any significant drop-off in reasoning and results. In the case of GPT models, the goal is to scale down a model but deliver essentially the same results.

“When you initially train a model, it’s important to have a large number of parameters. We have empirically seen that larger models are easier to train and better able to extract meaningful information from the data when they are overparameterized,” says Amir Gholami, a researcher of large language models and AI at the University of California, Berkeley. Yet once the training process is complete and convergence has taken place, “It’s no longer necessary to keep all those parameters to produce accurate results,” he says.

In fact, “Researchers have found that in some cases it’s possible to get the same type of performance from a large language model such as GPT that’s 100 times smaller than the original without degrading its capabilities,” Gholami says. The question is which parameters to remove—and how to go about the task in the most efficient and cost-effective way possible. It’s no small matter, because building and retraining a GPT model can involve thousands of GPU hours and costs can spiral into the millions of dollars.

Data scientists use several techniques to compress models like GPT-4 and Google’s Bard. In quantization, the precision used to represent the parameters is reduced from 16 bits to 4 bits; this reduces the model size by a factor of 4. As the model size shrinks, these models can fit in smaller numbers of GPUs, and their inference latency and energy demand drops. This approach helps avoid a fairly recent phenomena of workloads hitting a ‘memory wall.’ “This means that the bottleneck is no longer how fast you can perform computations, but how fast you can feed data into the system. So, fewer bytes is better,” Gholami says.

Another widely used technique is sparsity, which centers on removing unneeded values that do not impact the data. It could be considered quantization with zero bits. Structured sparsity involves removing entire groups of parameters, which makes implementation easier and often results in straightforward efficiency gains. The downside is that it sacrifices accuracy for speed, because it is difficult to remove large numbers of groups without negatively affecting the model. Unstructured sparsity removes redundant parameters without any constraint on the sparsity pattern. As a result, one can retain model accuracy even at ultra-high sparsity levels.

Data scientists use these approaches—and others such as pruning, which completely removes individual parameters—to continually reduce the memory and compute overhead of these models. The resulting distilled and compressed models operate faster, consume less energy, and in some cases, they even produce better results. As Gholami explains, “You end up with a smaller but more efficient AI framework.”

Learning the Language of AI

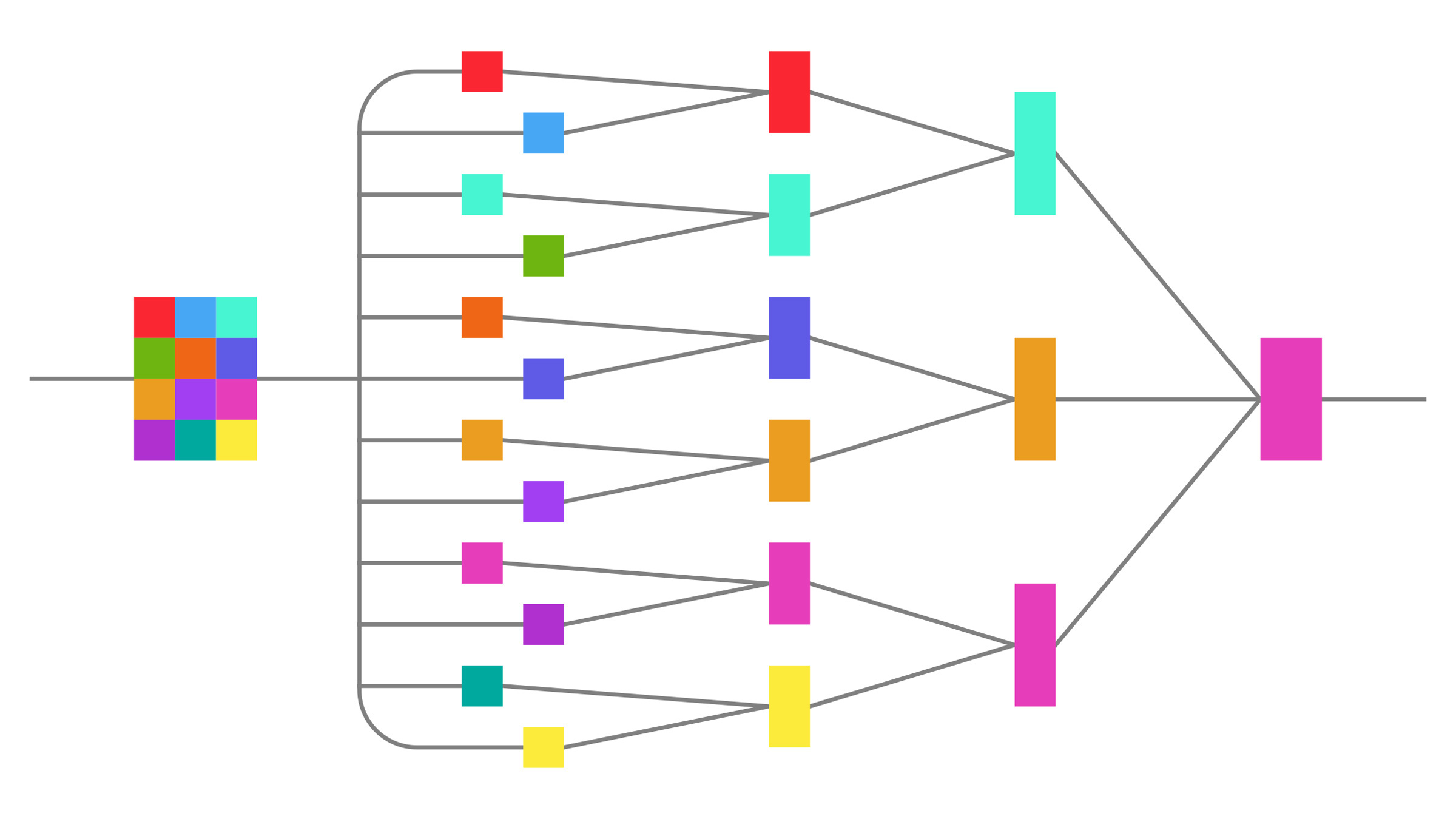

The approach data scientists use to distill and compress a GPT model requires a “teacher” network to train the “student” network. “The system learns to approximate a program that already exists. It maps to a function you can already compute,” says Christopher De Sa, an assistant professor in the Computing Science Department at Cornell University. “So, in the case of a neural network, you’re trying to build a model that has the same accuracy as an already existing neural network, but is smaller.”

Sparcity centers on removing unneeded values that do not impact the data. It could be considered quantization with zero bits.

A problem is that these frameworks often require an enormous investment in tuning and retraining. “They produce good, small models that display low loss and high accuracy. Additionally, the results aren’t necessarily representative of the larger model,” De Sa says. For many applications this change in some predictions is acceptable, since the accuracy level remains high. “However, if you care about something like privacy or security, you may discover that the larger network doesn’t achieve key requirements because you don’t make the same predictions as the original model,” he adds.

Scaling up quantization, pruning, and knowledge distillation methods also is a challenge, says Elias Frantar, a Ph.D. candidate at ISTA and co-author of the SparseGPT paper. For example, many of today’s GPT models are 1,000 times larger than only a few years ago—and they continue to grow at a furious rate. “This impacts the techniques that you use to distill a model. Compressing a model with hundreds of billions of parameters requires different thinking and different techniques,” he says.

So, when the researchers at ISTA launched the SparseGPT project, they adopted what Alistarh describes as a “Swiss Army Knife approach” by combining pruning, quantization, and distillation. The pair focused on approaching the challenge in a modular way, including compressing various layers of the network separately and then recombining all the pieces to produce a fully compressed model. While this method generated significant gains, it is not necessarily ideal.

“If you could optimize everything together, you would ultimately produce the best possible results,” Frantar says. “But since this isn’t possible today, the question becomes: ‘how can we get to the best possible results with the resources we are working with?'”

Lowering Noise, Raising Signals

SparseGPT may not be perfect, but the technique has pushed GPT model compression into new territory. Running on the largest open source models, OPT175B and BLOOM-176B, the SparseGPT algorithm chewed through its more than 175 billion parameters—approximately 320 gigabytes of data—in less than 4.5 hours, with up to 60% unstructured sparsity. There was a negligible increase in perplexity and, in the end, the researchers were able to remove upwards of 100 billion weights without any significant deterioration in performance or accuracy.

The algorithm relies on a clever approach. It succeeds by decomposing the task of compressing the entire model into separate, per-layer compression problems, each of which is an instance of sparse regression. It then tackles the subproblems by iteratively removing weights, while updating remaining weights to compensate for the error incurred during the removal process. The algorithm achieves further efficiency by freezing some weights in a pattern that maximizes compute resources required throughout the algorithm. The resulting accuracy and efficiency make it possible for the first time to tackle models with upwards of 100 billion parameters.

Remarkably, a single GPU identifies the data that is not necessary in the model, typically within a few hours, and presents the compressed model in a single shot and without any retraining. “One of the interesting things we discovered,” Alistarh says, “is that these large models are extremely robust and resistant to digital noise. Essentially, all the noise is filtered out as it passes through the model, so, you wind up with a network that is optimized for compression.”

This finding is good news for software developers and others who would like to build commercial applications. At the moment, various communities of hobbyists and hackers are finding ways to load smaller, not-always-licensed GPT models onto devices, including the Raspberry Pi, and Stanford University researchers found a way to build a chat GPT for less than US$600. However, the Stanford team terminated the so-called Alpaca chatbot in April 2023 due to “hosting costs and the inadequacies of our content filters,” while stating that it delivered “very similar performance” to OpenAI’s CPT-3.5.e

However, to arrive at the next level of knowledge distillation and compression, researchers must push quantization, pruning, fine tuning, and other techniques further. Alistarh believes throwing more compute power at the problem can help, but it also is necessary to explore different techniques, including breaking datasets into a larger number of subgroups, tweaking algorithms, and exploring sparsity weightings. This could lead to 90% or better compression rates, he says.

Outcomes Matter

At the moment, no one knows how much compression is possible while maintaining optimal performance on any given model, De Sa notes he and others continue to explore options and approaches. Researchers also say it is vital to proceed with caution. For example, changes to the model can mean that results may lack a clear semantic meaning, or they could lead to bewildering outcomes, including hallucinations that seem entirely valid. “We must focus on preserving the properties of the original model beyond accuracy,” De Sa says. “It’s possible to wind up with the same level or even a better level of accuracy, but have significantly different predictions and outcomes from the larger model.”

Another problem is people loading a sophisticated AI language model onto a device and using it for underhanded purposes, including bot farms, spamming, phishing, fake news, and other illicit activities. Alistarh acknowledges this is a legitimate concern, and the data science community must carefully examine the ethics involved with using a GPT model on a device. This has motivated many researchers to withhold publishing training parameters and other information, Gholami says. In the future, researchers and software companies will have to consider what capabilities are reasonable to place on a device, and what types of results and outcomes are unacceptable.

Nevertheless, SparseGPT and other methods that distill and compress large language models are here to stay. More efficient models will significantly change computing and the use of natural language AI in profound ways. “In addition to building more efficient models and saving energy, we can expect distillation and compression techniques will fuel the democratization of GPT models. This can put people in charge of their data and introduce new ways to interact with machines and each other,” De Sa says.

Frantar, E. and Alistarh, D.

SparseGPT: Massive Language Models Can be Accurately Pruned in One-Shot, ArXiv, Vol. abs/2301.00774, Jan. 2, 2023; https://arxiv.org/pdf/2301.00774.pdf

Yao, Z., Dong, Z., Zheng, Z., Gholami, A., Yu, J. Tan, E., Wang, L., Huang, Q., Wang, Y., Mahoney, M.W., and Keutzer, K.

HAWQ-V3: Dyadic Neural Network Quantization, Proceedings of the 38th International Conference on Machine Learning, PMLR 139, 2021; http://proceedings.mlr.press/v139/yao21a/yao21a.pdf

Polino, A. Pascanu, R., and Alistarh, D.

Model Compression via Distillation and Quantization, ArXiv, Vol., abs/1802.05668, Feb. 15, 2018; https://arxiv.org/abs/1802.05668

Chee, J., Renz, M., Damle, A., and De Sa, C.

Model Preserving Compression for Neural Networks, Advances in Neural Information Processing Systems, Oct. 31, 2022; https://openreview.net/forum?id=gtl9Hu2ndd

Cai, Y., Hua, W., Chen H., Suh, E,, De Sa, C., and Zhang, Z.

Structured Pruning is All You Need for Pruning CNNs at Initialization, arXiv:2203.02549, Mar. 4, 2022; https://arxiv.org/abs/2203.02549

Join the Discussion (0)

Become a Member or Sign In to Post a Comment