Some experienced hands in data analysis feel that the distinction between two kinds of data scientist needs to become less relevant. Investigational data scientists work on the leading edge of concepts using statistical tools such as the R programming environment. Operational data scientists have traditionally used general-purpose programming languages like C++ and Java to scale analytics to real-time, enterprise-level computational assets.

That sentiment is growing, especially as tools emerge that enable individual scientists to analyze ever-larger amounts of data. Though many, if not most, of these data practitioners are not considered software developers in the traditional sense, the data their analyses create is itself becoming an increasingly valuable resource for many others.

“It is increasingly important to bridge that gap, for a few reasons,” says Michael Franklin, director of the AMPLab, an open source innovation lab at the University of California at Berkeley. Franklin’s reasons for closing the gap include:

- Having a unified system for both speeds up discovery by “closing the loop” between exploration and operation.

- Having different systems introduces the potential for error: a model created in the “investigational” tool set may be translated incorrectly to the (different) operational system. Sometimes these errors can be quite subtle and go unnoticed.

- Increasingly, data scientists are responsible for both exploration and production.

The tools around many of the most innovative and promising data science initiatives are often open source resources that range from the R statistical programming language, widely used by individual researchers in the life sciences, physical sciences, and social sciences alike, to the enterprise-friendly Apache Spark cluster computing platform and its companion machine learning library developed at the AMPLab.

The attraction of open source tools might be ascribed to the academic culture in which much cutting-edge data science is being done, according to Stanford University marine biologist Luke Miller. Miller started using R when he began working at an institution that did not have a free site license for Matlab. His observations are shared by Scott Chamberlain, co-founder of rOpenSci, which creates open source scientific R packages (collections of R functions, data, and compiled code) that help reduce redundant coding.

“Academics have this culture of not wanting to have to pay for things,” Chamberlain says. “They want to use things that either the university has a license for, or they want it to be free. That’s the culture; love it or hate it, that’s the way it is.”

Maturing the R Ecosystem

While the free culture around R has allowed it to spread to many disciplines—it has narrowly outpaced Python in the past two O’Reilly data science tool surveys—it has also become rather unwieldy, according to Jim Herbsleb, a professor of computer science at Carnegie Mellon University.

“There’s a lot of duplication of effort out there, a lot of missed opportunities, where one scientist has developed a tool for him or herself, and with a few tweaks, or if it conformed to a particular standard or used a particular data format, it could be useful to a much wider community,” he says. “R is all over the world, on everybody’s laptops, so it’s really hard to get a sense of what’s happening out in the field. That’s what we’re trying to address.”

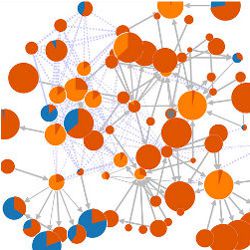

Herbsleb and post-doctoral researcher Chris Bogart have created the Scientific Software Network Map (http://scisoft-net-map.isri.cmu.edu/), essentially a meta-tool that allows interactive exploration of data showing which software tools have been used, how use is trending, which packages are used together to produce a result, and the publications that resulted from that use.

Herbsleb views the newly launched project, funded by a National Science Foundation grant, more as a reference point for R package developers who wish to see how end users are utilizing their work than for those seeking tools. “If developers know what other people need or want from a tool they are working on, they are often willing to do some extra work to make sure it works for everybody,” he says. “That’s another thing one could hope to get from the map: a better understanding of what community needs are by seeing how the tools are actually being used. Another use case is for communities of scientists who either already do, or would like to, start managing their software assets as a community.”

However, Larry Pace, author of several books on R, thinks Herbsleb’s network map could be exactly the kind of reference that neophyte R users, as well as developers, would find useful.

“I can see the various R packages I use on this map; it’s a really, really cool way to show this,” Pace says. “There are, even in R right now, tools that do what psychologists do, psychometric analyses and things like that. They’re just not well publicized, so people don’t know about them.”

For experienced end users like Miller, there appears to be a critical mass of mailing list exchanges, an index of domain-specific “task views” on the Comprehensive R Archive Network (CRAN) website (http://cran.r-project.org/), and dialogue within scientific communities, all of which facilitate reuse of code and overall expansion of the bodies of knowledge codified in R. This is what one might term the “operational” side of the worldwide availability of reproducible data.

“There are enough R users who have written very useful scripts that I can piggy-back onto and open these same data files and plunk through them,” he says. “Python in some ways may be a better way to do that, certainly in terms of time invested; a good Python programmer probably would have gotten it done faster than I did in R. We’re not doing wildly fancy programming here; we’re just opening data files and parsing them. But in terms of integrating into the rest of my data visualization and analysis workflow, there’s very little reason to step outside of R for that sort of task.”

User-Friendly ML Emerging

Developers of machine learning tools are also embracing non-traditional communities of data scientists, touting speed and ease of use rather than programming environment as selling points, though R interfaces are increasingly viewed as important features.

For some of these projects, survival seems assured through ample funding and acceptance by the open source infrastructure. Spark, for example, which boasts in-memory processing capabilities that can be 100 times faster than Hadoop MapReduce, was initially developed as part of a $10-million National Science Foundation award to the Berkeley AMPLab and was granted top-level status by the Apache Software Foundation in 2014. The developers of Spark (which includes the MLbase machine learning stack) cite rapid growth in the number of people trained on it; AMPLab co-director Ion Stoica estimates 2,000 people received training in 2014 and 5,000 will receive training in 2015. The first Spark Summit, held in December 2013, had 450 attendees, and a second, in June 2014, had 1,100.

“Today, Spark is the most active big data open source project in terms of number of contributors, in number of commits, number of lines of code—in whatever metric you are using, it’s several times more active than other open source projects, including Hadoop,” Stoica says.

To help attract data scientists who have not traditionally been users of machine learning at scale, the Spark team has developed features including:

- Spark’s MLlib machine learning library already ships with application programming interfaces (APIs) such as a pipelines API, which expresses the data pre-processing, feature extraction, model fitting, and validation stages as a sequence of dataset transformations. Each transformation takes an input dataset and outputs the transformed dataset, which becomes the input to the next stage.

- Spark’s developers have also been perfecting its R interface. SparkR was introduced in January 2014, and early versions supported a distributed list-like API that maps to Spark’s Resilient Distributed Dataset (RDD) API. More recently, says SparkR developer Shivaram Venkataraman, the team has come close to releasing a DataFrame API that will allow R users to apply the familiar data frame concept to large amounts of data. Venkataraman says the developers also have work in progress in SparkR that will allow R users to call Spark’s machine learning algorithms through the pipelines API. At a high level, Venkataraman says, the interface will allow R users to use specific columns of the DataFrame for machine learning and parameter tuning.

- Franklin also says the MLbase project, still under development, is going one step further to democratize machine learning over big data by allowing users to specify what they want to predict and then having the underlying system determine the best way to accomplish that using MLlib and the rest of the scalable Spark infrastructure.
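The pipeline idea described in the list above, where each transformation takes a dataset and hands its output to the next stage, can be sketched in a few lines of plain Python. This is only an illustration of the concept and not Spark's pipelines API; the stage functions, the `run_pipeline` helper, and the column names `x`, `y`, and `x_squared` are all hypothetical.

```python
from typing import Callable, Dict, List

Row = Dict[str, float]
Dataset = List[Row]
Stage = Callable[[Dataset], Dataset]


def drop_missing(rows: Dataset) -> Dataset:
    """Pre-processing stage: keep only rows that have both expected fields."""
    return [r for r in rows if "x" in r and "y" in r]


def add_feature(rows: Dataset) -> Dataset:
    """Feature-extraction stage: derive a new column from an existing one."""
    return [{**r, "x_squared": r["x"] ** 2} for r in rows]


def run_pipeline(stages: List[Stage], rows: Dataset) -> Dataset:
    """Feed the output dataset of each stage into the next stage."""
    for stage in stages:
        rows = stage(rows)
    return rows


# Hypothetical input: the second row is missing its "y" field.
raw = [{"x": 1.0, "y": 2.0}, {"x": 3.0}, {"x": 2.0, "y": 5.0}]
result = run_pipeline([drop_missing, add_feature], raw)
print(result)
```

Because every stage shares the same dataset-in, dataset-out shape, stages can be reordered, swapped, or tested on their own, which is the property that makes a pipeline abstraction attractive to users who are not systems programmers.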

A much smaller project than Spark, called mlpack, also targets those not experienced with machine learning. The project’s “primary maintainer,” Ryan Curtin, a Georgia Institute of Technology doctoral student, says the project, which launched its 1.0 version in December 2011, now includes five or six consistent developers, 10 to 12 occasional contributors, and perhaps 20 who may send in one contribution and then move on.


Curtin says mlpack appeals to nonexperts through a consistent API that provides default parameters that can be left unspecified. Additionally, a user could move from one method to another while expecting to interact with the new method in the same way. Expert users may use its native C++ environment to customize their work.
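As a rough illustration of what a consistent interface with optional defaults looks like in practice, the sketch below defines two small classifiers that are trained and queried in exactly the same way. It is not mlpack's API (mlpack is a C++ library); the class names, the `train` and `predict` methods, and the sample data are assumptions made for this example.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Sequence, Tuple

Point = Sequence[float]


def squared_distance(a: Point, b: Point) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


class NearestNeighbors:
    """Classify by majority vote among the k closest training points."""

    def __init__(self, k: int = 1) -> None:  # k has a default and may be omitted
        self.k = k
        self.data: List[Tuple[Point, str]] = []

    def train(self, points: List[Point], labels: List[str]) -> None:
        self.data = list(zip(points, labels))

    def predict(self, point: Point) -> str:
        nearest = sorted(self.data, key=lambda pl: squared_distance(pl[0], point))
        votes = Counter(label for _, label in nearest[: self.k])
        return votes.most_common(1)[0][0]


class NearestCentroid:
    """Classify by the closest per-label mean of the training points."""

    def __init__(self) -> None:
        self.centroids: Dict[str, List[float]] = {}

    def train(self, points: List[Point], labels: List[str]) -> None:
        groups = defaultdict(list)
        for point, label in zip(points, labels):
            groups[label].append(point)
        self.centroids = {
            label: [sum(col) / len(members) for col in zip(*members)]
            for label, members in groups.items()
        }

    def predict(self, point: Point) -> str:
        return min(
            self.centroids,
            key=lambda label: squared_distance(self.centroids[label], point),
        )


points = [(0.0, 0.0), (0.0, 1.0), (5.0, 5.0), (6.0, 5.0)]
labels = ["low", "low", "high", "high"]

# The calling code is identical for both methods.
predictions = []
for model in (NearestNeighbors(), NearestCentroid()):
    model.train(points, labels)
    predictions.append(model.predict((5.5, 4.0)))
print(predictions)
```

A non-expert can rely on the defaults and switch methods by changing one line, while an expert can pass `k` explicitly or subclass either model.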

Curtin also says that, though the target audiences of mlpack and Spark may differ, mlpack can be very useful for operational tasks in areas such as the burgeoning field of data-driven population health management. A data analyst for a public health department or healthcare delivery system, for example, could use one of mlpack’s clustering algorithms on a set of numerical values that include fields such as a person’s name and location (both mapped to a granular numerical format) and vital health data such as blood glucose and blood pressure, to predict where clinicians will have to deliver more intense care.

“There are a couple of clustering algorithms in mlpack you could use out of the box, like k-means and Gaussian Mixture Models, and there are enough flexible tools inside mlpack that if you really want to dig deep into it and write some C++, you can really fine-tune the clustering algorithm or write a new or modified one.”
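For readers unfamiliar with k-means, the sketch below shows the standard Lloyd's iteration in plain Python on a handful of invented health readings. It is not mlpack's implementation; the `k_means` function, the choice of the first k points as starting centroids (made here only so the run is deterministic), and the data values are assumptions for illustration. Real data would normally be scaled before clustering.

```python
from typing import List, Tuple

Point = Tuple[float, ...]


def squared_distance(a: Point, b: Point) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def k_means(
    points: List[Point], k: int, max_iterations: int = 100
) -> Tuple[List[Point], List[int]]:
    """Lloyd's algorithm: alternate assignment and centroid-update steps."""
    # Assumption: seed with the first k points so results are reproducible.
    centroids: List[Point] = [tuple(float(v) for v in p) for p in points[:k]]
    assignments: List[int] = []
    for _ in range(max_iterations):
        # Assignment step: attach each point to its nearest centroid.
        new_assignments = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        if new_assignments == assignments:
            break  # converged: no point changed cluster
        assignments = new_assignments
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:
                centroids[c] = tuple(
                    sum(col) / len(members) for col in zip(*members)
                )
    return centroids, assignments


# Hypothetical readings: (blood glucose in mg/dL, systolic pressure in mmHg).
readings = [(90, 115), (180, 150), (95, 120), (100, 118), (170, 145), (190, 155)]
centroids, assignments = k_means(readings, k=2)
print(assignments)
print(centroids)
```

In this toy data the second cluster groups the people with elevated glucose and pressure, which is the kind of grouping an analyst might use to anticipate where more intensive care will be needed.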

In a test of k-nearest neighbors, mlpack research shows benchmarks significantly faster than those of other open source platforms such as Shogun (which originally focused on large-scale kernel methods and bioinformatics) and Weka (a popular general-purpose machine learning platform with a graphical user interface that may appeal to those uncomfortable with command line interfaces). Even so, Curtin says, the prevailing vibe among the community is ultimately more cooperative than competitive.

“We all sort of have the same goal,” he says, “but there’s an understanding the Shogun guys built their own thing. Shogun focuses on kernel machines and support vector machines and classifiers of that ilk. mlpack focuses more on problems where you’re comparing points in some space, like nearest neighbor.”

That sense of community may prove paramount for small projects such as mlpack. While Curtin says the growth curve of mlpack’s downloads is becoming exponential, he has limited time to develop features that might spur growth even further among non-expert users, such as designing a GUI and automatic bindings for other languages.

Those things, in fact, may be for somebody else to do, says Curtin.

“My interest is less in seeing mlpack itself grow, although that’s nice,” he says. “I want to see the code get used more than anything else. That’s what brought me to open source in the first place: building things that are useful for people.”

Further Reading

Matloff, N.

The Art of R Programming: A Tour of Statistical Software Design, No Starch Press, San Francisco, CA, 2011.

Pace, L.

Beginning R: An Introduction to Statistical Programming, Apress, New York, NY, 2012.

Wickham, H.

The Split-Apply-Combine Strategy for Data Analysis, Journal of Statistical Software 40 (1), April 2011

Sparks, E., Talwalkar, A., Smith, V., Kottalam, J., Pan, X., Gonzalez, J., Franklin, M., Jordan, M.I., and Kraska, T.

MLI: An API for Distributed Machine Learning, International Conference on Data Mining, Dallas, TX, Dec. 2013

Curtin, R., Cline, J., Slagle, N.P., March, W., Ram, P., Mehta, N., and Gray, A.

MLPACK: A Scalable C++ Machine Learning Library, Journal of Machine Learning Research 14, March 2013
