Photonic computing has seen its share of research breakthroughs and deep research winters, much like artificial intelligence (AI). Now, with the resurgence of AI, the huge amount of energy that today's large neural-network models need when running on electronic computers is reawakening interest in uniting the two.

More than 30 years ago, during one of the booms in research into artificial neural networks, Demetri Psaltis and colleagues at the California Institute of Technology demonstrated how techniques from holography could perform rudimentary face recognition. The team members showed they could store as many as one billion weights for a two-layer neural network using the core elements from a liquid-crystal display. Similar spatial light modulators became the foundation of several attempts to commercialize optical computing technology, including those by U.K.-based startup Optalysys, which has focused in recent years on applying the technology to accelerating homomorphic encryption to support secure remote computing.

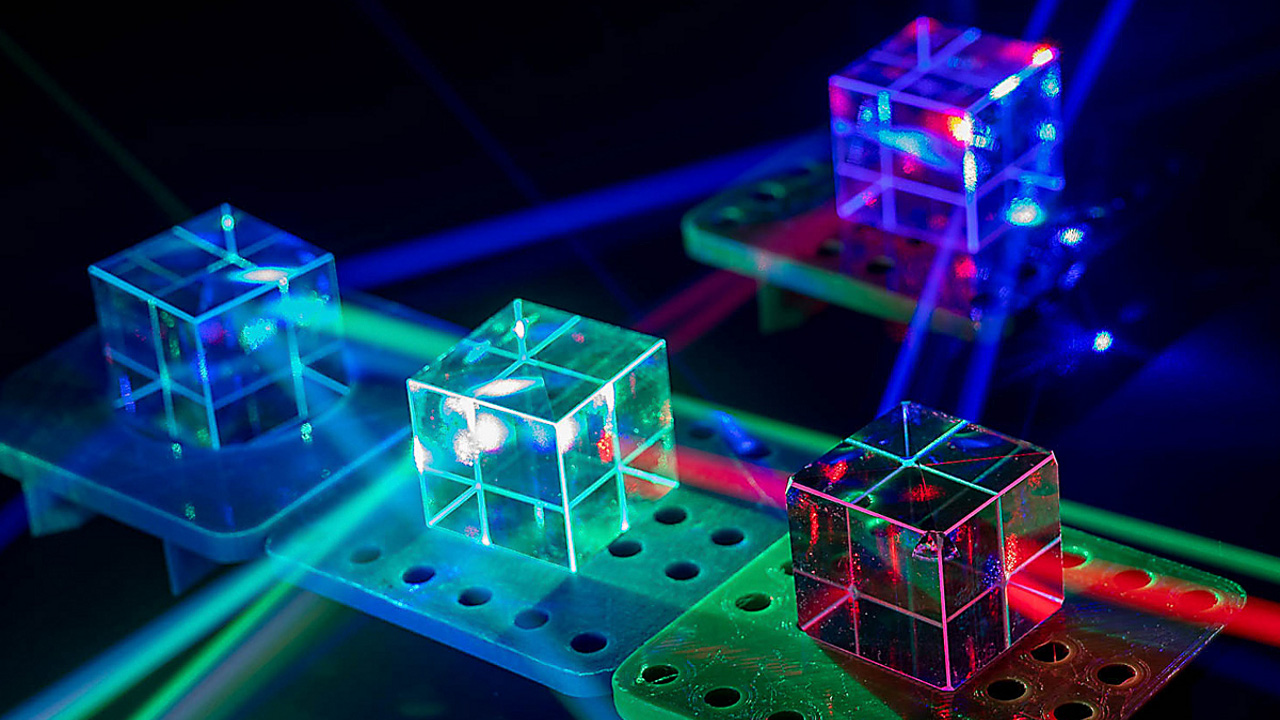

Though some groups are using spatial light modulators for AI, these devices represent just one category of optical computer suitable for the job. Researchers must also decide which form of neural network best suits optical computing. Some techniques focus on the matrix-arithmetic operations of mainstream deep learning pipelines, while others focus squarely on emulating the spike trains of biological brains.

What all the proposed systems have in common is the possibility that, by using photons to communicate and calculate, they could deliver major advantages in density and speed over systems based purely on electrical signaling. A 2020 study of inferencing based on matrix arithmetic by Mitchell Nahmias, now CTO of startup Luminous Computing, and colleagues at Princeton University argued that the theoretical efficiency of AI inferencing in the optical domain could far surpass that of conventional accelerators based on existing electronics-only architectures.

The key issue for machine learning is the amount of energy needed to move data around accelerators. Electronic accelerators often cache as much data as possible to reduce this overhead, and whether intermediate results or weights are held in the cache involves major trade-offs that depend on the model's structure. By contrast, the energy cost of delivering photons over distances larger than the span of a single chip is far lower than it is for electrical signaling.

A second potential advantage of photonic AI comes from the ease with which it can handle complex operations in the analog domain, though the power savings achievable here are less certain than for communications. Whereas matrix arithmetic relies on highly parallelized hardware circuits for performance in conventional systems, simply passing photons through an optical component such as a Mach-Zehnder interferometer (MZi) or micro-ring resonator will perform arithmetic requiring hundreds or even thousands of logic gates in a digital circuit.

In an MZi array, coherent beams of light pass through a succession of couplers and phase shifters. At each coupling point the beams interfere, and the phase-shifter settings determine how the light divides between the outputs; the cascade of these operations can be interpreted as a matrix multiplication. A 4×4 matrix operation requires just four inputs that feed into six coupling elements, with four output ports providing the result. The speed of the operations is limited only by the rate at which coherent pulses can be passed through the array.
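The six-element count for a 4×4 operation follows from the general rule that an N-port mesh needs N(N−1)/2 MZIs. The sketch below is a minimal, hedged model, not a device simulator: it assumes each MZI is a 50:50 coupler, an internal phase shifter (theta), and a second 50:50 coupler preceded by an external phase shifter (phi), and it assumes a rectangular mesh layout of the kind described by Clements et al. All function names are illustrative. It multiplies the six 2×2 transfer matrices into one 4×4 matrix and checks that it is unitary, which means light is only redistributed, never lost. General weight matrices are usually handled by factoring them (singular value decomposition) into two such meshes with attenuators between them.

```python
import cmath
import math
import random


def matmul(a, b):
    """Plain-Python product of two complex matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def identity(n):
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


def mzi(theta, phi):
    """2x2 transfer matrix of one MZI: external phase shifter (phi), then a
    50:50 coupler, an internal phase shifter (theta), and a second 50:50 coupler."""
    s = 1 / math.sqrt(2)
    coupler = [[s, 1j * s], [1j * s, s]]
    inner = [[cmath.exp(1j * theta), 0], [0, 1]]
    outer = [[cmath.exp(1j * phi), 0], [0, 1]]
    return matmul(coupler, matmul(inner, matmul(coupler, outer)))


def embed(t, m, n):
    """Place the 2x2 block t on waveguides m and m+1 of an n-waveguide circuit."""
    u = identity(n)
    for i in range(2):
        for j in range(2):
            u[m + i][m + j] = t[i][j]
    return u


def mesh_positions(n):
    """Upper waveguide index of each MZI in a rectangular mesh with n columns."""
    return [m for col in range(n) for m in range(col % 2, n - 1, 2)]


def mesh(n, phases):
    """Multiply the MZI transfer matrices, column by column, into one n x n matrix."""
    u = identity(n)
    for m, (theta, phi) in zip(mesh_positions(n), phases):
        u = matmul(embed(mzi(theta, phi), m, n), u)
    return u


# Usage: random phase settings for a 4x4 mesh.
random.seed(0)
N = 4
phases = [(random.uniform(0, 2 * math.pi), random.uniform(0, 2 * math.pi))
          for _ in mesh_positions(N)]
U = mesh(N, phases)
x = [1.0, 0.5, -0.25, 0.75]                                   # input-port amplitudes
y = [sum(U[i][j] * x[j] for j in range(N)) for i in range(N)]  # output-port amplitudes
print(len(phases))  # 6 MZIs for N = 4
```

Programming a specific matrix means choosing the twelve phase values; the mesh itself is fixed, which is why its speed depends only on how fast light can be pushed through it.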

In analog architectures, noise presents a significant hurdle. Work by numerous groups on accelerating inference operations has shown that deep neural networks can work successfully at an effective resolution of 4 bits, but hardware overhead and energy rise quickly as resolution increases. These effects may limit the practical energy advantage of photonic designs.
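The link between resolution and cost can be made concrete with a small, generic experiment (not a model of any photonic device): quantize the weights and inputs of a dot product to a given number of bits and measure the error, alongside the textbook rule that an ideal quantizer's signal-to-noise ratio grows by about 6.02 dB per bit. The uniform quantizer and the function names are assumptions for illustration. In an analog system, effective bits come from the signal-to-noise ratio, so each extra bit calls for roughly four times the signal power against a fixed noise floor.

```python
import math
import random


def quantize(v, bits, vmax=1.0):
    """Uniform quantizer: clip v to [-vmax, vmax] and snap it to one of 2**bits levels."""
    levels = 2 ** bits - 1            # number of steps between the extreme levels
    step = 2 * vmax / levels
    v = max(-vmax, min(vmax, v))
    return round((v + vmax) / step) * step - vmax


def dot_product_error(bits, n=64, trials=200, seed=1):
    """RMS error of a quantized dot product, relative to the exact result."""
    rng = random.Random(seed)
    err = ref = 0.0
    for _ in range(trials):
        w = [rng.uniform(-1, 1) for _ in range(n)]
        x = [rng.uniform(-1, 1) for _ in range(n)]
        exact = sum(a * b for a, b in zip(w, x))
        approx = sum(quantize(a, bits) * quantize(b, bits) for a, b in zip(w, x))
        err += (approx - exact) ** 2
        ref += exact ** 2
    return math.sqrt(err / ref)


def ideal_snr_db(bits):
    """Textbook SNR of an ideal quantizer driven by a full-scale sine wave."""
    return 6.02 * bits + 1.76


for b in (2, 4, 6, 8):
    print(b, round(dot_product_error(b), 4), round(ideal_snr_db(b), 2))

# Each extra bit needs about 6 dB more SNR, i.e. roughly 4x the signal power
# for the same noise floor.
power_ratio_per_bit = 10 ** (6.02 / 10)
```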

Estimates by Alexander Tait, assistant professor at Queen's University, Ontario, found that the practical limitations of today's optical devices can easily erode the potential power savings. Tait calculated that just 500 micro-ring resonators acting as neurons in a fully connected layout could fit onto a single 1 cm² die using early-2020s technology. Operating at 10 GHz, however, such a chip would require a kilowatt of power. Tait stresses that the example shows the impact of the current need for heaters to tune optical properties, and that scaling and design changes could bring the energy down dramatically. "The heaters are certainly a solvable problem," he says.
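Dividing the quoted power by the work done gives a rough energy per operation. The back-of-envelope sketch below uses only the round numbers above and assumes that "fully connected" means each of the 500 neurons takes a weighted input from all 500, so every clock cycle performs 500² multiply-accumulates (MACs). It is arithmetic on the article's figures, not a result reported by Tait.

```python
NEURONS = 500          # from the estimate described above
CLOCK_HZ = 10e9        # 10 GHz
POWER_W = 1_000.0      # "a kilowatt"

# Assumption: "fully connected" means each of the 500 neurons takes a weighted
# input from all 500 neurons, i.e. NEURONS**2 multiply-accumulates per cycle.
macs_per_cycle = NEURONS ** 2
macs_per_second = macs_per_cycle * CLOCK_HZ
joules_per_mac = POWER_W / macs_per_second

print(f"{macs_per_second:.1e} MAC/s")        # 2.5e+15 MAC/s
print(f"{joules_per_mac * 1e12:.2f} pJ/MAC")  # 0.40 pJ/MAC
```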

One possible direction is to use phase-change materials like those used in rewritable DVDs and non-volatile memories. The electro-optical properties of these materials change depending on how quickly they are cooled after rapid heating. Because they hold their state without constant heating, they could lead to lower-energy optical computers if they can be made to work reliably.

“If you have a phase shifter that is low energy, there are so many things you can do in integrated photonics,” says Carlos Ríos Ocampo, professor of materials science and engineering at the University of Maryland.

However, there are differences in how the materials can be applied. Tait points out that although MZi and micro-ring multipliers consume similar amounts of energy today, MZi devices need dynamic tuning, so the non-volatility of phase-change materials confers less of a benefit on them than on micro-rings.
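One way to see why non-volatility matters less when tuning is dynamic is a simple energy model: a heater draws power for as long as it holds a setting, while a phase-change element pays only when it is rewritten. The sketch below is a simplification under stated assumptions; both device figures are hypothetical placeholders, not measured values, and static losses of the phase-change element are ignored. The break-even rewrite rate is simply the hold power divided by the switching energy.

```python
# Hypothetical device figures, chosen only to illustrate the trade-off.
HOLD_POWER_W = 1e-3      # heater power to hold one thermo-optic weight (assumed)
SWITCH_ENERGY_J = 1e-9   # energy to rewrite one phase-change weight (assumed)


def volatile_energy(hold_power_w, duration_s):
    """A heater-tuned element draws power for as long as the weight is held."""
    return hold_power_w * duration_s


def nonvolatile_energy(switch_energy_j, rewrites_per_s, duration_s):
    """A phase-change element spends energy only when its state is rewritten."""
    return switch_energy_j * rewrites_per_s * duration_s


def break_even_rate(hold_power_w, switch_energy_j):
    """Rewrite rate (per second) above which the volatile approach is cheaper."""
    return hold_power_w / switch_energy_j


for rate in (1.0, 1e6, 1e8):   # from nearly static weights to frequently retuned ones
    v = volatile_energy(HOLD_POWER_W, 1.0)
    nv = nonvolatile_energy(SWITCH_ENERGY_J, rate, 1.0)
    print(f"{rate:.0e} rewrites/s: heater {v:.1e} J, phase-change {nv:.1e} J")
print(break_even_rate(HOLD_POWER_W, SWITCH_ENERGY_J))
```

With these placeholder numbers, weights that rarely change favor the phase-change element by orders of magnitude, while weights retuned more than about a million times per second favor the heater.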

Another factor in favor of optical processing is that the high bandwidth and speed of photonic processing mean that integration density need be nowhere near that of electronic processors.

“The metric is compute density. If you do vector or matrix operations with photonics you don’t need dense core devices: with the higher speed you can use the hardware recursively,” says Bhavin Shastri, assistant professor at Queen’s University.
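Shastri's point about using the hardware recursively amounts to time-multiplexing: a small core run at a very high rate can stand in for a much larger array. The sketch below is a hypothetical illustration, not any company's design. It streams k×k tiles of a large matrix through a single k×k "core" (here just a Python function), accumulates the partial sums outside the core, and counts the passes. It assumes the core finishes one pass per clock cycle; the 10 GHz rate in the usage line is likewise an assumption.

```python
import math


def core_matvec(block, x_slice):
    """Stand-in for one pass through a small k x k photonic core (hypothetical)."""
    return [sum(w * v for w, v in zip(row, x_slice)) for row in block]


def tiled_matvec(W, x, k):
    """Compute W @ x by streaming k x k tiles of W through a single k x k core.

    Returns the result and the number of passes the core had to make."""
    y = [0.0] * len(W)
    passes = 0
    for r0 in range(0, len(W), k):
        for c0 in range(0, len(x), k):
            block = [row[c0:c0 + k] for row in W[r0:r0 + k]]
            partial = core_matvec(block, x[c0:c0 + k])
            for i, p in enumerate(partial):
                y[r0 + i] += p           # accumulate partial sums between passes
            passes += 1
    return y, passes


def latency_s(n, k, clock_hz):
    """Time for an n x n product if the core finishes one pass per clock cycle."""
    return math.ceil(n / k) ** 2 / clock_hz


n, k = 64, 8
W = [[(i * n + j) % 7 - 3 for j in range(n)] for i in range(n)]
x = [(j % 5) - 2 for j in range(n)]
y, passes = tiled_matvec(W, x, k)
print(passes)                    # 64 passes of an 8x8 core
print(latency_s(n, k, 10e9))     # assumed 10 GHz pass rate
```

The trade-off is visible in `latency_s`: shrinking the core by a factor of k multiplies the number of passes by roughly k², which a sufficiently fast optical core can absorb.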

A potentially more troublesome problem for photonic neural networks lies in the need for non-linear behavior in AI algorithms. Most of the conventional photonic components work entirely linearly and lack the flexibility of transistors that can operate in linear and non-linear regions.
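Why non-linearity is indispensable can be shown in a few lines: any stack of purely linear layers collapses into a single matrix, so depth adds nothing unless something non-linear sits between the layers. The example below uses a ReLU as a stand-in non-linearity; the small matrices are arbitrary illustrative values, and nothing here models an optical component.

```python
def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def relu(v):
    """A simple non-linearity, the kind of step linear optics cannot provide alone."""
    return [max(0.0, t) for t in v]


W1 = [[1.0, -2.0], [0.5, 1.0]]
W2 = [[2.0, 1.0], [-1.0, 3.0]]
x = [1.0, 1.0]

two_linear_layers = matvec(W2, matvec(W1, x))
one_merged_layer = matvec(matmul(W2, W1), x)
with_nonlinearity = matvec(W2, relu(matvec(W1, x)))

print(two_linear_layers)   # [-0.5, 5.5]
print(one_merged_layer)    # [-0.5, 5.5]  same as two linear layers
print(with_nonlinearity)   # [1.5, 4.5]
```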

“Non-linear interactions become quite challenging. Either you need new materials to enhance the nonlinearities or go to optical-electronic-optical conversion, and this has to be done in a very efficient way,” Shastri says. Today, the delay in electro-optical conversion reduces the theoretical throughput advantage of photonic AI.

Ríos Ocampo points out that the semiconductor fab operators who could build the necessary silicon photonics chips are reluctant to introduce novel materials into their processes without a large market driver. Photonic computing has yet to provide the necessary demand. Some help may come from the experience semiconductor companies have gained in their decades-long work on electronic phase-change memories. This could assist the development of optical non-volatile memories and other components that will be useful in these systems, though most of the focus in semiconductors has been on compounds that absorb light. Transparent materials that can be used to manipulate phase alone would need far more testing for compatibility with semiconductor fab equipment.

Despite photonic AI being at an early stage of its evolution, several startups have embarked on plans to build commercial photonic accelerators, taking advantage of advances in silicon photonics made primarily in response to the needs of the networking and communications sector. Communication throughput remains a key focus in the AI world as well. Among the small group of startups, Lightmatter is working on both a photonic accelerator and an optical interconnect technology that can be used to improve communications speed between electronic modules.

Luminous Computing originally planned to build its own photonic AI system, but the company has opted to focus on an electronic accelerator supported by a photonic interconnect of its own design.

Luminous president Michael Hochberg says that, after the company investigated its options for a photonic core, "We concluded that the bottlenecks that were preventing dramatic improvements were elsewhere in the system."

A brighter future for all-photonic AI may lie outside datacenter systems such as those being built by Lightmatter and Luminous. "This other community is looking at applications where electronics fundamentally has challenges," Shastri says. "There are some things you can't just offload to a datacenter. The question then becomes: What are those tasks?"

Though the trend was short-lived, research into optical-only routers in the late 1990s was driven by the belief that it would be easier to steer packets at line speed by keeping the data in the photonic domain, avoiding the penalty of electro-optical and digital-analog conversion. Similar reasoning applies to numerous possible applications in which sensor outputs could be used directly without analog-digital conversion, in contrast to datacenter systems that work on stored data.

Shastri points to recent work on using analog photonic processing to separate radio transmissions. “It’s a form of the cocktail-party problem: In crowded radio spectrum you need to find and focus on just one signal and use intelligent ways of processing it. You can’t just use filtering. The bandwidth of electronics is narrow and the scaling of energy if you use electronic processing can grow quadratically depending on the number of channels or antennas. We showed you can process at really wide bandwidths. And the energy scales linearly.”
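A toy version of the separation problem shows why weighting, rather than filtering, does the work: two antennas each pick up a different mix of a wanted signal and an interferer in the same band, and a weighted sum of the antenna signals cancels the interferer. This sketch assumes the mixing matrix is known; the real task is blind, so the weights must be estimated, and the code says nothing about the energy scaling Shastri describes. The signals, matrix values, and function names are illustrative.

```python
import math


def mix(sources, A):
    """Each antenna receives a weighted sum of every source (instantaneous mixing)."""
    n_samples = len(sources[0])
    return [[sum(A[i][j] * sources[j][t] for j in range(len(sources)))
             for t in range(n_samples)] for i in range(len(A))]


def inverse_2x2(A):
    (a, b), (c, d) = A
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


T = 200
wanted = [math.sin(2 * math.pi * 3 * t / T) for t in range(T)]
# Interferer in the same band as the wanted signal, so a frequency filter
# cannot remove it; only the way it reaches each antenna differs.
interferer = [math.cos(2 * math.pi * 3 * t / T) for t in range(T)]
A = [[1.0, 0.8], [0.6, 1.0]]          # hypothetical mixing at two antennas
received = mix([wanted, interferer], A)

# Weights on the two antenna signals that cancel the interferer
# (first row of the demixing matrix; assumes A is known).
w = inverse_2x2(A)[0]
recovered = [w[0] * received[0][t] + w[1] * received[1][t] for t in range(T)]
max_err = max(abs(r - s) for r, s in zip(recovered, wanted))
print(max_err)
```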

Another potential application lies in optimization tasks as well as predictive control and stabilization for fast-moving vehicles, such as hypersonic aircraft. “Where you need to converge to a solution really fast, photonics can have an advantage.”

Though much hinges on the ability to integrate multiple technologies in a cost-effective way, the massive demand for data intelligence in a growing range of applications may provide photonic computing with the conditions needed to avoid another R&D winter.

Huang, C. et al. Prospects and Applications of Photonic Neural Networks. Advances in Physics: X, 7:1, 1981155 (2021)

Nahmias, M.A., Ferreira de Lima, T., Tait, A.N., Peng, H.-T., Shastri, B.J., and Prucnal, P.R. Photonic Multiply-Accumulate Operations for Neural Networks. IEEE Journal of Selected Topics in Quantum Electronics, 26:1 (2020)

Ríos Ocampo, C.A. et al. Ultra-Compact Nonvolatile Photonics Based on Electrically Reprogrammable Transparent Phase-Change Materials. PhotoniX, 3:26 (2022)

Tait, A.N. Quantifying Power in Silicon Photonic Neural Networks. Physical Review Applied, 17, 054029 (2022)
