Judging from the blockbuster success of Avatar, images that appear three dimensional are poised to transform movies. But researchers at the Massachusetts Institute of Technology (MIT) hope to create huge displays in which the third dimension is no illusion.

The Flyfire project aims to create pixels that can move independently in space, by equipping tiny toy helicopters with light-emitting diodes. So far the team has coordinated the motion of only about ten helicopters, and full-scale results are probably a few years away. But their video simulations illustrate the dramatic results such a display would make possible.

Remote control of flying vehicles poses special challenges, says Emilio Frazzoli of MIT’s Aerospace Robotics and Embedded Systems Laboratory. "On the ground, if you get in trouble, you can just shut down everything," he notes. "You don’t have that luxury in the air." In addition, the helicopters must not only avoid colliding but also steer clear of each other’s downwash.
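To make the two constraints concrete, here is a minimal Python sketch of a pairwise safety check. It is not the Flyfire team's method: the `Helicopter` type, the function names, the three margin values, and the choice to model downwash as a simple cylinder below each rotor are all assumptions made for illustration.

```python
from dataclasses import dataclass
from itertools import combinations
import math


@dataclass(frozen=True)
class Helicopter:
    name: str
    x: float  # horizontal position, metres
    y: float  # horizontal position, metres
    z: float  # altitude, metres


# Hypothetical safety margins, chosen only for illustration.
MIN_SEPARATION = 0.5   # closest allowed distance between any two helicopters
DOWNWASH_RADIUS = 0.4  # horizontal radius of the assumed downwash column
DOWNWASH_DEPTH = 1.5   # vertical extent over which the column is assumed to matter


def in_conflict(a: Helicopter, b: Helicopter) -> bool:
    """Return True if a and b are too close or one sits in the other's downwash."""
    horizontal = math.hypot(a.x - b.x, a.y - b.y)
    vertical = abs(a.z - b.z)
    too_close = math.hypot(horizontal, vertical) < MIN_SEPARATION
    # Downwash is modelled as a cylinder hanging below the higher helicopter,
    # so the test is symmetric in a and b.
    in_downwash = horizontal < DOWNWASH_RADIUS and vertical < DOWNWASH_DEPTH
    return too_close or in_downwash


def find_conflicts(fleet):
    """List every pair of helicopter names that violates the margins."""
    return [(a.name, b.name) for a, b in combinations(fleet, 2) if in_conflict(a, b)]
```

In this sketch, a helicopter hovering one metre directly beneath another is flagged even though the two are well outside the plain collision distance, which is the extra restriction that downwash adds. Checking every pair grows quadratically with fleet size, so a display with thousands of pixels would presumably need something smarter.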

Frazzoli and his colleagues have extensive experience getting robots to act on their own, for example in the "urban challenge" run by the Defense Advanced Research Projects Agency. "We know how to design a single autonomous object," he says, but practical robots will need to function in a complicated environment that includes humans and other robotic agents.

So far, the team has worked in a controlled indoor environment to coordinate a few helicopters, which they equipped with microcontrollers and sensors for stabilization and hovering. For overall positioning, they used an external computer that tracks the helicopters with a motion-capture system like the one used to make Avatar.

"This is not very scalable to a large number of vehicles" as envisioned in the display project, Frazzoli says. "I expect that, as the project develops and scales up, the tradeoff between onboard and offboard intelligence will have to be skewed more towards onboard."

The Flyfire project grew out of a conversation between Frazzoli and Carlo Ratti, who heads the SENSEable City Laboratory, based in the Urban Studies and Planning Department at MIT. In general, the lab looks for ways in which "pervasive technologies can better inform the citizen that resides in the city," says E Roon Kang, a research fellow working on Flyfire. Other lab projects, for example, track trash electronically or use cell-phone data to study people’s movements.

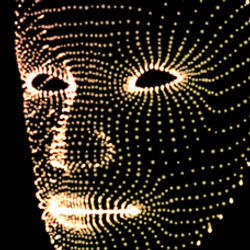

The display idea builds on the vision of "smart dust," in which small, smart sensors relay information about the environment, Kang says. "If we have thousands of those small LEDs flying around, we can create a three-dimensional display that will change its configuration in real time." Because it can take on a true three-dimensional shape, he says, "it’s almost like you have another channel of information streaming, that is delivering another dimension of information."

At first, the researchers hope to capture people’s imagination by using the display for public art, but other uses may emerge as they learn more, Kang says. "We’re just at the stage of envisioning this."
