Today's deep neural networks (DNNs) must be taken off-line to update their learned skills, but real brains update their skill set by reconfiguring their neurons and synapses constantly on the fly—a feat that can now be performed with reconfigurable brain-like chips using quantum materials.

Quantum materials—such as superconductors, topological insulators and even graphene—have remarkable electronic properties that manifest macroscopic quantum mechanical principles that traditional semiconductors only exhibit at the microscopic scale. Now researchers have found a way to form a nickelate (nickel-based) quantum material from the rare earth element neodymium bonded to nickel oxide, NdNiO3 (also known as NNO), with remarkable room-temperature electronic properties that make it ideal for lifelong neural learning hardware, according to a team of researchers from Purdue University, Argonne National Laboratory, the University of Illinois Chicago, Brookhaven National Laboratory, and the University of Georgia.

"The brain is continuously learning," said Purdue University professor Shriram Ramanathan. The formation of new neurons and synapses, a process known as neurogenesis, is ongoing in human brains from birth to death, while today's DNNs are routinely fixed after a lengthy training period, during which they learn a rote function. Ramanathan believes the new quantum material NNO will enable DNNs to learn continuously using neurogenesis, just like human brains.

"Neurogenesis and synaptic rewiring play a crucial role in the formation of memory, and a dynamic brain engaged in life-long learning," said Ramanathan. "Inspired by dynamic reconfiguration in the brain, we hypothesized: if we could mimic neurogenesis behaviors in electrical hardware, we can make AI machines that learn throughout their lifespan."

Funded by the U.S. Department of Energy Office of Science, the U.S. Air Force Office of Scientific Research, and the National Science Foundation (NSF), the researchers formed thin films of NNO on silicon-on-insulator (SOI)-compatible substrates to create proof-of-concept microchips that outperform traditional silicon in deep neural learning hardware. The NNO material demonstrates novel quantum properties that enable it to learn throughout its lifetime, forming new classification categories and deleting old unused ones. It seems ideal for Internet of Things devices on the network's edge, autonomous cars, and many other real-world functions where the world's raw data is in constant flux.

The key to the quantum material NNO is that its perfect crystalline structure is intentionally doped with injected hydrogen atoms, which can be reconfigured in real time to learn different functions. This differs significantly from traditional semiconductor materials, whose conductivity is fixed by their concentration of ionized dopants at the time of manufacture. NNO, when configured as a perovskite crystal, can dynamically change its dopant concentrations.

Explained Ramanathan, "Hydrogen [a proton with one electron] acts as an electron donor [leaving only the proton], inducing a colossal metal-to-insulator phase change in perovskite nickel oxide semiconductors [NNO] at room temperature. With catalytic electrodes such as platinum or palladium, hydrogen molecules split to protons and electrons at the boundary of the perovskite nickelate semiconductor. Depending on the position of the protons, we can induce different electronic states which can be utilized for adaptive learning."

Joshua Yang, an IEEE Fellow, a professor in the department of electrical and computer engineering at the University of Southern California, as well as co-director of the university's Institute for the Future of Computing, describes the protons in the crystalline lattice as the "mobile species" for switching states.

"I like the fact that protons are used as the mobile species for the switching, which renders their devices more energy-efficient, CMOS-compatible, and more uniform than filamentary resistive memories [where the filaments conductor's radius is negligible compared to its length of the resistive connection]," said Yang.

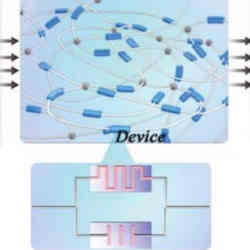

Instead of a switching mechanism similar to that used in resistive memories (RRAMs, where conductivity is changed by electromigration), the researchers used electric fields to redistribute the protons already doped into the nickelate lattice, systematically modifying its conductivity to generate a multitude of electronic devices. For instance, to generate an RRAM-type electronic device, annealing (heating to shake up the lattice, then gently cooling to settle it into a more stable state) results in an NNO phase transition which, like an RRAM, changes resistivity by several orders of magnitude. A neuron-like device is made by first electrically stimulating protons into a central concentration, while a synaptic-type device is made by first depleting a central core so that it is proton-free.

Unparalleled Plasticity

The perovskite's crystalline structure accommodates one rare-earth atom (Nd), one nickel atom (Ni), and three oxygen atoms (O3). The researchers used sputtering, as well as atomic layer deposition, to apply thin films of NNO to an oxidized wafer. The wafer was then processed and diced into chips, which the researchers characterized as exhibiting quantum properties at the macroscale, namely reconfigurable electronic phase transitions. These macroscopic quantum properties are similar to those of other quantum materials, such as zero resistance in superconductors, spin locked to momentum on the surface of topological insulators, and the quantum Hall effect in graphene.

"Our platform can dynamically reconfigure and adapt its status in response to incremental inputs, which is not possible for static neural networks to do. For example, if [a minimum-sized] static model learned digits 0-4, then when it tried to learn new digits 5-9, it would not be able to do so with sufficient accuracy. However, our dynamic network activates new nodes to handle this incremental memory need in order to learn new digits while maintaining its high accuracy," said Ramanathan. "We believe our ability to modify existing neural network structures, depending on new tasks, is a very powerful strategy for future AI."

How does it work?

Cortical data processing in human brains uses self-adaptive dynamic grow-when-required (GWR) networks to achieve lifelong learning in the real world. The researchers mimic this process by creating and deleting neural network nodes and changing the strength of the connecting synapses on the fly to change internal representations so they better match changing input data.
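The grow-and-prune idea can be illustrated in software. The following is a minimal sketch of a simplified grow-when-required scheme, not the researchers' hardware or their published algorithm: the class name `GrowWhenRequired`, the distance threshold, the learning rate, and the idle-count pruning rule are all assumptions chosen for illustration. Each node stores a prototype vector; an input that lies too far from every existing node creates a new node, the nearest node otherwise moves toward the input, and nodes that go unused for too long are deleted.

```python
import math


class GrowWhenRequired:
    """Toy grow-when-required network whose nodes are prototype vectors.

    Illustrative only: thresholds and the pruning rule are assumptions.
    """

    def __init__(self, distance_threshold=1.0, learning_rate=0.2, max_idle=50):
        self.distance_threshold = distance_threshold  # grow if input is farther than this
        self.learning_rate = learning_rate            # fraction the winner moves toward the input
        self.max_idle = max_idle                      # prune nodes idle for more than this many inputs
        self.nodes = []  # one prototype vector per node
        self.idle = []   # inputs seen since each node last won

    def observe(self, x):
        """Present one input vector; return True if a new node was created."""
        x = [float(v) for v in x]
        self.idle = [t + 1 for t in self.idle]

        # Find the best-matching existing node, if there is one.
        best, best_dist = None, math.inf
        for i, w in enumerate(self.nodes):
            d = math.dist(w, x)
            if d < best_dist:
                best, best_dist = i, d

        grew = best is None or best_dist > self.distance_threshold
        if grew:
            # No node represents this input well enough: create one.
            self.nodes.append(x)
            self.idle.append(0)
        else:
            # Adapt the winning node toward the input.
            w = self.nodes[best]
            self.nodes[best] = [wi + self.learning_rate * (xi - wi)
                                for wi, xi in zip(w, x)]
            self.idle[best] = 0

        # Delete nodes that have not won recently.
        keep = [i for i, t in enumerate(self.idle) if t <= self.max_idle]
        self.nodes = [self.nodes[i] for i in keep]
        self.idle = [self.idle[i] for i in keep]
        return grew
```

Feeding the network inputs from one cluster and then from a distant cluster makes it add a node for the new cluster, and if the first cluster stops appearing, its node is eventually removed. That is the software analogue of forming new categories and deleting unused ones.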

Perovskite semiconductors qualify as room-temperature quantum materials because they undergo electronic phase transitions, which are triggered by one-shot electrical pulses that relocate the protons introduced by the original doping. These voltage-controlled metastable reconfigurations are achieved by moving protons (the positively charged hydrogen dopant atoms, minus their donor electrons) through the perovskite lattice.

Dynamic reconfiguration of its circuitry performs different brain-like functions with the same hardware. Neurons are emulated by adding more protons around the center of a device, whereas moving the protons away from that same location allows the device to act like a synapse.

In greater detail, the researchers reconfigured the brain-inspired reservoir computing (RC) machine learning architecture. The perovskite semiconductor executes the different brain-like functions in reconfigurable hardware, which the researchers claim is faster than typical DNN algorithms running in software. NNO's reconfigurable hardware structures quickly perform continuous learning functions from scant datasets, whereas traditional DNNs require slow static preparatory learning algorithms to be run on vast datasets before runtime functions will execute properly.
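For readers unfamiliar with reservoir computing, here is a minimal software sketch of the general idea, assuming a small echo state network: a fixed, random recurrent "reservoir" transforms the input stream, and only a linear readout is trained. It is not a model of the NNO hardware. The function names, the reservoir size, the row-sum scaling, and the choice of a normalized least-mean-squares (NLMS) readout update are all illustrative assumptions; NLMS was picked because it learns online, one sample at a time.

```python
import math
import random


def make_reservoir(n_units, seed=0, scale=0.9):
    """Build fixed random recurrent and input weights (never trained)."""
    rng = random.Random(seed)
    w = [[rng.uniform(-1, 1) for _ in range(n_units)] for _ in range(n_units)]
    for row in w:
        # Scale each row so its absolute values sum to `scale` (< 1), which
        # keeps the tanh dynamics contractive so old inputs fade away.
        norm = sum(abs(v) for v in row)
        for j in range(n_units):
            row[j] *= scale / norm
    w_in = [rng.uniform(-1, 1) for _ in range(n_units)]
    return w, w_in


def step(state, u, w, w_in):
    """Advance the reservoir one time step for scalar input u."""
    return [math.tanh(sum(wij * sj for wij, sj in zip(row, state)) + wi * u)
            for row, wi in zip(w, w_in)]


def train_readout(inputs, targets, w, w_in, mu=0.5, eps=1e-8):
    """Train only the linear readout, online, with normalized LMS."""
    n = len(w_in)
    state = [0.0] * n
    w_out = [0.0] * (n + 1)  # one weight per reservoir unit, plus a bias
    errors = []
    for u, y in zip(inputs, targets):
        state = step(state, u, w, w_in)
        feats = state + [1.0]
        pred = sum(a * b for a, b in zip(w_out, feats))
        err = y - pred
        power = sum(f * f for f in feats) + eps
        # NLMS update: correct the readout in proportion to the error.
        w_out = [wo + mu * err * f / power for wo, f in zip(w_out, feats)]
        errors.append(err)
    return w_out, errors
```

Because the reservoir weights stay fixed, learning touches only the `n_units + 1` readout weights, which is one reason reservoir approaches can adapt from small amounts of data.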

Traditional DNNs, which are software simulations of neural networks, must also be taken offline periodically and retrained to accommodate new data cases. Before retraining, a DNN's performance often degrades slowly while it is online, until more layers can be added during offline retraining. NNO's dynamically reconfigurable semiconductors, on the other hand, improve their performance while online by automatically reconfiguring for new data cases within the same-sized hardware matrix.

In practice, the NNO approach will work better for edge devices that can take advantage of their reconfigurability by better allocating resources on-chip as new data cases emerge in the field, according to Ramanathan et al. In real-world testing, the researchers reported that the reconfigurable perovskite semiconductor operated more efficiently than static DNNs in recognizing electrocardiogram patterns and digits.

R. Colin Johnson is a Kyoto Prize Fellow who has worked as a technology journalist for two decades.
