"CAUTION," my iPhone warns me, highlighting the word for good measure in a bright red circle. No, I wasn't about to exceed my data plan, or even try to make sense of a Donald Trump tweet. Instead, like some Instagram obsessive, I had just photographed my breakfast for the first time, and an artificial intelligence (AI) resident on my phone didn't like what it saw. The reason? My poached eggs and toast also included … a sausage.

"High fat content," the warnings continued, "risk of weight gain."

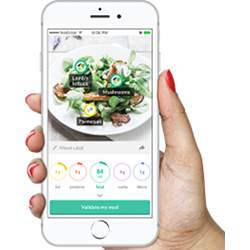

What I was looking at was FoodVisor, a novel app that harnesses the pattern-finding power of deep learning convolutional neural networks (CNNs) to recognize the food on our plates. However, as with the weighty tasks CNNs have traditionally tackled, such as recognizing objects, faces, or speech, identifying food is turning out to be a tougher AI problem than expected.

The idea behind the app is to boost adherence to calorie-controlled diets by making it easier to chronicle your food intake. Until now, dieters have had to laboriously track what they have eaten by filling in computer- or phone-based food diaries after every meal, snack, or drink, as many do on popular commercial slimming programs such as the Weight Watchers plan from WW International of New York.

Smartphones have made this easier, because a diary app on an always-on device is almost always at hand, unlike a Web interface on a laptop or desktop. Even so, entering detailed ingredient information and scrolling through menus to select the amounts you've eaten remains such a hassle that it is one of the reasons people give up on diets.

"We realized that current food diary solutions are very time-consuming and tiring to use," says Jann Giret, head of research and development at FoodVisor, the 12-person tech startup based in Paris, France, that is behind the eponymous app. "You have to search every food type you eat, work out the amount you are eating manually, and enter it in the diary. For that reason, a lot of people quit their diets. So we thought: what if we could make food-logging as easy as taking a picture?"

They are not alone in thinking this would be a great idea: experts in public health agree. "For novices who are new to food tracking, manually logging calories is burdensome and hard to do accurately," says Eun Kyoung Choe, an information scientist specializing in human-computer interaction and health at the University of Maryland (UMD) in College Park, MD. "Automating this process, assuming that the information is accurate, could be useful in many ways."

How so? First, Choe says, accurate tracking could provide people with a great nutritional education tool. Second, taking a photo of your meal is far less taxing than laboriously entering food items and amounts. Finally, she says the information gleaned about eating habits could be valuable for dietitians and doctors, who may be treating patients with a raft of obesity-related conditions.

Giret realized food logging was one of the slimming industry's unsolved problems during a 2015 internship at Withings, the Paris-based maker of Wi-Fi-connected weighing scales and a host of other wearable, quantified-self gadgets. Once his internship was over and he was back studying computer science at the École Centrale in Paris, Giret, alongside fellow students Charles Boes (now FoodVisor CEO) and Gabriel Samain, set about making food logging as easy as snapping a photo with a smartphone. It became their final-year project, "using the applied math, machine learning, and computer vision techniques" they had recently learned, Giret says.

They started simply, Giret recalls, working out how to train a computer vision system to recognize six basic food items in isolation: salmon steaks, green beans, lamb cutlets, apples, a dessert, and French fries. From those humble beginnings, they moved on to using deep neural networks, and their system soon was able to recognize dozens of food items (it now can identify more than 1,000). "At the end of the first year of development, our proof of concept was robust enough to move towards creating a real app we could put in users' hands," he says.

To perform food recognition, their algorithm uses a probabilistic process called semantic segmentation to decide, first, whether an item in an image is likely to be food and, if it is, what kind of food it most probably is. To do this, the algorithm examines each pixel in the image and makes a probabilistic judgment about its nature; working at the pixel level also helps it judge how much food, and therefore how many calories (and how much fat, saturated fat, protein, sugar, and so on), will be consumed.
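To make the idea concrete, here is a minimal Python sketch of pixel-level labeling followed by a crude portion estimate. It is not FoodVisor's code: the label set, the calorie-per-gram figures, and the `grams_per_pixel` conversion are all hypothetical, and the sketch folds the "is it food?" decision into a single "background" class rather than making it a separate first step.

```python
from collections import Counter

# Hypothetical label set; "background" stands for "not food".
LABELS = ["background", "egg", "toast", "sausage"]

# Illustrative energy densities in kcal per gram (placeholders, not reference data).
KCAL_PER_GRAM = {"egg": 1.4, "toast": 2.6, "sausage": 3.0}


def segment(prob_map):
    """Assign each pixel the label with the highest probability.

    prob_map[row][col] is a list of per-class probabilities, such as the
    softmax output of a segmentation CNN (assumed here, not computed).
    """
    return [[LABELS[max(range(len(p)), key=p.__getitem__)] for p in row]
            for row in prob_map]


def estimate_calories(prob_map, grams_per_pixel):
    """Count food pixels per label and convert each area to rough calories.

    grams_per_pixel is a hypothetical constant; a real system would need
    depth and scale cues to turn image area into food volume.
    """
    counts = Counter(label for row in segment(prob_map) for label in row
                     if label != "background")
    return {label: n * grams_per_pixel * KCAL_PER_GRAM[label]
            for label, n in counts.items()}
```

On a toy 2x2 probability map, `segment` labels one pixel as background and one each as egg, toast, and sausage, and `estimate_calories` then scales each pixel count into calories. Counting pixels is the simplest possible link between segmentation and portion size; it ignores food height, which is why published systems add volume estimation.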

FoodVisor's algorithm takes into account a number of factors to improve the probabilistic decision on the foodstuff, or meal, with which it is being presented. These include the country the app is being used in, so it can consider what it sees in the context of local cuisine; plus the time of day, "so it can say that a golden liquid is more likely to be apple juice than beer if it's breakfast time," says Giret.
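The time-of-day trick can be read as a simple Bayesian reweighting: multiply the image-only score for each candidate by a context prior and renormalize. The sketch below assumes made-up prior values and covers only meal time; a country prior could be folded in the same way. It illustrates the general idea, not FoodVisor's actual method.

```python
# Hypothetical context priors: how likely each drink is at a given meal time.
PRIORS = {
    "breakfast": {"apple juice": 0.9, "beer": 0.1},
    "evening": {"apple juice": 0.4, "beer": 0.6},
}

# Small prior for foods missing from the table, so they are never ruled out.
DEFAULT_PRIOR = 1e-3


def rerank(visual_scores, meal_time):
    """Reweight image-only scores by a time-of-day prior and renormalize."""
    prior = PRIORS[meal_time]
    weighted = {food: score * prior.get(food, DEFAULT_PRIOR)
                for food, score in visual_scores.items()}
    total = sum(weighted.values())
    return {food: w / total for food, w in weighted.items()}
```

If the image alone is a coin toss between apple juice and beer, `rerank({"apple juice": 0.5, "beer": 0.5}, "breakfast")` tips the balance firmly toward apple juice, while the same scores in the evening lean toward beer.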

Aptly, perhaps, for a food product, there is some serious "secret sauce" in FoodVisor's algorithm that helps it distinguish between foods of similar textures and colors, such as light-colored granular foods like white rice, brown rice, fried rice, and couscous.

While the app easily recognized my breakfast (including the offending sausage), it has trouble recognizing complex mixtures of foods. I cooked up some kedgeree, a dish consisting of flaked fish, rice, vegetables, and egg, and FoodVisor could not tell what it was.

However, there is a learning feedback loop: the app asked me to report the food it was unable to identify by sending the image back to its server and providing a name for the dish. This is how FoodVisor learns, with regular training sessions of its deep neural network. Giret says the system needs about 50 people to send in pictures for each dish, in order for it to have a large-enough training dataset from which to learn.
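A feedback loop of this kind might be organized as in the following sketch, which pools user-named photos of unrecognized dishes and flags a dish for the next training session once enough distinct people have contributed. The class and method names are hypothetical; only the threshold of roughly 50 contributors comes from Giret.

```python
from collections import defaultdict

MIN_CONTRIBUTORS = 50  # Giret's rough figure: about 50 people per dish


class FeedbackQueue:
    """Collects user-labeled photos of unrecognized dishes (hypothetical design)."""

    def __init__(self, min_contributors=MIN_CONTRIBUTORS):
        self.min_contributors = min_contributors
        self.images = defaultdict(list)       # dish name -> submitted image ids
        self.contributors = defaultdict(set)  # dish name -> distinct user ids

    def report(self, user_id, image_id, dish_name):
        """Record one user's photo and the name they gave the dish."""
        key = dish_name.strip().lower()  # normalize user-typed names
        self.images[key].append(image_id)
        self.contributors[key].add(user_id)

    def ready_for_training(self):
        """Dishes with photos from enough distinct users to add as a new class."""
        return sorted(dish for dish, users in self.contributors.items()
                      if len(users) >= self.min_contributors)
```

Counting distinct users rather than raw photos is a design choice here: it keeps one enthusiastic cook from single-handedly defining what kedgeree looks like.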

Considering it is an early take on food recognition, FoodVisor has been well received, especially by people used to keeping fiddly manual diaries. In France, the app already has 800,000 users and, with cuisine databases for British and American palates now installed, it has just launched in the U.K. and U.S.

A segment on ABC News' Good Morning America on January 29 showed the kind of positive reaction the app has been receiving after its recent U.S. and U.K. launches, with words like "magical" and "amazing" being used to describe the lack of need for manual input.

UMD's Choe says she particularly likes the way FoodVisor gets to know its user: if, for instance, you take sugar in your coffee, something no photo can reveal, the app will remember it after you tell it once. "That the app takes input from the user to enhance the accuracy is a good way of engaging people to teach a machine," she says.
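One simple way to remember such invisible extras is a per-user table of add-ons attached to a recognized food, as in this hypothetical sketch; the class name and calorie figures are placeholders, and nothing here is drawn from FoodVisor's implementation.

```python
class UserProfile:
    """Per-user habits the camera cannot see (hypothetical design)."""

    def __init__(self):
        self.habits = {}  # recognized food -> list of (extra item, kcal)

    def teach(self, food, extra, kcal):
        """Record once that this user adds `extra` to `food`."""
        self.habits.setdefault(food, []).append((extra, kcal))

    def adjust(self, food, kcal):
        """Return the photo-based estimate plus any learned extras."""
        extras = self.habits.get(food, [])
        return kcal + sum(k for _, k in extras), [e for e, _ in extras]
```

After `profile.teach("coffee", "sugar", 16)`, every later photo recognized as coffee has the sugar's calories added automatically, with no further input from the user.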

FoodVisor went through a six-month Facebook accelerator program that ended in February, and has also been incubated by Apple, which likes its health potential. However, it is unlikely to have this space to itself for long. Research elsewhere in the field suggests we can expect a rash of similar food recognition apps to arrive soon, thanks to the formidable pattern recognition power of deep learning CNNs.

For instance, at an ACM workshop on Multimedia for Cooking And Eating Activities last summer, a team led by Ya Lu at the University of Bern in Switzerland also built a CNN-based meal recognition and volume estimation system, reporting "outstanding performance" for it, simply from a single RGB image of a meal. Lu's team is now in the process of creating algorithms that can cope with multiple complex dishes on a single plate.

Very different cuisines may need different approaches, too, so Takumi Ege and Keiji Yanai at the University of Electro-Communications in Tokyo, Japan, have developed deep CNNs that can recognize Japanese meals, and provide calorie estimations for them.

Still others are interested in how augmented reality visors might feed back food data; a team of researchers at the Georgia Institute of Technology has researched how such technology might give speedy at-a-glance glucose data to people coping with diabetes.

And yet, we should not get too hung up on food recognition and calorie counting alone, says Choe. "Food tracking is not the same thing as calorie counting; it's much more than that," she says, adding that it's also important that apps encourage people to understand how their eating behaviors impact their health.

Nutrition experts support this view. At the ACM Conference on Human Factors in Computing Systems (CHI 2019) in Glasgow, U.K., this May, Choe and her UMD colleagues will unveil the results of a survey on food tracker design in which dietitians say providing context is vital in such apps. Unless dieters understand issues beyond calories, such as their hunger and fullness levels, their mood, and when they choose to eat, Choe says they will not get the most out of AI's food recognition revolution.

Paul Marks is a technology journalist, writer, and editor based in London, U.K.
