A man with a stack of papers in his hands walks towards a closed cupboard. An 18-month-old boy is watching the scene from a corner of the room. The man bumps into the cupboard, takes a few steps back, tries again, and bumps against the closed doors a second time. The little boy leaves the corner, walks to the cupboard, and opens both cupboard doors; then he looks up at the man, who again walks towards the cupboard. As the boy gazes at the shelves, the man places the stack of papers on one of them.

The video described above, titled "Experiments with altruism in children and chimps," was created during a psychological experiment by Massachusetts Institute of Technology (MIT) cognitive scientist and artificial intelligence researcher Josh Tenenbaum. He showed the video during his invited talk on "Building Machines that Learn and Think Like People" at IJCAI 2018, the 27th International Joint Conference on Artificial Intelligence, held in Stockholm, Sweden, in July.

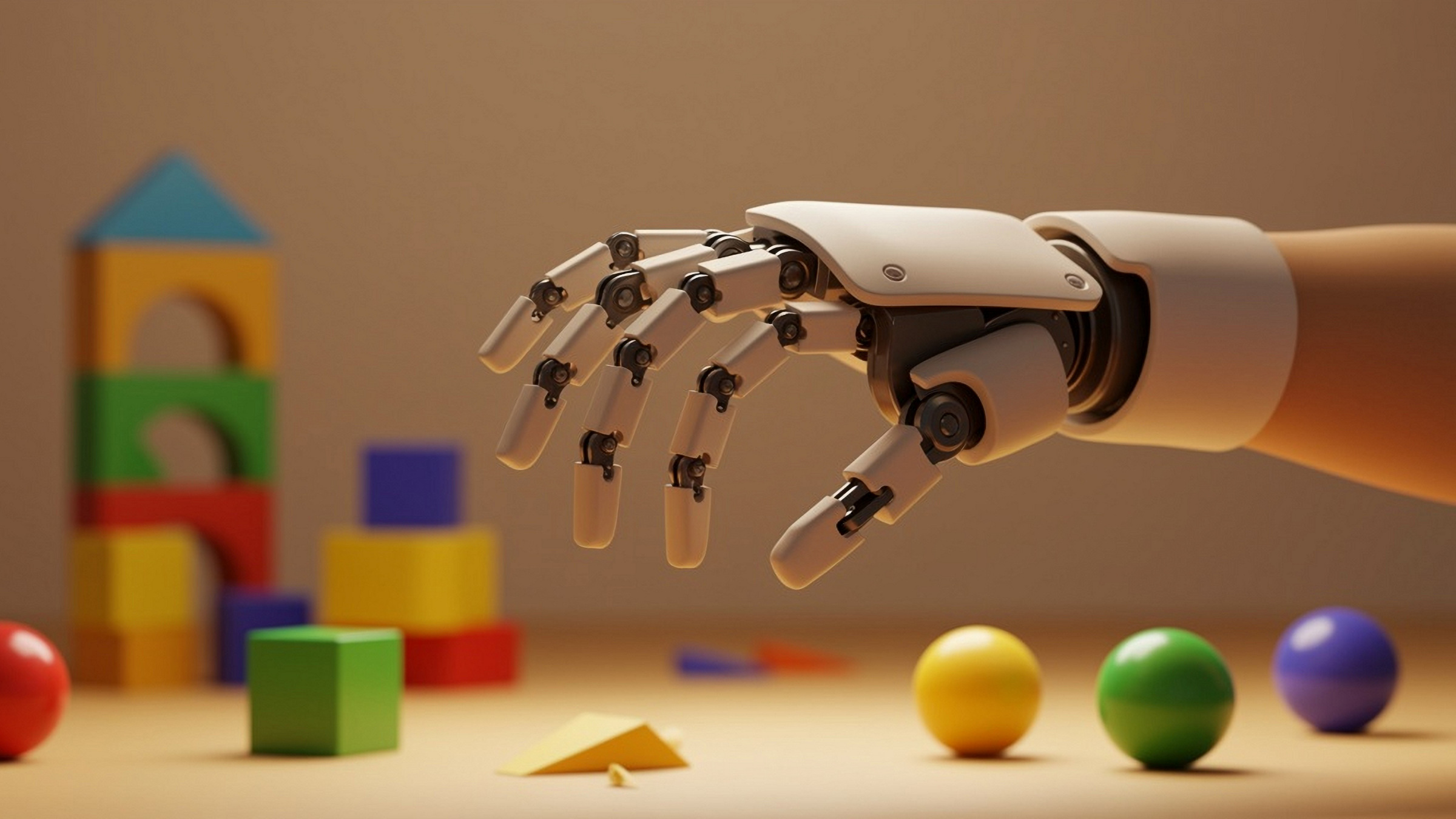

In commenting on the video, Tenenbaum said, "The little boy sees an action that he has never seen before. He can understand what's going on and interact. Think about the common sense going on in this kid's head in order to do this. If we could build robots that can do this, that would be incredible. This is still far away, but that's our aim."

Back in the early 1950s, Tenenbaum noted, computer pioneer Alan Turing thought the learning process of young children was like filling the pages of a notebook consisting mainly of blank sheets. "From developmental psychology, we now know that this idea is completely wrong," said Tenenbaum. "The starting state is much more sophisticated than we might have thought, and the learning processes are also much more sophisticated. Apart from supervised learning and reinforcement learning, which we also use in AI (artificial intelligence), children have other powerful learning mechanisms."

In their first 12 months, infants develop what Tenenbaum calls 'intuitive physics': they learn that objects do not just stop existing, do not teleport, and do not pass through walls. They come to a very basic understanding of three-dimensional space, gravity, inertia, and causality. In addition, he said, infants learn 'intuitive psychology,' the ability to understand at a basic level what other people think or want, just as the little boy in the video did.

"Intelligence is so much more than pattern recognition," says Tenenbaum. "Intelligence is also about modeling the world: explaining and understanding what we see, imagining things we could see but haven't seen yet, problem-solving and planning actions and building new models as we learn more about the world. We are far from having any AI that can model the world as flexibly and as deeply as humans do, but we have at least one route to get there, and that is to reverse-engineer how these abilities work in the human mind and brain."

That is why Tenenbaum and his colleagues in MIT's Department of Brain and Cognitive Sciences are trying to capture such intuitive physics and intuitive psychology in terms that computers or robots can use. He compares the way intuitive physics works in the human mind with the way game engines work in computer games: "Game engines are fast approximate programs for simulating graphics, physics, and planning. They are not perfect, but they work well and efficiently enough. We think that in first approximation, these game engines can describe in some way what evolution has given us as common-sense modeling ability."

With some basic experimentation, Tenenbaum can demonstrate how a computational intuitive physics model he and his colleagues developed can predict how people think. Show people a picture of a stack of blocks, and they can fairly well predict whether or not the stack will fall over, in which direction the stack might fall, and even how far away the falling blocks will land. The computational intuitive physics model makes the same predictions as the people, without any learning going on, just working from a model of the world.
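To make the idea of predicting from a model rather than from training data concrete, here is a minimal sketch in Python. It is not the model Tenenbaum's group built; it is a toy that assumes a single column of equal-mass, equal-width blocks described only by their horizontal centers, and it assumes that an observer's uncertainty can be imitated by adding Gaussian noise to those positions and rerunning the check many times. The function names, the `noise` parameter, and the sampling scheme are all illustrative assumptions.

```python
import random

def topple_direction(centers, width=1.0):
    """Return 0 if the stack stands, -1 if it tips left, +1 if it tips right.

    centers[i] is the horizontal center of block i, bottom block first.
    Assumption: all blocks have the same mass and the same width.
    """
    for i in range(len(centers)):
        above = centers[i:]                  # block i and everything on top of it
        com = sum(above) / len(above)        # their combined center of mass
        if i == 0:
            # The bottom block rests on the ground with its full footprint.
            lo, hi = centers[0] - width / 2, centers[0] + width / 2
        else:
            # Other blocks rest on the overlap with the block directly below.
            lo = max(centers[i - 1], centers[i]) - width / 2
            hi = min(centers[i - 1], centers[i]) + width / 2
        if com < lo:
            return -1
        if com > hi:
            return 1
    return 0

def fall_probability(centers, noise=0.1, samples=1000, seed=0, width=1.0):
    """Estimate how often the stack falls when block positions are uncertain.

    Assumption: perceptual uncertainty is modeled as independent Gaussian
    noise (standard deviation `noise`) on each block's center.
    """
    rng = random.Random(seed)
    falls = 0
    for _ in range(samples):
        noisy = [c + rng.gauss(0.0, noise) for c in centers]
        if topple_direction(noisy, width) != 0:
            falls += 1
    return falls / samples
```

Calling `topple_direction([0.0, 0.4, 0.8])` reports a fall to the right, because the two upper blocks together overhang the edge of the bottom one, while `fall_probability` turns the same check into a graded judgment of how precarious a stack looks. No examples of falling towers are needed; the prediction comes entirely from the simulated physics.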

Tenenbaum and his colleagues also developed a computational intuitive psychology engine grounded in the principle that people try to reach their goals with the least effort. "Even three-month-old babies understand that people acting in the world try to do this efficiently, and 10-month-old babies already do some kind of cost-benefit analysis to see how much people want a certain goal."
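The least-effort principle can be turned around to guess what someone wants from how they move. The sketch below is a toy illustration, not the group's engine: it assumes an agent taking unit steps on a grid, a handful of candidate goals, and a hypothetical `beta` parameter that controls how strictly the agent is expected to prefer steps that shorten the remaining distance. Each observed step then updates a Bayesian posterior over the goals.

```python
import math

# The four unit moves available to the agent on the grid (an assumption).
MOVES = [(1, 0), (-1, 0), (0, 1), (0, -1)]

def manhattan(a, b):
    """Grid distance between two (x, y) positions."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])

def step_likelihood(pos, nxt, goal, beta=2.0):
    """Probability of moving from pos to nxt if the agent is heading for goal.

    Assumption: the agent picks among the four moves with a softmax that
    favors moves leaving less distance to the goal (less remaining effort).
    """
    scores = {}
    for dx, dy in MOVES:
        cand = (pos[0] + dx, pos[1] + dy)
        scores[cand] = math.exp(-beta * manhattan(cand, goal))
    return scores.get(nxt, 0.0) / sum(scores.values())

def infer_goal(path, goals, beta=2.0):
    """Return a posterior over goal names given an observed path.

    path is a list of (x, y) positions one unit step apart;
    goals maps a goal name to its (x, y) position. The prior is uniform.
    """
    posterior = {name: 1.0 / len(goals) for name in goals}
    for pos, nxt in zip(path, path[1:]):
        for name, goal in goals.items():
            posterior[name] *= step_likelihood(pos, nxt, goal, beta)
    total = sum(posterior.values())
    return {name: p / total for name, p in posterior.items()}
```

For example, `infer_goal([(0, 0), (1, 0), (2, 0)], {"cupboard": (5, 0), "door": (0, 5)})` puts almost all of the probability on the cupboard, because walking away from the door would be a wasteful way to reach it. The goal names and coordinates here are made up for illustration.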

In order to capture the roots of human common sense in engineering terms, Tenenbaum proposes combining the power of machine learning methods with the classical AI approach of knowledge representation and symbolic reasoning.

"Can we fulfill AI's oldest dream, to build a machine that grows intelligence the way a human being does?" asks Tenenbaum. "We are in the early stages, but I see a promising route by combining new tools from probabilistic programs, game engines, and program learning with the power of symbolic languages, probabilistic and causal inference, and pattern recognition."

Bennie Mols is a science and technology writer based in Amsterdam, the Netherlands.
