What’s old is new again. At least, it is if we are talking about analog computing. The moment you hear the phrase “analog computing,” you might be forgiven for thinking we are talking about the hipsters of the technology world. The people who prefer vinyl over Spotify. The ones who want to bring back typewriters to replace word processors, or the folks who prize handwritten notes over those generated by ChatGPT.

Nothing could be further from the truth.

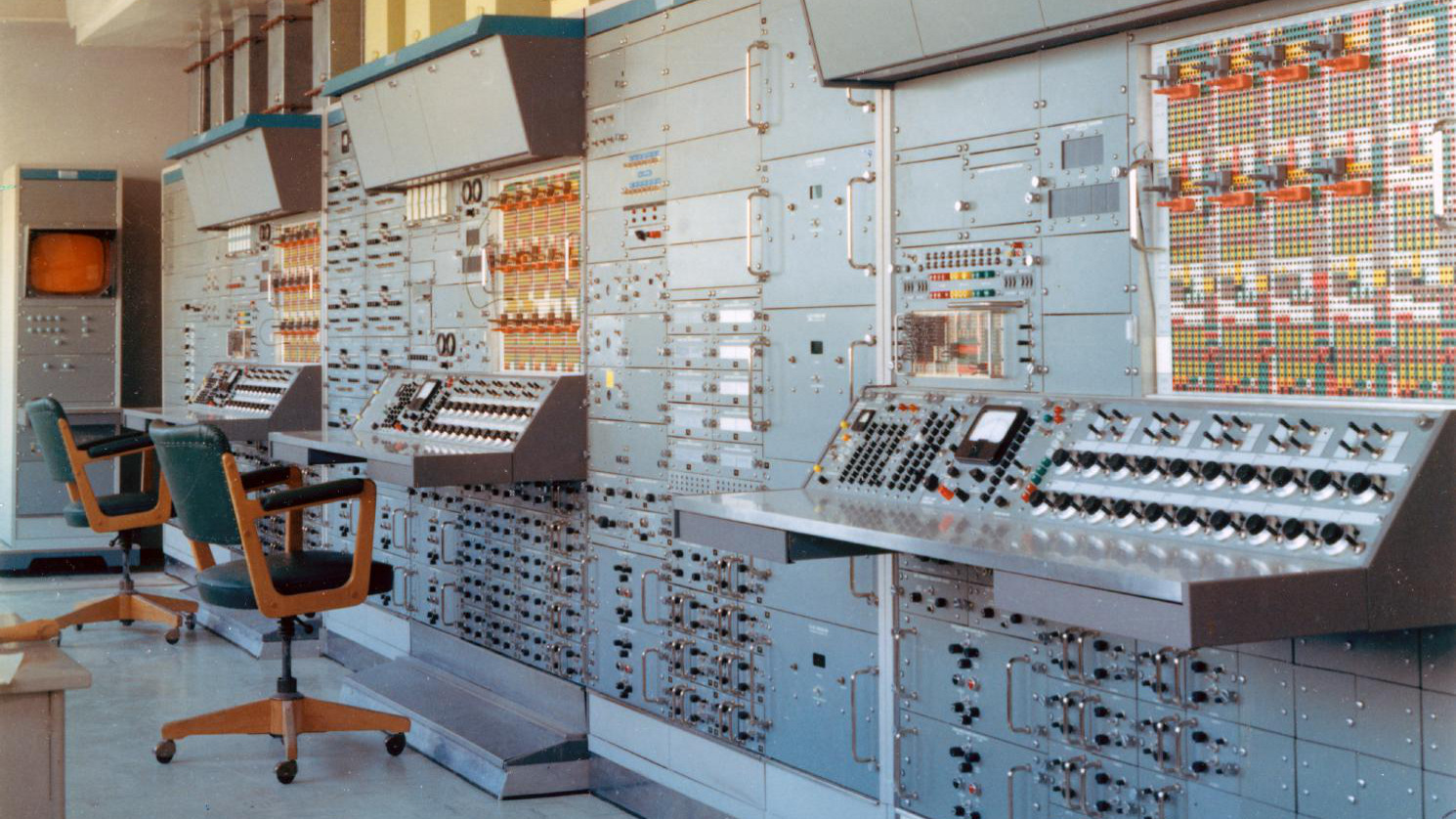

By “analog,” we mean computers that, unlike digital models, do not function by representing values as zeros or ones. Instead, analog computers represent values as continuous physical quantities, such as voltage or fluid flow. It’s true, these types of machines are currently out of vogue, but make no mistake, these computers have been responsible for some of humanity’s biggest steps forward.

“Analog computers, which had their heyday from about 1935 to 1980, helped put us on the Moon, design jet aircraft, model the North American electrical grid, and design roads, bridges, and hundreds of other important engineering applications,” says Dag Spicer, senior curator at the Computer History Museum in Mountain View, CA.

While it is natural to be skeptical of the term “analog” in today’s solidly digital world, analog computing is making a comeback—not because of nostalgia but because of utility.

As the artificial intelligence (AI) revolution kicks off, companies and technologists increasingly are looking for energy-efficient, information-dense devices that can circumvent the limitations of current semiconductor chips. Because of their peculiar benefits, analog computers might provide the solution they are seeking.

“Analog systems are already outperforming digital products,” says Dave Fick, cofounder and CEO of Mythic (https://mythic.ai/), a company that makes analog processors for AI applications.

Why Analog? Why Now?

A big reason some are turning to analog has to do with Dennard scaling.

Dennard scaling is a scaling law in the world of semiconductors that states that as transistors get smaller, their power density stays constant: each transistor consumes less power, in proportion to its smaller area, while providing the same amount of computational ability.
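
A short derivation may help here. It follows the standard textbook form of constant-field scaling rather than anything stated by the sources quoted in this article, and the symbols (scaling factor $\kappa > 1$, capacitance $C$, supply voltage $V$, switching frequency $f$, area $A$) are introduced only for illustration. The dynamic power of a switching transistor is roughly

$$P \approx C V^2 f$$

Shrinking every dimension by a factor of $1/\kappa$ scales $C \to C/\kappa$, $V \to V/\kappa$, $f \to \kappa f$, and $A \to A/\kappa^2$, so

$$P' \approx \frac{C}{\kappa}\left(\frac{V}{\kappa}\right)^2 (\kappa f) = \frac{P}{\kappa^2}, \qquad \frac{P'}{A'} = \frac{P/\kappa^2}{A/\kappa^2} = \frac{P}{A}$$

Power per transistor falls by $\kappa^2$ while power per unit area stays the same, which is why denser chips could also be clocked faster without running hotter.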

For decades since the law was formulated in 1974, Dennard scaling has held true. Thanks to Moore’s Law, which states the number of transistors that can fit on a chip doubles about every two years, we got very, very good at building smaller chips packed with more and more transistors, each of which (thanks to Dennard scaling) had the same amount of computing power as its larger counterpart. Hence, our chips got a lot more powerful over time.

However, in 2005, Dennard scaling began to break down as we began to build transistors at the nanometer scale. It became more difficult to clock chips faster while still using affordable technology to adequately cool them, eroding the advantages provided by packing more transistors on chips.

This can weaken the case for digital computing relative to analog computing.

Digital computing uses many more devices to do simple calculations than analog does. For instance, doing scalar multiplication in digital requires a large multiplier design with hundreds of transistors. The same can be achieved in analog computing with a resistor and an electrical junction.
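
To make the resistor-and-junction claim concrete, here is a minimal Python sketch that assumes ideal components. Ohm’s law gives the current through a resistor as the voltage times the conductance, which is a multiplication, and Kirchhoff’s current law adds up the currents that meet at a junction. The function names are illustrative and are not drawn from any vendor’s design.

```python
def resistor_current(voltage_v, resistance_ohm):
    """Ohm's law: I = V / R, i.e. V multiplied by the conductance 1/R."""
    return voltage_v / resistance_ohm


def junction_current(voltages_v, resistances_ohm):
    """Kirchhoff's current law: currents entering one junction add up."""
    return sum(
        resistor_current(v, r) for v, r in zip(voltages_v, resistances_ohm)
    )


# Multiply 2.0 by 0.25 (a 4-ohm resistor has a conductance of 0.25 S).
print(resistor_current(2.0, 4.0))                # 0.5
# Two products summed on one wire: 1.0 * 0.5 + 2.0 * 0.25.
print(junction_current([1.0, 2.0], [2.0, 4.0]))  # 1.0
```

The multiplication and the addition both happen in the physics of the circuit, with no logic gates involved. A real circuit would add noise and component tolerances that this sketch leaves out.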

Thanks to the breakdown in Dennard scaling, it may be possible—and more beneficial—in certain circumstances to use analog computing. That’s because analog technology uses the full operating range of an electronic device, unlike digital technology, which means a single device can represent more than one bit of information.

“Analog computing allows tremendous information density by having as much as 27 bits of information on a single wire, leading to incredible energy efficiency and performance,” says Fick. As a result, you need fewer analog devices to process the same amount of information as a group of digital devices.
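
As a rough illustration of what that density implies, the arithmetic below counts how many distinct signal levels a wire must carry to encode a given number of bits, and how finely a signal range must be divided to do so. The 1-volt range is a hypothetical figure chosen for round numbers, not a Mythic specification.

```python
def levels_for_bits(bits):
    """Number of distinguishable levels needed to encode `bits` bits."""
    return 2 ** bits


def step_size(full_scale_v, bits):
    """Spacing between adjacent levels across an assumed signal range."""
    return full_scale_v / levels_for_bits(bits)


print(levels_for_bits(27))  # 134217728
print(step_size(1.0, 27))   # roughly 7.45e-09 volts per level
```

A digital wire, by comparison, distinguishes only two levels and so carries one bit at a time.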

However, that requires trade-offs. While analog has some unique benefits, digital computing as a whole is far more predictable in its accuracy and reliability than analog. It also is highly flexible and programmable.

Which raises the question: Isn’t the digital computing technology we have today simply superior to analog in every way? After all, isn’t analog backward?

Not exactly.

“Analog and digital technology make different trade-offs and have different costs and benefits,” explains Bruce MacLennan, a professor of electrical engineering and computer science at the University of Tennessee, Knoxville.

In fact, analog technology is particularly attractive in applications where high-density information is important, and that information is represented by real numbers rather than by bits. That is why companies such as Mythic are betting the farm on analog as a way to keep the AI revolution going full speed ahead, even as issues like the breakdown in Dennard scaling arise.

“It is more important than ever to use innovative design techniques, instead of relying on silicon process scaling to provide improvements in energy efficiency and performance,” says Fick.

Analog Intelligence

What’s the reason for Mythic’s bet? It turns out that certain AI applications are extremely well-suited to analog computing.

“We’re seeing the most demand for analog solutions in edge AI applications, particularly ones that require computer vision for use cases like image recognition and object tracking,” says Fick.

Drones are one example. Drones used for everything from package delivery to agriculture need to process multiple large deep neural networks in real time. At the same time, however, they also need to be power-efficient in order to prolong flight times. As Moore’s Law slows down, analog becomes an increasingly viable solution.

And there’s a reason for that.

“The most promising use cases for analog technology are applications involving very large arrays of real numbers,” says MacLennan. Large artificial neural networks that power advanced AI fit the bill. Such networks mimic the computation capabilities of the human brain which, says MacLennan, “can be characterized as massively parallel, low-precision analog computation.”
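
The sketch below shows why low precision is often tolerable for this kind of workload. It computes one neural-network-style layer (a matrix-vector product) twice: once with full-precision weights and once with weights rounded to a few bits. Modeling analog imprecision as clean rounding is a simplifying assumption, since real devices show noise and drift instead, and every name and value here is hypothetical.

```python
def quantize(value, bits, full_scale=1.0):
    """Snap value to the nearest of 2**bits evenly spaced levels
    spanning [-full_scale, full_scale]."""
    intervals = 2 ** bits - 1
    step = 2 * full_scale / intervals
    clipped = max(-full_scale, min(full_scale, value))
    return round((clipped + full_scale) / step) * step - full_scale


def matvec(weights, inputs):
    """Each output is a weighted sum of the inputs; rows are independent,
    so every row could be computed in parallel."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


def low_precision_matvec(weights, inputs, bits):
    """The same product, with weights stored at reduced precision."""
    coarse = [[quantize(w, bits) for w in row] for row in weights]
    return matvec(coarse, inputs)


weights = [[0.30, -0.70], [0.55, 0.10]]
inputs = [1.0, 0.5]
print(matvec(weights, inputs))
print(low_precision_matvec(weights, inputs, bits=4))
```

With 4-bit weights each output stays within a small, bounded distance of the full-precision result. Neural networks can often absorb errors of that size, in a way that exact arithmetic such as accounting cannot.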

That just happens to be the type of use case in demand from AI companies trying to buy ever-increasing amounts of compute.

In the example of Mythic, the company says its Analog Matrix Processor (AMP) delivers the same compute power as one of the graphics processing units (GPUs) in such great demand in the world of AI, while boasting just a tenth of the power consumption. When it comes to computer vision, the company believes analog can outcompete digital.

“It will be very tough for digital systems to be able to keep up with analog processors in the coming years,” says Fick. He says Mythic’s M1076 analog chip stores 80 million weights directly on-chip, “which helps it be the lowest-latency processing solution for computer vision, outperforming all digital systems in its class.” In the opinion of proponents such as Fick, if digital computing’s issues present roadblocks to further performance and scale improvements, analog computing could offer us a way to keep the AI revolution going.

However, analog computing does have its downsides.

“It is more difficult and expensive to achieve high-precision computation with analog devices, which require more accurate manufacturing than digital devices,” says MacLennan. With digital technology, you just have to add bits to improve precision, which is cheaper than the multiplicative costs of more advanced techniques at the manufacturing level. Even analog advocates acknowledge there’s a time and a place for analog over digital.

“Since analog computers are not so easily programmed as digital computers, analog technology is suited best to applications—such as artificial neural networks—in which a restricted class of computations are required,” says MacLennan.

The Marriage of Physical and Digital

Some advocates for analog are baffled that one could even draw a line between analog and digital computing.

“It must be said that we live in an analog world,” says Spicer. “Even digital systems are actually analog systems, just ones with predefined analog thresholds for digital ones and zeroes.” He cites the example of the iPhone, a device that combines digital microprocessors with a host of analog systems such as accelerometers, a microphone, speakers, and a gyroscope.
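
Spicer’s point about thresholds can be sketched in a few lines. A digital input is a continuous voltage that the circuit chooses to read as a 0 or a 1. The threshold values below are illustrative assumptions in the style of classic TTL input levels, and the function name is hypothetical.

```python
V_LOW_MAX = 0.8   # assumed: at or below this voltage, read as logic 0
V_HIGH_MIN = 2.0  # assumed: at or above this voltage, read as logic 1


def to_logic_level(voltage_v):
    """Interpret a continuous voltage as a digital value, or None when it
    falls in the undefined band between the two thresholds."""
    if voltage_v <= V_LOW_MAX:
        return 0
    if voltage_v >= V_HIGH_MIN:
        return 1
    return None


for v in (0.3, 1.4, 3.1):
    print(v, to_logic_level(v))
```

The gap between the two thresholds is what gives digital logic its noise immunity: small disturbances to the analog signal do not change the value that is read.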

Analog’s real value comes in the marriage of artificial intelligence with the physical world.

This year, there has been a great deal of discussion around ChatGPT and the power of generative AI tools that help us communicate with machines using only natural language. Yet, that is only a bridge to something much bigger than interacting with a chatbot. AI developments now allow us a simple, easy, and usable interface with machines of any type, including physical ones.

That includes the next generation of physical machines being built by companies like Tesla (https://www.tesla.com) and Figure (https://www.figure.ai/), both of which are making humanoid robots for use in factories.

“[Analog] will compete best where small size and low power consumption are advantageous, such as in autonomous robots and drones,” says MacLennan. In other words, in the very vessels we are using to marry the digital wonders of artificial intelligence with agency in the physical world.

So it seems that analog technology today isn’t a competitor to digital, but a complement to it. It remains to be seen if digital computing can overcome or circumvent the breakdown in Dennard scaling, but in any event, analog technology fills specific, important gaps.

“Analog technology appears everywhere that a digital system needs to interface with the outside world,” says Spicer. “Analog techniques from the past are being rediscovered and reimplemented in silicon.”

