Hopes for quantum computing have long been buoyed by the existence of algorithms that would solve some particularly challenging problems with exponentially fewer operations than any known algorithm for conventional computers. Many experts believe, but have been unable to prove, that these problems will resist even the cleverest non-quantum algorithms.

Recently, researchers have shown the strongest evidence yet that even if conventional computers were made much more powerful, they probably still could not efficiently solve some problems that a quantum computer could.

That such problems exist is a long-standing conjecture about the greater capability of quantum computers. “It was really the first big conjecture in quantum complexity theory,” said computer scientist Umesh Vazirani of the University of California, Berkeley, who proposed the conjecture with then-student Ethan Bernstein in the 1993 paper (updated in 1997) that established the field.

That work, now further validated, challenged the cherished thesis that any general computer can simulate any other efficiently, since quantum computers will sometimes be out of reach of conventional emulation. Quantum computation is the only computational model, then or now, “that violates the extended Church-Turing thesis,” Vazirani said. “It overturned this basic fabric of computer science, and said: ‘here’s a new kid on the block, and it’s completely different and able to do totally different things.'”

Quantum Resources

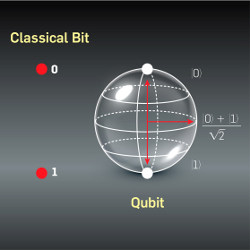

Conventional “classical” computers store information as bits than can be in one of two states, denoted 0 and 1. In contrast, a quantum degree of freedom, such as the spin of an electron or the polarization of a photon, can exist concurrently in a weighted mixture of two states. A set of, say, 50 of these “qubits” thus can represent all 250 (∼1015) combinations of the individual states. Manipulations of this assembly can be viewed as simultaneously performing a quadrillion calculations.

Performing a vast number of computations does not do much good, however, unless a unique answer can be extracted. Interrogating the qubits forces them into some specific combination of 0s and 1s, with probabilities that depend on their post-calculation weights. Critically, however, different initial configurations make quantum contributions to the weight that are complex numbers, which can cancel each other out as well as reinforce each other. The challenge is devising an algorithm for which this cancellation occurs for all configurations except the desired solution, so the eventual measurement reveals this answer.

Soon after Bernstein and Vazirani’s work, mathematician Peter Shor, working at AT&T Research in New Jersey as it spun off from Bell Labs, presented an algorithm that achieved this goal for determining the factors of a large integer. The security of public key cryptography schemes depends on this factorization being impractically time consuming, so the potential for a rapid quantum solution attracted a lot of attention.

Inspired by this and other concrete examples, researchers have been striving to assemble ever-larger systems of physical qubits in the lab that can preserve the delicate complex amplitudes of the qubits long enough to perform a calculation, and to correct the inevitable errors. In recent years, several competing implementations have gotten big enough (dozens of qubits) to achieve “quantum supremacy,” meaning solving selected problems faster than a conventional computer.

Classifying Complexity

Assessing comparative execution times is complicated by the fact that algorithms continually improve, sometimes dramatically. To compare techniques that have yet to be devised and machines that have yet to be built, computer scientists rely not on coding but on formal methods known as computational complexity theory.

These mathematical arguments can determine if an answer can be assured given access to specific resources, such as computational time or the depth of a corresponding circuit. An algorithm that is guaranteed to finish in “polynomial time,” meaning the runtime increases no faster than some fixed power of the size of the input, can be regarded as efficient. In contrast, many problems, notably those that require exhaustively searching many combinatorial possibilities, are only known to yield to methods whose execution time grows exponentially or worse with the size of the input.

Complexity theory divides problems into “complexity classes,” depending on the resources they need. Some of the best-known problems belong to the class P, consisting of problems whose solution can be found in polynomial time. A much larger class is NP, which includes problems for which a proposed solution can be verified as correct in polynomial time. NP includes such classic challenges as the traveling salesman problem and the graph vertex coloring problem, which researchers have been unable to show belong to P. Many experts strongly suspect that polynomial-time algorithms for many problems in NP have not been found because they do not exist, in which case P≠NP. This important question, regarded by many as the most important open question in theoretical computer science, remains unresolved, and a $1-million prize from the Clay Mathematics Institute awaits its answer.

To compare techniques that have yet to be devised and machines that have yet to be built, computer scientists rely on computational complexity theory.

Bernstein and Vazirani defined a new complexity class called BQP (Bounded Quantum Polynomial), which has access to quantum resources. BQP is closely analogous to the conventional class BPP (Bounded Probabilistic Polynomial), which has access to a perfect random-number generator and must not give a wrong answer too often. Currently, some problems having only stochastic solutions are known, but it is hoped that deterministic, “de-randomized” algorithms will eventually be found for them.

Consulting the Oracle

The relationship of the quantum class BQP to various conventional classes, however, continues to be studied, long after Bernstein and Vazirani suggested it includes problems beyond the scope of conventional techniques. “We have our conjectures and we can feel strongly about them, but every so often they are wrong,” Vazirani said. “A proof is really something to be celebrated.”

The new proof of separation does not apply to the pure versions of BQP and the other complexity classes addressed by the Vazirani-Bernstein conjecture. Similar to the long-standing unproven relationship of P and NP, “We almost never are able to actually separate these important classes of complexity theory,” said computer scientist Ran Raz of Princeton University in New Jersey and the Weizmann Institute in Israel. “We don’t know how.”

Instead, Raz and his former student Avishay Tal (now at Stanford University) performed what is called an oracle separation. Like its namesake from ancient Greece (or The Matrix movies), an oracle provides answers to profound questions without explaining how it got them. Roughly speaking, Raz and Tal compared the capabilities of quantum and classical algorithms that were given access to an oracle that answers a specific question. Provided with this oracle, they showed the quantum system could efficiently solve a carefully chosen problem more efficiently than the classical system could using the same oracle.

Lance Fortnow, a computer scientist at the Georgia Institute of Technology, said hundreds of proofs in complexity theory have relied upon such oracle separations. “They are a way for us to understand what kinds of problems are hard to prove and what kinds of results might be possible, but they’re not a definite proof technique,” he said. “We didn’t prove a separation between these two classes,” Raz agreed. “I can’t imagine that [such a separation] will be proved in our lifetime.”

“The basic ability to do Fourier transformation, that’s the heart of the power of quantum, at least most of the algorithms we know.”

“Already there were oracle separations of BQP and NP, BQP and P, and other classes,” Raz said. He and Tal now extend the argument to a super-charged class called the polynomial hierarchy, or PH. “This is what is stronger in our result,” he said. PH can be viewed as an infinite ladder of classes, starting with P and NP, in which successive rungs can build on the earlier ones by using logical constructions. Later classes invoke the earlier ones rather like a subroutine, for example by defining problems using them in a phrase such as “for every,” or “there exists.” “Almost all the problems that we encounter in everyday life are somewhere in the polynomial hierarchy,” Raz said.

If all NP problems had polynomial-time solutions, though, it turns out that the entire polynomial hierarchy would collapse into one class, PH=NP=P. The new result, though, shows that oracle-assisted BQP would still be separate. “The way I view the Raz-Tal oracle is they’re saying that even if P happened to equal to NP—that’s an unlikely case,” Fortnow said, “it’s still possible that quantum can do more than classical machines can.”

What Is It Good For?

“If we choose the right oracle,” Raz said, “we show that there is one problem that BQP will solve better than PH.” In addition to choosing the right oracle, he and Tal had to choose a problem that reveals quantum computation’s strength—and classical computation’s weakness—but they only needed one example.

They adapted an earlier suggestion by Scott Aaronson (then at the Massachusetts Institute of Technology) in which the computer must determine if one sequence of bits is (approximately) the Fourier transform of another. Computing such frequency spectra is a natural task for quantum computations, and Shor’s algorithm exploits precisely this strength to identify periodicities that expose prime factors of the target. “The basic ability to do Fourier transformation,” Fortnow said, “that’s the heart of the power of quantum, at least most of the algorithms we know.”

“The hard part is to give the lower bound for the polynomial hierarchy,” Raz said. To show that no such algorithm, even with access to the oracle, could solve it efficiently, he and Tal tweaked Aaronson’s suggestion so they could apply recent discoveries about pseudorandom sequences.

These and the earlier results illustrate what quantum computers will be able to do, once they get very large and perform like the idealized models, Vazirani said. What is less clear is how to effectively use the less-capable machines that are now being developed. “What will we be able to do with those?” he asked. “That’s one of the things that we are working hard to try to figure out.”

Bernstein, E. and Vazirani, E.

Quantum Complexity Theory, SIAM J. Comput. 26, 1411 (1997).

Shor P.W.

Polynomial-Time Algorithms for Prime Factorization and Discrete Logarithms on a Quantum Computer, SIAM Journal of Computing 26, pp. 1484–1509 (1997).

Raz, R. and Tal, A.

Oracle Separation of BQP and PH, Electronic Colloquium on Computational Complexity, Report No. 107 (2018).

Join the Discussion (0)

Become a Member or Sign In to Post a Comment