On June 7, 2014, a Turing Test competition organized by the University of Reading to mark the 60th anniversary of Alan Turing’s death was won by a Russian chatterbot posing as a 13-year-old Ukrainian boy named Eugene Goostman, which convinced one-third of the judges that it was human. The media was abuzz, claiming that a machine had finally passed the Turing Test.

The test was proposed by Turing in his 1950 paper, "Computing Machinery and Intelligence," in which he considered the question, "Can machines think?" To avoid the philosophical conundrum of having to define "think," Turing proposed an "Imitation Game," in which a machine, communicating with a human interrogator via a "teleprinter," attempts to convince the interrogator that it (the machine) is human. Turing predicted that by the year 2000 it would be possible to fool an average interrogator, after five minutes of questioning, with probability of at least 30%. Because Goostman fooled one-third of its judges, that prediction led to the claim that Goostman had passed the Turing Test.

While one commentator argued that Goostman’s win meant that "we need to start grappling with whether machines with artificial intelligence should be considered persons," others argued that Goostman did not really pass the Turing Test, or that another chatterbot, Cleverbot, had already passed it in 2011.

The real question, however, is whether the Turing Test is at all an important indicator of machine intelligence. The reality is that the Imitation Game is philosophically the weakest part of Turing’s 1950 paper. The main focus of his paper is whether machines can be intelligent. Turing answered in the affirmative, and the bulk of the paper is a philosophical analysis justifying that answer, an analysis that is as fresh and compelling today as it was in 1950. But the analysis suffers from one major weakness: the difficulty of defining intelligence. Turing decided to avoid philosophical controversy and define intelligence operationally: a machine is considered intelligent if it can act intelligently. But Turing’s choice of a specific intelligence test, the Imitation Game, was arbitrary and lacked justification.

The essence of Turing’s approach, which is to treat the claim of intelligence of a given machine as a theory and subject it to "Popperian falsification tests," seems quite sound, but this approach requires a serious discussion of what counts as an intelligence test. In a 2012 Communications article, "Moving Beyond the Turing Test," Robert M. French argued that the Turing Test is not a good test of machine intelligence. As Gary Marcus pointed out in a New Yorker blog, successful chatterbots excel more in quirkiness than in intelligence. It is easy to imagine highly intelligent fictional beings, such as Star Trek’s Mr. Spock, badly failing the Turing Test. In fact, it is doubtful whether Turing himself would have passed the test. In a 2003 paper in the Irish Journal of Psychological Medicine, Henry O’Connell and Michael Fitzgerald concluded that Turing had Asperger syndrome. While this "diagnosis" should not be taken too seriously, there is no doubt that Turing was highly eccentric and quite possibly would have failed the Turing Test had he taken it, though his own intelligence is beyond doubt.

In my opinion, Turing’s original question, "Can machines think?", is not a useful one. As some philosophers have argued, thinking is an essentially human activity, and it does not make sense to attribute it to machines, even if they act intelligently. Turing’s question should have been "Can machines act intelligently?" That is really the question his paper answers affirmatively, and posing it would have led Turing to confront what it means to act intelligently.

Just as with Popperian falsification tests, one should not expect a single intelligence test, but rather a set of intelligence tests, each probing a different aspect of intelligence. Some intelligence tests have already been passed by machines, for example, chess playing, autonomous driving, and the like; some, such as face recognition, are about to be passed; and some, such as text understanding, are yet to be passed. Quoting French, "It is time for the Turing Test to take a bow and leave the stage." The way forward lies in identifying aspects of intelligent behavior and finding ways to mechanize them. The hard work of doing so may be less dramatic than the Imitation Game, but it is no less important. This is exactly what machine-intelligence research is all about!

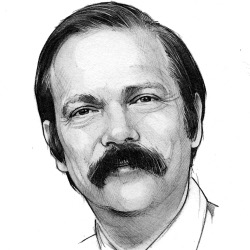

Moshe Y. Vardi, EDITOR-IN-CHIEF