Throughout the history of computer science, leading researchers—including Turing, von Neumann, and Minsky—have looked to nature. This inspiration has often led to extraordinary results, some of which acknowledged biology even in their names: cellular automata, neural networks, and genetic algorithms, for example.

Computing and biology have been converging ever more closely for the past two decades, but with a vision of computing as a resource for biology. The resulting field of bioinformatics addresses structural aspects of biology, and it has produced databases, pattern manipulation and comparison methods, search tools, and data-mining techniques.47,48 Bioinformatics’ most notable and successful application so far has been the Human Genome Project, which was made possible by the selection of the correct abstraction for representing DNA (a language with a four-character alphabet).48 But things are now proceeding in the reverse direction as well. Biology is experiencing a heightening of interest in system dynamics by interpreting living organisms as information manipulators.30 It is thus moving toward “systems biology.”31 There is no general agreement on systems biology’s definition, but whatever we select must embrace at least four characterizing concepts. Systems biology is a transition:

- From qualitative biology toward a quantitative science;

- From reductionism to system-level understanding of biological phenomena;

- From structural and static descriptions to functional and dynamic properties; and

- From descriptive biology to mechanistic/causal biology.

These features highlight the fact that causality between events, the temporal ordering of interactions and the spatial distribution of components are becoming essential to addressing biological questions at the system level. This development poses new challenges to describing the step-by-step mechanistic components of phenotypical phenomena, which bioinformatics does not address.9

One of the philosophical foundations of systems biology is mathematical modeling, which specifies and tests hypotheses about systems;7 it is also a key aspect of computational biology because it deals with the solution of systems of equations (models) through computer programs.37 Solving systems of equations in this way is sometimes termed “simulation.” Whatever the name, the main concept to be exploited is instead the algorithm, together with the (programming) languages used to specify it. We can then recover temporal, spatial, and causal information on the modeled systems by using well-established computing techniques that deal with program analysis, composition, and verification; integrated software-development environments; and debugging tools, as well as computational complexity and algorithm animation. The convergence between computing and systems biology on a peer-to-peer basis is then a valuable opportunity that can fuel the discovery of solutions to many of the current challenges in both fields, thereby moving toward an algorithmic view of systems biology.

The main distinction between algorithmic systems biology and other techniques used to model biological systems stems from the intrinsic difference between algorithms (operational descriptions) and equations (denotational descriptions). Equations specify dynamic processes by abstracting the steps performed by the executor, thus hiding from the user the causal, spatial, and temporal relationships between those steps. Equations describe the changing of variables’ values when a system moves from one state to another, while algorithms highlight why and how that system transition occurs. We could simplify the difference by stating that we move from the pictures described by equations to the film described by algorithms.

Algorithms precisely describe the behavior of systems with discrete state spaces, while equations describe an average behavior of systems with continuous state spaces. However, it must be noted that hybrid approaches exist; they manipulate discrete state spaces annotated with continuous variables through algorithms.2,15

It is well known in computer science that input-output relationships are not suitable for characterizing the behavior of concurrent systems, where many threads of execution are simultaneously active (in biological systems, millions of interactions may be involved). Concurrency theory was developed as a formal framework in which to model and analyze parallel, distributed, and mobile systems, and this led to the definition of specific programming primitives and algorithms. Equations, by contrast, are sequential tools that attempt to model a system whose behavior is completely determined by input-output relations. The sequential assumption of equations also affects the notion of causality, which under that assumption coincides with the temporal ordering of events. In a parallel context, causality is instead a function of concurrency14 and may not coincide with the temporal ordering of the observed events. Therefore relying on a sequential modeling style to describe an inherently concurrent system immediately makes the modeler lose the connection with causality.
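Why input-output relations fail for concurrent systems can be illustrated with a minimal Python sketch. The two processes P and Q, their read and write steps, and the helper names `interleavings` and `run` are assumptions introduced purely for illustration; they are not taken from any of the cited tools.

```python
from itertools import combinations

def interleavings(a, b):
    """Yield every merge of sequences a and b that keeps each one's internal order."""
    n, m = len(a), len(b)
    for positions in combinations(range(n + m), n):
        chosen = set(positions)          # slots of the merged schedule given to a
        ia, ib = iter(a), iter(b)
        yield [next(ia) if i in chosen else next(ib) for i in range(n + m)]

def run(schedule, x=0):
    """Execute a schedule of (process, operation) steps on a shared quantity x."""
    regs = {}                            # private register of each process
    for proc, op in schedule:
        if op == "read":
            regs[proc] = x               # copy the shared value
        else:                            # "write": store the incremented private copy
            x = regs[proc] + 1
    return x

# Hypothetical processes: each reads x, then writes back x + 1.
P = [("P", "read"), ("P", "write")]
Q = [("Q", "read"), ("Q", "write")]

outcomes = {run(s) for s in interleavings(P, Q)}
print(sorted(outcomes))  # [1, 2]
```

The same input (x = 0) and the same two programs yield either 1 or 2, depending only on the relative order of the steps. No single function from inputs to outputs describes this system; the set of possible schedules is part of its meaning.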

The full involvement of computer science in systems biology can be an arena in which to distinguish between computing and mathematics, thereby clarifying a discussion that has been going on for 40 years.21,33 Algorithms and the coupling of executions/executors are key to that differentiation.

Algorithms force modelers/biologists to think about the mechanisms governing the behavior of the system in question. Therefore they are both a conceptual tool that helps to elucidate fundamental biological principles and a practical tool for expressing and favoring computational thinking.53 Similar ideas have been recently expressed in Ciocchetta et al.12

Algorithms are quantitative when the mechanism for selection of the next step is based on probabilistic/temporal distributions associated with either the rules or the components of the system being modeled. Because the dynamics of biological systems are mainly driven by quantities such as concentrations, temperatures, and gradients, we must clearly focus on quantitative algorithms and languages.
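One common way to make step selection quantitative is a race between exponentially distributed delays. The sketch below assumes that reading; the rule names `bind` and `degrade`, their rates, and the function `next_step` are hypothetical and serve only to illustrate the idea.

```python
import random

def next_step(rates, rng):
    """Pick the next rule by a race: every enabled rule draws an exponential
    delay with its own rate, and the rule with the smallest delay fires."""
    delays = {rule: rng.expovariate(rate) for rule, rate in rates.items() if rate > 0}
    winner = min(delays, key=delays.get)
    return winner, delays[winner]

rng = random.Random(42)                      # fixed seed for reproducibility
rates = {"bind": 3.0, "degrade": 1.0}        # hypothetical rules and rates
wins = {"bind": 0, "degrade": 0}
runs = 10_000
for _ in range(runs):
    rule, _delay = next_step(rates, rng)
    wins[rule] += 1

frac_bind = wins["bind"] / runs
print(round(frac_bind, 2))
```

For exponential delays, the probability that a rule wins the race equals its rate divided by the sum of all rates, so `bind` should win about three times out of four here. Changing a rate changes both which step is likely to come next and how long the system waits for it.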

Algorithms can help in coherently extracting general biological principles that underlie the enormous amount of data produced by high-throughput technologies. Algorithms can also organize data in a clear and compact way, thus producing knowledge from information (data). This point actually aligns with the idea of Nobel laureate Sydney Brenner that biology needs a theory able to highlight causality and abstract data into knowledge so as to elucidate the architecture of biological complexity.

Algorithms need an associated syntax and semantics in order to specify their intended meaning so that an executor can precisely and unambiguously perform the steps needed to implement them. In this way, we are entering the realm of programming languages from both a theoretical and practical perspective.

The use of programming languages to model biological systems is an emerging field that enhances current modeling capabilities (richness of aspects that can be described as well as the ease, composability, and reusability of models).42 The underlying metaphor is one that represents biological entities as programs being executed simultaneously and that represents the interactions of two entities by the exchange of messages between the programs.46 The biological entities involved in a biological process and the corresponding programs in the abstract model are in a 1:1 correspondence, thus avoiding the need to deal directly with the combinatorial explosion of variables needed in the mathematical approach.
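The metaphor of entities as programs that interact by exchanging messages can be sketched in a few lines of Python. This is a toy rendering, not the syntax or semantics of any actual process calculus: the `BEHAVIOUR` table, the channel name `bind`, and the assumption that an enzyme is free again immediately after the interaction are all illustrative simplifications.

```python
import random

# Hypothetical behaviours: state -> (action, channel, next_state).
# An enzyme waits to receive on "bind"; a substrate offers to send on it.
BEHAVIOUR = {
    "Enzyme":    ("recv", "bind", "Enzyme"),    # catalysis abstracted away
    "Substrate": ("send", "bind", "Product"),
    # "Product" has no entry: it is inert
}

def enabled_pairs(population):
    """All (sender, receiver) index pairs that can synchronise on a shared channel."""
    pairs = []
    for i, s in enumerate(population):
        for j, r in enumerate(population):
            bs, br = BEHAVIOUR.get(s), BEHAVIOUR.get(r)
            if bs and br and bs[0] == "send" and br[0] == "recv" and bs[1] == br[1]:
                pairs.append((i, j))
    return pairs

def step(population, rng):
    """Fire one randomly chosen interaction; return False if none is possible."""
    pairs = enabled_pairs(population)
    if not pairs:
        return False
    i, j = rng.choice(pairs)
    population[i] = BEHAVIOUR[population[i]][2]   # sender moves to its next state
    population[j] = BEHAVIOUR[population[j]][2]   # receiver moves to its next state
    return True

rng = random.Random(0)
population = ["Enzyme"] * 2 + ["Substrate"] * 5   # one program per entity
steps = 0
while step(population, rng):
    steps += 1

print(steps, population.count("Product"))  # 5 5
```

Each list element stands for one biological entity, which is the 1:1 correspondence described above. Adding a new kind of entity means adding one entry to the behaviour table rather than enumerating new variables for every possible complex.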

This metaphor explicitly refers to concurrency. Indeed, concurrency is endemic in nature, and we see this in examples ranging from atoms to molecules in living organisms to the organisms themselves to populations to astronomy. If we are going to reengineer artificial systems to match the efficiency, resilience, adaptability, and robustness of natural systems, then concurrency must be a core design principle that, at the end of the day, will simplify the entire design and implementation process. Concurrency, therefore, must not be considered as just a tool to improve the performance of sequential programming languages and architectures, which is the standard practice in most current cases.

Some programming languages—those that address concurrency as a core primitive issue and that aim at modeling biological systems—are in fact emerging from the field of process calculi.5,15 These concurrent programming languages are very promising for establishing a link between artificial concurrent programming and natural phenomena, thus contributing to the exposure of computer science to experimental natural sciences. Further, concurrent programming languages are suitable candidates for easily and efficiently expressing the mechanistic rules that propel algorithmic systems biology. The suitability of these languages is reinforced by their clean and formal definition, which supports both the verification of properties and the analysis of systems and provides no engineering surprises, as could happen with classical thread and lock mechanisms.52

A recent paper by Nobel laureate Paul Nurse maintains that a better understanding of living organisms requires “both the development of the appropriate languages to describe information processing in biological systems and the generation of more effective methods to translate biochemical descriptions into the functioning of the logic circuits that underpin biological phenomena.”38 This description perfectly reflects the need for a deeper involvement of computer science in biology and the need for an algorithmic description of life based on a suitable language that makes analyses easier. Nurse’s statement implicitly assumes that the modeling techniques adopted so far are not adequate to address the new challenges raised by systems biology.

Finally, it is important to note that process calculi are not the only theoretical basis of algorithmic systems biology. Petri nets, logic, rewriting systems, and membrane computing are other relevant examples of formal methods applied to systems biology (for a collection of tutorials see Bernardo et al.6). Other approaches that are more closely related to software-design principles are the adaptation of UML to biological issues (see www.biouml.org) and statecharts.28 Cellular automata,27 with their Game of Life, need to be considered as well.

The Role of Computing

According to Denning,18 the field of computing addresses information processes, both artificial and natural, by manipulating different layers of abstraction at the same time and structuring their automation on a machine.53 These abilities are relevant to the convergence of computer science and biology because they help ensure that the correct modeling abstraction for biological systems is found, rather than one created solely by mimicking nature. A beautiful example is that airplanes fly, though they do not flap their wings.50

Broadening the range of application domains drawn from other fields is the main strategy for making computer science grow as a discipline, for improving the core themes developed so far, and for making it more accessible to a broader community.32 Therefore adding an algorithmic component to systems biology is an especially valuable opportunity, as this new approach covers, in a single challenge, four core practices of computer science: programming, systems thinking, modeling, and innovation.17 Algorithmic systems biology is a bona fide case of innovation fostered by computer science, as it uses novel ideas for modeling and analyzing experiments. Moreover, the biotech and pharmaceuticals industries could adopt algorithmic systems biology in order to streamline their organizations and internal processes with the aim of improving productivity.52

To have an impact on the scientific community and to truly foster innovation, algorithmic systems biology must provide conceptual and software tools that address real biological problems. Hence the techniques and prototypes developed and tested on proof-of-concept examples must scale smoothly to real-life case studies. Scalability is not a new issue in computing; it was first raised several decades ago when computers started to become connected over dispersed geographical regions and the first high-performance architectures were emerging.39 Algorithmic systems biology can build on the large set of successfully defined and novel techniques that subsequently were developed—particularly in the areas of programming languages, operating systems, and software-development environments—to address the scalable specification and implementation of large distributed systems.

Consider the exploitation, at user level, of the Internet. The dynamics (evolution and use) of the Internet have no centralized point of control and are based both on the interaction between nodes and on the unpredictable birth and death of new nodes—characteristics similar to the simultaneously active threads of interactions in living systems. Yet although biological processes share many similarities with the dynamics of large computer networks, they still have some unique features. These include self-reproduction of components (dating back to von Neumann’s self-reproducing automata in computing and to Rosen’s systems, closed under efficient causality, in biology), auto-adaptation to different environments, and self-repair. Therefore it seems natural to check whether the programming and analysis techniques developed for computer networks and their formal theories could shed light on biology when suitably adapted.

Such innovation should be facilitated in the life sciences community by presenting computers as high-throughput tools for quantitatively analyzing information processes and systems, tools that can be extensively customized through software to work with specific processes or systems. In other words, software may be used to plan and control information experiments to serve a myriad of purposes.

The topic of simulation in particular needs some consideration. Simulation has evolved since the early days of computing into a more quantitative algorithmic discipline: the rules of interaction between components are used to build programs, as opposed to abstracting overall behavior through equations. The execution of algorithmic simulations relies on deep computing theories, while mathematical simulations are solved with the support of computer programs (where computing is just a service).22 Execution of algorithms therefore exhibits emergent behavior produced at system level, through the set of local interactions between components, without the need to specify that behavior from the beginning. This property is crucial to the predictive power of the simulation approach, especially for biological applications. The complex interactions of species, the sensitivity of their interactions (expressed through stochastic parameters), and the localization of the components in a three-dimensional hierarchical space make it impossible to understand the dynamic evolution of a biological system without a computational execution of the models. The algorithms that are executed on top of stochastic engines and governed by the quantities described here are fundamental to discovering new organismic behavior and thus to creating new biological hypotheses.

Algorithmic systems biology can also be easily integrated with bioinformatics. An example that would benefit from such integration is the modeling of the immune system, because the dynamics of an immune response involve a genomic resolution scale in addition to the dimensions of time and space. Inserting genomic sequences of viruses into models is quite easy for an algorithmic modeling approach, but it is extremely difficult in a classic mathematical model,45 which suffers from generalization because a population of heterogeneous agents is usually abstracted into a single continuous variable.8 Deepening our understanding of the immune system through computing models is fundamental to properly attacking infectious illnesses such as malaria or HIV as well as autoimmune diseases that include rheumatoid arthritis and type I diabetes. Also, computer science can exploit such models to further propel research on artificial immune systems in the field of security.23

Design principles of large software systems can help in developing an algorithmic discipline not only for systems biology but also for synthetic biology—a new area of biological research that aims at building, or synthesizing, new biological systems and functions by exploiting new insights from science and engineering. An algorithmic approach can help propel this field by providing an in-silico library of biological components that can be used to derive models of large systems; such models could be ready for simulation and analysis just by composing the available modules.16

The notion of a library of (biological) components, equipped with attributes governing their interaction capabilities and automatically exploited by the implementation of the language describing systems dynamics, substantially contributes to overcoming the misleading concept of pathways that fills biological papers, where a pathway is posited as an almost-sequential chain of interactions. The theory of concurrency, however, maintains that neglecting the context of interactions (all the other possible routes of the system) produces an incomplete and untrustworthy understanding of the system’s dynamics. Metaphorically, it is not possible to understand the capacity of the traffic organization of a city by looking at single routes from one point to another, or to fully appreciate a goal in a team sport by looking at the movements of a single player.

At a different level of abstraction, the study of pathways is a reductionist approach that does not take pathway interactions (crosstalk) into account and does not help in unraveling emergent network behavior. The management of hierarchies of interconnected specifications, so typical of computer science, is fundamental for interpreting what systems behavior means, depending on the context and the properties of interest. It could be easy to move to biological networks by considering the biological entities as a collection of interacting processes and by studying the behavior of the network through the conceptual tools of concurrency theory.

Note also that a model repository, representing the dynamics of biological processes in a compact and mechanistic manner, would be extremely valuable in heightening the understanding of biological data and the basic principles governing life. Such a repository would favor predictions, allow for the optimal design of further experiments, and consequently stimulate the movement from data collection to knowledge production.

Algorithmic systems biology raises novel issues in computing by stepping away from the qualitative descriptions typical of programming languages toward a new quantitative computing. Thus computing can fully become an experimental science, as advocated by Denning,20 that is suited to supporting systems biology. Core computing fields would themselves benefit from a quantitative approach; a measure of the level of satisfaction from Web service contracts, for example, or the quality of services in telecommunication networks could enhance our current software-development techniques. Another example is robotics, where a myriad of sensors must be synchronized according to quantitative values. Quantitative computing would also foster the move toward a simulation-based science that is needed to address the ever-larger scale and complexity of scientific questions.

It may soon become impossible to have the whole system we design available for testing (the new Boeing and Airbus aircraft are examples), and hence we need to find alternatives for studying and validating the system’s behavior. Simulation of formal specifications is one possibility. Indeed, the programming languages used to model biological systems implement stochastic runtime supports that help in addressing extremely relevant questions in biology such as “How does order emerge from disorder?”44 The answers could provide us with completely new ways of organizing robust and self-adapting networks, both natural and technological. Further, the discrete-state nature of algorithmic descriptions makes them suitable for implementing the stochastic simulation algorithm by Gillespie25 or its variants. This approach, originally developed for biochemical simulations, is also suitable for quantitatively simulating systems from other domains; in fact, there are cases in which it can be much faster than classical event-driven simulation.34
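For readers unfamiliar with the stochastic simulation algorithm, a minimal Python sketch of its direct method follows. It assumes mass-action propensities computed from combinatorial counts of reactant molecules; the enzymatic reaction set, the rate constants, and the function name `gillespie` are hypothetical choices made for illustration and are not drawn from the cited work.

```python
import math
import random

def gillespie(state, reactions, t_end, rng):
    """Direct-method stochastic simulation.
    `reactions` is a list of (rate_constant, reactants, products), where
    reactants and products map species names to stoichiometric counts."""
    state = dict(state)                      # do not mutate the caller's dict
    t, trajectory = 0.0, [(0.0, dict(state))]
    while True:
        # Propensity of each reaction: rate constant times the number of
        # distinct reactant combinations currently available.
        props = []
        for k, reactants, _ in reactions:
            a = k
            for species, n in reactants.items():
                a *= math.comb(state[species], n)
            props.append(a)
        total = sum(props)
        if total == 0:                       # nothing can fire any more
            break
        t += rng.expovariate(total)          # waiting time to the next event
        if t > t_end:
            break
        # Choose which reaction fires, proportionally to its propensity.
        idx = rng.choices(range(len(reactions)), weights=props)[0]
        _, reactants, products = reactions[idx]
        for species, n in reactants.items():
            state[species] -= n
        for species, n in products.items():
            state[species] += n
        trajectory.append((t, dict(state)))
    return trajectory

# Hypothetical enzymatic system: E + S <-> ES -> E + P
reactions = [
    (0.01, {"E": 1, "S": 1}, {"ES": 1}),
    (0.10, {"ES": 1}, {"E": 1, "S": 1}),
    (0.10, {"ES": 1}, {"E": 1, "P": 1}),
]
initial = {"E": 10, "S": 100, "ES": 0, "P": 0}
trajectory = gillespie(initial, reactions, t_end=50.0, rng=random.Random(1))
print(len(trajectory), trajectory[-1][1])
```

Each run with a different seed produces a different trajectory, while conserved quantities such as the total amount of enzyme (E + ES) hold at every step. The state is a vector of integer molecule counts, which is what makes the discrete-state descriptions discussed above a natural fit for this algorithm.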

Algorithmic systems biology completely adopts the main assets of our computing discipline: hierarchical, systems, and algorithmic thinking in modeling, programming and innovating. Moreover, because breakthrough results are sometimes the outcome of processes that do not perfectly adhere to the scientific method based on experiment and observations, creativity can play a crucial role in opening minds and propelling visions of new findings in the future. In fact, we can further exploit algorithmic descriptions of biology for the synthesis (in silico) of completely new organisms by using our conceptual tools in an imaginative way that is similar to the engineering of novel solutions and applications via software in computer science. This approach would parallel that of another emerging field—synthetic biology—which aims to create unnatural systems assembled from natural components to study their behavior. Synthesis, in other words, is a fundamental process that allows us to understand phenomena that cannot be easily captured by analysis and modeling. For instance, the synthesis of a minimal cell would help in understanding the fundamental principles of self-replicating systems and evolution, which are the core elements of life.10,24 Once again, computer science is a perfect vehicle for such inquiry, in which analysis and synthesis are always interwoven. Hence its past experience can substantially help in addressing the key issues of systems and synthetic biology.

Challenges and Future Directions

The main challenges inherent in building algorithmic models for the system-level understanding of biological processes include the relationship between low-level local interactions and emergent high-level global behavior; the partial knowledge of the systems under investigation; the multilevel and multiscale representations in time, space, and size; the causal relations between interactions; and the context-awareness of the inner components. Therefore the modeling formalisms that are candidates for propelling algorithmic systems biology should be complementary to and interoperable with mathematical modeling, address parallelism and complexity, be algorithmic and quantitative, express causality, and be interaction-driven, composable, scalable, and modular.

Composability, the ability to characterize a system starting from the descriptions of its subsystems and a set of rules for assembling them, is fundamental to addressing the complexity of the application domain and at the same time to exploiting the benefits of parallel architectures such as multicore and many-core processors. Composability can be either shallow (that is, syntactic) or deep (semantic).13 Algorithmic systems biology needs both of these aspects of composability: models of biological systems must be built by shallow composition of building blocks taken from a library, and the specification of the overall system’s behavior must be obtained by deep composition of the representation of the building blocks’ behavior.

A relevant example relates to interactions, which can be studied on the molecular-machinery level (at one extreme) and on the population level (at the other). Metagenomics—the analysis of complex ecosystems as metaorganisms or complex biological networks—is an exciting and challenging field in which algorithms could help explain fundamental phenomena that are still not completely understood; one such phenomenon is horizontal gene transfer between bacteria (whereby bacteria exchange pieces of genome within the same generation to improve their adaptation to the environment, as in developing resistance to antibiotics). The success of these investigations is strictly tied to the identification of the right level of abstraction within the hierarchies of interactions (from molecules to organisms). Because the comprehension of how life organizes itself into cells, organisms, and communities is a major challenge that systems biology strives to understand,40 and because computer science is continuously shifting between various coherent views of the same artificial system, depending on the properties of interest, its capabilities could be crucial in addressing such issues in natural systems.

Another example is rhythmic behavior, which is so common in biological systems that understanding it is crucial to unraveling the dynamics of life.26 Rhythms have been a key point of interest in mathematical and computational biology since their earliest days51—a century of studies identified feedback processes (both positive/forward and negative/backward loops) and cooperativity as main sources of unstable behavior. These general control structures have a strong similarity to the primitives of concurrent programming languages used to specify the flow of control—for instance, the dichotomy of the cooperation and competition of processes to access resources; the infinite behavior of drivers of resources in operating systems; and conditional guarded commands to choose the next step to be performed. Once again, the full theory developed to cope with concurrency in artificial systems perfectly couples with algorithmic descriptions of biological systems, yielding a new reference framework in which computer science is a novel foundation for studying and understanding cellular rhythms.

Multiscale integration (in space, time, and size) is a major issue in current systems biology3 as well. The very essence of the multiple levels of abstraction that govern computer science, which enable it to address phenomena that span several orders of magnitude (from one clock cycle [nanoseconds] to a whole computation [hours]),21 can help unravel and master the complexity of genome-wide modeling of biological systems. Thus the dynamic relationship between the parts and the whole of a system that seems to be the essence of systems biology is also a keystone for managing artificial (computing) systems. Such a relationship was even used to define computer science.36

Another relevant aspect of systems biology is the sensitivity of a network’s behavior to the quantitative parameters that govern its dynamics41,51—for instance, the concentration of species in a system or their affinity for interaction affects the speed of the reactions, thereby affecting the system’s overall behavior. Current developments such as variance or uncertainty output analysis usually consider a biological system as a black box that implements a function from inputs to outputs, assuming the system is deterministic.35 But as discussed earlier, I/O relationships are not the best way, or even a correct way, of defining the semantics of concurrent programs; different runs with the same inputs may generate different outcomes because of the relative speed of subcomponents. Given that biological systems are massively concurrent—not deterministic—a new algorithmic language-based modeling approach can certainly create new avenues for the sensitivity analysis of networks. That is, simulation-based science can turn sensitivity analysis of highly parallel systems into an observation-driven analysis, based on model-checking and verification techniques developed over the last 30 years for concurrent systems. The new findings could in turn benefit computer science itself.

Algorithmic systems biology will be innovative and successful if the life-sciences community actually uses the available conceptual and computational tools for modeling, simulation, and analysis. To ease this task, computing tools must hide as many formal details as possible from users, and here the growing and important area of software visualization can play a critical role. Visual metaphors of algorithm animations will help biologists understand how systems evolve, even while the scientists remain bound to their classical “picture” representations. Such pictures, however, may more profitably be mapped into “films.”

A final remark pertains to the comparison of different systems. Equivalences are a main tool in computer science for verifying computing systems; they can be used, for instance, to ensure that an implementation is in agreement with a specification. They abstract as much as possible from syntactic descriptions and instead focus on specifications’ and implementations’ semantics. So far, biology has focused on syntactic relationships between genes, genomes, and proteins, but an entirely new avenue of research is the investigation of the semantic equivalences of biological entities’ interactions in complex networks. This approach could lead to new visions of systems and reinforce computer science’s ability to enhance systems biology.
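To give a flavor of what a semantic equivalence checks, the Python sketch below tests strong bisimilarity on a small labelled transition system using a naive greatest-fixpoint computation. The example is the classic pair from concurrency theory, a.(b + c) versus a.b + a.c; the state names and the functions `bisimilar` and `traces` are illustrative assumptions, and any biological reading of the labels is left to the reader.

```python
def traces(lts, s):
    """All finite label sequences from state s (terminates only for acyclic systems)."""
    result = {()}
    for a, s2 in lts[s]:
        result |= {(a,) + tr for tr in traces(lts, s2)}
    return result

def bisimilar(lts, p, q):
    """Naive greatest-fixpoint check of strong bisimilarity on a finite system.
    `lts` maps each state to a set of (label, successor) pairs."""
    states = list(lts)
    rel = {(s, t) for s in states for t in states}   # start from all pairs
    changed = True
    while changed:
        changed = False
        for s, t in list(rel):
            # Every move of s must be matched by t, and vice versa,
            # with the successors still related.
            forth = all(any(a == b and (s2, t2) in rel for b, t2 in lts[t])
                        for a, s2 in lts[s])
            back = all(any(a == b and (s2, t2) in rel for a, s2 in lts[s])
                       for b, t2 in lts[t])
            if not (forth and back):
                rel.discard((s, t))
                changed = True
    return (p, q) in rel

lts = {
    "p0": {("a", "p1")},                 # a.(b + c): choose after a
    "p1": {("b", "0"), ("c", "0")},
    "q0": {("a", "q1"), ("a", "q2")},    # a.b + a.c: choose while doing a
    "q1": {("b", "0")},
    "q2": {("c", "0")},
    "0": set(),
}

print(traces(lts, "p0") == traces(lts, "q0"))  # True
print(bisimilar(lts, "p0", "q0"))              # False
```

The two systems can perform exactly the same sequences of actions, yet they are not bisimilar, because the second one commits to b or c at the moment it performs a. An equivalence of this kind distinguishes systems by when choices are made, which a comparison of syntactic descriptions or of observed sequences alone cannot do.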

Impact

The integration of computer science and systems biology into algorithmic systems biology is a win-win strategy that will affect both disciplines, scientifically and technologically.

The scientific impact of accomplishing our vision, aided by feedback from the increased understanding of basic biological principles, will be in the definition of new quantitative theoretical frameworks. These frameworks can then help us address the increasing concurrency and complexity—observed in asynchronous, heterogeneous, and decentralized (natural/artificial) systems—in a verifiable, modular, incremental, and composable manner. Further, the definition of novel mechanisms for quantitative coordination and orchestration will produce new conceptual frameworks able to cope with the growing paradigm of distributing the logic of application between local software and global services. The definition of new schemas to store data related to the dynamics of systems and the new query languages needed to retrieve and examine such records will create novel perspectives. They, in turn, will allow the building of data centers that provide added value to globally available services.

Algorithmic systems biology can contribute to the future both of life sciences and natural sciences through interconnecting models and experiments.

Another major scientific impact will be the definition of a new philosophical foundation of systems biology that is algorithmic in nature and allows scientists to raise new questions that are out of range for the current conceptual and computational supports. An example is the interpretation of pathways (which do not exist per se in nature) as a reductionist approach for understanding the behavior of networks (collections of interwoven pathways interacting and working simultaneously) at the system level.

The technological impact of merging computer science and systems biology will be the design and implementation of artificial biology laboratories capable of performing many more experiments than what is currently feasible in real labs—and at lower cost (in terms both of human and financial resources) and in less time. These labs will allow biologists to design, execute, and analyze experiments to generate new hypotheses and develop novel high-throughput tools, resulting in advances in experimental design, documentation, and interpretation as well as a deeper integration between “wet” (lab-based) and “dry” research. Moreover, the artificial biology laboratories will be a main vehicle for moving from single-gene diseases to multifactorial diseases, which account for more than 90% of the illnesses affecting our society.

A deeper look at the causes of multifactorial diseases can positively influence their diagnosis and management. But health is not the only practical application of algorithmic systems biology. Comprehension of the basic mechanisms of life, coupled with engineered environments for synthetic biological design, can lead us toward the use of ad hoc bacteria to repair environmental damage as well as to produce energy.

Another major technological impact will be computer scientists’ ability to properly address the challenges posed by the hardware revolution, which increasingly emphasizes parallelism over processor speed, through new integrated programming environments suited to concurrency and complexity.

Conclusion

Quantitative algorithmic descriptions of biological processes add causal, spatial, and temporal dimensions to the behavior of molecular machinery, dimensions that are usually hidden in the equations. Algorithmic systems biology allows us to take a step forward in our understanding of life by transforming collections of pictures (cartoons) into spectacular films (the mechanistic dynamics of life). In fact, the languages and algorithms emerging from quantitative computing can be instrumental not only to systems biology but also to the scientific understanding of interactions in general.
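
To make the contrast between “pictures” and “films” concrete, the following is a minimal sketch rather than a method taken from this article. It assumes a hypothetical toy system, the reversible binding A + B <-> C, and simulates it with Gillespie's direct method, a standard stochastic simulation algorithm chosen here purely for illustration. The species, the rate constants, and the names `REACTIONS` and `simulate` are all illustrative assumptions. Where an equation would yield only concentrations over time, the algorithm also records which interaction occurred at each step.

```python
import random

# Hypothetical toy system (an assumption for illustration): A + B <-> C.
# Each reaction is (name, propensity function, change applied to the state).
REACTIONS = [
    ("bind", lambda s, k: k["bind"] * s["A"] * s["B"], {"A": -1, "B": -1, "C": +1}),
    ("unbind", lambda s, k: k["unbind"] * s["C"], {"A": +1, "B": +1, "C": -1}),
]


def simulate(state, rates, t_end, seed=0):
    """Run Gillespie's direct method and return the ordered list of events.

    Each event is (time, reaction name, state after the reaction), so the
    trace says not only how the counts changed but also what caused the change.
    """
    rng = random.Random(seed)
    state = dict(state)  # work on a copy so the caller's dict is untouched
    t = 0.0
    trace = []
    while True:
        propensities = [prop(state, rates) for _, prop, _ in REACTIONS]
        total = sum(propensities)
        if total == 0:
            break  # no reaction can fire any more
        t += rng.expovariate(total)  # waiting time until the next event
        if t > t_end:
            break
        # Pick the reaction that fires, proportionally to its propensity.
        name, _, change = rng.choices(REACTIONS, weights=propensities)[0]
        for species, delta in change.items():
            state[species] += delta
        trace.append((t, name, dict(state)))
    return trace
```

Calling, for example, `simulate({"A": 20, "B": 15, "C": 0}, {"bind": 0.01, "unbind": 0.1}, t_end=5.0)` and iterating over the result gives one “frame” per interaction, each labeled with the reaction that produced it. The causal and temporal ordering is explicit in the trace instead of being implicit in a rate equation.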

Unraveling the basic mechanisms adopted by living organisms for manipulating information goes to the heart of computer science: computability. Life has undergone billions of years of testing and optimization; we can learn from it new computational paradigms that will enhance our field. The same arguments apply to hardware architectures as well. Starting from the basics, we can use these new computational paradigms to strengthen resource management and hence operating systems, to develop primitives to instruct highly parallel systems and hence (concurrent) programming languages, and to develop software environments that ensure higher quality and better properties than current software applications.

Algorithmic systems biology can contribute to the future of both the life sciences and the natural sciences by interconnecting models and experiments. New conceptual and computational tools, integrated in a user-friendly environment, can be employed by life scientists to predict the behavior of multilevel and multiscale biological systems, as well as of other kinds of systems, in a modular, composable, scalable, and executable manner.

Algorithmic systems biology can also contribute to the future of computer science by developing a new generation of operating systems and programming languages. Supported by biologically inspired tools, these will enable advanced simulation-based research within a quantitative framework that connects in-silico replicas and actual systems.

Acknowledgments

The author thanks the CoSBi team for the numerous inspiring discussions.

Figures

Figure 1. Algorithms enable a transformation from “pictures” to “films.” The current practice in biological systems entails modeling the variation of measures through equations, with no causal explanation given (upper part of the figure). But algorithms describe the steps from one picture to the next in a causal continuum of the actions that make the measures change, thus providing a dynamic view of the system in question.

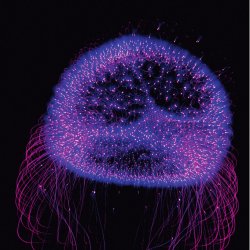

Figure 2. The biological systems observed through the window showing the life sciences (green rectangle) can be closely and mechanistically modeled through the use of algorithms (written on the glass of the window) that add causal, spatial, and temporal dimensions to classical biological descriptions. Moreover, algorithms can concisely represent the large quantities of data produced by high-throughput experiments (the river of numbers originating from biological elements within the window). Equations, currently considered the stars of modeling, are more abstract and hence more distant from living matter. The goal of algorithmic systems biology is to “reach for the moon” through a complete mechanistic model of living systems. (The lighted hemisphere in the picture represents a cell under a digitalization process.)
