As Arun Murthy’s 2019 post “Hadoop is dead, long live Hadoop“ put it, barring any specialized or legacy requirements, racking and stacking servers either in an on-premise or commercial data center for a Hadoop cluster was no longer a preferred pattern given advancements in public cloud computing. As the post said, done and dusted as typically deployed in the prior decade.

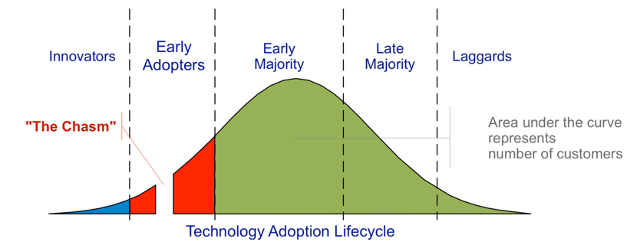

That said, I’ve seen some instances where people have written the Hadoop-era off entirely as if it “didn’t work all.” That is several steps too far. Hadoop environments could be complex —especially at scale—and did take non-trivial effort to manage. To paraphrase Crossing The Chasm, Hadoop in that form might have had some trouble finding a home at the top of the technology adoption lifecycle curve—or at least staying there—due in part to this complexity, but it was still an incredibly powerful platform in the hands of Innovators and Early Adopters. Hadoop was an advancement in distributed computing that owed much to the Google GFS, MapReduce, and BigTable papers, but it was also a response to a broader technology community need. Specifically, the frustrations with available data management and analytic patterns of the time, and those patterns were overwhelmingly proprietary.

Source: https://en.wikipedia.org/wiki/Crossing_the_Chasm

While computing patterns have moved significantly from on-premise to cloud, it is worth reviewing the fact that many of the frameworks from the Hadoop ecosystem are still in use.

Lucene

Apache Lucene is a search engine created in 1999, still very much in use within other higher-level search frameworks such as Elastic and Solr.

Search was a use-case that forced distributed computing—both in storage and in processing—and arguably was the first use-case of the Hadoop ecosystem. Nutch is a framework for distributed web crawling that began as a sub-project of Lucene. Hadoop started as a sub-project of Nutch. Although Lucene was created years before Hadoop existed, in a way it was the genesis of the ecosystem.

Hive

Apache Hive is a distributed SQL-based query engine with metadata repository that goes back to the earliest days of Hadoop. Programmers might be variously frustrated with commercial versions of relational database management systems, but SQL itself is a lingua franca for data analysts, and Hive provided the first SQL support in the ecosystem. The earliest Apache Hive Jira ticket goes back to 2008, but was built upon preceding efforts at Facebook.

Hive Metastore

While the Hive query engine attracted many competitors, the Hive Metastore was utilized in a number of distributed SQL engines, such as Impala, Drill, Presto, BigSQL, Shark, and SparkSQL, among others. The Hive Metastore is also utilized directly or as an integration option for cloud data platforms such as Databricks and Snowflake.

Hive Clones

Speaking of Hive clones, the AWS query service Athena is actually the Presto framework under the hood. As the saying goes, imitation is the sincerest form of flattery.

Serialization Frameworks

The Hadoop ecosystem saw the development of multiple serialization frameworks for both row and columnar formats.

Row oriented

Avro is a row-oriented serialization framework that dates to 2009 within Hadoop, but its usage spread.

Columnar

Parquet is a columnar serialization framework that dates to 2012/2013 from Cloudera’s efforts on the Impala query engine. ORC is another columnar framework created in 2013 by Hortonworks utilized by Hive. Columnar formats were a huge step forward for data management and analytics on large datasets in the Hadoop ecosystem as previously this feature was only available from proprietary column-oriented databases like Vertica. Both Parquet and ORC continue to be widely utilized.

Compression

The Snappy compression library was developed by Google and released open source in 2011. The Hadoop ecosystem provided a great showcase of Snappy’s capabilities across a number of projects, aided by a distribution-friendly license. Snappy continues to be widely utilized.

Streaming

Apache Kafka emerged in 2011 as a distributed streaming platform from efforts at LinkedIn. While not strictly speaking a “Hadoop” project, Kafka was frequently utilized in solutions that utilized Hadoop for streaming data ingress or egress from clusters, among other use-cases.

A historical implementation detail worth noting is that until recently, Kafka utilized the Apache ZooKeeper consensus service. ZooKeeper is yet another project which originally started as a Hadoop sub-project and then eventually split out as its own top-level project.

Spark

Speaking of responses, Spark was a response to MapReduce’s early successes as the 2010 paper “Spark: Cluster Computing with Working Sets” made clear (https://people.csail.mit.edu/matei/papers/2010/hotcloud_spark.pdf), particularly where iterative algorithms were needed. Spark eventually was utilized in Hadoop solutions via the Hadoop resource manager YARN, in both batch and streaming cases. A decade after its creation, Spark moved from being a response to being the dominant distributed processing framework, which is quite an achievement. But that achievement didn’t happen in a vacuum.

BigTable – What Goes Around Comes Around

Google BigTable, a distributed key-value storage framework, inspired a host of “NoSQL” clones, one of them being Apache HBase (~2007). In a circular twist, BigTable now supports HBase-compatible APIs.

Finally

To echo Arun’s sentiments at the end of his post, as long as there is data, there will be “Hadoop.” May that spirit of innovation continue, in whatever form it takes.

References

- “Hadoop is dead, long live Hadoop”

- Crossing The Chasm, Geoffrey Moore

- Apache Hadoop

- Apache Spark (2010)

- Google

- GFS paper (2003)

- MapReduce paper (2004)

- BigTable paper (2006)

- BigTable

- Related BLOG@CACM Posts

- “The continual re-creation of the KeyValue Datastore”

- “Why are there so many programming languages?”

Doug Meil is a software architect in healthcare data management and analytics. He also founded the Cleveland Big Data Meetup in 2010. More of his BLOG@CACM posts can be found at https://www.linkedin.com/pulse/publications-doug-meil

Join the Discussion (0)

Become a Member or Sign In to Post a Comment