As I write this article, an earlier installment from this blog, about nastiness in computer science, has just been republished in the Communications of the ACM (the actual journal) and has triggered a plethora of new reader comments, showing how sensitive the community is to the state of our publication culture. Indeed most of my recent posts have been about publication. This was supposed to be a software engineering blog, and I do intend to return to software engineering; after all, software development is what I do. Before that, however, there is more to say about publication; in particular, how much the very concept has changed, half of its traditional meaning having disappeared in hardly more than a decade. Or to put it differently (if you will accept the metaphor, explained below), how it has lost its duality: no longer particle, just wave.

Process and product

Some words ending with ation (atio in Latin) describe a change of state: restoration, dilatation. Others describe the state itself, or one of its artifacts: domination, fascination. And yet others play both roles: decoration can denote either the process of embellishing (she works in interior decoration), or an element of the resulting embellishment (Christmas tree decoration).

Since at least Gutenberg, publication has belonged to that last category: both process and artifact. A publication is an artifact, such as an article or a book accessible to a community of readers. We are referring to that view when we say “she has a long publication list” or “Communications of the ACM is a prestigious publication.” But the word also denotes a process, built from the verb “publish” the same way “restoration” is built from “restore” and “insemination” from “inseminate”: the publication of her latest book took six months.

The thesis of this article is that the second view of publication will soon be gone, and its purpose is to discuss the consequences for scientists.

Let me restrict the scope: I am only discussing scientific publication, and more specifically the scientific article. The situation for books is less clear; for all the attraction of the Kindle and other tablets, the traditional paper book still has many advantages and it would be risky to talk about its demise. For the standard scholarly article, however, electronic media and the web are quickly destroying the traditional setup.

That was then . . .

Let us step back a bit to what publication, the process, was a couple of decades ago. When you wrote something, you could send it by post to your friends (Edsger Dijkstra famously turned this idea into his modus operandi, regularly xeroxing his “EWD” memos [1] to a few dozen people), but if you wanted to make it known to the world you had to go through the intermediation of a PUBLISHER: the mere word was enough to overwhelm you with awe. That publisher, either a non-profit organization or a commercial house, was in charge not only of selecting papers for a conference or journal but of bringing the accepted ones to light. Once the paper was accepted, there began a long and tedious process of preparing the text to the publisher’s specifications and correcting successive versions of “galley proofs.” That step could be painful for papers having to do with programming, since in the early days typesetters had no idea how to lay out code. A few months or a couple of years later, you received a package in the mail and proudly opened the journal or proceedings at the page where YOUR article appeared. You would also, usually for a fee, receive fifty or so separately printed (tirés à part) reprints of just your article, typeset the same way but more modestly bound. Ah, the discreet charm of 20th-century publication!

. . . and this is now

Cut to today. Publishers stopped doing the typesetting for you long ago. They impose the format, obligingly give you LaTeX, Word or FrameMaker templates, and you take care of everything. We have moved to WYSIWYG publishing: the version you write is the version you submit through a site such as EasyChair or CyberChair and the version that, after correction, will be published. The middlemen have been cut out.

We moved to this system because technology made it possible, and also because of the irresistible lure, for publishers, of saving money (even if, in the long term, they may have removed some of the very reasons for their existence). The consequences of this change go, however, far beyond money.

Integrating change

To understand how fundamentally the stage has changed, let us go back for a moment to the old system. It has many advantages, but also limitations. Some are obvious, such as the amount of work required, involving several people, and the delay from paper completion to paper publication. But in my view the most significant drawback has to do with managing change. If after publication you find a mistake, you must convince the journal to include an erratum: a new mini-article, subject to the same process. That requirement is reasonable enough but the scheme does not support a significant mode of scientific writing: working repeatedly on a single article and progressively refining it. This is not the “LPU” (Least Publishable Unit) style of publishing, but a process of studying an important idea or research project and aiming towards the ideal paper about it by successive approximation. If six months after the original publication of an article you have learned more about the topic and how to present it, the publication strategy is not obvious: resubmit it and risk being accused of self-plagiarism; avoid repetition of basic elements, making the article harder to read independently; artificially increase differences. This conundrum is one of the legitimate sources of the LPU phenomenon: faced with the choice between freezing material and repeating it, people end up publishing it bit by bit.

Now back again to today. If you are a researcher, you want the world to know about your ideas as soon as they are in a clean form. Today you can do this easily: no need to photocopy page after page and lick postage stamps on envelopes the way Dijkstra did; just generate a PDF and put it on your Web page or (to help establish a record if a question of precedence later comes up) on ArXiv. Just to make sure no one misses the information, tweet about it and announce it on your Facebook and LinkedIn pages. Some authors do this once the paper has been accepted, but many start earlier, at the time of submission or even before. I should say here that not all disciplines allow such author behavior; in biology and medicine in particular publishers appear to limit authors’ rights to distribute their own texts. Computer scientists would not tolerate such restrictions, and publishers, whether nonprofit or commercial, largely leave us alone when we make our work available on the Web.

But we are talking about far more than copyright and permissions (in this article I am in any case staying out of these emotionally and politically charged issues, open access and the like, and concentrating on the effect of technology changes on the process of publishing and the publication culture). The very notion of publication has changed. The process part is gone; only the result remains, and that result can be an evolving product, not a frozen artifact.

Particle, or wave?

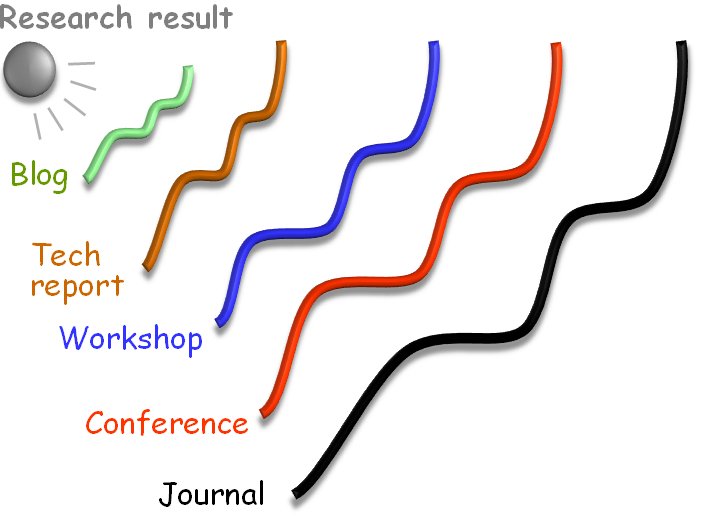

Another way to describe the difference is that a traditional publication, for example an article published in a journal, is like a particle: an identifiable material object. With the ease of modification, a publication becomes more like a wave, which allows an initial presentation to propagate to successively wider groups of readers:

Maybe you start with a blog entry, then you register the first version of the work as a technical report in your institution or on ArXiv, then you submit it to a workshop, then to a conference, then a version of record in a journal.

In the traditional world of publication each of these would have to be made sufficiently different to avoid the accusation of plagiarism. (There is some tolerance, for example a technical report is usually not considered prior publication, and it is common to submit an extended version of a conference paper to a journal — but the journal will require that you include enough new material, typically “at least 30%.”)

For people who like to polish their work repeatedly, that traditional model is increasingly hard to accept. If you find an error, or a better way to express something, or a complementary result, you just itch to make the change here and now. And you can. Not on a publisher’s site, but on your own, or on ArXiv. After all, one of the epochal contributions of computer technology, not heralded loudly enough, is, as I argued in another blog article [2], the ease with which we can change, extend and refine our creations, developing like a Beethoven and releasing like a Mozart.

The “publication as product” becomes an evolving product, available at every step as a snapshot of the current state. This does not mean that you can cover up your mistakes with impunity: archival sites use “diff” techniques to maintain a dated record of successive versions, so that in case of doubt, or of a dispute over precedence, one can assess beyond doubt who released what statement when. But you can make sure that at any time the current version is the one you like best. Often, it is better than the official version on the conference or journal site, which remains frozen forever as it was on the day of its release.
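To make the idea of a dated record concrete, here is a minimal sketch in Python. It is an illustration only: the `VersionLog` class, its method names and the sample dates are hypothetical, and it relies on the standard `difflib` module rather than on whatever mechanism a real archival site employs.

```python
import difflib
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class VersionLog:
    """Hypothetical dated record of a paper's successive versions."""
    versions: list = field(default_factory=list)  # (timestamp, text) pairs

    def release(self, text, when=None):
        """Append a new version with its release date; never overwrite."""
        when = when or datetime.now(timezone.utc)
        self.versions.append((when, text))

    def current(self):
        """The version readers see: always the latest one."""
        return self.versions[-1][1]

    def changes(self, i, j):
        """Unified diff, as a list of lines, between versions i and j."""
        (t_old, old), (t_new, new) = self.versions[i], self.versions[j]
        return list(difflib.unified_diff(
            old.splitlines(), new.splitlines(),
            fromfile=f"v{i} ({t_old.date()})",
            tofile=f"v{j} ({t_new.date()})",
            lineterm=""))


log = VersionLog()
log.release("Theorem 1 holds for all n.",
            datetime(2013, 1, 10, tzinfo=timezone.utc))
log.release("Theorem 1 holds for all n > 0.",
            datetime(2013, 6, 10, tzinfo=timezone.utc))
print("\n".join(log.changes(0, 1)))
```

The author always shows `log.current()`, while anyone can ask for `log.changes(0, 1)` to see exactly what was corrected and when.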

What then remains of “publication as process”? Not much; in the end, a mere drag-and-drop from the work folder to the publication folder.

Well, there is an aspect I have not mentioned yet.

The sanction

Apart from its material side, now gone or soon to be gone, the traditional publication process has another role: what a recent article in this blog [3] called sanction. You want to publish your latest scientific article in Communications of the ACM not just because it will end up being printed and mailed, but because acceptance is a mark of recognition by experts. There is a whole gradation of prestige, well known to researchers in every particular field: conferences are better than workshops, some journals are as good as conferences or higher, some conferences are far more prestigious than others, and so on.

That sanction, that need for an independent stamp of approval, will remain (and, for academics, young academics in particular, is of ever growing importance). But now it can be completely separated from the publication process and largely separated (in computer science, where conferences are so important today) from the conference process.

Here then is what I think scientific publication will become. The researcher (the author) will largely be in control of his or her own text as it goes through the successive waves described above. A certified record will be available to verify that at time t the document d had the content c. Then at specific stages the author will submit the paper. Submit in the sense of appraisal and, if the appraisal is successful, certification. The submission may be to a conference: you submit your paper for presentation at this year’s ICSE, POPL or SIGGRAPH. (At the recent Dagstuhl publication culture workshop, Nicolas Holzschuch mentioned that some graphics conferences accept for presentation work that has already been published; isn’t this scheme more reasonable than the currently dominant practice of conference-as-publication?) You may also submit your work, once it reaches full maturity, to a journal. Acceptance does not have to mean that any trees get cut, that any ink gets spread, or even that any bits get moved: it simply enables the journal’s site to point to the article, and your site to add this mark of recognition.
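A certified record of this kind could be as simple as a dated digest. The following Python sketch is one possible reading, not a description of any existing service: the names `certify` and `verify` and the record layout are assumptions, and a real registry would also need a trusted timestamp and a signature, both omitted here.

```python
import hashlib
from datetime import datetime, timezone


def certify(doc_id, content, when):
    """Return a record stating that at time `when` document `doc_id` had `content`."""
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    return {"doc": doc_id, "time": when.isoformat(), "sha256": digest}


def verify(record, content):
    """Check a claimed content against the digest stored in the record."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest() == record["sha256"]


t = datetime(2013, 1, 10, tzinfo=timezone.utc)
record = certify("d", "content c", t)
print(verify(record, "content c"))           # True
print(verify(record, "content c, revised"))  # False
```

The record stores only a digest, so the author keeps control of the text itself while a conference or journal can still check which version it appraised.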

There may also be other forms of recognition, social-network or Trip Advisor style: the community gets to pitch in, comment and assess. Don’t laugh too soon. Sure, scientific publication has higher standards than Wikipedia, and will not let the wisdom of the crowds replace the judgment of experts. But sometimes you want to publish for communication, not sanction, and especially if you have the privilege of no longer being trapped in the publish-or-perish race you simply want to make your research known, and you have little patience for navigating the meanders of conventional publication, genuflecting to the publications of PC members, and following the idiosyncratic conventional structure of the chosen conference community. Then you just publish and let the world decide.

In most cases, of course, we do need the sanction, but there is no absolute reason it should be tied to the traditional structures of journal publication and conference participation. There will be resistance, if only because of the economic interests involved; some of what we know today will remain, albeit with a different focus: conferences, as a place where the best work of the moment is presented (independently of its publication); printed books, as noted; and printed journals that bring real added value in the form of high-quality printing, layout and copy editing (and might still insist that you put on their site a copy of your paper rather than, or in addition to, a reference to your working version).

The trend, however, is irresistible. Publication is no longer a process, it is a product, increasingly under the control of the authors. As a product it is no longer a defined particle but a wave, progressively improving as it reaches successive classes of readership, undergoes successive steps of refinement and receives, informally from the community and officially from more or less prestigious sources, successive stamps of approval.

References

[1] Dijkstra archive at the University of Texas at Austin, here.

[2] Bertrand Meyer: Computer Technology: Making Mozzies out of Betties, blog article, 2 August 2009, available here.

[3] Bertrand Meyer: Conferences: Publication, Communication, Sanction, article on this blog, 10 January 2013, available here.
