Academic rankings have a huge presence in academia. College rankings by U.S. News and World Report (USNWR) help undergraduate students find the "perfect school." Graduate-program rankings by USNWR are often the most significant decision-making factor for prospective graduate students. The Academic Ranking of World Universities (known also as the "Shanghai Ranking") is one that attracts much attention from university presidents and governing boards. New academic rankings, of many different forms and flavors, have been popping up regularly over the last few years.

Yet there is also deep dissatisfaction in the academic community with the methodology of such rankings and with the outsize role that commercial entities play in the ranking business. The recent biennial meeting of the Computing Research Association (CRA) dedicated a session to this topic (see http://cra.org/events/snowbird-2016/#agenda), asserting that "Many members of our community currently feel the need for an authoritative ranking of CS departments in North America" and asking "Should CRA be involved in creating a ranking?" The rationale for that idea is that the computing-research community will be better served by helping to create some "sensible rankings."

The methodology currently used by USNWR to rank computer-science graduate programs is highly questionable. This ranking is based solely on "reputational standing," in which department chairs and graduate directors are asked to rate each graduate program on a 1–5 scale. Having participated in such reputational surveys for many years, I can testify that I spent about a second or two coming up with a score for each of the more than 100 ranked programs. Obviously, very little contemplation went into my scores. In fact, my answers have clearly been influenced by prior-year rankings. It is a well-known "secret" that rankings of graduate programs at universities of outstanding reputation are buoyed by the halo effect of their parent institutions’ reputations. Such reputational rankings have no academic value whatsoever, I believe, though they clearly play a major role in academic decision making.

But the problem is deeper than the current flawed methodology of USNWR’s ranking of graduate programs. Academic rankings, in general, provide highly misleading ways to inform academic decision making by individuals. An academic program or unit is a highly complex entity with numerous attributes. An academic decision is typically a multi-objective optimization problem, in which the objective function is highly personal. A unidimensional ranking provides a seductively easy objective function to optimize. Yet such decision making ignores the complex interplay between individual preferences and programs’ unique patterns of strengths and weaknesses. Decision making by ranking is decision making by lazy minds, I believe.

Furthermore, academic rankings have adverse effects on academia. Such rankings are generally computed by devising a mapping from the complex space of program attributes to a unidimensional space. Clearly, many such mappings exist. Each ranking is based on a specific "methodology," that is, a specific ranking mapping. The choice of mapping is completely arbitrary and reflects some "judgement" by the ranking organization. But the academic value of such a judgement is dubious. Furthermore, commercial ranking organizations tweak their mappings regularly in order to create movement in the rankings. After all, if you are in the business of selling ranking information, then you need movement in the rankings for the business to be viable. Using such rankings for academic decision making is letting third-party business interests influence our academic values.
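To make the arbitrariness of the mapping concrete, here is a minimal Python sketch. It assumes the mapping is a simple weighted sum, which is only one of many possible choices and is not the methodology of any actual ranking. The program names, attribute names, scores, and weights are all made up for illustration.

```python
from typing import Dict, List

# Hypothetical attribute scores on a 0-1 scale. These are invented
# numbers for illustration, not data about any real program.
PROGRAMS: Dict[str, Dict[str, float]] = {
    "Program A": {"research_output": 0.9, "student_support": 0.4, "placement": 0.6},
    "Program B": {"research_output": 0.5, "student_support": 0.9, "placement": 0.7},
    "Program C": {"research_output": 0.7, "student_support": 0.6, "placement": 0.9},
}


def rank(programs: Dict[str, Dict[str, float]],
         weights: Dict[str, float]) -> List[str]:
    """Collapse each program's attributes to one number (a weighted sum,
    assumed here for simplicity) and sort from highest to lowest."""
    def score(attrs: Dict[str, float]) -> float:
        return sum(weights[name] * attrs[name] for name in weights)

    return sorted(programs, key=lambda name: score(programs[name]), reverse=True)


# Two equally defensible "methodologies": same data, different weights.
research_first = {"research_output": 0.8, "student_support": 0.1, "placement": 0.1}
support_first = {"research_output": 0.1, "student_support": 0.8, "placement": 0.1}

print(rank(PROGRAMS, research_first))  # ['Program A', 'Program C', 'Program B']
print(rank(PROGRAMS, support_first))   # ['Program B', 'Program C', 'Program A']
```

With identical underlying data, the two weightings produce opposite orderings. Nothing in the data says which set of weights is the right one; that choice is the "judgement" embedded in the methodology.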

Thus, to the question "Should CRA get involved in creating a ranking?" my answer is "absolutely not." I do not believe that "sensible rankings" can be defined. The U.S. National Research Council’s attempt in 2010 to come up with an evidence-based ranking mapping is widely considered a notorious failure. Furthermore, I believe the CRA should pass a resolution encouraging its members to stop participating in the USNWR surveys and discouraging students from using these rankings for their own decision making. Instead, CRA should help well-informed academic decision making by creating a data portal providing public access to relevant information about graduate programs. Such information can be gathered from an extended version of the highly respected Taulbee Survey that CRA has been running for over 40 years, as well as from various open sources. CRA could also provide an API to enable users to construct their own ranking based on the data provided.

Academic rankings are harmful, I believe. We have a responsibility to better inform the public, by ceasing to "play the ranking games" and by providing the public with relevant information. The only way to do that is by asserting the collective voice of the computing-research community.


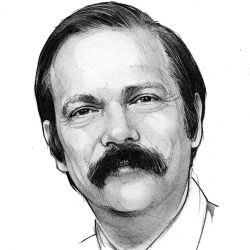

Moshe Y. Vardi, EDITOR-IN-CHIEF
