The idea that the human brain can be thought of as a fancy computer has existed for as long as there have been computers. In a simple way, the analogy makes sense: A brain takes in information and manipulates it to produce desirable output in the form of physical actions or, more abstractly, plans and ideas. Within the brain, information moves in the form of electrical signals zipping through and among neurons, the nerve cells that form the elementary signal-processing units of biological systems. But the likeness between brains and electronic computers only goes so far. Neurons, as well as the connections between them, work in unpredictable and probabilistic ways; they are not simple gates, switches, and wires. For researchers working in computational neuroscience, the looming puzzle is to understand how a brain built from fundamentally unreliable components can so reliably perform tasks that digital computers have barely begun to crack.

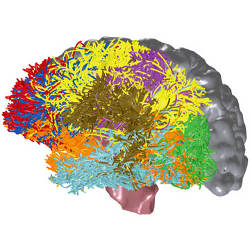

The brain of a human adult contains approximately 100 billion neurons, each of which has an average of several thousand connections to other neurons. As neuroscientists have long realized, it’s the complex connectivity as much as the sheer number of neurons that makes understanding brain function such a daunting task. To make headway, researchers study individual neurons and small collections of them with the aim of building an explanation for neural activity from the ground up. But that task is also far from simple.

Typically, a neuron constantly receives signals from thousands of other neurons. Whether a neuron fires and thereby passes along a signal to the thousands of connecting neurons depends on the nature of the input it receives. Neural firing and signal transmission are chemical processes and the fundamental reason they are not completely reliable, says Hsi-Ping Wang, a researcher at the Salk Institute for Biological Studies, is that “biology is messy. Molecules have to bind and unbind, and chemical and signal elements have to mix and diffuse.”

Nature bypasses this messiness in part by resorting to statistical methods. For example, Wang and his colleagues combined recordings of in vivo neural activity with a computer simulation of neuron function in the visual cortex of a cat to show that neurons fired most reliably when they were stimulated by the almost simultaneous arrival of approximately 30 input signals. With fewer than 20 signals arriving at once, the neuron was significantly less likely to fire, but the simultaneous arrival of more than 40 signals brought no gain in the reliability of the output signal. It’s not inconceivable that nature could make more reliable neurons, says Wang, but “there is a cost to making things perfectly reliable: the brain can’t afford to expend resources for that additional perfection when slightly probabilistic results are good enough.”
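The plateau Wang describes can be illustrated with a toy calculation. The sketch below is not the model used in the study: it assumes each synchronous input is transmitted independently with probability `p_transmit`, and that the neuron fires when at least `threshold` inputs get through. Both values, and the function name `firing_probability`, are hypothetical choices made for illustration.

```python
from math import comb

def firing_probability(n_inputs, p_transmit=0.5, threshold=10):
    """Probability that at least `threshold` of `n_inputs` synchronous
    inputs are transmitted, each independently with `p_transmit`.
    All parameter values are illustrative assumptions, not measured data."""
    return sum(
        comb(n_inputs, k) * p_transmit**k * (1 - p_transmit)**(n_inputs - k)
        for k in range(threshold, n_inputs + 1)
    )

# Compare the three input counts mentioned in the text.
for n in (20, 30, 40):
    print(n, round(firing_probability(n), 3))
```

Under these assumptions, going from 20 to 30 inputs raises the firing probability far more than going from 30 to 40 does, which is the same qualitative saturation the study reports.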

Neuron firing is not the brain’s only probabilistic element. Signals pass from one neuron to another through biomolecular junctions called synapses. In a phenomenon known as plasticity, the likelihood that a synapse will transmit a spike to the next neuron can vary depending on the rate and timing of the signals it receives. Therefore, synapses are not mere passive transmitters but are information processors in their own right, sending on a transformed version of the received signals.
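One way to picture a synapse whose reliability depends on the rate and timing of its input is a simple depletion rule. The sketch below is an assumption-laden illustration, not a model from the researchers quoted here: each spike uses up a fraction of a synaptic resource, the resource recovers exponentially between spikes, and the transmission probability is proportional to what remains. The names `base_p`, `use_fraction`, and `tau_rec` and their values are hypothetical.

```python
import math

def transmission_probs(spike_times, base_p=0.5, use_fraction=0.4, tau_rec=0.2):
    """Per-spike transmission probability for a toy depressing synapse.

    spike_times: increasing spike arrival times in seconds.
    base_p, use_fraction, tau_rec: illustrative assumptions, not fitted values.
    """
    resources = 1.0   # fraction of synaptic resource currently available
    last_t = None
    probs = []
    for t in spike_times:
        if last_t is not None:
            # Resource relaxes back toward 1 with time constant tau_rec.
            dt = t - last_t
            resources = 1.0 - (1.0 - resources) * math.exp(-dt / tau_rec)
        probs.append(base_p * resources)
        # Each arriving spike consumes part of the available resource.
        resources *= 1.0 - use_fraction
        last_t = t
    return probs

fast = transmission_probs([0.00, 0.01, 0.02, 0.03])  # 100 Hz burst
slow = transmission_probs([0.0, 1.0, 2.0, 3.0])      # 1 Hz train
print(fast[-1], slow[-1])
```

With these settings the last spike of the fast burst is transmitted much less reliably than the last spike of the slow train, so the output depends on input timing rather than simply copying the input.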

These complications cast doubt on the usefulness of thinking of the brain as similar to a massively parallel supercomputer, with many discrete processors exchanging information back and forth. Information processing by the brain crucially depends on loops and feedback that operate down to the level of individual neurons, says Larry Abbott, co-director of the Center for Theoretical Neuroscience at Columbia University, and that aspect makes the nature of the processing exceedingly difficult to analyze. “The brain is stochastic and dynamical,” Abbott says, “but we can control it to an incredible degree. The mystery is how.”

As an illustrative example, Jim Bednar, a lecturer at the Institute for Adaptive and Neural Computation in the School of Informatics at the University of Edinburgh, considers what happens when a person catches a flying ball. It’s tempting, but wrong, to suppose the visual area of the brain figures out the ball’s path, then issues precise instructions to the muscles of the arm and hand so that they move to just the right place to catch the ball. In reality, neural processes are messy and stochastic all the way from the visual cortex down to our fingertips, with multiple and complex signals going back and forth as we try to make the hand and ball intersect. In this system, Bednar says, “the smartness is everywhere at once.”

It’s also important to realize the operation of the brain cannot be overly sensitive to the behavior of individual neurons, Bednar adds, if for no other reason than that “neurons die all the time.” What matters, he says, must be the collective behavior of thousands of neurons or more, and on those scales the vagaries of individual neural operation may be insignificant. He regards the brain as having a type of computational workspace that simultaneously juggles many possible solutions to a given problem, and relies on numerous feedback loops to strengthen the appropriate solution and diminish unwanted ones, although how that process works, he admits, no one yet knows.
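The claim that collective behavior can wash out individual unreliability can be sketched with simple averaging. The example below is an illustration under loud assumptions, not Bednar's model: every hypothetical neuron reports the stimulus value plus independent Gaussian noise, the population readout is the plain mean, and a random `lost_fraction` of neurons is removed to mimic cell death.

```python
import random

def population_estimate(stimulus, n_neurons, noise_sd=5.0,
                        lost_fraction=0.0, seed=0):
    """Mean response of a noisy population after randomly losing neurons.
    The noise model and all parameter values are illustrative assumptions."""
    rng = random.Random(seed)
    # Each neuron gives an unreliable reading of the same stimulus.
    responses = [stimulus + rng.gauss(0.0, noise_sd) for _ in range(n_neurons)]
    # Drop each neuron independently with probability lost_fraction.
    survivors = [r for r in responses if rng.random() >= lost_fraction]
    return sum(survivors) / len(survivors)

print(population_estimate(20.0, 5000))
print(population_estimate(20.0, 5000, lost_fraction=0.1))
```

Although any single reading can be off by several units, the average over thousands of neurons stays close to the true value even after a tenth of the population is lost.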

Empirical Knowledge

Alexandre Pouget, associate professor of brain and cognitive sciences and bioengineering at the University of Rochester, uses empirical knowledge of neuron function to build theoretical and computational models of the brain. Noting that neural circuitry exhibits common features through much of the cortex, he argues that the brain relies on one or a handful of general computational principles to process information. In particular, Pouget believes the brain represents information in the form of probability distributions and employs methods of statistical sampling and inference to generate solutions from a wide range of constantly changing sensory data. If this is true, the brain does not perform exact, deterministic calculations as a digital computer does, but reliably gets “good enough answers in a short time,” says Pouget.
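A standard textbook illustration of computing with probability distributions is the combination of two noisy cues. The sketch below is not Pouget's model; it assumes each cue, say a visual and an auditory estimate of an object's position, is a Gaussian with a known mean and variance, and it combines them by weighting each cue by its precision (the inverse of its variance). The function name and the example numbers are hypothetical.

```python
def combine_gaussian_cues(mu_a, var_a, mu_b, var_b):
    """Combine two independent Gaussian estimates of the same quantity.
    Returns the mean and variance of the combined (posterior) estimate,
    assuming a flat prior. Inputs are illustrative, not experimental data."""
    precision_a = 1.0 / var_a
    precision_b = 1.0 / var_b
    var = 1.0 / (precision_a + precision_b)
    # The more reliable cue (smaller variance) pulls the mean harder.
    mu = var * (precision_a * mu_a + precision_b * mu_b)
    return mu, var

# A sharp cue at 10 (variance 1) and a vaguer cue at 14 (variance 4).
mu, var = combine_gaussian_cues(10.0, 1.0, 14.0, 4.0)
print(mu, var)
```

The combined estimate lands closer to the more reliable cue and has a smaller variance than either cue alone, which is one sense in which an inexact, probabilistic computation can still give a good answer quickly.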

To explore these computational issues, Pouget adopts a cautious attitude to the question of how important it is to know in precise detail what individual neurons do. For example, the exact timing of output spikes may vary from one neuron to another in a given situation, but the spike rate may be far more consistent—and may be the property that a computational method depends on. In their modeling, Pouget says he and his colleagues “simplify neurons as much as possible. We add features when we understand their computational role; we don’t add details just for the sake of adding them.”
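The difference between spike timing and spike rate can be seen in a small simulation. The sketch below assumes, purely for illustration, that each neuron fires as a Poisson process with the same underlying rate; the 50 Hz rate, the 10-second window, and the function name are hypothetical choices, not values from Pouget's work.

```python
import random

def poisson_spike_train(rate_hz, duration_s, rng):
    """Spike times of a homogeneous Poisson process.
    Inter-spike intervals are drawn from an exponential distribution."""
    t = 0.0
    spikes = []
    while True:
        t += rng.expovariate(rate_hz)
        if t >= duration_s:
            return spikes
        spikes.append(t)

rng = random.Random(1)  # fixed seed so the run is repeatable
trains = [poisson_spike_train(50.0, 10.0, rng) for _ in range(3)]
for train in trains:
    print(f"first spike at {train[0]:.4f} s, "
          f"mean rate {len(train) / 10.0:.1f} Hz")
```

The individual spike times differ from one simulated neuron to the next, while the measured rates all sit near the common underlying rate, which is why a model that depends only on rate can ignore much of the timing detail.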

The distinction between understanding individual neurons and understanding the computational capacity of large systems of neurons is responsible for “a huge schism in the modeling community,” Bednar says. That schism came into the open last year when Dharmendra Modha, manager of cognitive computing at IBM Almaden Research Center, and colleagues reported a simulation on an IBM Blue Gene supercomputer of a cortex with a billion neurons and 10 trillion synaptic connections, which the authors say corresponds in scale to a cat’s brain. This claim drew a public rebuke from Henry Markram, director of the Blue Brain Project at the École Polytechnique Fédérale de Lausanne, which is using supercomputers to simulate neurons in true biological detail. Markram charged that the neurons in Modha’s simulation were so oversimplified as to have little value in helping to understand a real neural system. In contrast, the Blue Brain Project exhausts the capacity of a Blue Gene supercomputer in modeling just some tens of thousands of neurons.

“Both those approaches are important,” says Abbott, although he thinks the task of understanding brain computation is better tackled by starting with large systems of simplified neurons. But the big-picture strategy raises another question: In simplifying neurons to construct simulations of large brains, how much can you leave out and still obtain meaningful results? Large-scale simulations such as the one by Modha and his colleagues don’t, thus far, do anything close to modeling specific brain functions. If such projects “could model realistic sleep, that would be a huge achievement,” says Pouget.

With today’s computing power, computational neuroscientists must choose between modeling large systems rather crudely or small systems more realistically. It’s not yet clear, Abbott says, where on that spectrum lies the sweet spot that would best reveal how the brain performs its basic functions.

Further Reading

Abbott, L.F. Theoretical neuroscience rising, Neuron 60, 3, Nov. 6, 2008.

Ananthanarayanan, R., Esser, S.K., Simon, H.D., and Modha, D.S. The cat is out of the bag: cortical simulations with 10⁹ neurons, 10¹³ synapses, Proc. Conf. on High Performance Computing, Networking, Storage, and Analysis, Portland, OR, Nov. 14–19, 2009.

Miikkulainen, R., Bednar, J.A., Choe, Y., and Sirosh, J. Computational Maps in the Visual Cortex. Springer, New York, NY, 2005.

Pouget, A., Deneuve, S., and Duhamel, J.-R. A computational perspective on the neural basis of multisensory spatial representations, Nature Reviews Neuroscience 3, Sept. 2002.

Wang, H.-P., Spencer, D., Fellous, J.-M., and Sejnowski, T.J. Synchrony of thalamocortical inputs maximizes cortical reliability, Science 328, 106, April 2, 2010.
